The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation

by Ryan Smith on July 20, 2016 8:45 AM EST

Rise of the Tomb Raider

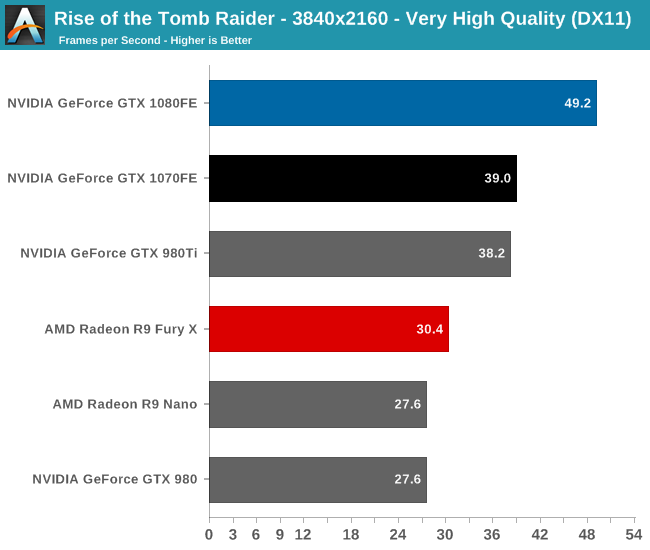

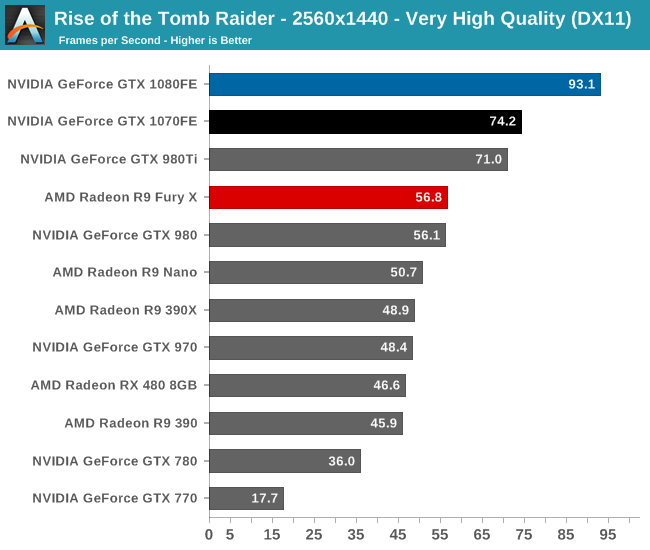

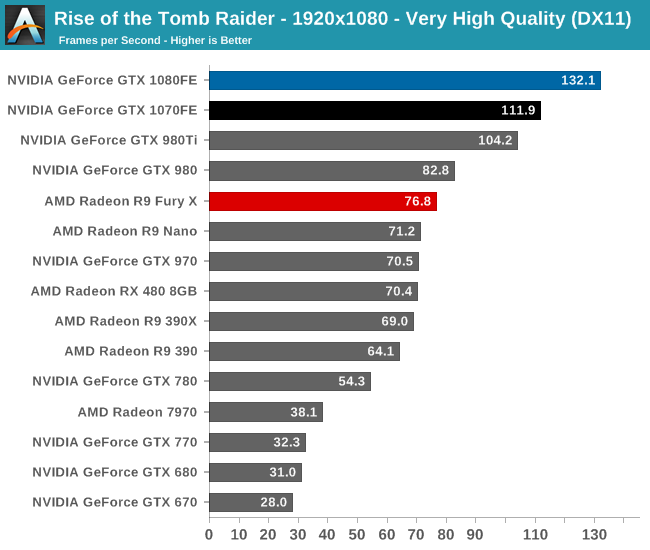

Starting things off in our benchmark suite is the built-in benchmark for Rise of the Tomb Raider, the latest iteration in the long-running action-adventure gaming series. One of the unique aspects of this benchmark is that it’s actually the average of 4 sub-benchmarks that fly through different environments, which keeps the benchmark from being too weighted towards a GPU’s performance characteristics under any one scene.
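As a rough illustration of why averaging across scenes dampens the influence of any single scene, here is a minimal sketch. The function name and the frame rates are hypothetical placeholders rather than measured results, and it assumes a plain arithmetic mean, which may not be exactly how the game's benchmark combines its sub-scores.

```python
from statistics import mean


def overall_score(scene_fps):
    """Combine per-scene frame rates into one figure.

    Assumption: a plain arithmetic mean over the scenes.
    """
    if not scene_fps:
        raise ValueError("need at least one scene result")
    return mean(scene_fps)


# Hypothetical frame rates for four scenes (placeholders, not review data).
scenes = [60.0, 80.0, 70.0, 90.0]
print(overall_score(scenes))
```

With an average like this, a card that does unusually well or badly in one scene shifts the overall figure by only a quarter of that scene's difference.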

To kick things off then, while I picked the benchmark order before collecting the performance results, it’s neat that Rise of the Tomb Raider ends up being a fairly consistent representation of how the various video cards compare to each other. The end result, as you might expect, puts the GTX 1080 and GTX 1070 solidly in the lead. And truthfully there’s no reason for it to be anything but this; NVIDIA does not face any competition from AMD at the high-end at this point, so the two GP104 cards are going to be unrivaled. It’s not a question of who wins, but by how much.

Overall we find the GTX 1080 ahead of its predecessor, the GTX 980, by anywhere between 60% and 78%, with the lead increasing with the resolution. The GTX 1070’s lead isn’t quite as significant though, ranging from 53% to 60%. This is consistent with the fact that the GTX 1070 is specified to trail the GTX 1080 by more than we saw with the 980/970 in 2014, which means that in general the GTX 1070 won’t see quite as much uplift.
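For readers who want to reproduce this kind of comparison from their own numbers, a lead percentage is just the ratio of the two frame rates minus one. The sketch below is illustrative only: the function name is hypothetical and the frame rates are made-up placeholders, not figures from our charts.

```python
def percent_lead(new_fps, old_fps):
    """Return how far new_fps is ahead of old_fps, as a percentage."""
    if old_fps <= 0:
        raise ValueError("old_fps must be positive")
    return (new_fps / old_fps - 1.0) * 100.0


# Hypothetical frame rates (placeholders, not review data).
newer_card = 80.0
older_card = 50.0
print(f"{percent_lead(newer_card, older_card):.0f}% lead")
```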

What we do get however is confirmation that the GTX 1070FE is a GTX 980 Ti and more. The performance of what was NVIDIA’s $650 flagship can now be had in a card that costs $450, and with any luck will get cheaper still as supplies improve. For 1440p gamers this should hit a good spot in terms of performance.

Otherwise when it comes to 4K gaming, NVIDIA has made a lot of progress thanks to GTX 1080, but even their latest and greatest card isn’t quite going to crack 60fps here. We haven’t yet escaped having to make quality tradeoffs for 4K at this time, and it’s likely that future games will drive that point home even more.

Finally, 1080p is admittedly here largely for the sake of including much older cards like the GTX 680, to show what kind of progress NVIDIA has made since their first 28nm high-end card. The result? A 4.25x performance increase over the GTX 680.

200 Comments

Ryan Smith - Wednesday, July 20, 2016 - link

Thanks.

Eden-K121D - Wednesday, July 20, 2016 - link

Finally the GTX 1080 review

guidryp - Wednesday, July 20, 2016 - link

This echoes what I have been saying about this generation. It is really all about clock speed increases. IPC is essentially the same.

This is where AMD lost out. Possibly in part the issue was going with GloFo instead of TSMC like NVidia.

Maybe AMD will move Vega to TSMC...

nathanddrews - Wednesday, July 20, 2016 - link

Curious... how did AMD lose out? Have you seen Vega benchmarks?

TheinsanegamerN - Wednesday, July 20, 2016 - link

It's all about clock speed for Nvidia, but not for AMD. AMD focused more on IPC, according to them.

tarqsharq - Wednesday, July 20, 2016 - link

It feels a lot like the P4 vs Athlon XP days almost.

stereopticon - Wednesday, July 20, 2016 - link

My favorite era of being a nerd!!! Poppin' opterons into s939 and pumpin the OC to athlon FX levels for a fraction of the price all while stompin' on pentium. It was a good (although expensive) time to be a nerd... Besides paying 100 dollars for 1gb of DDR500. 6800gs budget friendly cards, and ATi x1800/1900 super beasts.. how i miss the days

eddman - Thursday, July 21, 2016 - link

Not really. Pascal has pretty much the same IPC as Maxwell and its performance increases accordingly with the clockspeed.

Pentium 4, on the other hand, had a terrible IPC compared to Athlon and even Pentium 3 and even jacking its clockspeed to the sky didn't help it.

guidryp - Wednesday, July 20, 2016 - link

No one really improved IPC of their units.

AMD was instead forced to increase the unit count, and the chip for the 480 is bigger than the 1060 chip and is using a larger bus. Both increase the chip cost.

AMD loses because they are selling a more expensive chip for less money. That squeezes their unit profit on both ends.

retrospooty - Wednesday, July 20, 2016 - link

"This echoes what I have been saying about this generation. It is really all about clock speed increases. IPC is essentially the same."- This is a good thing. Stuck on 28nm for 4 years, moving to 16nm is exactly what Nvidias architecture needed.