Ashes of the Singularity Revisited: A Beta Look at DirectX 12 & Asynchronous Shading

by Daniel Williams & Ryan Smith on February 24, 2016 1:00 PM EST

The Performance Impact of Asynchronous Shading

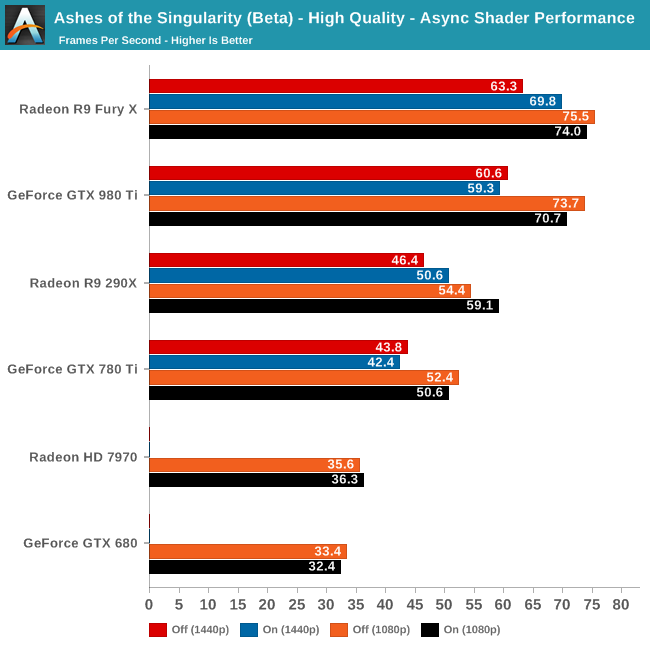

Finally, let’s take a look at Ashes’ latest addition to its stable of headlining DX12 features: asynchronous shading/compute. While earlier betas of the game implemented a very limited form of async shading, this latest beta contains a newer, more complex implementation of the technology, inspired in part by Oxide’s experiences with multi-GPU. As a result, async shading will potentially have a greater impact on performance than in earlier betas.

Update 02/24: NVIDIA sent a note over this afternoon letting us know that asynchronous shading is not enabled in their current drivers, hence the performance we are seeing here. Unfortunately they are not providing an ETA for when this feature will be enabled.

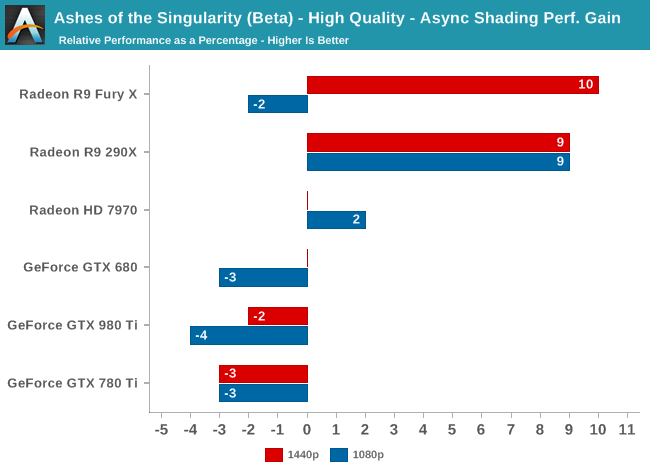

Since async shading is turned on by default in Ashes, what we’re essentially doing here is measuring the penalty for turning it off. Not unlike the DirectX 12 vs. DirectX 11 situation – and possibly even contributing to it – what we find depends heavily on the GPU vendor.

All NVIDIA cards suffer a minor regression in performance with async shading turned on. At a maximum of -4% it’s really not enough to justify disabling async shading, but at the same time it means that async shading is not providing NVIDIA with any benefit. With RTG cards on the other hand it’s almost always beneficial, with the benefit increasing with the overall performance of the card. In the case of the Fury X this means a 10% gain at 1440p, and though not plotted here, a similar gain at 4K.
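For readers who want to reproduce this kind of comparison with their own benchmark runs, the percentages above can be read as the change in average frame rate relative to the async-off result. The following is a minimal sketch under that assumption; the function name and the frame rates are hypothetical illustrations, not measurements from this article.

```python
def async_gain_percent(fps_async_off: float, fps_async_on: float) -> float:
    """Percent change in frame rate from enabling async shading.

    Assumption: the async-off run is the baseline, so a positive result
    is a gain from async shading and a negative result is a regression.
    """
    if fps_async_off <= 0:
        raise ValueError("baseline frame rate must be positive")
    return (fps_async_on - fps_async_off) / fps_async_off * 100.0


if __name__ == "__main__":
    # Hypothetical frame rates (async off, async on), NOT data from the article.
    examples = {
        "card that benefits": (50.0, 55.0),
        "card that regresses": (50.0, 48.0),
    }
    for name, (off, on) in examples.items():
        print(f"{name}: {async_gain_percent(off, on):+.1f}%")
```

Note that the choice of baseline matters: going from 50 to 55 fps is a 10% gain from turning async shading on, but turning it back off is roughly a 9% loss when measured against the async-on result.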

These findings do go hand-in-hand with some of the basic performance goals of async shading, primarily that async shading can improve GPU utilization. At 4096 stream processors the Fury X has the most ALUs out of any card on these charts, and given its performance in other games, the numbers we see here lend credence to the theory that RTG isn’t always able to reach full utilization of those ALUs, particularly on Ashes. In which case async shading could be a big benefit going forward.

As for the NVIDIA cards, that’s a harder read. Is it that NVIDIA already has good ALU utilization? Or is it that their architectures can’t do enough with asynchronous execution to offset the scheduling penalty for using it? Either way, when it comes to Ashes NVIDIA isn’t gaining anything from async shading at this time.

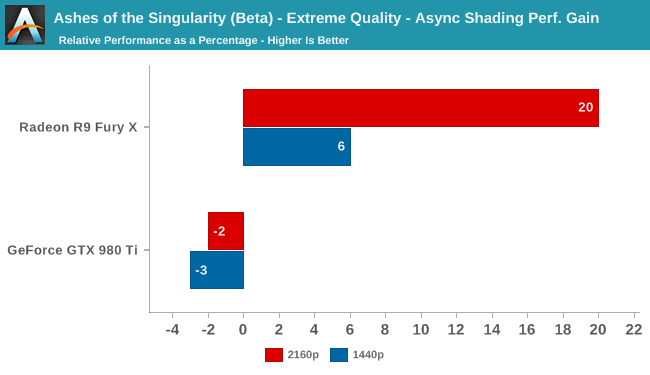

Meanwhile pushing our fastest GPUs to their limit at Extreme quality only widens the gap. At 4K the Fury X picks up nearly 20% from async shading – though a much smaller 6% at 1440p – while the GTX 980 Ti continues to lose a couple of percent from enabling it. This outcome is somewhat surprising since at 4K we’d already expect the Fury X to be rather taxed, but clearly there’s quite a bit of shader headroom left unused.

153 Comments

Soulwager - Sunday, March 20, 2016 - link

Poorly how, exactly? It looks to me like DX12 is just removing a bottleneck for AMD that Nvidia already fixed in DX11. It would be more correct to say that AMD has poor DX11 performance compared to Maxwell, and neither are constrained by driver overhead in DX12.

SunLord - Wednesday, February 24, 2016 - link

DX12 by design will slightly favor older AMD designs, simply because the design decisions AMD made with regard to DX11 are now paying off with DX12, while Nvidia's decisions benefited it in DX11 games, which is why they own around 80% or so of the gaming GPU market. How much of an impact this will have depends on the game, just like how it is with DX11 games: some do better on AMD, some will be better on Nvidia.

anubis44 - Thursday, February 25, 2016 - link

If results like these continue with other DX12 games, nVidia's going to be the one with only 20% in a matter of months.

althaz - Thursday, February 25, 2016 - link

Even in generations where AMD/ATI have been dominant in terms of performance and value, they've still not really dominated in sales.

Just like even when AMD's CPUs were offering twice the performance per watt and cheaper performance per dollar, they still sold less than Intel.

Doing it for a short time isn't enough, you have to do it for *years* to get a lead like nVidia has.

Firstly you have to overturn brand-loyalty from complete morons (aka everybody with any brand loyalty to any company, these are corporations that only care about the contents of your wallet, make rational choices). That will happen only a small percentage of people at a time. So you have to maintain a pretty serious lead for a long time to do it.

AMD did manage to do it in the enthusiast space with CPUs, but (arguably due to Intel being dodgy pricks) they didn't quite turn that into mainstream market dominance. Which sucks for them, because they absolutely deserved it.

So even if AMD maintains this DX12 lead for the rest of the year and all of the next, they'll still sell less GPUs than nVidia will in that time. But if they can do it for another year after that, *then* would they be likely to start winning the GPU war.

Personally, I don't care a lot. I hope AMD do better because they are losing and competition is good. However, I will make my next purchasing decision on performance and price, nothing else.

permastoned - Sunday, February 28, 2016 - link

Wasn't trolling - there are other metrics that show the case; for you to imply that 3dmark isn't valid is just silly: http://wccftech.com/amd-r9-290x-fast-titan-dx12-en...

2 points = trend.

Another thing; what's the deal with all these fanboys? There is no benefit to being a fanboy of either AMD or Nvidia, it is just going to cause you problems because it may cause you to buy based on brand, rather than on performance per dollar, which is the factor that actually matters. At different price ranges different brands are better - e.g. at the top end, a 980Ti is better than a fury X, however if you are looking in the price bracket below, and want to buy a 980, you will get better performance and performance per dollar from a standard fury.

Being a fanboy will blind you from accepting the truth when the tides shift and the tables eventually turn. It helps you in no way at all, it disadvantages you in many. It also causes you to get angry on forums for no reason, and call people 'trolls' when they are stating facts.

Continuity28 - Wednesday, February 24, 2016 - link

By the time DX12 becomes commonplace, I'm sure they will have cards that were built for DX12.

It makes a lot of sense to design your cards around what will be most useful today, not years in the future when people are replacing their cards anyways. Does it really matter if AMD's DX12 performance is better when it isn't relevant, when their DX11 performance is worse when it is relevant?

Senti - Wednesday, February 24, 2016 - link

Indeed it makes much sense to build cards exactly for today so people would be forced to buy new hardware next year to have decent performance. From certain green point of view. But many people are actually hoping that their brand new mid-top card would last with decent performance at least some years.

cmdrdredd - Wednesday, February 24, 2016 - link

Hardware performance for new APIs is always weak with first gen products. That isn't changing here. When there are many DX12 titles out and new cards are out there, you'll see that people don't want to try playing with their old cards and will be buying new. That's how it works.

ToTTenTranz - Wednesday, February 24, 2016 - link

"Hardware performance for new APIs is always weak with first gen products."

Except that doesn't seem to be the case with 2012's Radeon line.