AMD Beema/Mullins Architecture & Performance Preview

by Anand Lal Shimpi on April 29, 2014 12:00 AM EST

When AMD launched its Kabini and Temash APUs last year, it delivered a compelling cost/performance story, but its power story wasn’t all that impressive. Despite being built out of relatively low power components, nearly all of AMD’s entry level APUs carried 15W TDPs, with a couple weighing in at 8-9W and only a single 1GHz dual-core part dropping down to 3.9W. By comparison, Intel was shipping full-blown Haswell Ultrabook parts at 15W, offering substantially better CPU performance in a similar thermal envelope (although at a higher cost). The real disruption for AMD was Intel’s Bay Trail, which showed up with a similar-looking microarchitecture running at substantially higher clock speeds and at TDPs below 8W.

AMD seemed to have all of the right pieces to build a power efficient mobile SoC, but for some reason we weren’t seeing it. Today that begins to change with the successors to Kabini and Temash.

Codenamed Beema and Mullins, these are the 2014 updates to Kabini and Temash, respectively. Beema is aimed at entry level notebooks, while Mullins targets tablets. Both are currently designed for Windows machines. Although I suspect we’ll eventually see AMD address the native Android market head on, for now AMD is relying on running Android on top of Windows for those who really want it. There’s no word yet on if or when we’ll get a socketed Beema for entry level desktops.

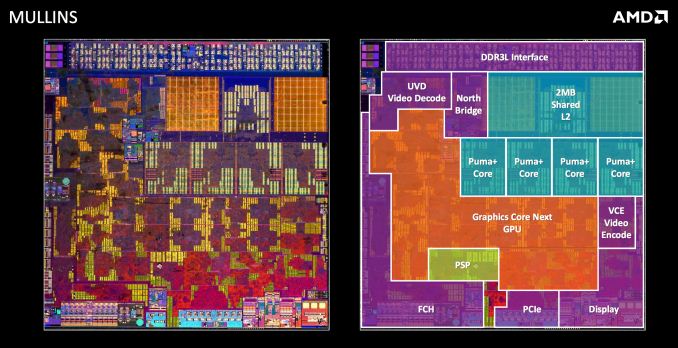

Like their predecessors, Beema and Mullins combine four low power AMD x86 cores (Puma+ this time, instead of Jaguar) with 128 GCN-based Radeon GPU cores. AMD will continue to offer a couple of dual-core SKUs, but they are harvested from a quad-core die. AMD remains unwilling to release official die area figures, but there is a slight increase in transistor count:

AMD/Intel Transistor Count & Die Area Comparison

| SoC | Process Node | Transistor Count | Die Area |
|---|---|---|---|
| AMD Zacate | TSMC 40nm | 450M+ | 75mm² |
| AMD Kabini/Temash | TSMC 28nm | 914M | ~107mm² (est) |
| AMD Beema/Mullins | GF 28nm | 930M | ~107mm² (est) |
| AMD Llano | GF 32nm SOI | 1.18B | 228mm² |
| AMD Trinity/Richland | GF 32nm SOI | 1.30B | 246mm² |
| AMD Kaveri | GF 28nm SHP | 2.41B | 245mm² |
| Intel Haswell (4C/GT2) | Intel 22nm | 1.40B | 177mm² |

I’d expect a die size similar to Kabini/Temash. Interestingly, these SoCs have a lower transistor count than Apple’s A7, which Apple quotes at over 1 billion transistors.
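To put the "slight increase" in numbers, here is a small sketch that derives transistor density and the Kabini-to-Beema change straight from the table above. Keep in mind that the Kabini/Temash and Beema/Mullins die areas are estimates and Zacate’s count is only a lower bound, so the densities are rough figures at best.

```python
# Transistor counts (millions) and die areas (mm^2) copied from the table above.
# Kabini/Temash and Beema/Mullins areas are estimates (~107mm^2), and Zacate's
# count is a lower bound ("450M+"), so treat the derived densities as rough.
SOCS = {
    "AMD Zacate": (450, 75),
    "AMD Kabini/Temash": (914, 107),
    "AMD Beema/Mullins": (930, 107),
    "AMD Llano": (1180, 228),
    "AMD Trinity/Richland": (1300, 246),
    "AMD Kaveri": (2410, 245),
    "Intel Haswell (4C/GT2)": (1400, 177),
}


def density(transistors_m: float, area_mm2: float) -> float:
    """Return transistor density in millions of transistors per mm^2."""
    return transistors_m / area_mm2


def pct_change(old: float, new: float) -> float:
    """Return the percentage change going from old to new."""
    return (new - old) / old * 100.0


if __name__ == "__main__":
    for name, (count, area) in SOCS.items():
        print(f"{name:24s} {density(count, area):5.2f} M/mm^2")
    kabini = SOCS["AMD Kabini/Temash"][0]
    beema = SOCS["AMD Beema/Mullins"][0]
    print(f"Beema vs Kabini transistor count: {pct_change(kabini, beema):+.1f}%")
```

Using the table’s figures, Beema/Mullins carries roughly 1.8% more transistors than Kabini/Temash, which is consistent with a die size that has barely changed.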

Puma+ is based on the same microarchitecture as Jaguar. We’re still looking at a 2-wide out-of-order (OoO) design with the same number of execution units and the same internal data structures. The memory interface also remains 64 bits wide. These new SoCs are still built on a 28nm process, like their predecessors, but that process has seen some improvements. Not only are the CPU and GPU designs slightly better optimized for low power operation, but both also benefit from manufacturing improvements that substantially reduce leakage current.

AMD claims a 19% reduction in core leakage/static current for Puma+ compared to Jaguar at 1.2V, and a 38% reduction for the GPU. The drop in leakage directly contributes to a substantially lower power profile for Beema and Mullins.
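Static power at a fixed supply voltage is simply voltage times leakage current. That means a percentage cut in leakage current at 1.2V carries over one-for-one to static power at that voltage (dynamic power is a separate matter). The sketch below illustrates this. AMD disclosed only the relative reductions, so the baseline currents here are hypothetical placeholders.

```python
# Static (leakage) power at a fixed supply voltage: P = V * I_leak.
# AMD disclosed only the relative reductions (19% CPU core, 38% GPU, at 1.2V);
# the baseline leakage currents used below are HYPOTHETICAL placeholders.
VDD = 1.2  # volts, the voltage AMD quoted the reductions at


def static_power_w(voltage_v: float, leakage_a: float) -> float:
    """Static power in watts from supply voltage and leakage current."""
    return voltage_v * leakage_a


def reduced_leakage(leakage_a: float, reduction: float) -> float:
    """Apply a fractional leakage reduction (0.19 means 19% less current)."""
    return leakage_a * (1.0 - reduction)


if __name__ == "__main__":
    # Hypothetical baseline leakage currents in amps, for illustration only.
    baseline = {"cpu": 0.50, "gpu": 0.30}
    reductions = {"cpu": 0.19, "gpu": 0.38}
    for block, i_leak in baseline.items():
        before = static_power_w(VDD, i_leak)
        after = static_power_w(VDD, reduced_leakage(i_leak, reductions[block]))
        print(f"{block}: {before:.3f} W -> {after:.3f} W "
              f"({(1 - after / before) * 100:.0f}% lower)")
```

Whatever baseline you plug in, the percentage reduction in static power at 1.2V matches the percentage reduction in leakage current.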

AMD also tweaked the SoC’s memory interface. Kabini/Temash had a standard PC-like DDR3 memory interface: all of the complexity required for broad memory module compatibility and for variations in trace routing was handled by the controller itself, which added both complexity and power to the DDR3 interface. With Beema and Mullins, AMD took a page from the smartphone SoC design guide and traded flexibility for power. These platforms now ship with stricter guidelines on what sort of memory can be used on board and how traces must be routed. The result is a memory interface that saves more than 500mW when running in this stricter, low power mode. OEMs looking to ship a design with socketed DRAM can still run the memory interface in a higher power mode to ensure memory compatibility.

These SoCs won’t be available in a package-on-package (PoP) configuration, unfortunately; OEMs will have to rely on discrete DRAM packages rather than a fully integrated solution. Beema/Mullins also show up to a 200mW reduction in power consumed by the display interface compared to Kabini/Temash.
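The memory interface trade-off above comes down to a simple design-time choice. The sketch below models that choice. AMD has not published any such interface, so `MemMode`, `BoardDesign`, and `choose_memory_mode` are hypothetical names invented for illustration. The only figure taken from AMD is the "more than 500mW" savings, treated here as a lower bound.

```python
from dataclasses import dataclass
from enum import Enum

# HYPOTHETICAL model of the trade-off described in the text; these names are
# illustrative and do not correspond to any AMD-published interface.
LOW_POWER_SAVINGS_MW_MIN = 500  # AMD quotes "more than 500mW" saved


class MemMode(Enum):
    LOW_POWER = "low_power"          # strict DRAM/routing rules, soldered memory
    COMPATIBILITY = "compatibility"  # PC-style mode for socketed modules


@dataclass
class BoardDesign:
    socketed_dram: bool
    follows_routing_guidelines: bool


def choose_memory_mode(board: BoardDesign) -> MemMode:
    """Use low power mode only when the board meets the stricter rules."""
    if not board.socketed_dram and board.follows_routing_guidelines:
        return MemMode.LOW_POWER
    # Socketed DRAM or non-compliant routing falls back to the higher power mode.
    return MemMode.COMPATIBILITY


if __name__ == "__main__":
    tablet = BoardDesign(socketed_dram=False, follows_routing_guidelines=True)
    notebook = BoardDesign(socketed_dram=True, follows_routing_guidelines=True)
    print(choose_memory_mode(tablet), choose_memory_mode(notebook))
```

The point of the sketch is that the power savings are conditional. An OEM only gets them by giving up the flexibility of socketed memory and by following the stricter board guidelines.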

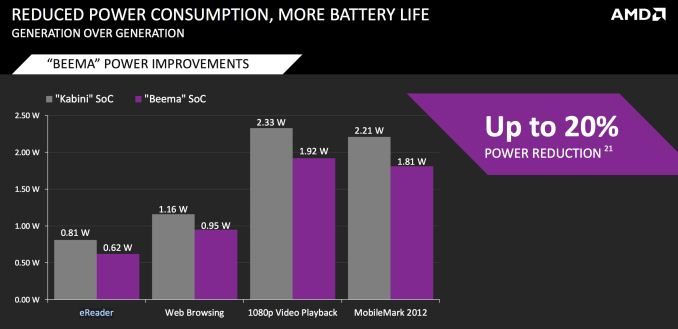

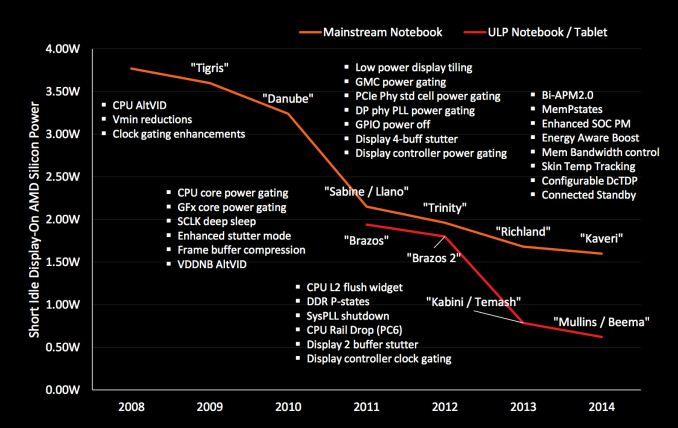

The combination of all of this is 20% lower idle power compared to the previous generation of AMD entry level and low power APUs. AMD put together a nice graph illustrating its progress over the years:

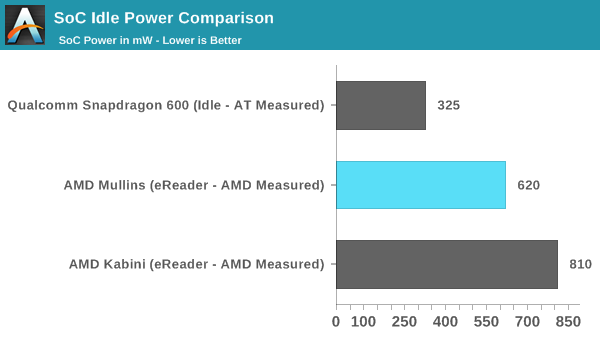

Beema and Mullins are definitely in a good place; however, they still consume more power at idle than the smartphone SoCs we typically find in iOS and Android tablets. AMD isolated APU power for the graph above and used an “eReader” workload (display on but not animating, system otherwise idle). It just so happens I gathered similar data for our 2013 Nexus 7 review. The workloads and measurements are different (AMD isolates APU power, while I’m looking at total platform power minus the display), but it’s enough to put things in perspective:

AMD has dropped power consumption considerably over the years, but it’s still not as power efficient as high end mobile silicon.
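The two measurement methods differ in what they include, and a short sketch makes that concrete. The rail names and milliwatt values below are hypothetical placeholders rather than measurements from this article. The sketch only shows how an APU-only figure and a platform-minus-display figure are derived from the same device.

```python
# HYPOTHETICAL idle readings in milliwatts for a single device; the rail names
# and values are illustrative placeholders, not measurements from this article.
READINGS_MW = {
    "apu": 1000,
    "dram": 200,
    "display": 1500,
    "storage": 100,
    "other": 300,
}


def apu_only_mw(readings: dict[str, int]) -> int:
    """AMD-style metric: power drawn by the APU alone."""
    return readings["apu"]


def platform_minus_display_mw(readings: dict[str, int]) -> int:
    """Review-style metric: total platform power with the display rail excluded."""
    return sum(mw for rail, mw in readings.items() if rail != "display")


if __name__ == "__main__":
    print("APU only:", apu_only_mw(READINGS_MW), "mW")
    print("Platform minus display:", platform_minus_display_mw(READINGS_MW), "mW")
```

Every rail draws non-negative power, so the platform-minus-display figure can never be smaller than the APU-only figure for the same device. This is why the two sets of numbers only put things in perspective and are not a direct comparison.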

AMD sees no value in supporting Microsoft's Connected Standby standard at this point, which makes sense given the limited success of Windows 8 tablets. Once again this seems to point to AMD eventually adopting Android for its tablet aspirations.

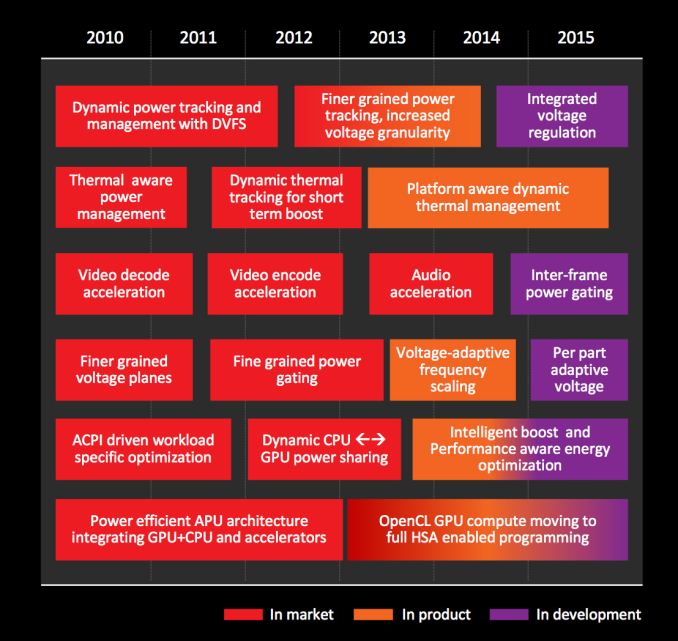

Looking forward, AMD has more tricks up its sleeve to continue to drive power down. Most interesting on the list? We’ll see an integrated voltage regulator (à la Haswell’s FIVR) from AMD in 2015.

82 Comments


Nintendo Maniac 64 - Tuesday, April 29, 2014

Hmmm, sounds like the AMD equivalent of an Intel "tick", especially considering that the IPC between Puma+ and Jaguar is unchanged.

Interestingly enough, this would mean that the PS4 and Xbone could use Puma+ cores in the future (with turbo disabled obviously).

nevertell - Tuesday, April 29, 2014

Why would they need to disable turbo? I believe nobody is hitting the CPU performance limits just to have a fps limit or rely on the raw performance for timing, whereas this could improve some load times or improve performance during context switching.

mwarner1 - Tuesday, April 29, 2014

Consoles have fixed performance hardware to prevent games & applications performing differently on different hardware revisions. If you bought a PS4 today and then next week a new version was released (but likely not announced) that made games smoother / more playable then you would have the right to be annoyed.

Havor - Tuesday, April 29, 2014

No, the main reason for fixed performance hardware is that developers don't have to add code or scalable textures to adjust for performance differences.

And thus they have more efficient single-spec code that does not have to adjust to the hardware spec.

nathanddrews - Tuesday, April 29, 2014

Yeah, I highly doubt they'll switch architectures since it has never happened before. Power savings and console redesigns come from shrinks and on-die packaging.

Samus - Wednesday, April 30, 2014

There's nothing stopping Sony or Microsoft from launching a "performance edition" PS4 or XBOX One with a hardware bump that simply added antialiasing, etc, to games.

This has already been done over the years with Nintendo offering the 4MB RAMBUS upgrade for the N64, and various performance storage options for XBOX 360/PS3 to assist load times of disc-based games. The SSD-edition of the 360 can load games/levels virtually instantly compared to running from disc or disk.

mfoley93 - Wednesday, April 30, 2014

These aren't really higher performance though, just lower power. They could just lower the clock to offset whatever slight performance gains there are to equal the launch products.

nathanddrews - Wednesday, April 30, 2014

1. When Microsoft or Sony want to increase performance, they only do so via software updates that don't destabilize the platform as a whole. Neither can afford to break millions of consoles with a bad update or segregate the community into two camps. On the other hand, if developers of individual games find a way to improve framerates or AA, they can submit updates for download, but only after they are tested by the console manufacturers.

2. I have the 4MB upgrade for my N64, only TWO games required it, a very small percentage of N64 games supported it, and even fewer truly benefited from it. Its mild success was due entirely to ZMM, DK, and PD, but Nintendo hasn't tried anything like it since. (Lest we forget the 64DD...)

3. Only Sony lets you install any drive you want. Most reviews from those that have upgraded to SSDs say it just isn't worth it. It's a consumer option, not something Sony changes at the platform level. The games still run at the same speed with the same textures.

4. There is no consumer "SSD Edition" Xbox 360 and they won't let you install one (officially). Are you referring to the 4GB Slim? That's not an SSD and most 360 games are too big to install onto it.

5. I have yet to see a console SSD upgrade result in anything instantaneously... except regret. :D

Kevin G - Wednesday, April 30, 2014

Consoles are to be 'fixed spec' so that game developers know exactly what to expect in terms of hardware. The lone exception has been storage capacity. The N64 memory expansion is an excellent example of why developers aim for the lower guaranteed spec: only three games required it with a handful of games that'd use it if present.

Both MS and Sony could come out with a hardware revision that does a bit more outside of gaming without impacting game developers. For example, MS could release an Xbox One with a digital tuner + DVR hardware. Such a change would have no impact to the gaming side of things. Ditto if MS or Sony were to add backwards compatibility via hardware: it'd be unavailable to use in an Xbox One or PS4 game.

Arbee - Thursday, May 1, 2014

There was a late revision of the original PlayStation where the GPU got significantly faster for some operations, which resulted in higher frame rates in some games. (This was when the debug units switched from blue to green, in order to differentiate).