Understanding Camera Optics & Smartphone Camera Trends, A Presentation by Brian Klug

by Brian Klug on February 22, 2013 5:04 PM EST - Posted in Smartphones, camera, Android, Mobile

Trends in Smartphone Cameras

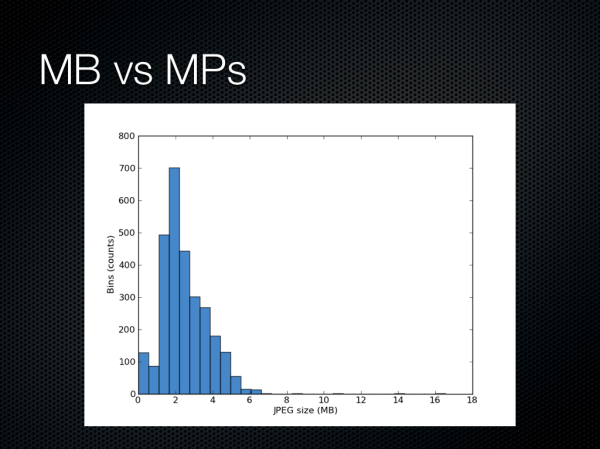

Recently, I became annoyed with the way Dropbox camera upload just dumps images with no organization into the “/Camera Uploads” folder, and set out to organize things. I have auto upload enabled for basically all the smartphones I get sampled or use on a daily basis, and this became a problem with all the sample photos and screenshots I take and want to use. I wrote a Python script to parse EXIF data and sort the images into folders by camera make and model. A side benefit of having this script put together was easy statistical analysis of the over four thousand images I’ve captured on smartphones from both rear and front facing cameras, and I was curious about just how much storage space the megapixel race is costing us. In addition, I was curious about whether there’s much of a trend among certain OEMs and the compression settings they choose for their cameras. The plot shows a number of interesting groupings, and even without doing a regression we can see that, unsurprisingly, larger images consume more storage space, with the 13 MP cameras already consuming close to 5 MB or more per image.
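The script itself isn't reproduced here, but the idea can be sketched roughly as follows. This is a minimal illustration and not the original script: it assumes the third-party Pillow library for EXIF parsing, and the names `read_make_model`, `safe_name`, and `sort_images` are hypothetical. It only looks at files ending in `.jpg` and files anything without EXIF make or model under "Unknown".

```python
import re
import shutil
from pathlib import Path


def read_make_model(path):
    """Return (make, model) from a file's EXIF data, or (None, None).

    Assumes Pillow is installed; it is imported lazily so the rest of
    the sketch works without it.
    """
    from PIL import Image  # third-party dependency (assumption)

    try:
        with Image.open(path) as img:
            exif = img.getexif()
    except OSError:  # unreadable or not an image
        return None, None
    return exif.get(0x010F), exif.get(0x0110)  # EXIF tags: Make, Model


def safe_name(value, fallback="Unknown"):
    """Turn an EXIF string into something usable as a folder name."""
    text = str(value or "").replace("\x00", "").strip(" .")
    text = re.sub(r"[^\w\-. ]+", "_", text)
    return text or fallback


def sort_images(source, dest, reader=read_make_model):
    """Move every *.jpg in source into dest/<make>/<model>/."""
    source, dest = Path(source), Path(dest)
    moved = []
    for path in sorted(source.glob("*.jpg")):
        make, model = reader(path)
        folder = dest / safe_name(make) / safe_name(model)
        folder.mkdir(parents=True, exist_ok=True)
        target = folder / path.name
        shutil.move(str(path), str(target))
        moved.append(target)
    return moved
```

Calling `sort_images("Camera Uploads", "Sorted")` would then leave one folder per make and model. The `reader` parameter is only there so the EXIF step can be swapped out or stubbed.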

If we sort them into bins we can see that most really end up between a few KB and 6 MB in size, with the panoramas and other large stitched composites providing a long tail out to 16 MB or more.
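For anyone wanting to repeat the binning on their own photos, a rough sketch is below. The 1 MB bin width and the decimal definition of a megabyte are assumptions rather than the settings used for the plot, and `bin_sizes` and `print_histogram` are hypothetical names.

```python
from collections import Counter

MB = 1_000_000  # decimal megabyte (assumption; the plot's unit isn't stated)


def bin_sizes(sizes_bytes, bin_width=MB):
    """Count files per size bin; key k covers [k*bin_width, (k+1)*bin_width)."""
    counts = Counter(size // bin_width for size in sizes_bytes)
    return dict(sorted(counts.items()))


def print_histogram(bins, bin_width=MB):
    """Print one text bar per non-empty bin."""
    for index, count in bins.items():
        low = index * bin_width / MB
        high = (index + 1) * bin_width / MB
        print(f"{low:g}-{high:g} MB: {'#' * count}")
```

The sizes can be gathered with something like `[p.stat().st_size for p in Path("Sorted").rglob("*.jpg")]`, where the folder name is again just a placeholder. A 16.4 MB panorama lands in bin 16, which is where the long tail shows up.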

The conclusion for the broader industry trend is that smartphones are displacing, or are poised to begin displacing, the traditional point and shoot camera. The truth is that certain OEMs who already have a point and shoot business can easily port some of their engineering expertise to making smartphone cameras better, while those without any previous business are at a disadvantage, at least initially. In addition, we see that experiences and features are still highly volatile, and there’s real innovation happening on the smartphone side.

60 Comments

Sea Shadow - Friday, February 22, 2013 - link

I am still trying to digest all of the information in this article, and I love it!

It is because of articles like this that I check Anandtech multiple times per day. Thank you for continuing to provide such insightful and detailed articles. In a day and age where other "tech" sites are regurgitating the same press releases, it is nice to see anandtech continues to post detailed and informative pieces.

Thank you!

arsena1 - Friday, February 22, 2013 - link

Yep, exactly this.

Thanks Brian, AT rocks.

ratte - Friday, February 22, 2013 - link

Yeah, got to echo the posts above, great article.

vol7ron - Wednesday, February 27, 2013 - link

Optics are certainly an area the average consumer knows little about, myself included.

For some reason it seems like consumers look at a camera's MP like how they used to view a processor's Hz; as if the higher number equates to a better quality, or more efficient device - that's why we can appreciate articles like these, which clarify and inform.

The more the average consumer understands, the more they can demand better products from manufacturers and make better educated decisions. In addition to being an interesting read!

tvdang7 - Friday, February 22, 2013 - link

Same here they have THE BEST detail in every article.

Wolfpup - Wednesday, March 6, 2013 - link

Yeah, I just love in depth stuff like this! May end up beyond my capabilities but none the less I love it, and love that Brian is so passionate about it. It's so great to hear on the podcast when he's ranting about terrible cameras! And I mean that, I'm not making fun, I think it's awesome.

Guspaz - Friday, February 22, 2013 - link

Is there any feasibility (anything on the horizon) to directly measure the wavelength of light hitting a sensor element, rather than relying on filters? Or perhaps to use a layer on top of the sensor to split the light rather than filter the light? You would think that would give a substantial boost in light sensitivity, since a colour filter based system by necessity blocks most of the light that enters your optical system, much in the way that a 3LCD projector produces a substantially brighter image than a single-chip DLP projector given the same lightbulb, because one splits the white light and the other filters the white light.

HibyPrime1 - Friday, February 22, 2013 - link

I'm not an expert on the subject so take what I'm saying here with a grain of salt.

As I understand it you would have to make sure that no more than one photon is hitting the pixel at any given time, and then you can measure the energy (basically energy = wavelength) of that photon. I would imagine if multiple photons are hitting the sensor at the same time, you wouldn't be able to distinguish how much energy came from each photon.

Since we're dealing with single photons, weird quantum stuff might come into play. Even if you could manage to get a single photon to hit each pixel, there may be an effect where the photons will hit multiple pixels at the same time, so measuring the energy at one pixel will give you a number that includes the energy from some of the other photons. (I'm inferring this idea from the double-slit experiment.)

I think the only way this would be possible is if only one photon hits the entire sensor at any given time, then you would be able to work out its colour. Of course, that wouldn't be very useful as a camera.

DominicG - Saturday, February 23, 2013 - link

Hi Hlby

Photodetection does not quite work like that. A photon hitting a photodiode junction either has enough energy to excite an electron across the junction or it does not. So one way you could make a multi-colour pixel would be to have several photodiode junctions one on top of the other, each with a different "energy gap", so that each one responds to a different wavelength. This idea is now being used in the highest efficiency solar cells to allow all the different wavelengths in sunlight to be absorbed efficiently. However for a colour-sensitive photodiode, there are some big complexities to be overcome - I have no idea if anyone has succeeded or even tried.

HibyPrime1 - Saturday, February 23, 2013 - link

Interesting. I've read about band-gaps/energy gaps before, but never understood what they mean in any real-world sense. Thanks for that :)