In-Depth with the Windows 8 Consumer Preview

by Andrew Cunningham, Ryan Smith, Kristian Vättö & Jarred Walton on March 9, 2012 10:30 AM EST

Posted in: Microsoft, Operating Systems, Windows, Windows 8

Starting with Windows Vista, Microsoft took the first steps of what was to be a long campaign to change how Windows would interact with GPUs. XP, itself based on Windows 2000, used a driver model that predated the term “GPU” itself. While graphics rendering was near and dear to the Windows kernel for performance reasons, Windows still treated the video card as more of a peripheral than a processing device. As time went on, that peripheral model became increasingly bogged down as GPUs grew more advanced in features and more important altogether.

With Vista the GPU became a first-class device, second only to the CPU itself. Windows made significant use of the GPU from the moment you turned it on due to the GPU acceleration of Aero, and under the hood things were even more complex. At the API level Microsoft added Direct3D 10, a major shift in the graphics API that greatly simplified the process of handing work off to the GPU and at the same time exposed the programmability of GPUs like never before. Finally, at the lowest levels of the operating system Microsoft completely overhauled how Windows interacts with GPUs by implementing the Windows Display Driver Model (WDDM) 1.0, which is still the basis of how Windows interacts with modern GPUs.

One of the big goals of WDDM was that it would be extensible, so that Microsoft and GPU vendors could add features over time in a reasonable way. WDDM 1.0 brought sweeping changes that, among other things, took most GPU management away from games and put the OS in charge of it, greatly improving both support for and the performance of running multiple 3D applications at once. In 2009, Windows 7 brought WDDM 1.1, which focused on reducing system memory usage by removing redundant data and added support for heterogeneous GPU configurations, a change that paved the way for modern iGPU + dGPU technologies such as NVIDIA’s Optimus. Finally, with Windows 8, Microsoft will be introducing the next iteration of WDDM, WDDM 1.2.

So what does WDDM 1.2 bring to the table? Besides underlying support for Direct3D 11.1 (more on that in a bit), it has several features that for the sake of brevity we’ll reduce to three major features. The first is power management, through a driver feature Microsoft calls DirectFlip. DirectFlip is a change in the Aero composition model that reduces the amount of memory bandwidth used when playing videos back in full screen, thereby reducing memory power consumption, which has become a larger piece of total system power consumption in the age of GPU video decoders. At the same time WDDM 1.2 will also introduce a new overarching GPU power management model that will see video drivers work with the operating system to better utilize F-states and P-states to keep the GPU asleep more often.
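To give a rough sense of scale for the bandwidth involved, here is a back-of-the-envelope sketch. It assumes a simplified model that is ours, not Microsoft's: that composing a full-screen video frame costs one extra read of the video surface plus one extra write into the desktop surface, and that skipping this copy is where the savings come from. The function name, resolution, pixel size, and refresh rate are all illustrative assumptions.

```python
def composition_copy_bandwidth(width, height, bytes_per_pixel, frames_per_second):
    """Bytes per second spent on one extra copy of every frame.

    Assumed model: the copy reads the source surface once and writes the
    destination surface once, so each frame costs 2x its own size.
    """
    frame_bytes = width * height * bytes_per_pixel
    return 2 * frame_bytes * frames_per_second


# Illustrative case: a 1080p, 32-bit surface composed at 60 frames per second.
saved = composition_copy_bandwidth(1920, 1080, 4, 60)
print(f"{saved / 1e6:.0f} MB/s of memory traffic for the extra copy")
```

Under these assumptions the extra copy alone accounts for memory traffic on the order of a gigabyte per second, which is why trimming it matters on a system that is otherwise nearly idle during video playback.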

The second major feature of WDDM 1.2 is GPU preemption. As of WDDM 1.1, applications effectively use a cooperative multitasking model to share the GPU; this model makes sharing the GPU entirely reliant on well-behaved applications and can break down in the face of complex GPU computing uses. With WDDM 1.2, Windows will be introducing a new preemptive multitasking model, in which Windows preemptively switches out GPU tasks in order to ensure that every application gets its fair share of execution time and that the amount of time any application spends waiting for GPU access (access latency) is kept low. The latter is particularly important for a touch environment, where a high access latency can render a device unresponsive. Overall this is a shift that is very similar to how Windows itself evolved from Windows 3.1 to Windows 95, as Microsoft moved from cooperative multitasking to preemptive multitasking for scheduling applications on the CPU.
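The difference in access latency between the two models can be illustrated with a toy simulation. This is a minimal sketch of a generic round-robin scheduler, not how the WDDM scheduler is actually implemented; the task names, durations, and the 4 ms quantum are hypothetical values chosen for illustration.

```python
from collections import deque


def access_latencies(tasks, quantum=None):
    """Simulate a single GPU engine shared by several tasks.

    tasks:   list of (name, work_ms), all submitted at time 0, in order.
    quantum: None models cooperative sharing (a task runs until it is done);
             a number models preemption after that many milliseconds.
    Returns {name: ms spent waiting before first getting the GPU}.
    """
    queue = deque(tasks)
    clock = 0
    first_run = {}
    while queue:
        name, remaining = queue.popleft()
        first_run.setdefault(name, clock)  # record only the first time it runs
        slice_ms = remaining if quantum is None else min(quantum, remaining)
        clock += slice_ms
        if remaining > slice_ms:
            # Preempted: go to the back of the queue with the leftover work.
            queue.append((name, remaining - slice_ms))
    return first_run


# Hypothetical workload: a long compute job submitted ahead of two short tasks.
workload = [("compute_job", 500), ("touch_ui", 2), ("video", 4)]
cooperative = access_latencies(workload)
preemptive = access_latencies(workload, quantum=4)
print("cooperative:", cooperative)
print("preemptive: ", preemptive)
```

In the cooperative run the short UI task cannot touch the GPU until the long job yields; with a quantum in place it waits only one time slice, which is the access latency improvement described above.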

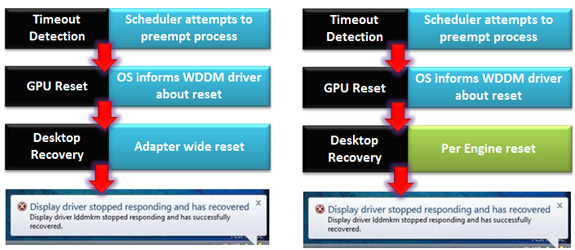

The final major feature of WDDM 1.2 is improved fault tolerance, which goes hand in hand with GPU preemption. With WDDM 1.0 Microsoft introduced the GPU Timeout Detection and Recovery (TDR) mechanism, which caught the GPU if it hung and reset it, thereby providing a basic framework to keep GPU hangs from bringing down the entire system. TDR itself isn’t perfect, however: the reset mechanism requires resetting the whole GPU, and given the use of cooperative multitasking, TDR cannot tell the difference between a hung application and one that is not yet ready to yield. To solve the former, Microsoft will be breaking down GPUs on a logical level (MS calls these GPU engines), with WDDM 1.2 being able to do a per-engine reset to fix the affected engine rather than needing to reset the entire GPU. As for unyielding programs, this is largely solved as a consequence of preemption: unyielding programs can choose to opt out of TDR so long as they make themselves capable of being quickly preempted, which will allow those programs full access to the GPU while not preventing the OS and other applications from using the GPU for their own needs. All of these features will be available for GPUs implementing WDDM 1.2.
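The benefit of a per-engine reset over a whole-GPU reset can be sketched with a toy watchdog. This is only an illustrative model, not Microsoft's TDR implementation: the `Engine` and `ToyGpu` classes, the engine names, and the 2000 ms timeout are hypothetical stand-ins.

```python
from dataclasses import dataclass, field


@dataclass
class Engine:
    name: str
    busy_ms: int = 0  # how long the current task has held this engine
    resets: int = 0


@dataclass
class ToyGpu:
    engines: dict = field(default_factory=dict)

    def watchdog(self, timeout_ms, per_engine):
        """Reset hung engines; return the names of every engine that was reset."""
        hung = [e for e in self.engines.values() if e.busy_ms > timeout_ms]
        if not hung:
            return []
        # Whole-GPU reset takes every engine down, healthy or not.
        targets = hung if per_engine else list(self.engines.values())
        for engine in targets:
            engine.busy_ms = 0
            engine.resets += 1
        return [engine.name for engine in targets]


def make_gpu():
    """Build a hypothetical three-engine GPU with one hung engine."""
    gpu = ToyGpu()
    for name in ("3d", "video_decode", "copy"):
        gpu.engines[name] = Engine(name)
    gpu.engines["3d"].busy_ms = 5000  # a shader that never finishes
    gpu.engines["video_decode"].busy_ms = 8  # healthy video playback
    return gpu


whole_gpu_reset = make_gpu().watchdog(timeout_ms=2000, per_engine=False)
per_engine_reset = make_gpu().watchdog(timeout_ms=2000, per_engine=True)
print("whole-GPU reset hits: ", whole_gpu_reset)
print("per-engine reset hits:", per_engine_reset)
```

In this model the whole-GPU reset interrupts video decode and copy work that was running normally, while the per-engine reset touches only the hung 3D engine.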

And what will be implementing WDDM 1.2? While it’s still unclear at this time where SoC GPUs will stand, so far all Direct3D 11 compliant GPUs will be implementing WDDM 1.2 support; this means the GeForce 400 series and better, the Radeon HD 5000 series and better, and the forthcoming Intel HD Graphics 4000 that will debut with Ivy Bridge later this year. This is consistent with how WDDM has been developed, which has been to target features that were added in previous generations of GPUs in order to let a large hardware base build up before the software begins using it. WDDM 1.0 and 1.1 drivers and GPUs will still continue to work in Windows 8; they just won't support the new features in WDDM 1.2.

Direct3D 11.1

Now that we’ve had a chance to take a look at the underpinnings of Windows 8’s graphical stack, how will things be changing at the API layer? As many of our readers are well aware, Windows 8 will be introducing the next version of Direct3D, Direct3D 11.1. As the name implies, D3D 11.1 is a relatively minor update to Direct3D similar in scope to Direct3D 10.1 in 2008, and will focus on adding a few features to Direct3D rather than bringing in any kind of sweeping change.

So what can we look forward to in Direct3D 11.1? The biggest end user feature is going to be the formalization of Stereo 3D support into the D3D API. Currently S3D is achieved either by partially going around D3D to present a quad buffer to games and applications that directly support S3D, or, in the case of driver/middleware enhancement, by manipulating the rendering process itself to get the desired results. Formalizing S3D won’t remove the need for middleware to enable S3D on games that choose not to implement it, but for games that do choose to directly implement it, such as Deus Ex, it will now be possible to do this through Direct3D and to do so more easily.

AMD’s Radeon HD 7970: The First Direct3D 11.1 Compliant Video Card

The rest of the D3D11.1 feature set otherwise isn’t going to be nearly as visible, but it will still be important for various uses. Interoperability between graphics, video, and compute is going to be greatly improved, allowing video via Media Foundation to be sent through pixel and compute shaders, among other things. Meanwhile Target Independent Rasterization will provide high performance, high quality GPU based anti-aliasing for Direct2D, allowing rasterization to move from the CPU to the GPU. Elsewhere developers will be getting some new tools: some new buffer commands should give developers a few more tricks to work with, shader tracing will enable developers to better trace shader performance through Direct3D itself, and double precision (FP64) support will be coming to pixel shaders on hardware that has FP64 support, allowing developers to use higher precision shaders.

Many of these features should be available on existing Direct3D 11 compliant GPUs in some manner, particularly S3D support. The only thing we’re aware of that absolutely requires new hardware support is Target Independent Rasterization; for that you will need the latest generation of GPUs such as the Radeon HD 7000 series, or as widely expected, the Kepler generation of GeForces.

286 Comments

yannigr - Friday, March 9, 2012 - link

This is more of a funny post but.... do you hate AMD systems? Are AMD processors extinct? I mean 8 systems ALL with Intel cpus? Come on. Test an AMD system JUST FOR FUN..... We will not tell Intel. It will be a secret. :p

Gothmoth - Friday, March 9, 2012 - link

AMD?who is still using AMD?

except some poor in third world countrys?

no.. im just joking... AMD is great and makes intel cheaper.. if only they would be a real competition.

but what about ARM?

that would be more interesting.. but i guess we have to wait for that.

JarredWalton - Friday, March 9, 2012 - link

In defense of Andrew's choice of CPU, you'll note that there's only one desktop system and the rest are laptops. Sorry to break it to you, but Intel has been the superior laptop choice ever since Pentium M came to market. Llano and Brazos are the first really viable AMD-based laptops, and both of those are less than a year old. AFAIK, Andrew actually purchased (or received from some other job) the laptops he used for testing, and they're all at least a year old. Obviously, the MacBook stuff doesn't use AMD CPUs, so that's three of the systems.

As for the two laptops I tested, they're also Intel-based, but I only have one laptop with an AMD processor right now, and it's a bit of a weirdo (it's the Llano sample I received from AMD). I wouldn't want to test that with a beta OS, simply because it's likely to have driver issues and potentially other wonkiness. Rest assured we'll be looking at AMD systems and laptops when Win8 is final, but in the meantime the only thing likely to be different is performance, and that's a well-trod path.

DiscoWade - Friday, March 9, 2012 - link

Last year, I needed to buy a new laptop. I wanted a Blu-Ray drive and a video card. I thought I would have to settle for a $1000 computer with an Intel processor. I had narrowed my choices down to a few all with the Intel i-series CPU. When I went to test some out at Best Buy, because I wanted to play with the computer to see if I liked it, I saw a discontinued HP laptop on sale for $550. It was marked down from $700. It had the AMD A8 Fusion CPU and a video card and a Blu-Ray drive. So I got a quad-core CPU with 4 hour actual battery life that runs like a dream very cheap. I was a little apprehensive at first with buying the AMD CPU, but a few days of use allayed my fears.

If you say Intel makes better laptop CPU's, you haven't used the AMD A series CPU. It has great battery life and it runs great. How often will I use my laptop for encoding video and music? The dual-AMD graphics is really nice. Whenever I run a new program, it prompts which graphic card to use, the discrete for power savings or the video card for maximum performance. I like that.

Yes if I wanted more power, the Intel is the way to go. But my laptop isn't meant for that. And most people don't need the extra performance from an Intel CPU. Every AMD A8 and A6 I've used runs just as good for my customers and friends who don't need the extra performance of an Intel.

However, I haven't yet been successful installing my TechNet copy of W8CP on this laptop. I'm going to try again this weekend while watching lots of college basketball. (I love March Madness!) If anybody can help, I would appreciate if you let me know at this link:

http://answers.microsoft.com/en-us/windows/forum/w...

MrSpadge - Friday, March 9, 2012 - link

You do realize that Jared explicitely excluded Llano and Brazos from his comment? A8, A6, A4 - they're all Llano.

Samus - Monday, March 12, 2012 - link

I'm actually shocked he didn't use an AMD E-series laptop (HP DM1z, Lenovo x120/x130, etc) as they have sold hundreds of thousands in the last 12 months. I see a DM1z every time I'm in an airport, and x120's are very commonplace in education.

Remembering the Sandybridge chipset recall last year, this really gave AMD a head start selling low power, long battery life laptops, and they have sold very well, and belong in this review when you consider the only laptops you can buy new for <$400 are AMD laptops, and that is a huge market.

silverblue - Monday, March 12, 2012 - link

This isn't a review. Also, he didn't have one.

Quite open to somebody benching a DM1z on W8CP, though. ;)

phoenix_rizzen - Friday, March 9, 2012 - link

While Intel may have the better performance CPU in laptops, they have the *worst* (integrated) graphics possible in laptops, and have 0 presence in the sub-$500 CDN market.

You'd be surprised how many people actually use AMD-based laptops, especially up here in Canada, mainly for three reasons:

- CPU is "good enough"

- good quality graphics are more important than uber-fast CPU

- you can't beat the price (17" and 19" laptops with HD4000+ graphics for under $500 CDN, when the least expensive Intel-based laptop has crap graphics and starts at over $700 CDN)

frozentundra123456 - Friday, March 9, 2012 - link

A bit confused by your post. What is HD 4000 graphics? Granted Llano is superior to SB, but Llano is 66xx series isnt it? I though AMD 4000 series was a motherboard integrated graphics solution that is very weak. Intel SB graphics will be far superior to any integrated solution except Llano.

I agree for my use, I would buy Llano in a laptop ( and only in a laptop) because I want to do some light gaming, but I dont understand your post. I would also not really call SB graphics "crap" unless you want to play games.

inighthawki - Friday, March 9, 2012 - link

HD 4000 is referring to the intel integrated graphics on the new ivy bridge chips - nothing to do with AMD chips