ASUS N53JF: Midrange 15.6” 1080p, Take Four

by Jarred Walton on December 28, 2010 1:40 AM EST

ASUS N53JF Battery Life: Not Bad for 48Wh, but Please Give Us 63Wh!

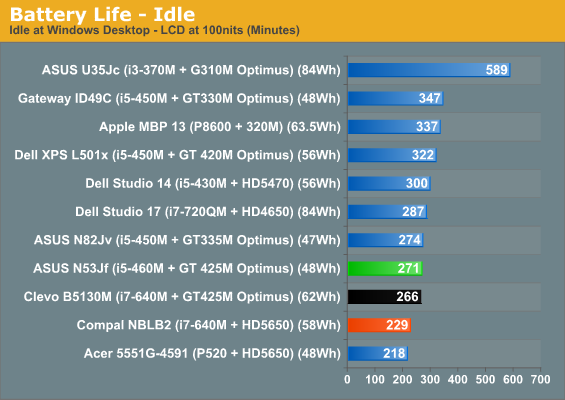

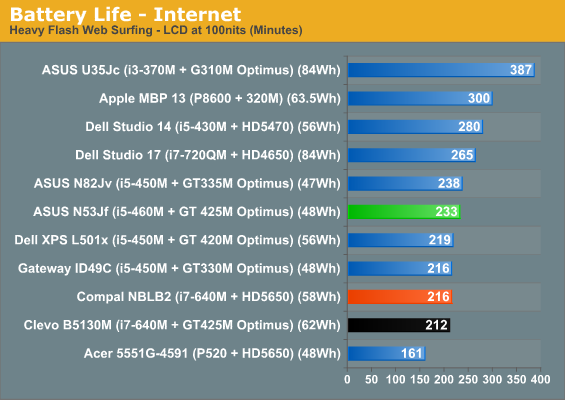

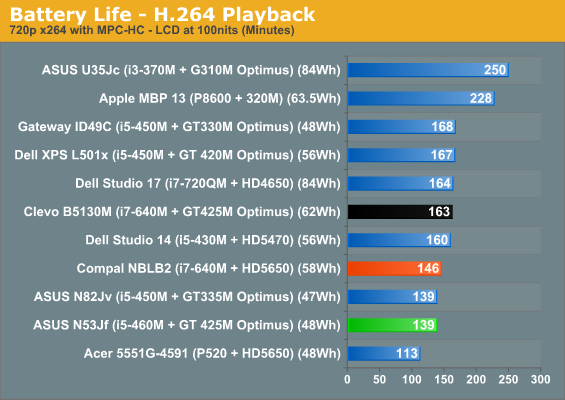

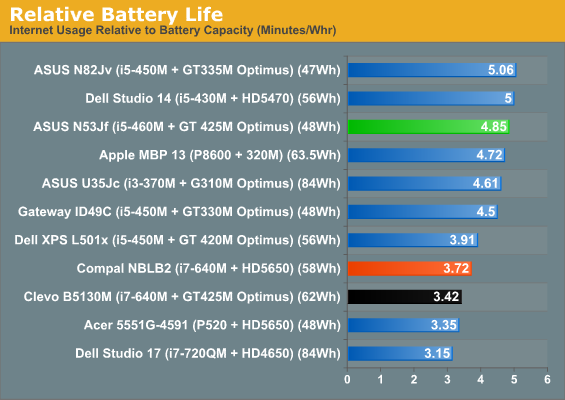

We’ve complained about the use of older and smaller 48Wh batteries in midrange notebooks quite a few times, but the N53JF continues that trend. That puts it at a definite disadvantage relative to Dell XPS’ 56Wh battery, as well as Compal’s 58Wh and Clevo’s 62Wh offerings. But higher capacity isn’t the only game in town; making better use of that capacity is still possible with BIOS and hardware optimizations, and ASUS does very well in this regard. Combined with NVIDIA’s Optimus Technology, we end up with roughly four hours of useful battery life, or just under 2.5 hours of H.264 playback.

ASUS also has their “Super Hybrid Engine” (SHE) available, which underclocks the CPU and locks the maximum multiplier when enabled. We normally test with modified Power Saver settings, which already lock the CPU multiplier to the minimum, but SHE is still able to wring an extra few minutes out of the battery. We left it enabled for the battery life testing, so the results are the best-case scenario. We also disable any unnecessary utilities and software, and set the LCD to 100 nits (45% in this case).

Despite having a smaller battery, the ASUS beats the Clevo notebook in two of the three tests. The Compal result, on the other hand, isn’t so surprising given its always-on GPU. We commented in the past that the Dell XPS had a substandard Internet result (despite multiple test runs), and that shows up again as its one inexplicable loss to the ASUS. In general, though, battery life is decent and enough for typical users. You’ll still want the power brick handy if you play any games or want to watch a Blu-ray from an actual disc, however; we measured just 90 minutes playing a 35Mbps AVC Blu-ray video (Jumper).
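As a rough back-of-the-envelope illustration (not a measurement), the runtimes quoted above can be converted into average power draw by dividing the rated capacity by the hours of runtime. The sketch below assumes the full rated 48Wh is usable, rounds “roughly four hours” and “just under 2.5 hours” to 4.0 and 2.5 hours, and assumes power draw would stay the same with a larger battery; the function names are hypothetical and exist only for this example.

```python
# Rated battery capacity from the article, in watt-hours.
BATTERY_WH = 48.0

# Approximate runtimes quoted in the text, in hours (rounded; an assumption).
RUNTIMES_H = {
    "useful battery life": 4.0,
    "H.264 playback": 2.5,
    "Blu-ray disc playback": 1.5,
}


def average_draw_watts(capacity_wh: float, runtime_h: float) -> float:
    """Average power draw, assuming the full rated capacity is consumed."""
    if runtime_h <= 0:
        raise ValueError("runtime must be positive")
    return capacity_wh / runtime_h


def projected_runtime_h(new_capacity_wh: float, draw_watts: float) -> float:
    """Runtime with a different battery, assuming the same average draw."""
    if draw_watts <= 0:
        raise ValueError("power draw must be positive")
    return new_capacity_wh / draw_watts


if __name__ == "__main__":
    for workload, hours in RUNTIMES_H.items():
        draw = average_draw_watts(BATTERY_WH, hours)
        # Hypothetical 63Wh pack at the same draw (simple linear scaling).
        longer = projected_runtime_h(63.0, draw)
        print(f"{workload}: ~{draw:.1f} W average, ~{longer:.2f} h on 63Wh")
```

Under those assumptions the three workloads come out to about 12W, 19W, and 32W respectively, and a 63Wh battery would stretch the four-hour result to a little over five hours. Real batteries rarely deliver exactly their rated capacity, so treat these as ballpark figures only.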

65 Comments

anactoraaron - Tuesday, December 28, 2010 - link

That they would pack in USB 3.0, bluray and then put in that below average 1080p display. Not that it matters with Sandy Bridge on the horizon. Best advice is still to wait.

ET - Tuesday, December 28, 2010 - link

It's nice to see 1080p becoming more prevalent at this size laptop, but why can't we see some higher res displays at 20"+? I had a 19" 1600x1200 CRT eight years ago, and resolution hasn't gone up since then, and even dropped from 1920x1200 to 1080p in recent times. Laptops these days have some high DPI displays and I'd love to see some on the desktop.

Ushio01 - Tuesday, December 28, 2010 - link

1920x1080 monitors are replacing 1680x1050 TN panels in the mid range monitor segment just as 1680x1050 replaced 1280x1024 monitors with the advantage of either 120hz TN or IPS screens. 1920x1200 monitors still exist and are just as expensive as always along with the 2560x1440 and 2560x1600 in the high and very high end segments.

jabber - Tuesday, December 28, 2010 - link

1080p will be a curse for us all in a couple of years time. Never will a standard have been surpassed and found wanting so quickly.

They should have made it 1440p at least.

Now us computer users have to suffer from the display world being lazy and sticking to a screen depth not much more than what we were used to 10 years ago.

Thats progress.

DanNeely - Tuesday, December 28, 2010 - link

I think the main bottleneck for the resolution picked for the HD standard was the capacity of DTV broadcast/Blu-ray/HD DVD discs without any compression artifacts. Bumping the frame sizes up 77% would have needed a significantly higher compression level and would've led the videophiles who're currently reviling netflix/hulu/etc's streaming offerings for low quality to slam the new standards; potentially rendering them stillborn, and almost certainly slowing adoption down significantly.

The other hangup would be the size of the TV screen needed to get full use of the resolution in the living room. 1080p is generally not worthwhile on less than a 40" screen because the angular size of the pixels at 720p is too small to resolve at couch distance. The smaller pixels of a 1080p screen won't be visible as individual pixels until about 56". At the time the standards were being written 56" was an enormously large TV. It's still larger than most TVs sold today.

Until that changes (and bluerays, or the bandwidth needed to stream them at full quality, become commodity items) I don't expect anything to change on the consumer video market. When that happens I expect the new standard will be one of the 4k resolutions; probably either 3996×2160 (1.85:1) or 4096×1714 (2.39:1). We'd also need a higher density video cable standard. DP 1.2 will carry the 2d version of either signal, but would need doubled again to support 3d. Hopefully lightpeak will be mainstream by then and able to carry the data.

TegiriNenashi - Tuesday, December 28, 2010 - link

2.39:1 ? That is insane.

DanNeely - Tuesday, December 28, 2010 - link

It's the wide-wide screen mode at theaters today. It would render all but the largest desktop computer displays too short to be useful for anything except consuming content. The video industry would see this as a feature.

TegiriNenashi - Tuesday, December 28, 2010 - link

I don't think letterbox has any future in the movie industry itself. Avatar 3D was rendered at 1.78 : 1. Let the 2.39:1 die, the sooner the better!

Hrel - Tuesday, December 28, 2010 - link

here here

JarredWalton - Tuesday, December 28, 2010 - link

Hear, hear?