ARM Launches DynamIQ: big.LITTLE to Eight Cores Per Cluster

by Ian Cutress on March 21, 2017 1:00 AM EST

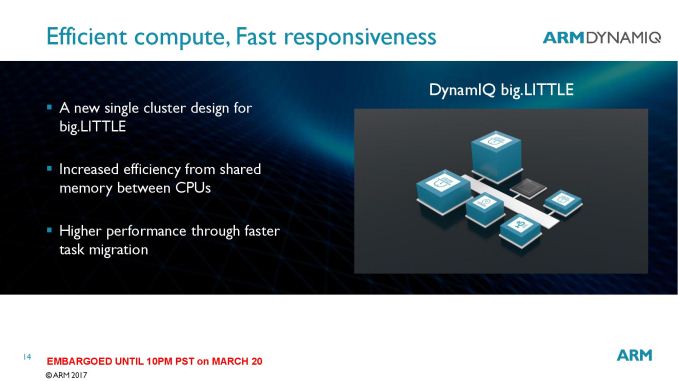

Most readers who follow SoCs will know how ARM’s core designs have evolved over the years. Initially we had single CPUs, then paired CPUs and then quad-core processors, using early ARM cores to help drive performance. In October 2011, ARM introduced big.LITTLE: the ability to use two different ARM cores in the same design, typically by pairing a two- or four-core high-performance cluster with a two- or four-core high-efficiency cluster. From this we have offshoots, like MediaTek’s tri-cluster design, or just wide core mesh designs such as Cavium’s ThunderX. As the tide of progress washes against the shore, ARM is today announcing the next step on the sandy beach with DynamIQ.

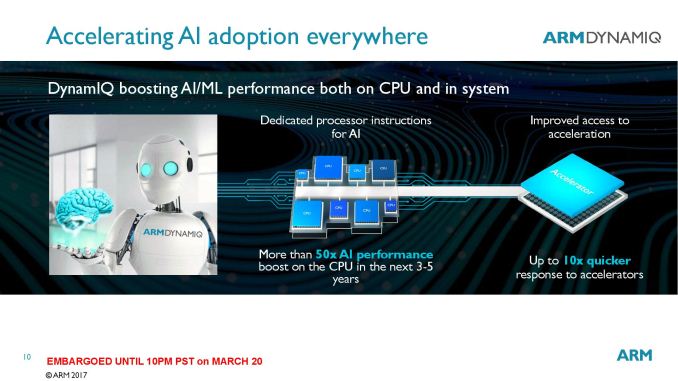

The underlying theme with DynamIQ is heterogeneous scalability. Those two words hide a lot of ecosystem jargon, but with ARM predicting that another 100 billion ARM chips will be sold in the next five years, the company pins key areas such as automotive, artificial intelligence and machine learning at the interesting end of that growth. As a result, performance, efficiency, scalability, and latency are all going to be key metrics moving forward, and DynamIQ aims to address each of them.

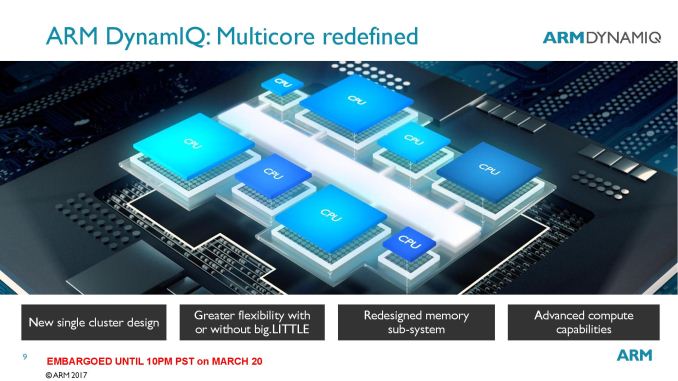

The first stage of DynamIQ is a larger cluster paradigm - which means up to eight cores per cluster. But in a twist, there can be a variable core design within a cluster. Those eight cores could be different cores entirely, from different ARM Cortex-A families in different configurations.
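To make the constraint concrete, here is a minimal sketch of the cluster rule as described so far: up to eight cores, which may be of different types. The class names and the "big"/"little" labels are our own placeholders, not ARM terminology or any real DynamIQ interface.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List

MAX_CORES_PER_CLUSTER = 8  # per-cluster limit quoted in the article


@dataclass(frozen=True)
class Core:
    kind: str  # hypothetical label such as "big" or "little", not a real ARM core name


@dataclass
class Cluster:
    cores: List[Core]

    def __post_init__(self) -> None:
        # Reject empty clusters and anything above the eight-core limit.
        if not 1 <= len(self.cores) <= MAX_CORES_PER_CLUSTER:
            raise ValueError(
                f"a cluster holds 1 to {MAX_CORES_PER_CLUSTER} cores, got {len(self.cores)}"
            )

    def core_mix(self) -> Counter:
        """Count how many cores of each kind the cluster contains."""
        return Counter(core.kind for core in self.cores)

    def is_heterogeneous(self) -> bool:
        """True when the cluster mixes more than one kind of core."""
        return len(self.core_mix()) > 1


# Example: one cluster mixing two hypothetical core kinds.
mixed = Cluster([Core("big")] * 2 + [Core("little")] * 6)
print(mixed.core_mix(), mixed.is_heterogeneous())
```

Under big.LITTLE, the same eight cores would have been split into two clusters of a single core type each; the sketch only captures the new "mixed within one cluster" possibility, not how the hardware implements it.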

Many questions come up here, such as how the cache hierarchy will allow threads to migrate between cores within a cluster (perhaps similar to how threads migrate between clusters on big.LITTLE today), even when cores have different cache arrangements. ARM has not yet gone into that level of detail, but we were told that more information will be provided in the coming months.

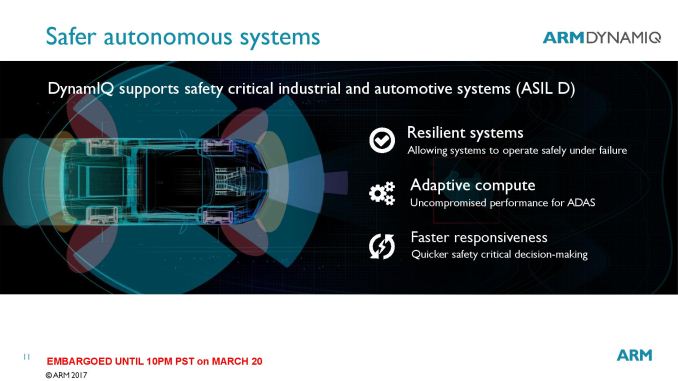

Each variable core-configuration cluster will be a part of a new fabric, which uses additional power saving modes and aims to provide much lower latency. The underlying design also allows each core to be controlled independently for voltage and frequency, as well as sleep states. Based on the slide diagrams, various other IP blocks, such as accelerators, should be able to be plugged into this fabric and benefit from that low latency. ARM cited use cases such as safety-critical automotive decision making as ones that can benefit from this.

One focus area of ARM’s presentation was redundancy. The new fabric will allow a seemingly unlimited number of clusters to be used, such that if one cluster (or an accelerator) fails, the others might take its place. That being said, the sort of redundancy that some customers of ARM chips might require is fail-over in the event of physical damage: for example, retaining control of a car that has more than two ‘brains’ when an impact disables one of them. It will be interesting to see if ARM’s vision for DynamIQ extends to that level of redundancy at the SoC level, or if it will be up to ARM’s partners to build it on top of DynamIQ.

Along with the new fabric, ARM stated that a new memory sub-system design is in place to assist with the compute capabilities, although nothing specific was mentioned. On the subject of additional compute, ARM did state that new dedicated processor instructions (such as limited precision math) for artificial intelligence and machine learning will be integrated into a variant of the ARMv8 architecture. We’re unsure if this is an extension of ARMv8.2-A, which introduced half-precision for data processing, or a new version. ARMv8.2-A also adds RAS features and memory model enhancements, which would fit with the ‘new memory sub-system design’ mentioned earlier. When asked about which cores can use DynamIQ, ARM stated that new cores would be required. Future cores will be ARMv8.2-A compliant and will be able to be part of DynamIQ.

ARM’s presentation focused mainly on DynamIQ for new and upcoming technologies, such as AI, automotive and mixed reality, although it was clear that DynamIQ can be used with other existing edge-case use models, such as tablets and smartphones. This will depend on how ARM supports current core designs in the market (such as updates to A53, A72 and A73) or whether DynamIQ requires separate ARM licenses. We fully expect any new cores announced from this point on will support the technology, in the same way that current ARM cores support big.LITTLE.

So here’s some conjecture. Picture a future tablet SoC using DynamIQ that consists of two high-powered cores, four mid-range cores, and two low-power cores in a single cluster, without a dual cluster / big.LITTLE design. Either that or all three types of cores are on different clusters altogether using the new topology. Actually, the latter sounds more feasible from a silicon design standpoint, as well as software management. That being said, the spec sheet of any future design using DynamIQ will now have to list the cores in each cluster. ARM did state that it should be fairly easy to control which cores are processing which instruction streams in order to get either the best performance or the best efficiency as needed.
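As a rough illustration of what that steering could look like for the conjectured three-tier design, here is a toy selection routine that picks a core type for a task, trading speed against efficiency. Everything in it is an assumption on our part: the `CoreType` fields, the `pick_core` helper and the performance and power figures are invented for the example, and none of it reflects ARM’s actual scheduler or any DynamIQ API.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class CoreType:
    name: str
    perf: float   # relative throughput, fastest core = 1.0 (made-up figure)
    power: float  # relative active power, hungriest core = 1.0 (made-up figure)


def pick_core(core_types: List[CoreType], demand: float, prefer: str = "efficiency") -> CoreType:
    """Pick a core type for a task that needs `demand` relative throughput."""
    capable = [c for c in core_types if c.perf >= demand]
    if not capable:
        # Nothing is fast enough, so fall back to the fastest core available.
        return max(core_types, key=lambda c: c.perf)
    if prefer == "performance":
        return max(capable, key=lambda c: c.perf)
    # Efficiency: lowest power spent per unit of work among the capable cores.
    return min(capable, key=lambda c: c.power / c.perf)


# Hypothetical three-tier mix matching the conjecture in the text.
tiers = [
    CoreType("high", perf=1.0, power=1.0),
    CoreType("mid", perf=0.6, power=0.35),
    CoreType("low", perf=0.3, power=0.1),
]

print(pick_core(tiers, demand=0.2).name)                        # low
print(pick_core(tiers, demand=0.5).name)                        # mid
print(pick_core(tiers, demand=0.5, prefer="performance").name)  # high
```

A real scheduler would also have to weigh migration cost, current core load and per-core voltage and frequency states, none of which this sketch attempts.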

ARM states that more information is to come over the next few months.

35 Comments

beginner99 - Tuesday, March 21, 2017

I always wondered why Intel hasn't done something like this yet. Pair of Core2-like CPUs and a pair of Atoms (Silvermont+) cores for a laptop. This removes the need for stuff like CoreM.

Tricky part is how to assign threads to cores. Probably needs a new API and scheduler. Each core communicates its performance and power use and OS schedules background tasks to the slow, powersaving cores and user threads to the fast cores.

tuxRoller - Tuesday, March 21, 2017

A good chunk of the work is done. The model is called EAS.

https://www.linaro.org/blog/core-dump/energy-aware...

https://lwn.net/Articles/706374/

For each soc, part of the bringup will include the (normalized to the fastest core's highest pstate) performance, power states and state change costs. Those values are then used by the scheduler to determine what to place where.

StevoLincolnite - Tuesday, March 21, 2017

Cost probably.

Intel would rather give you a small efficient processor on Idle, that can also clock high when the need arises.

Krysto - Tuesday, March 21, 2017

Because Intel cares first and foremost about profit margin. Core + Atom > just low-clocked Core.

Meteor2 - Tuesday, March 21, 2017

So why does ARM do it? Hopefully they care about profit too. If they don't they'll go out of business.

Rakib - Tuesday, March 21, 2017

They sell licenses, cpu's.

saratoga4 - Tuesday, March 21, 2017

The reason arm does it is explained in this article. Having heterogeneous cores is more power efficient if you need to scale between multiple performance levels.

saratoga4 - Tuesday, March 21, 2017

X86 cores are now so small the cost of the core itself is negligible.

I think the real reason is that Intel tries to use one design for a lot of niches and they don't want to start optimizing heavily for one application the way arm does. I suspect they may have to eventually however.

BurntMyBacon - Wednesday, March 22, 2017

Keep in mind, the cost of the core and the price Intel charges for it are two different things.

Leaving aside whether the cost/price for an x86 core is insignificant, I'm not fully convinced that big.LITTLE is the best approach in the first place. It seems to me that, rather than put the necessary work in to allow for cores to use lower power states and even off states, ARM simply decided that it was more profitable to design a new low power core to sell as the solution and make their customers deal with the increased die area. There is also an associated cost to the end consumer that I certainly wouldn't welcome when only half my cores worth of die space is always inactive. Kind of reminds me of how much money I'm wasting on the IGP die space of an i7-7700K that is effectively dead space. Apple's A9 and predecessors did quite well despite not using big.LITTLE.

Perhaps big.LITTLE would make more sense to me if there were a much larger difference in the processing power of the big and LITTLE cores. That said, I'm not sure what application would care. Phones can't make use of high TDP processors. Tablets may be able to, but not for any meaningful length of time. Laptops can work with higher TDP processors (relative to phones), but an extremely low TDP processor (like a phone) would be unbearably slow, but perhaps there is a niche here. I've played the "use a phone / tablet as a full computer" game on iOS, Android, and Windows and come away less than impressed for anything beyond basic media consumption (even that is lacking in some ways). The associated battery life gains would often go unappreciated anyways given that many laptops can already achieve 8 hrs (north of 12 hrs in some cases) battery life. Desktops and workstations don't care for the low performance cores. Some servers may like low performance cores, but would benefit far more if all the cores were usable simultaneously (so not big.LITTLE).

I do like where this DynamIQ is going, though. Heterogeneous sets of cores with their own sets of compute characteristics that can be active for any appropriate workload regardless of what the other cores are doing makes a lot of sense. Though, I feel like this will get used mainly for high / low power cores, when it would make more sense to use a general purpose sequential core / highly parallel core / special purpose (media decoding, security processing, etc.) split.

extide - Wednesday, May 10, 2017

FYI, current implementations of big.LITTLE do allow for all cores to be used at the same time -- not just cores from one cluster at a time. Only some of the first chips out, and nvidia's chips are like that.