Intel’s 140GB Optane 3D XPoint PCIe SSD Spotted at IDF

by Ian Cutress on August 26, 2016 12:01 PM EST

Posted in: SSDs, Intel, Enterprise, Enterprise SSDs, 3D XPoint, IDF_2016, Optane

As part of this year’s Intel Developer Forum, we had half expected more insight into the new series of 3D XPoint products that would be hitting the market, either in terms of narrower time frames or more detail on the technology. Last year brought some information, including the ‘Optane’ brand name for the storage version. Unfortunately, new information was thin on the ground, and Intel seemed reluctant to say anything about the technology beyond what had already been said.

What we do know is that 3D XPoint based products will come in storage flavors first, with DRAM extension parts to follow in the future. This ultimately comes down to the fact that storage is easier to implement and enable than DRAM, and the requirements for storage are not as tight as those for DRAM in terms of break-neck speed, latency or read/write cycles.

For IDF, Optane was ultimately relegated to a side presentation scheduled at the same time as other important talks, and we were treated to discussions about a ‘software defined cache hierarchy’, whereby a system with an Optane drive can define the memory space as ‘DRAM + Optane’. This means a system with 256GB of DRAM and a 768GB Optane drive can essentially act like a system with ‘1TB’ of DRAM space to fill with a database. The abstraction layer in the software/hypervisor brokers the actual interface between DRAM and Optane, and it should be transparent to applications. This would enable some database applications to move from ‘partial DRAM and SSD scratch space’ into a full ‘DRAM’ environment, which simplifies programming. Of course, performance will be lower than that of an all-DRAM database, but the point is to move databases out of the SSD/HDD environment by making the DRAM space larger.
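To make the 256GB + 768GB idea concrete, here is a minimal Python sketch of a two-tier memory that presents itself as one flat space. It is a toy model under stated assumptions, not Intel's implementation: the class name `TieredMemory`, the page granularity and the least-recently-used demotion policy are all hypothetical, since the presentation did not describe the mechanism at that level of detail.

```python
from collections import OrderedDict


class TieredMemory:
    """Toy model (hypothetical): a fast DRAM tier and a slower Optane tier
    exposed to the caller as a single flat page space."""

    def __init__(self, dram_pages, optane_pages):
        self.dram_pages = dram_pages
        self.optane_pages = optane_pages
        self.dram = OrderedDict()  # page -> data, least recently used first
        self.optane = {}           # page -> data for pages demoted from DRAM
        self.slow_reads = 0        # reads that had to go to the slow tier

    @property
    def capacity(self):
        # The caller only ever sees the sum of both tiers.
        return self.dram_pages + self.optane_pages

    def _place_in_dram(self, page, data):
        # Put the page in DRAM and mark it most recently used; if DRAM
        # overflows, demote the least recently used page to Optane.
        self.dram[page] = data
        self.dram.move_to_end(page)
        if len(self.dram) > self.dram_pages:
            victim, victim_data = self.dram.popitem(last=False)
            self.optane[victim] = victim_data

    def write(self, page, data):
        if not 0 <= page < self.capacity:
            raise IndexError(f"page {page} is outside the address space")
        self.optane.pop(page, None)  # a page lives in exactly one tier
        self._place_in_dram(page, data)

    def read(self, page):
        if page in self.dram:
            self.dram.move_to_end(page)
            return self.dram[page]
        if page in self.optane:
            # Slow path: fetch from Optane and promote the page to DRAM.
            self.slow_reads += 1
            data = self.optane.pop(page)
            self._place_in_dram(page, data)
            return data
        raise KeyError(page)


# One page per "GB" here, purely to mirror the article's 256GB + 768GB example.
mem = TieredMemory(dram_pages=256, optane_pages=768)
for page in range(mem.capacity):
    mem.write(page, f"row-{page}")

print(mem.capacity)                    # 1024
print(mem.read(0))                     # row-0
print(len(mem.dram), len(mem.optane))  # 256 768
```

The caller never chooses a tier: it reads and writes page numbers, and the only visible difference between a DRAM hit and an Optane hit is how long the access would take on real hardware.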

Aside from the talk, there were actually some Optane drives on the show floor, or at least what we were told were Optane drives. These were PCIe x4 cards with a backplate and a large heatsink, and despite my many requests neither demonstrator would take the card out to show what the heatsink looked like. What's more, neither drive was actually being used: one demonstration was showing a pre-recorded video of a rendering result using Optane, and the other was running a slideshow with results of Optane on RocksDB.

I was told in both cases that these were 140 GB drives, and even though nothing was running I was able to feel the heatsinks – they were fairly warm to the touch, at least 40°C if I had to put a number on it. One of the demonstrators was able to confirm that Intel has now moved from an FPGA-based controller to its own ASIC, although it was still in the development phase.


One demo system was showing results from a presentation given earlier in the lifespan of Optane: rendering billions of water particles in a scene where most of the scene data was being shuffled from storage to memory and vice versa. In this case, compared to Intel’s enterprise PCIe SSDs, the rendering time dropped from 22 hours to around 9 hours.
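As a quick sanity check on those figures, the arithmetic works out as follows. The 9-hour value is the approximate number quoted in the demo, so the results are rough.

```python
baseline_hours = 22.0  # enterprise PCIe SSD run, as quoted in the demo
optane_hours = 9.0     # Optane run; the demo quoted roughly 9 hours

speedup = baseline_hours / optane_hours
time_saved = 1 - optane_hours / baseline_hours

print(f"{speedup:.1f}x faster, {time_saved:.0%} less render time")
# prints "2.4x faster, 59% less render time"
```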

It's worth noting that we can see some BGA pads on the image above. The pads seem to be in an H shape, and there are several present, indicating that these should be for the 3D XPoint ICs. Some of the pads are empty, suggesting that the same board design could accommodate a larger-capacity model. Given that one of the benefits of 3D XPoint is density, we're hoping to see a multi-terabyte version at some point in the distant future.

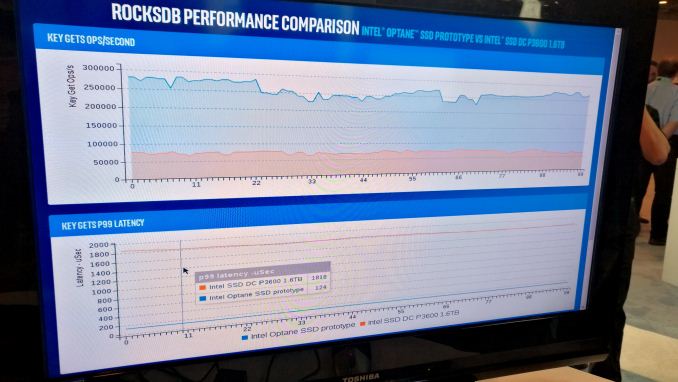

The other demo system was a Quanta / Quanta Cloud Technology system node, featuring two Xeon E5 v4 processors and a pair of PCIe slots on a riser card – the Optane drive was put into one of these slots. Again, it was all but impossible to see more of the drive than its backplate, but the onscreen presentation of RocksDB was fairly interesting, especially as it mentioned optimizing the software for both the hardware and Facebook.

RocksDB is a high-performance key-value store designed for fast embedded storage, used by Facebook, LinkedIn and Yahoo. The fact that Facebook was directly involved in some of the testing indicates that at some level the interest in 3D XPoint will brush the big seven cloud computing providers before it hits retail. In the slides on screen, the data showed a 10x reduction in latency as well as a 3x improvement in database GETs. There was a graph plotted showing results over time (not live data), with the latency metrics being pretty impressive. It’s worth noting that there were no results shown for storing key/value data pairs.

Despite these demonstrations on the show floor, we’re still crying out for more information about 3D XPoint and how exactly it works (we have a good idea but would like confirmation), about Optane (price, time to market), and about the generation of DRAM products for enterprise that will follow. Intel being comparatively low key about this during IDF is a little concerning, and I’m expecting to see/hear more about it during Supercomputing16 in mid-November. For anyone waiting on an Optane drive for consumer use, it feels like it won’t be out as soon as you think, especially if the big seven cloud providers want to buy every wafer from the production line for the first few quarters.


66 Comments


tspacie - Friday, August 26, 2016 - link

Did they say how the "software defined cache hierarchy" was different from a paging file and OS virtual memory?

Omoronovo - Friday, August 26, 2016 - link

The difference is in abstraction. Paging files or swap space are explicitly advertised to software; software which knows there's 8GB of RAM and an 8GB swap area/page file will likely modify its behaviour to fit within the RAM envelope instead of relying on the effective (16GB) working space.

The idea of the software defined cache hierarchy is that everything below the hypervisor/driver layer only sees one contiguous space. It's completely transparent to the application. In the above example, that would mean the application would see 8GB+*size of xpoint* as RAM, then whatever else on top as paging area.

saratoga4 - Friday, August 26, 2016 - link

>The difference is in abstraction. Paging files or swap space are explicitly advertised to software; software which knows there's 8GB of ram and an 8GB swap area/page file will likely modify its behaviour to fit within the RAM envelope instead of relying on the effective (16gb) working space.

While you can of course query the operating system to figure out how much physical memory it has, for all intents and purposes the page file and physical memory form one unified continuous memory space (literally, that is what the virtual address space is for). Where a page resides (physical memory or page file) is NOT advertised to software.

>The idea of the software defined cache hierarchy is that everything below the hypervisor/driver layer only sees one contiguous space. It's completely transparent to the application.

Which is how virtual memory works right now. The question is what is actually different.

Omoronovo - Friday, August 26, 2016 - link

The virtual address space - which has multiple definitions depending on your software environment, so let's assume Windows - is limited by the bit depth of the processor and has nothing directly to do with Virtual Memory. Applications that have any kind of realistic performance requirement - such as those likely to be used in early adopter industries of this technology - most certainly need to know how much physical memory is installed.

The abstraction (and the point I was trying to make, apologies for any lack of clarity) is that right now an application can know exactly how much physical address space there is, simply by querying for it. That same query in a system with 8GB of RAM and a theoretical 20GB XPoint device configured as in the article will return a value of 28GB of RAM to the application; the application does not know it's 8GB+20GB, and will therefore treat it exactly as if there were 28GB of physical RAM. This sits above Virtual Memory in the system hierarchy, so any paging/swap space in addition to this will be available as well, and treated as such by the application.

The primary benefit to this approach is that no applications need to be rewritten to take advantage of the potentially large performance improvements from having a larger working set outside of traditional storage - even if it isn't quite as good as having that entire amount as actual physical RAM.

Hopefully that makes more sense.

saratoga4 - Friday, August 26, 2016 - link

>The virtual address space - which has multiple definitions depending on your software environment, so lets assume Windows - is limited by the bit depth of the processor and has nothing directly to do with Virtual Memory.

Actually virtual address space and virtual memory are the same thing. Take a look at the Wikipedia article.

> Applications that have any kind of realistic performance requirement - such as those likely to be used in early adopter industries of this technology - most certainly need to know how much physical memory is installed.

This is actually not how software works on Windows. You can query how much physical memory you have, but it is quite rare.

Dumb question: have you ever done any Windows programming? I get the impression that you are not aware of how VirtualAlloc works on Windows...

>The primary benefit to this approach is that no applications need to be rewritten to take advantage of the potentially large performance improvements from having a larger working set outside of traditional storage - even if it isn't quite as good as having that entire amount as actual physical RAM.

This is actually how Windows has worked for the last several decades. Pages can be backed by different types of memory or none at all, and software has no idea at all about it. What you are describing isn't above or below virtual memory, it is EXACTLY virtual memory :)

This is what the original question was asking, what is changing vs the current system where we have different types of memory hidden from software using a virtual address space.

dave_s25 - Friday, August 26, 2016 - link

Exactly. Windows even has a catchy marketing term for "Software Defined Cache": ReadyBoost. Linux, the BSDs, all major server OSes can do the exact same thing with the virtual page file.

Omoronovo - Friday, August 26, 2016 - link

Sorry guys, that's about as well as I can describe it since I'm a hardware engineer, not a software engineer, and this is how it was described to me at a high level when I was at Computex earlier this year.

I'm unlikely going to be able to describe in enough specificity to satisfy you, but hopefully Intel themselves can do a better job of explaining it when the stack is finalised; until now a lot of us are having to make a few assumptions (including me, and I should have made this more clear from my first post).

Of particular interest is hypervisors being able to provision substantially more RAM than the system physically contains without VM contention as happens with current memory overcommitment technologies like from VMware. A standard virtual memory system cannot handle this kind of situation with any kind of granularity; deduplication can only take you so far in a multi-platform environment.

Exciting times ahead for everyone, I'm just really glad the storage market isn't going to turn into the decades of stagnation we had with hard disks since SSD's are now mainstream.

jab701 - Saturday, August 27, 2016 - link

Hi,

I have been reading this thread and I thought I might answer, as I have experience designing hardware working in virtual memory environments.

Personally, I think this Optane stuff fits nicely into the current virtual memory system. Programs don't know (or care) where their data is stored, provided that when they load from/store to memory the data is where it is meant to be.

Optane could behave like an extra level of the cache hierarchy or just like extra memory. The OS will have to know what to do with it, #1 if it is like a cache then it needs to ensure that it is managed properly and flushed as required. #2 If it is like normal memory then the OS can map virtual pages to the physical memory address space where optane sits, depending on what is required.

Situation #1 is more suited to a device that looks like a drive, where the whole drive isn't completely IO mapped and is accessed through "ports". #2 is suited to the situation where the device appears as memory usable by the OS.

Software defined cache hierarchy would mean the device is like readyboost...but the cache is managed by the OS and not transparently by the hardware...

Sorry a bit rambley :)

Klimax - Saturday, August 27, 2016 - link

Sorry, but Virtual Address Space is NOT Virtual Memory. See:

https://blogs.msdn.microsoft.com/oldnewthing/20040...

Omoronovo - Saturday, August 27, 2016 - link

This is what I was referring to in a broader context, but since I'm not any kind of software engineer (beyond implementing it of course), I didn't feel justified in trying to argue the point with someone who actually does it for a living. Thanks for clarifying. For what it's worth, I don't think much of what I said was too far off base, but when Intel releases the implementation details we'll be able to know for sure. Hopefully sooner rather than later, I doubt Western Digital or Sandisk/HP are likely to stay quiet about their own SCM products for long.