Intel Announces Xeon E3-1500 v5: Iris Pro and eDRAM for Streaming Video

by Ian Cutress on May 31, 2016 2:00 AM EST

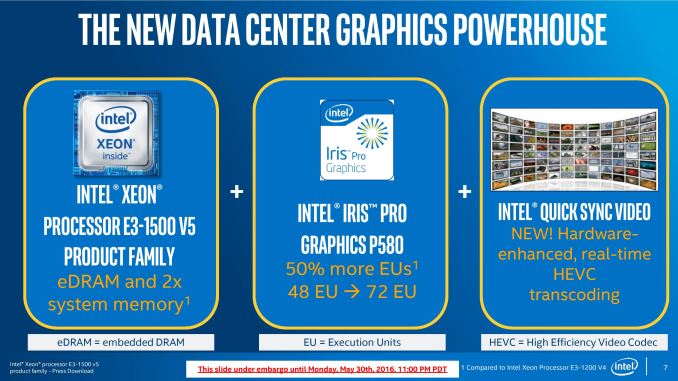

The rise of video streaming services, especially live services, has accelerated the need for dynamic, on-the-fly conversion of video content and for the infrastructure to do it. Moving from HD to FHD and 4K, as well as to 360-degree video, requires a lot of immediate compute power to keep up with the event being filmed while retaining enough quality to maintain the user experience. Traditionally there are four ways to do this: raw CPU horsepower, FPGAs, custom fixed-function ASICs, or GPUs. In line with this, Intel is releasing its new E3-1500 v5 series of processors with a primary focus on Intel Quick Sync. These are Skylake-based CPUs with four cores and hyperthreading, backed by Iris Pro graphics with up to 72 execution units and a redesigned embedded DRAM to accelerate computation over the previous generation.

These fit between Intel’s E3-1200 v5 Xeon processors, which are standard Skylake based Xeons up to four cores, and Intel’s E5-1600/2600 v4 Broadwell-based Xeons with up to 22 cores in a single socket:

| Intel Xeon Families | E3-1200 v5 | E3-1500 v5 | E5-1600 v4, E5-2600 v4 | E7-4600 v3 | E7-8800 v3 |
|---|---|---|---|---|---|
| Core Family | Skylake | Skylake | Broadwell | Haswell | Haswell |
| Core Count | 2 to 4 | 2 to 4 | 4 to 22 | 4 to 18 | 4 to 18 |
| Integrated Graphics | Few, HD 520 | Yes, Iris Pro | No | No | No |
| DRAM Channels | 2 | 2 | 4 | 4 | 4 |
| Max DRAM Support (per CPU) | 64 GB | 64 GB | 768 GB | 512 GB | 512 GB |
| DMI/QPI | DMI 3.0 | DMI 3.0 | 2600: 1xQPI | 3 QPI | 3 QPI |
| Multi-Socket Support | No | No | 2600: 1S or 2S | 1S, 2S or 4S | Up to 8S |
| PCIe Lanes | 16 | 16 | 40 | 40 | 40 |
| Cost | $213 to $612 | Unknown | $294 to $4115 | $1223 to $7007 | $4061 to $7174 |
| Suited For | Entry Workstations | QuickSync, Memory Compute | High-End Workstation | Many-Core Server | World Domination |

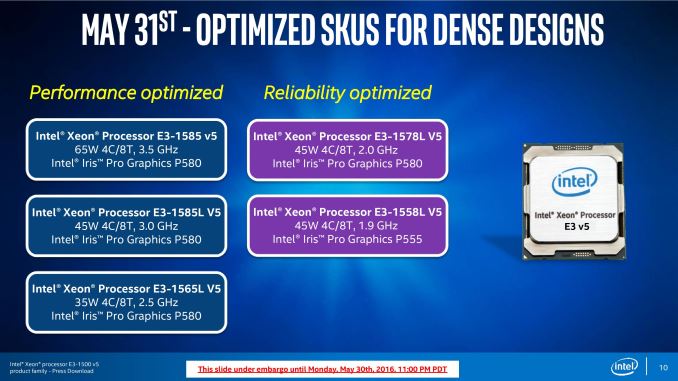

Five processors are set to be released under the E3-1500 v5 name: one at 65W, three at 45W, and one at 35W. The main performance differentiator between them is processor frequency, although the E3-1558L v5 will also have a slightly cut-down version of Iris Pro graphics. All CPUs will be soldered-down ‘BGA’ models, to be directly embedded onto the motherboard. We were told by Intel that there are no plans to release socketable versions of these processors at this time, meaning that the mock-up design of the processor on the Intel slide below is just for show, and not a real representation of these parts.

Four of the five processors have Iris Pro Graphics P580, the largest implementation of Intel’s Gen 9 integrated graphics at 72 execution units (72 EUs), along with the largest application of embedded DRAM at 128 MB; this is colloquially known as GT4e, giving a 4+4e silicon die (four cores plus GT4e). The E3-1558L v5, using Iris Pro Graphics P555, is the oddball of the bunch. The P555 arrangement uses only 48 EUs, or Intel’s GT3, with 128 MB of embedded DRAM, making it GT3e. With four cores, this is 4+3e. At the launch of Intel’s Skylake platform, and more recently at events, Intel’s silicon roadmap only afforded two variants of Iris Pro: 2+4e with 64 MB of eDRAM, or 4+4e with 128 MB of eDRAM. This means that the 4+3e is most likely a cut-down version of a 4+4e chip, with part of the graphics disabled.

| Intel E3-1500 v5 Xeon Family | E3-1585 v5 | E3-1585L v5 | E3-1578L v5 | E3-1565L v5 | E3-1558L v5 |
|---|---|---|---|---|---|
| TDP | 65W | 45W | 45W | 45W | 35W |
| Cores | 4 | 4 | 4 | 4 | 4 |
| Base Frequency | 3.5 GHz | 3.0 GHz | 2.0 GHz | 2.5 GHz | 1.9 GHz |
| Turbo Frequency | 3.9 GHz | 3.7 GHz | 3.4 GHz | 3.5 GHz | 3.3 GHz |
| Integrated Graphics | Iris Pro P580 | Iris Pro P580 | Iris Pro P580 | Iris Pro P580 | Iris Pro P555 |
| Execution Units | 72 | 72 | 72 | 72 | 48 |
| eDRAM | 128 MB | 128 MB | 128 MB | 128 MB | 128 MB |
| GPU Frequency | 350 MHz | 350 MHz | 700 MHz | 350 MHz | 650 MHz |
| GPU Turbo Frequency | 1150 MHz | 1150 MHz | 1000 MHz | 1050 MHz | 1000 MHz |
| Form Factor | BGA | BGA | BGA | BGA | BGA |

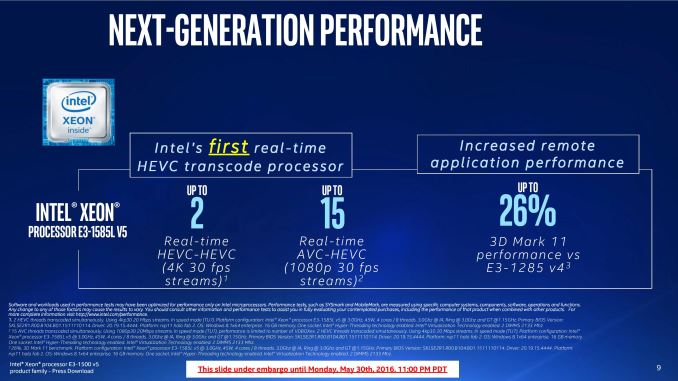

While we’re unlikely to get these units in hand anytime soon, Intel has listed the performance of the top 45W part as up to 26% faster in a synthetic benchmark than the previous-generation Broadwell edition at 65W; most of this will be down to the increase in execution units between the two. For HEVC, the top 45W part is reported as supporting, in real time, two streams doing HEVC-to-HEVC 4K30 transcodes (essentially splitting a scene in two directions). Alternatively, Intel lists the performance for AVC-to-HEVC 1080p30 capture and encode as 15 simultaneous streams, suitable for a multi-camera live event.
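To put the two quoted workloads on a common footing, the raw pixel rate of each can be worked out. This is only a rough sketch: it assumes 4K means 3840x2160 and 1080p means 1920x1080 (Intel's figures do not state the exact resolutions), the function name is ours, and it ignores the difference between HEVC and AVC input, so it says nothing definitive about encoder effort per pixel.

```python
# Rough pixel-throughput comparison of the two workloads Intel quotes.
# Assumptions (not from Intel): 4K = 3840x2160, 1080p = 1920x1080.

def pixel_rate(width: int, height: int, fps: int, streams: int) -> int:
    """Pixels per second processed across all simultaneous streams."""
    return width * height * fps * streams

hevc_4k30 = pixel_rate(3840, 2160, 30, streams=2)     # 2x HEVC-to-HEVC 4K30
avc_1080p30 = pixel_rate(1920, 1080, 30, streams=15)  # 15x AVC-to-HEVC 1080p30

print(f"2 x 4K30:     {hevc_4k30 / 1e6:.1f} Mpixel/s")
print(f"15 x 1080p30: {avc_1080p30 / 1e6:.1f} Mpixel/s")
print(f"ratio:        {avc_1080p30 / hevc_4k30:.3f}")
```

Under these assumptions the 15-stream 1080p30 case moves 1.875 times as many pixels per second as the two 4K30 transcodes, which would be consistent with the HEVC-to-HEVC 4K path being the heavier workload per pixel, although Intel did not break this down.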

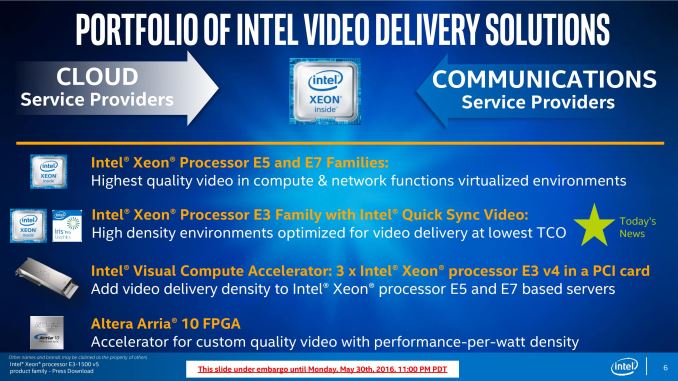

Intel’s focus on video conversion and delivery systems is not small. After introducing processors with embedded DRAM (code named Crystal Well) combined with the Broadwell CPU architecture last generation in a variety of solutions, Intel are now expanding their lines depending on the quality, cost or speed required by the customer.

Intel likes to promote that their solution line covers both cloud and communications with a range of applicable products. The performance-per-watt element of the stack is the Altera Arria family of FPGAs, now that Altera is formally merged with Intel. We are under the impression that Intel will start to integrate FPGA functionality in their main product lines over the next decade, but at this point a discrete FPGA is the peak perf/watt solution.

For users who want compute density, in a limited space, Intel offers its Visual Compute Accelerator through partners, and these cards put three Iris Pro enabled processors from the last generation on a single 225W PCIe card. These can be added into any system similar to GPUs or MICs, making it platform agnostic. The focus of these cards is typically for the E5/E7 servers.

Aimed at high density as well, but with the lowest total cost of ownership, are the new E3-1500 v5 CPUs using second-generation eDRAM and Iris Pro graphics with Skylake cores. With Intel Quick Sync integrated into the processor, Intel aims this as an HEVC streaming solution with new features.

At the top of the stack are the E5 and E7 processor lines, using multiple CPUs in a single system and extracting parallelism, focusing on high-quality video but at the highest cost. These can be bundled with the Visual Compute Accelerators to allow for high density when a mix of quality and HEVC-accelerated Quick Sync is needed.

The other half of today’s announcement revolves around virtualization. When a virtualized server uses the same hardware across multiple clients/users, typically a time-share slicing methodology is used to give each user a suitable amount of processor time. Depending on the capability of the hypervisor and the underlying hardware, there are limits to how much software can be used by all users at once, or who has access to specific hardware features. With the new generation of Skylake plus Iris Pro based Xeon E3-1500 v5 processors, Intel is supporting new Graphics Virtualization Technology (GVT) modes under Citrix XenServer 7.0 to start.

Previously, Intel’s Xeons with Iris Pro could support two GVT modes.

The first, GVT-s, allowed API virtualization such that multiple users could take advantage of the same software package on the same hardware. This could be applied in design studios all using the same application or web servers where multiple clients tap into the same API.

Second is GVT-d, giving direct control of all the resources to one user. This adjusts the time slicing such that one user essentially owns 100% of the CPU time, as if there were no virtualization in play. Typically this might occur anyway when the other users are idle; however, with assigned resources, certain VMs can elevate their hypervisor priority when they need processing power immediately, while the other VMs doing time-insensitive work get fewer resources and take longer in wall-clock time to finish.

The new element with Skylake Xeons with Iris Pro is GVT-g, or a shared platform between seven users on a single processor. This extends the first mode, GVT-s, to allow any user to use any application sufficient for the hardware. This is achieved through both driver and API virtualization, and comes with the Intel drivers at no extra cost. The first product to support the GVT-g mode will be Citrix XenServer 7.0, and we are told that others will follow.

There are some other features of the new processors not mentioned in Intel’s slide deck that we probed the company about. First is Skylake’s new Speed Shift feature, which allows an OS-level driver to hand control of dynamic frequency/voltage scaling back to the CPU, affording faster frequency changes (1-3 ms rather than 30-100 ms) for quicker response and power saving. When used as a normal CPU without virtualization, these CPUs will support Speed Shift under Windows 10. However, in a virtualized environment, Speed Shift is not supported even if the OS in each VM does support it. Intel did not go into detail as to why this is the case, but it would seem that Speed Shift requires some extra hardware or software work for this instance.

Next up are the HEVC encoding requirements. We asked Intel to clarify support for various HEVC modes, and we received the following:

- HW HEVC Main 8b 4:2:0 (E3-1500 v5 only. This requires Intel MSS 2017)

- GPU accelerated HEVC 10b 4:2:0 (Supported on E3-1200 v3, E3-1200 v4, and E3-1500 v5. Enabled with Intel MSS professional 2016+)

- SW HEVC 10b 4:2:2 (Recommended for Intel® Xeon® processor E5 and E7 families. Enabled with Intel MSS professional 2016+)

- You may use Media Server Studio 2016 R6 – Professional Edition that includes HEVC SW as well as GPU-accelerated. Check https://software.intel.com/en-us/intel-media-server-studio/details#professional for details.

- HEVC full hardware on Linux requires Intel MSS 2017, which is in Engineering Release for ISVs and OEMs.
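The support list above can be read as a lookup from HEVC mode to encode path. The sketch below restates it as data; the dictionary layout, key strings and the function name `hevc_modes_for` are our own illustrative choices, and only the entries themselves come from Intel's reply.

```python
# Illustrative restatement of the HEVC support list as a lookup table.
# The structure and names are hypothetical; the entries come from Intel's reply.

HEVC_PATHS = {
    "Main 8b 4:2:0": {
        "path": "hardware",
        "processors": {"E3-1500 v5"},
        "software": "Intel MSS 2017",
    },
    "10b 4:2:0": {
        "path": "GPU accelerated",
        "processors": {"E3-1200 v3", "E3-1200 v4", "E3-1500 v5"},
        "software": "Intel MSS Professional 2016+",
    },
    "10b 4:2:2": {
        "path": "software",
        "processors": {"E5", "E7"},  # listed as recommended, not required
        "software": "Intel MSS Professional 2016+",
    },
}

def hevc_modes_for(processor: str) -> list[str]:
    """Return the HEVC modes the list associates with a processor family."""
    return [
        mode
        for mode, info in HEVC_PATHS.items()
        if processor in info["processors"]
    ]

print(hevc_modes_for("E3-1500 v5"))
```

Querying `"E3-1500 v5"` returns both the hardware 8-bit mode and the GPU-accelerated 10-bit mode, which reflects why Intel positions this family as the Quick Sync HEVC part.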

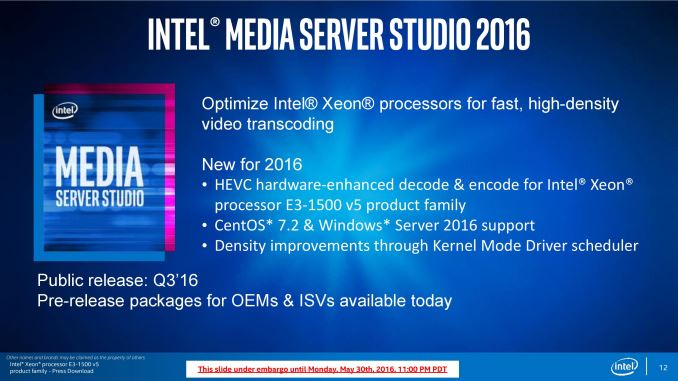

Alongside the E3-1500 v5 announcement, Intel is also announcing Media Server Studio 2017.

The press slide says it all: the package aims to be an optimized set of libraries for Iris Pro enabled CPUs with various OS support. OEMs and ISVs can obtain pre-release software, and it will have general availability in Q3 2016.

Back to the processors, and Intel has several partners who have developed or are developing systems around the new Skylake Xeons with Iris Pro graphics, including Hewlett Packard Enterprise, Supermicro, Kontron, ASRock Rack, Adlink and Artesyn. We expect these companies (and others) to make announcements over Q2 and Q3.

Analysis and Future Conjecture

A further note to add into the mix. It would seem that Intel is (at least in part of the product stack) dedicated to the Iris Pro strategy of bundling embedded DRAM with their CPU cores. We remember that a number of review websites and analysts particularly praised the commercial release of the Broadwell editions for consumers, and requested that Intel expand this program. Based on what we heard in this announcement, I feel (personal injection) that Intel will most likely keep eDRAM-enabled parts in the embedded space, and particularly for Xeons, for the foreseeable future.

From a revenue and profit perspective, Intel’s goal is to sell high-end, high-margin E5/E7 parts which can do similar things but cost up to 10x. Offering server-level eDRAM parts at consumer prices when there is no competition in that space could steer potential customers towards the cheaper options, lowering Intel’s potential revenue, which is why we only see Iris Pro on quad-core ECC-enabled processors at this time. There are a few Iris Pro enabled SKUs in the consumer space, but as mobile parts or for mini/all-in-one machines, rather than full-blown gaming systems or workstations.

The Xeon E3-1500 line seems set to stay as the eDRAM and Iris Pro enabled parts, and it makes me wonder if that is how it will stay, or if Intel will migrate the eDRAM up to E5-2500 type parts in the extended future when it feels there is competition (either from other CPUs, FPGAs or GPUs). There’s also the angle of Intel’s acquisition of Altera, the FPGA company, which should mean that an FPGA-like structure should be an obvious feature in a future commercial core at some point, but at the minute Intel is keeping its cards close to its chest.

But for now, we are told that the embedded/BGA method for Iris Pro is the line we will see.

28 Comments


qap - Tuesday, May 31, 2016 - link

Has anyone tested quality of hardware HEVC encoders lately? At work we need realtime video encoding, but last time we did our research, output from hw HEVC encoders was barely comparable to software AVC encoders set to "fast" (=low quality, so we could process them in realtime).

JoeyJoJo123 - Tuesday, May 31, 2016 - link

"Software encoding" (specifically x264, and to an extent the relatively newer x265) are the best software libraries when encoding live video to H.264 encoded content.

I really don't like the terms "hardware encoders" and "software encoders" because in either instance, both hardware and software are involved. In other words, "hardware encoders" use dedicated encoding chips (hardware) baked into other hardware (CPU/GPU) to offload otherwise intensive operations, and use dedicated (often proprietary) software to do these processes. It's the same thing with "software encoders" which use CPU resources to process these intensive operations, and use software libraries such as x264 to do these processes.

qap - Tuesday, May 31, 2016 - link

Great. You have proven how widely used technical terms bothers you. Now - do you have anything relevant about the question I asked?

JoeyJoJo123 - Tuesday, May 31, 2016 - link

My post is relevant, but because your intelligence prevents you from reread the post to understand the message, I'll spoonfeed you again. I've made it clear that the best quality option is x264, quite clearly, so here goes again.

Intel's QuickSync has poorer quality. (Reasonably quick, but prone to lose frames and to have blocky video artefacts.)

AMD's Video Coding Engine (VCE) is the same story.

Nvidia's Shadowplay is the same story.

To date, all these "hardware encoding" options have used an underpowered purpose-built chip running proprietary software to handle the encoding. Meanwhile, the power of open-source software (x264) and traditional "software encoding" allows the community to make truly high quality video encoding possible. That being said, most livestreaming is usually done on the "Fastest" setting in Xsplit, OBS, etc for minimal delay to the stream, even with powerful hardware, but if you don't mind delay and want to record high-quality local copies of the video file, some people use slower presets.

Also, instead of asking Anandtech comments, which are a festering pile of arguments and nonsense, you can also Google "x264 quicksync shadowplay vce comparison" and judge the quality for yourself.

Oh and by the way, nobody's entitled to having someone else digest easily researchable information and feed you like a mother bird in the comments section of an article.

qap - Wednesday, June 1, 2016 - link

Using your words - is "your intelligence preventing you" understanding the meaning of the words "lately" and "test"? In original post you gave me your opinion (!= test). And in this one you gave me extremely helpful suggestion "go google" (if you did it yourself, you would find problem with the word "lately").

Then you ranted about how it is implemented - everyone who regularly reads AT knows how, but everyone else understood that it is not relevant if someone is looking for a recent test.

JoeyJoJo123 - Wednesday, June 1, 2016 - link

Dude, seriously?

You got your answer, why are you still complaining about the answer that was provided to you, free of charge?

bobdvb - Monday, June 6, 2016 - link

There are proprietary AVC codecs which are superior to x264, x264 just happens to be the most easily integrated with most people's workflows. There are hardware ASICs for AVC encoding which can't really be called software codecs because they are heavily dependent on the hardware that they are coded for where as most software codecs are using more generic mathematics perhaps optimised by the encoder. And bindings to hardware such as QuickSync show problems because they are hardware encoder blocks which simply aren't very good.

bobdvb - Monday, June 6, 2016 - link

http://www.ambarella.com/products/broadcast-infras...

azrael- - Tuesday, May 31, 2016 - link

They lost me at "soldered down BGA". If they had been socketed I'd very much have considered building a system around one of them.

r3loaded - Tuesday, May 31, 2016 - link

"From a revenue and profit perspective, Intel’s goal is to sell high-end, high margin E5/E7 parts which can do similar things but cost up to 10x. By offering server level eDRAM parts at consumer prices when there is no competition in that space could drive potential customers for cheaper options, lowering Intel’s potential, and why we only see Iris Pro on quad core ECC-enabled processors at this time. There are a few Iris Pro enabled SKUs at the consumer space, but as mobile parts or for mini/all-in-one machines, rather than full blown gaming systems or workstations."

Translation: Intel are committed to the cause of being asshats about this. AMD really needs to hit it out of the park with Zen.