ARM Announces New Cortex-R8 Real-Time Processor

by Andrei Frumusanu on February 17, 2016 7:01 PM EST - Posted in

- Mobile

- Arm

- Embedded

- Embedded World

- Cortex R8

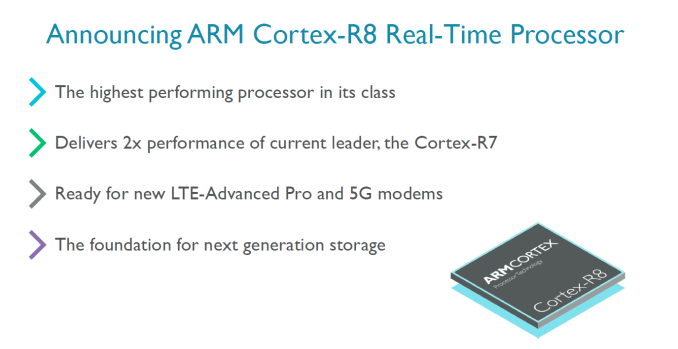

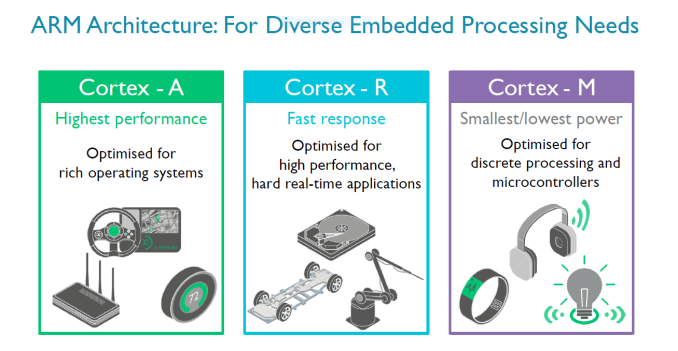

ARM’s Cortex-R range of processor IP is something we haven’t talked about much in the past, yet it’s a crucial part of ARM’s business and is integrated in a lot of devices. ARM divides its CPU offerings into three categories. At the high-performance end we find the Cortex-A profile of application processors, which most of us should be familiar with, as cores such as the Cortex-A53 and Cortex-A72 are ubiquitous in today’s smartphone media coverage and get the most attention. The low end should also be pretty familiar: the Cortex-M microcontrollers are found in virtually any conceivable gadget out there and have also seen increased exposure in the mobile segment due to their use as the brain inside both discrete and SoC-integrated sensor hubs.

The Cortex-R profile of real-time processors, on the other hand, has seen relatively little coverage because its use cases are more specialized. Today, with the announcement of the new Cortex-R8, we’ll be covering one well-established segment as well as a growing application for ARM’s real-time processors.

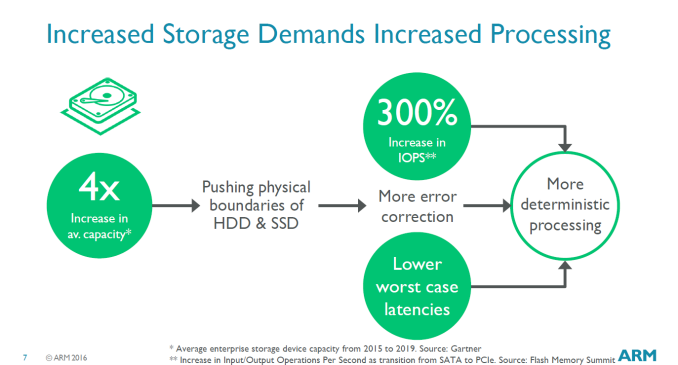

In storage devices such as disk drive controllers, the Cortex-R processors are well established, as such systems require response times in the microsecond range. These systems use increasingly complex algorithms for error correction as well as for the control software. SSDs in particular require ever higher-performance controllers as data rates increase with each generation. ARM discloses that currently all major hard drive and SSD manufacturers use controllers based on Cortex-R processors, which is, to say the least, an interesting market position.

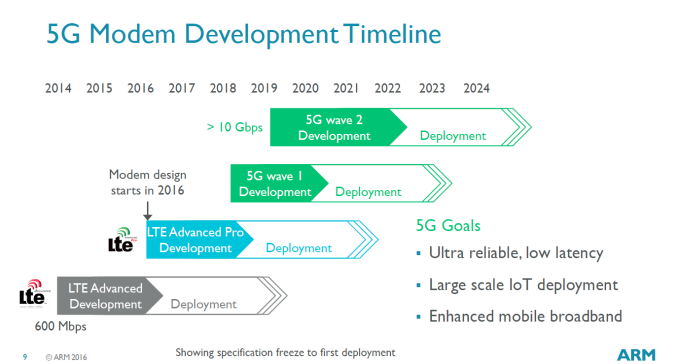

Today’s announcement of the Cortex-R8 was particularly centred on the use of R-profile processors in the modem space, with a focus on the higher performance needed to run future cellular standards such as LTE Advanced Pro and 5G. Here the processors are used to schedule the data flows through the signal processing for reception and transmission, as well as to run the protocol stack’s software tasks. These are so-called hard real-time tasks, in which the processor must respond to events in the communication channel with microsecond granularity. New standards such as 5G will vastly increase transmission speeds into the gigabits per second, with complex carrier frequency and MIMO configurations, which will also increase the feature-set requirements and workloads for the modem processors.

ARM also discloses that modem designers increasingly want Layer-1 scheduling activities to be handled by software running on the processor, providing more flexibility across different standards, something which would require a lot of investment and R&D to do in hardware.

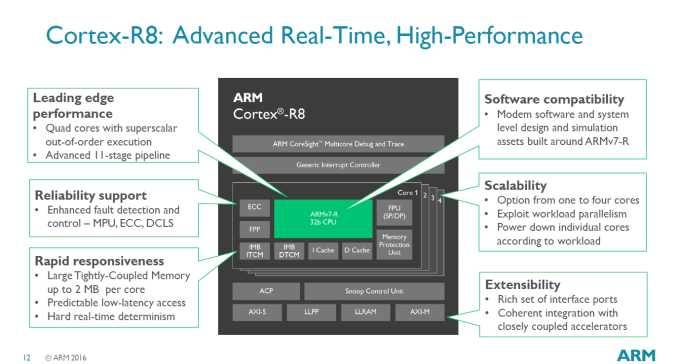

The Cortex-R8 is similar in architecture to the R7: we still see an 11-stage OoO (Out-of-Order) execution pipeline and clocks of up to 1.5GHz on a 28nm HPM process. The differences are found in the configuration options. The new core can now be deployed in a quad-core configuration, versus the dual-core limit of the R7, doubling the theoretical processing power over its predecessor. The cores can also be run asymmetrically, and each has its own power plane, meaning they can be turned off individually for power savings and increased battery life. While concrete performance figures were a bit scarce, ARM talks about an example quad-core configuration on a 28nm or 16nm FinFET process being able to reach up to 15,000 Dhrystone MIPS at 1.5GHz.
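As a rough sanity check on ARM's example figure, the arithmetic below divides the quoted 15,000 DMIPS across four cores and a 1.5GHz clock. It is a minimal sketch that assumes the aggregate figure scales perfectly and splits evenly across the cores, which ARM did not state; the helper name `dmips_per_mhz_per_core` is hypothetical.

```python
# Back-of-the-envelope check of ARM's example: quad-core Cortex-R8,
# up to 15,000 DMIPS at 1.5GHz.
# Assumption: the aggregate figure splits evenly across the cores.
TOTAL_DMIPS = 15_000  # ARM's quoted example, quad-core configuration
CORES = 4
FREQ_MHZ = 1_500      # 1.5GHz


def dmips_per_mhz_per_core(total_dmips: float, cores: int, freq_mhz: float) -> float:
    """Hypothetical helper: aggregate DMIPS normalised per core and per MHz."""
    return total_dmips / (cores * freq_mhz)


per_core = TOTAL_DMIPS / CORES
print(f"per core: {per_core:.0f} DMIPS")
print(f"per core per MHz: {dmips_per_mhz_per_core(TOTAL_DMIPS, CORES, FREQ_MHZ):.2f} DMIPS/MHz")
```

Under that even-split assumption, the example works out to 3,750 DMIPS per core, or 2.5 DMIPS/MHz per core.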

Cortex-R processors can employ a low-latency on-CPU memory called Tightly-Coupled Memory (TCM), which serves as a predictable, guaranteed memory subsystem able to service interrupts with code and data as quickly as possible, avoiding the longer and less deterministic latencies of fetching data through the cache memory system. The Cortex-R8 significantly increases the size of the TCM, now providing up to 2MB (1MB instruction and 1MB data, up from 128KB instruction/data on the R7) of TCM per core, for a maximum of 8MB in a quad-core configuration.
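The TCM capacities quoted above can be tallied in a few lines. This sketch reads "128KB instruction/data" as 128KB of instruction TCM plus 128KB of data TCM per R7 core, which is an interpretation rather than something spelled out here; the constant and function names are hypothetical.

```python
# Tally of per-core and total TCM capacity, R7 vs R8.
# Assumption: the R7 figure means 128KB instruction + 128KB data per core.
KB = 1024
MB = 1024 * KB

R7_TCM_PER_CORE = 128 * KB + 128 * KB  # instruction + data (assumed reading)
R8_TCM_PER_CORE = 1 * MB + 1 * MB      # 1MB instruction + 1MB data
R8_MAX_CORES = 4


def total_tcm(per_core_bytes: int, cores: int) -> int:
    """Hypothetical helper: TCM summed over all cores in a cluster."""
    return per_core_bytes * cores


print(R8_TCM_PER_CORE // MB, "MB per R8 core")
print(total_tcm(R8_TCM_PER_CORE, R8_MAX_CORES) // MB, "MB in a quad-core R8")
print(R8_TCM_PER_CORE // R7_TCM_PER_CORE, "x the R7's per-core TCM")
```

On that reading, the R8's 2MB per core is eight times the R7's 256KB per core, and a quad-core R8 tops out at 8MB.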

ARM disclosed that one of the licensees is Huawei:

“The ARM architecture is the trusted standard for real-time high-performance processing in modems,” said Daniel Diao, deputy general manager, Turing Processor Business Unit, Huawei. “As a leader in cellular technology, Huawei is already working on 5G solutions and we welcome the significant performance uplift the Cortex-R8 will deliver. We expect it to be widely deployed in any device where low latency and high performance communication is a critical success factor.”

Among other licensees, we'll also definitely see vendors such as Samsung, which currently deploys Cortex-R cores inside its modems, such as the Shannon 333 found in last year's Galaxy devices.

22 Comments

twotwotwo - Wednesday, February 17, 2016

It just shows what I don't know, but it's interesting to me to see 'out-of-order' and 'real-time' together. I thought the branch prediction you typically see in OoO, and the misprediction penalties that come with it, would be bad for worst-case performance (your limiter in hard real-time situations), even if good in the average case.

webdoctors - Wednesday, February 17, 2016

I'm not sure what the definition of real-time is for this product. Real-time systems are generally deadline-driven, and although OoO does introduce uncertainty to timing, the timescale makes it immaterial.

Realistically, media-frame-based systems just need each frame to be done within a certain number of ms, and networks have packet latency on the order of ms. A millisecond is huge when an OoO CPU is running at GHz speeds (1ms is 1000us, and at 1GHz, where each clock is 1ns, that's 1 million clocks). Just a guess, I've never made an "RT" CPU...

ddriver - Friday, February 19, 2016

The RT problem of desktop-grade CPUs is not that they are out of order, but the degree of abstraction required to support a user operating system. Programs run in user space, there are thousands of threads running on a few hardware threads, there is a lot of context switching, and it is all fairly coarse-grained, making such systems impractical for RT-critical scenarios.

Compared to that, OoO is minuscule and negligible. In this regard OoO is not obstructive to real-time applications but actually constructive, as it allows significantly higher computational throughput, so you can accomplish a lot more complex tasks while still meeting real-time constraints.

That being said, there are still some areas where the good old 8-bit AVR can't be beat: if timing is very, very critical and the application is very, very simple, the simplicity of an 8-bit AVR chip offers significantly better timing accuracy than a 32-bit ARM. Even when the ARM cores are in-order, they are still a lot more complex, harder to predict, and relatively higher-latency.

willis936 - Wednesday, February 17, 2016

Real time is often synonymous with deterministic. It means that tasks will be done on a regular interval every time. For example, if you need to send a packet every 50 us +/- 5 us, you can't use a regular operating system. As long as the processor is fast, it doesn't matter if the instructions are reordered as it executes them. All that matters is that operations don't wait for long periods of time. Even a maxed-out reorder buffer represents a relatively short period of time compared to most real-time applications.

Dmcq - Thursday, February 18, 2016

Yes, you're right, to a large extent it is about the maximum time. The main problem with using a normal processor for real time, though, is memory access: having virtual memory and loading both the address translation and the data. ARM's R and M series don't use virtual memory, and the Tightly-Coupled Memory gets rid of the major problem with accessing memory. Disk controllers would still have a lot of shared memory for caching the disk, but that would be a well-contained problem.

RobATiOyP - Thursday, February 18, 2016

The misprediction penalty is due to the pipeline. If you have some complex iterative processing, your loop will be very fast; then you fall out and the CPU stalls, but it'll still finish within a shorter worst-case time than a simpler non-superscalar processor that isn't interleaving instructions.

As others have said, it's about the maximum latency and that it be deterministic, so no virtual memory, where data may be cached or sitting on some HDD.

psychobriggsy - Thursday, February 18, 2016

Real time is usually defined against a metric - a deadline to do something when the interrupt comes in, 50 microseconds for example.

Here we have a CPU that runs at 1,500,000,000 Hz.

If your metric is "react within 1 microsecond" then you have 1500 cycles to deal with that interrupt. That would be pretty harsh (many would see it as a fun challenge) but more typically you'd want tens to hundreds of microseconds, and that's plenty of cycles to check data, signal something else to work on it, etc.

Think of the OoO aspects and the pipeline allowing higher clock speeds as gravy on top, rather than challenges, and it becomes more acceptable.
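The cycle budgets discussed in these comments follow from multiplying the clock rate by the deadline. The sketch below assumes the 1.5GHz peak clock quoted in the article and uses integer nanoseconds to avoid floating-point rounding; `cycle_budget` is a hypothetical helper, and the result is only an upper bound, since interrupt entry, pipeline flushes and memory stalls all eat into it.

```python
# Upper-bound cycle budget for a response deadline: cycles = f * t.
# Assumption: the 1.5GHz peak clock quoted for the Cortex-R8.
CLOCK_HZ = 1_500_000_000
NS_PER_S = 1_000_000_000


def cycle_budget(clock_hz: int, deadline_ns: int) -> int:
    """Hypothetical helper: whole clock cycles that fit inside the deadline.

    Integer arithmetic keeps the result exact (no float rounding).
    """
    return clock_hz * deadline_ns // NS_PER_S


for label, ns in [("1 us", 1_000), ("50 us", 50_000), ("1 ms", 1_000_000)]:
    print(f"{label:>5}: {cycle_budget(CLOCK_HZ, ns):,} cycles")
```

At 1.5GHz this gives 1,500 cycles for a 1 microsecond deadline, 75,000 for 50 microseconds and 1,500,000 for 1 millisecond; at 1GHz, a millisecond is 1,000,000 cycles.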

iwod - Thursday, February 18, 2016

I am starting to think the amount of complexity in 5G modems will be insane.

And why aren't these done on 20nm?

Andrei Frumusanu - Thursday, February 18, 2016

ARM disclosed that the Cortex-R8 will likely see implementations at 28, 16, 10 and even 7nm.