Fable Legends Early Preview: DirectX 12 Benchmark Analysis

by Ryan Smith, Ian Cutress & Daniel Williams on September 24, 2015 9:00 AM EST

Discussing Percentiles and Minimum Frame Rates

Up until this point we have only discussed average frame rates, which are easy to generate from a benchmark run. Minimum frame rates are trickier. One could argue that the minimum should be taken from the single worst frame, but then one infrequent bad GPU request (a misaligned texture cache access, for example) is enough to skew the result. Instead, thanks to the benchmark's logging functionality, we can report each run's full frame rate profile along with percentile figures.
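To illustrate why the single worst frame makes a fragile minimum, here is a minimal Python sketch using an invented frame-time log (not benchmark data): 999 frames at 16 ms plus one 100 ms hitch. The nearest-rank percentile method is our assumption; the article does not say how the percentile figures were computed, and `nearest_rank` and `to_fps` are hypothetical helper names.

```python
def nearest_rank(values, p):
    """Nearest-rank p-th percentile (p is an integer from 1 to 100)."""
    ordered = sorted(values)
    rank = max(1, (p * len(ordered) + 99) // 100)  # ceil(p * n / 100) in integer math
    return ordered[rank - 1]


def to_fps(frame_time_ms):
    """Convert a frame time in milliseconds to an instantaneous frame rate."""
    return 1000.0 / frame_time_ms


# Hypothetical log: 999 smooth 16 ms frames plus a single 100 ms hitch.
frame_times_ms = [16.0] * 999 + [100.0]

# Worst single frame: one hitch drags the "minimum" down to 10 fps.
min_fps = to_fps(max(frame_times_ms))

# 99th percentile frame time: the lone hitch falls outside it.
p99_fps = to_fps(nearest_rank(frame_times_ms, 99))

print(f"minimum: {min_fps:.1f} fps, 99th percentile: {p99_fps:.1f} fps")
```

Running it prints `minimum: 10.0 fps, 99th percentile: 62.5 fps`: one frame in a thousand sets the minimum, while the 99th percentile still reflects what the other 999 frames did.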

For the GTX 980 Ti and AMD Fury X, we pulled the 90th, 95th and 99th percentile data from the outputs and also plotted full graphs. The 90th percentile represents the frame rate (we'll stick to reporting frame rates to keep things simple) that a game meets or exceeds for 90% of its frames. The same logic applies to the 95th and 99th percentiles: these sit closer to the worst case (the maximum frame time, or minimum frame rate) but should be more consistent between runs than the single worst frame.
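The percentile definition above can be sketched in a few lines of Python. This is our own illustration, not the benchmark's tooling: it assumes the log provides per-frame render times in milliseconds and uses the nearest-rank method, sorting frame times fastest first so that the p-th percentile is the frame rate that p% of frames meet or exceed. `percentile_fps` and `percentile_profile` are hypothetical names, and the ten-frame log is invented.

```python
def percentile_fps(frame_times_ms, p):
    """Frame rate that p% of frames meet or exceed (nearest-rank method)."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    if not isinstance(p, int) or not 1 <= p <= 100:
        raise ValueError("p must be an integer from 1 to 100")
    ordered = sorted(frame_times_ms)          # fastest (shortest) frames first
    rank = (p * len(ordered) + 99) // 100     # ceil(p * n / 100) in integer math
    return 1000.0 / ordered[rank - 1]         # ms per frame -> frames per second


def percentile_profile(frame_times_ms):
    """Frame rate at every integer percentile, i.e. the curve behind a profile graph."""
    return {p: percentile_fps(frame_times_ms, p) for p in range(1, 101)}


# Hypothetical ten-frame log (invented numbers, not benchmark results).
log_ms = [10.0, 12.5, 16.0, 20.0, 25.0, 40.0, 50.0, 80.0, 100.0, 125.0]

for p in (90, 95, 99):
    print(f"{p}th percentile: {percentile_fps(log_ms, p):.1f} fps")
```

This prints 10.0 fps for the 90th percentile and 8.0 fps for both the 95th and 99th; with only ten frames the two higher percentiles land on the same slowest frame, whereas a real log with thousands of frames would separate them. By construction the profile never rises as p increases, so low percentiles correspond to the fastest frames and high percentiles to the slowest.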

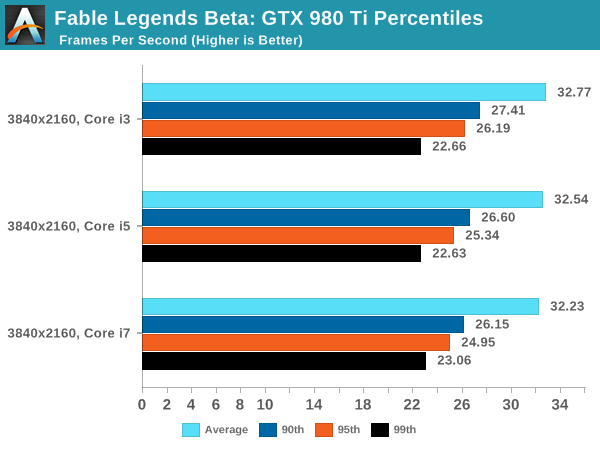

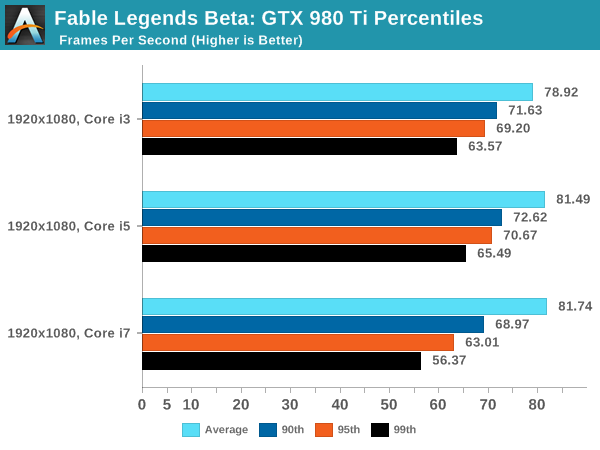

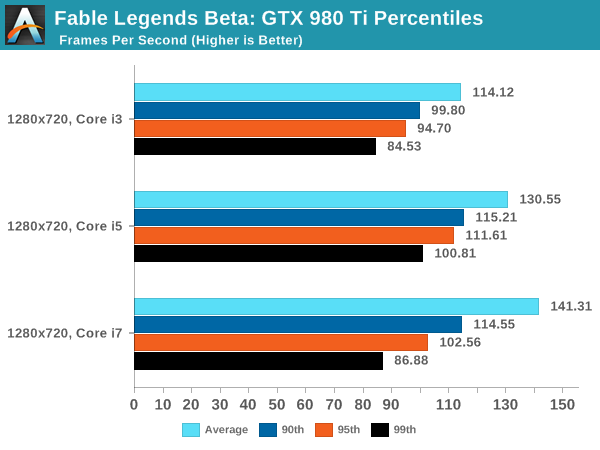

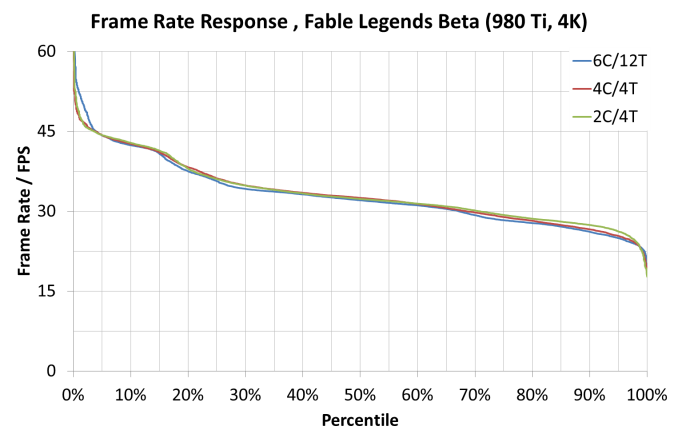

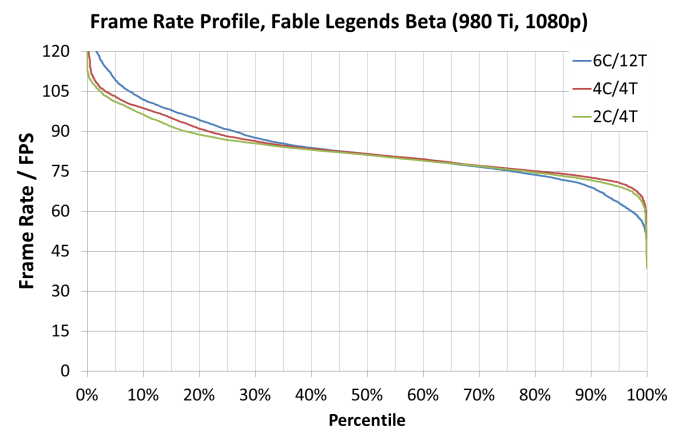

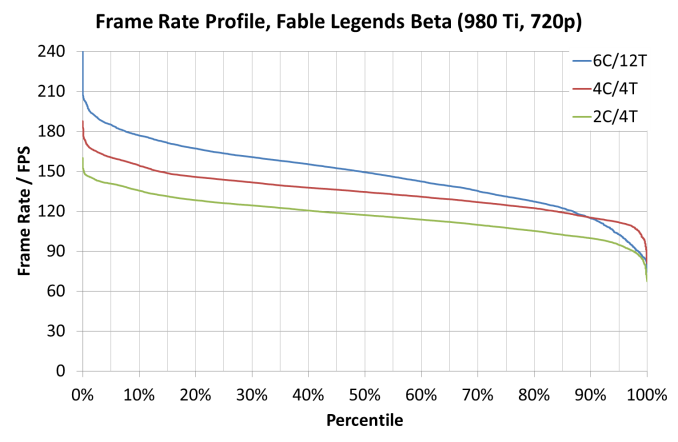

This page (and the next) is going to be data-heavy. Our analysis covers the effect of CPU scaling on percentile data for both GPUs at all three resolutions with all three CPUs. Starting with the GTX 980 Ti:

All three arrangements at 3840x2160 perform similarly, though frame rates regress slightly moving from the i3 to the i7 along most of the range, perhaps suggesting that the extra threads on the i7 introduce some overhead. The Core i7 does, however, appear to have the upper hand at the lowest percentiles (2% to 4%), where frame rates are highest.

At 1080p, the Core i7 delivers higher frame rates whenever the frame rate is above average, and we see some CPU scaling in simple scenes that render at high frame rates. For whatever reason, though, when the going gets tough the i7 seems to bottom out beyond the 80th percentile.

If we ever wanted a good representation of CPU scaling, the 720p graph is practically there: the expected ordering holds everywhere except from the 85th percentile upward, which makes the 90th, 95th and 99th percentile figures we pulled out of this region perhaps unrepresentative of the curve as a whole. The same issue may also affect the 1080p results.

141 Comments


anubis44 - Friday, October 30, 2015

The point is not whether you use DP; the point is that the circuitry is now missing, and that's why Maxwell uses less power. If I leave stuff out of a car, it'll be lighter, too. Hey look! No back seats anymore, and now it's LIGHTER! I'm a genius. It's not because nVidia whipped up a can of whoop-ass, or because they have magic powers; it's because they threw everything out of the airplane to make it lighter.

anubis44 - Friday, October 30, 2015

And left out the hardware-based scheduler, which will bite them in the ass in a lot of DX12 games that will need it. No WAIT! nVidia isn't screwed! They'll just sell ANOTHER card to the nVidiots who JUST bought one that was obsolete, 'cause nVidia is ALWAYS better!

Alexvrb - Thursday, September 24, 2015

Not every game uses every DX12 feature, and knowing that their game is going to run on a lot of Nvidia hardware makes developers conservative in their use of new features that hurt performance on Nvidia cards. For example, as long as developers are careful with async compute and you've got plenty of CPU cycles, I think everything will be fine.

Now, look at the 720p results. Why the change in the pecking order? Why do AMD cards increase their lead as CPU power falls? Is it a driver overhead issue, possibly related to async shader concerns? We don't know. Either way it might not matter; an early benchmark isn't necessarily representative of the final game, let alone of real-world experience.

In the end it will depend on the individual game. I don't think most developers are going to push features really hard that kill performance on a large portion of cards... well, not unless they get free middleware tools and marketing cash or something. ;)

cityuser - Sunday, September 27, 2015

Quite sure it's Nvidia again doing some nasty work with the game company to degrade the performance of AMD cards!!! Look at where Nvidia cannot corrupt: Futuremark's benchmark tells another story!!!

Drumsticks - Thursday, September 24, 2015

As always, it's only one data point. It was too early to declare AMD a winner then, but it's still too early to say they aren't actually going to benefit more from DX12 than Nvidia. We need more data to say for sure either way.

geniekid - Thursday, September 24, 2015

That's crazy talk.

Beararam - Thursday, September 24, 2015

Maybe not "vastly superior", but the gains on the 390X seem to be greater than those realized on the 980. Time will tell.

https://youtu.be/_AH6pU36RUg?t=6m29s

justniz - Thursday, September 24, 2015

Such a large gain only on AMD just from DX12 (i.e. accessing the GPU at a lower level and bypassing AMD's DX11 driver implementation) is yet more evidence that AMD's DX11 drivers are much more of a bottleneck than Nvidia's.

Gigaplex - Thursday, September 24, 2015

That part was pretty obvious. The current question is how much of a bottleneck. Will DX12 be enough to put AMD in the lead (once final code starts shipping), or just let it catch up?

lefty2 - Thursday, September 24, 2015

I wonder if they were pressured not to release any benchmark that would make Nvidia look bad, similar to the way they were with Ashes of the Singularity.