Fable Legends Early Preview: DirectX 12 Benchmark Analysis

by Ryan Smith, Ian Cutress & Daniel Williams on September 24, 2015 9:00 AM EST

CPU Scaling

When it comes to how well a game scales with a processor, DirectX 12 is somewhat of a mixed bag, for two reasons. First, it allows GPU commands to be issued by each CPU core, which removes the single-core performance limit that hindered a number of DX11 titles and helps configurations with fewer cores or lower clock speeds. On the other side of the coin, because it allows all the threads in a system to issue commands, it can pile on the work during heavy scenes, moving the cliff edge for high-powered cards further down the line or making the visual effects at the high end very impressive, which is perhaps something benchmarking like this won’t capture.
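As a rough illustration of the multi-core command submission described above, here is a conceptual sketch in Python. It is not the Direct3D 12 API: `record_command_list` and `build_frame` are hypothetical names, and the "commands" are plain strings. It only models the idea that several workers each record their own list and the lists are then submitted in a fixed order.

```python
from concurrent.futures import ThreadPoolExecutor


def record_command_list(draw_calls):
    """Stand-in for one worker recording its own command list (hypothetical)."""
    return [f"draw({call})" for call in draw_calls]


def build_frame(draw_calls, worker_count):
    """Split a frame's draw calls into chunks, record each chunk on its own
    worker, then concatenate the lists in their original order."""
    # Ceiling division, with a floor of 1 so an empty frame does not break range().
    chunk_size = max(1, -(-len(draw_calls) // worker_count))
    chunks = [draw_calls[i:i + chunk_size]
              for i in range(0, len(draw_calls), chunk_size)]
    with ThreadPoolExecutor(max_workers=worker_count) as pool:
        # map() returns results in input order, whichever worker finishes first.
        command_lists = list(pool.map(record_command_list, chunks))
    return [command for cl in command_lists for command in cl]


if __name__ == "__main__":
    print(build_frame(list(range(6)), worker_count=3))
```

In this model, a heavier scene simply means more draw calls to divide among the workers, which is why extra threads can both help and invite more work.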

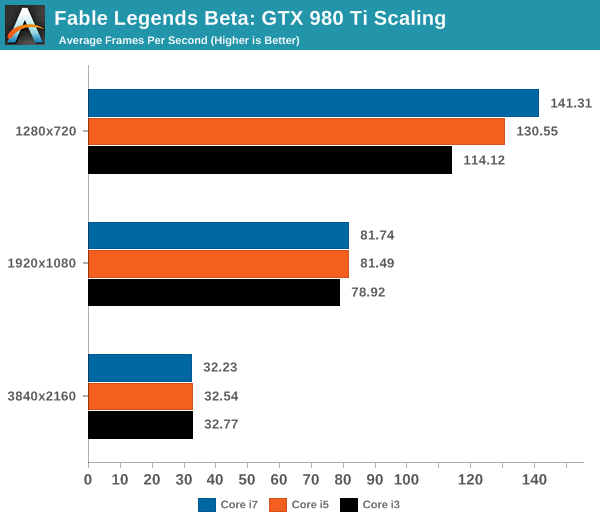

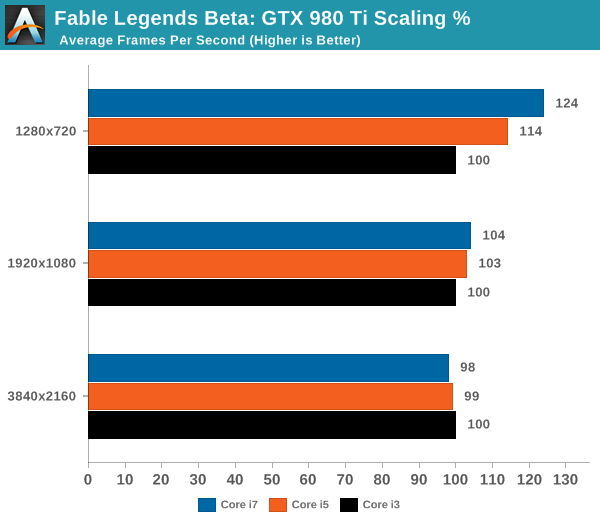

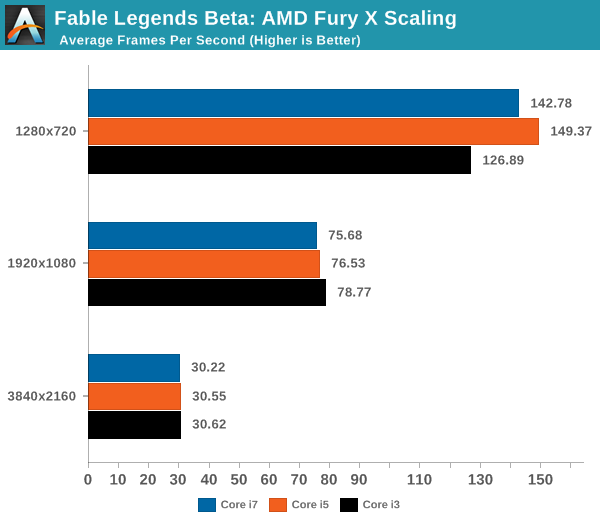

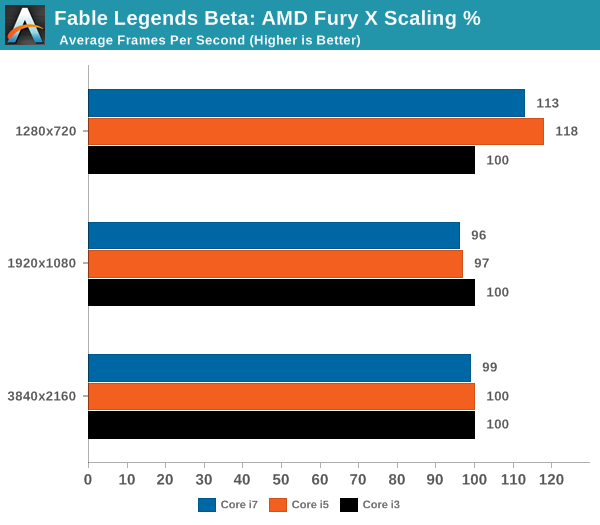

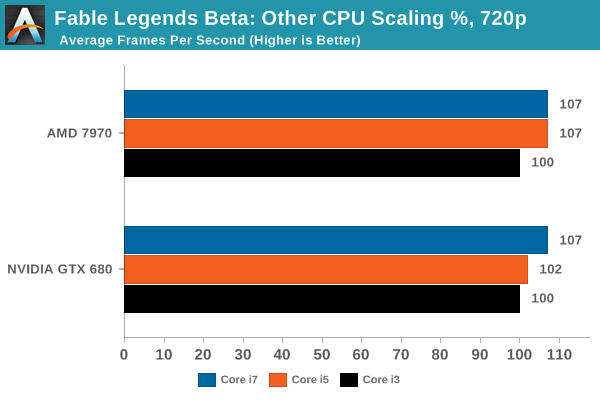

For our CPU scaling tests, we took the two high end cards tested and placed them in each of our Core i7 (6C/12T), Core i5 (4C/4T) and Core i3 (2C/4T) environments, at three different resolution/setting configurations similar to the previous page, and recorded the results.

Looking solely at the GTX 980 Ti to begin, we see that for now the Fable benchmark only scales with the CPU at the low resolution and graphics quality. Moving up to 1080p or 4K gives similar performance no matter what the processor; there is perhaps even a slight decrease at 4K, but this is well within a 2% variation.
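One way to make a "within 2%" judgment like this concrete is to compare the spread of average frame rates across the three CPUs against a noise threshold. The sketch below assumes variation is measured as (max - min) / mean, which is our assumption and not necessarily how the figure above was derived; the function names and the example frame rates are hypothetical.

```python
def relative_spread(frame_rates):
    """Return (max - min) / mean for a list of average frame rates."""
    mean = sum(frame_rates) / len(frame_rates)
    return (max(frame_rates) - min(frame_rates)) / mean


def scales_with_cpu(frame_rates, noise_threshold=0.02):
    """Treat results as CPU-sensitive only if the spread exceeds the noise threshold."""
    return relative_spread(frame_rates) > noise_threshold


if __name__ == "__main__":
    # Hypothetical Core i3 / i5 / i7 averages, not measured data.
    print(scales_with_cpu([60.2, 60.8, 60.5]))
    print(scales_with_cpu([80.0, 100.0, 120.0]))
```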

On the Fury X, the tale is similar and yet stranger. The Fable benchmark is canned, so it should be running the same data each time, but in all three circumstances the Core i7 trails behind the Core i5. Perhaps in this instance there are too many threads on the processor contending for bandwidth, giving some slight cache pressure (one wonders if some eDRAM might help). But again we see no real scaling improvement moving from Core i3 to Core i7 in our 1920x1080 and 3840x2160 tests.

As we’ve seen in previous reviews, the effects of CPU scaling with regard to resolution depend on both the CPU architecture and the GPU architecture, with each GPU manufacturer performing differently and two different models in the same silicon family also differing in scaling results. To that end, we actually see a boost at 1280x720 with the AMD 7970 and the GTX 680 when moving from the Core i3 to the Core i7.

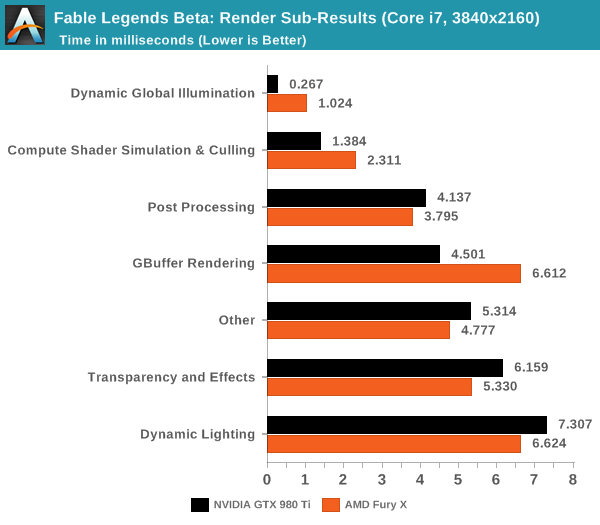

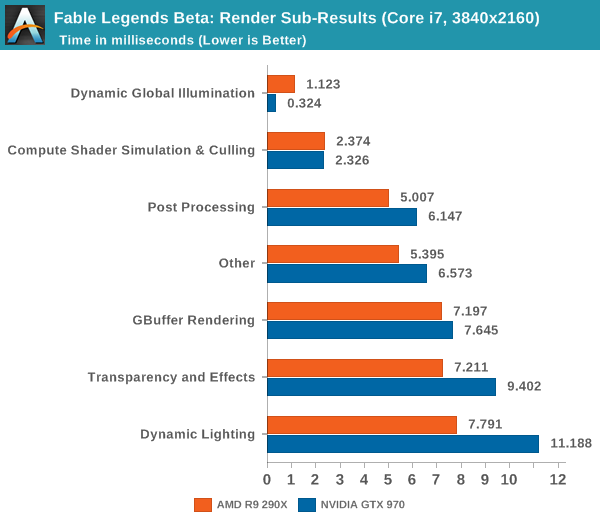

If we look at the rendering time breakdown between GPUs on high end configurations, we get the following data. Numbers here are listed in milliseconds, so lower is better:

Looking at the GTX 980 Ti and Fury X, we see that NVIDIA is significantly faster at GBuffer rendering, Dynamic Global Illumination, and Compute Shader Simulation & Culling. Meanwhile AMD pulls narrower leads in every other category, including the ambiguous 'other'.

Dropping down a couple of tiers with the GTX 970 and R9 290X, we see some minor variations. The R9 290X has good leads in dynamic lighting, and 'other', with smaller leads in Compute Shader Simulation & Culling and Post Processing. The GTX 970 benefits on dynamic global illumination significantly.

What do these numbers mean? Overall it appears that NVIDIA has a strong hold on deferred rendering and global illumination and AMD has benefits with dynamic lighting and compute.
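For readers more used to frame rates than render times, the per-pass milliseconds can be related to frames per second and to each pass's share of the frame. This is a minimal sketch that assumes the passes run back to back, so their times simply add up to the frame time; the function names and the pass timings in the example are hypothetical, not figures from the benchmark.

```python
def fps_from_frame_time_ms(frame_time_ms):
    """Convert a total frame time in milliseconds to frames per second."""
    return 1000.0 / frame_time_ms


def pass_shares(pass_times_ms):
    """Return each pass's fraction of the summed render time."""
    total = sum(pass_times_ms.values())
    return {name: time_ms / total for name, time_ms in pass_times_ms.items()}


if __name__ == "__main__":
    # Hypothetical per-pass timings in milliseconds.
    example = {"GBuffer": 2.0, "Dynamic Lighting": 4.0, "Other": 2.0}
    print(fps_from_frame_time_ms(sum(example.values())))
    print(pass_shares(example))
```

Because lower milliseconds are better, a lead of a fraction of a millisecond in one pass only matters in proportion to that pass's share of the whole frame.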

141 Comments


tackle70 - Thursday, September 24, 2015 - link

Nice article. Maybe tech forums can now stop with the "AMD will be vastly superior to Nvidia in DX12" nonsense.

cmdrdredd - Thursday, September 24, 2015 - link

Leads me to believe more and more that Stardock is up to shenanigans just a bit or that not every game will use certain features that DX12 can perform and Nvidia is not held back in those games.

Jtaylor1986 - Thursday, September 24, 2015 - link

I'd say Ashes is a far more representative benchmark. What is the point of doing a landscape simulator benchmark. This demo isn't even trying to replicate real world performance

cmdrdredd - Thursday, September 24, 2015 - link

Are you nuts or what? This is a benchmark of the game engine used for Fable Legends. It's as good a benchmark as any when trying to determine performance in a specific game engine.

Jtaylor1986 - Thursday, September 24, 2015 - link

Except its completely unrepresentative of actual gameplay unless this grass growing simulator.

Jtaylor1986 - Thursday, September 24, 2015 - link

"The benchmark provided is more of a graphics showpiece than a representation of the gameplay, in order to show off the capabilities of the engine and the DX12 implementation. Unfortunately we didn't get to see any gameplay in this benchmark as a result, which would seem to focus more on combat."

LukaP - Thursday, September 24, 2015 - link

You dont need gameplay in a benchmark. you need the benchmark to display common geometry, lighting, effects and physics of an engine/backend that drives certain games. And this benchmark does that. If you want to see gameplay, there are many terrific youtubers who focus on that, namely Markiplier, NerdCubed, TotalBiscuit and others

Mr Perfect - Thursday, September 24, 2015 - link

Actual gameplay is still important in benchmarking, mainly because that's when framerates usually tank. An empty level can get fantastic FPS, but drop a dozen players having an intense fight into that level and performance goes to hell pretty fast. That's the situation where we hope to see DX12 outshine DX11.

Stuka87 - Thursday, September 24, 2015 - link

Wrong, a benchmark without gameplay is worthless. Look at Battlefield 4 as an example. Its built in benchmarks are worthless. Once you join a 64 player server, everything changes. This benchmark shows how a raw engine runs, but is not indicative of how the game will run at all.

Plus its super early in development with drivers that stil need work, which the article states that AMD's driver arrived too late.

inighthawki - Thursday, September 24, 2015 - link

Yes, but when the goal is to show improvements in rendering performance, throwing someone into a 64 player match completely skews the results. The CPU overhead of handling a 64 player multiplayer match will far outweigh to small changes in CPU overhead from a new rendering API.