The Intel Skylake i7-6700K Overclocking Performance Mini-Test to 4.8 GHz

by Ian Cutress on August 28, 2015 2:30 PM EST

Gaming Benchmarks: Integrated Graphics Overclocked

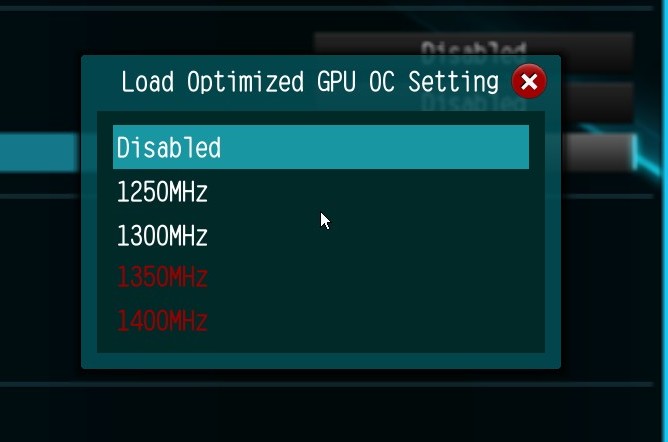

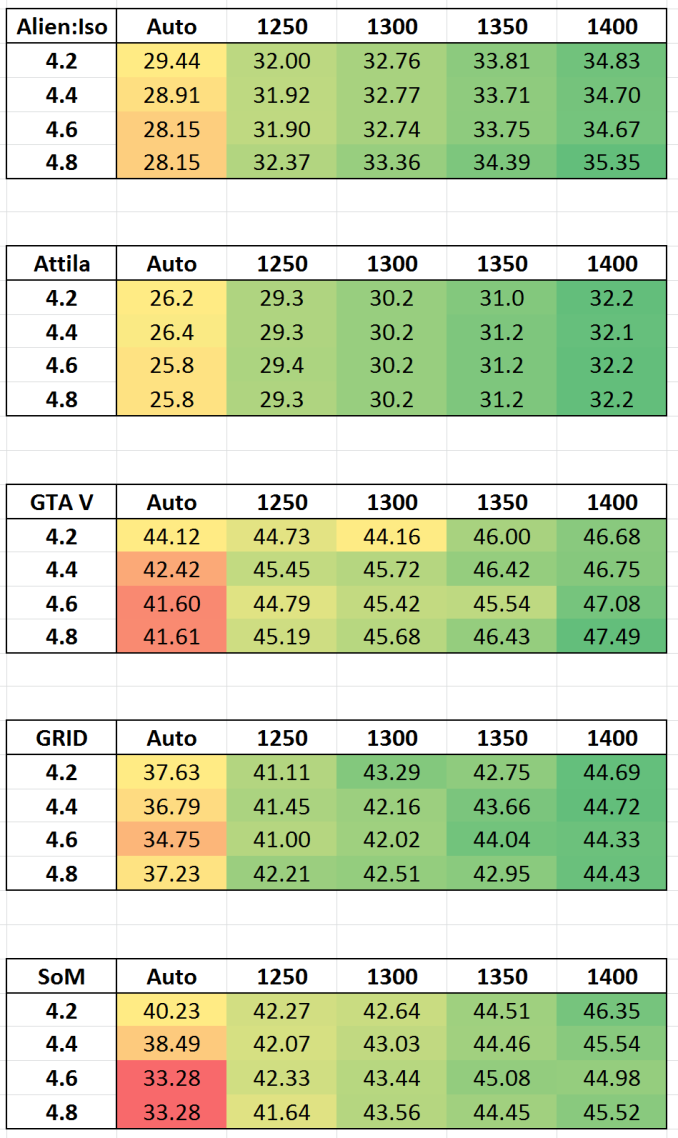

Given the disappointing results on the Intel HD 530 graphics when the processor was overclocked, we turned the tables and designed a matrix of both CPU and IGP overclocks to test in our graphics suite. For this we still take the i7-6700K from 4.2 GHz to 4.8 GHz, but also adjust the integrated graphics from 'Auto' to 1250, 1300, 1350 and 1400 MHz, as per the automatic overclock options found on the ASRock Z170 Extreme7+.

Technically, Auto should default to 1150 MHz, in line with what Intel has published as the maximum speed. However, the results on the previous page show that this is more of a see-saw operation when it comes to power distribution within the processor. With any luck, explicitly setting the integrated graphics frequency should maintain that frequency throughout the benchmarks. With the CPU overclocks as well, we can see how integrated graphics performance scales with added CPU frequency.

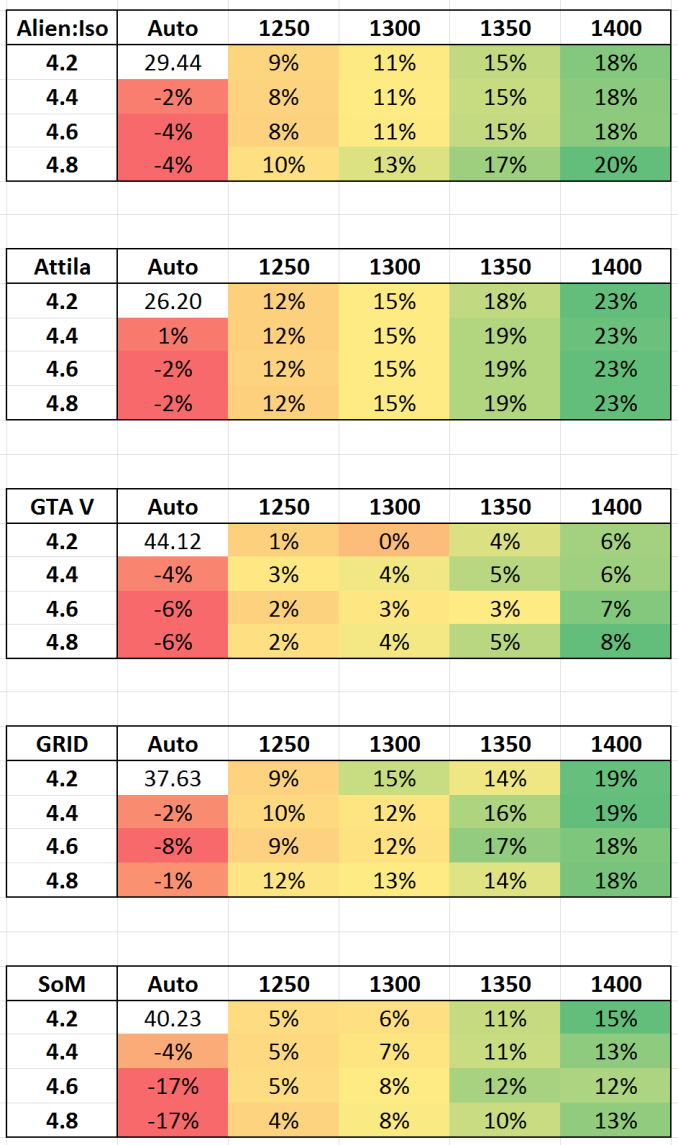

Results for these benchmarks will be provided in matrix form, both as absolute numbers and as a percentage compared to the 4.2 GHz CPU + Auto IGP reference value. Test settings are the same as the previous set of data for IGP.
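As a rough illustration of how the percentage matrix relates to the absolute one, the sketch below divides every cell by the 4.2 GHz / Auto reference cell. The function name `percent_deviation`, the dictionary layout and the frame rates are hypothetical placeholders chosen for the example, not measured results.

```python
def percent_deviation(results, reference_key=(4.2, "Auto")):
    """Return each result as a percentage deviation from the reference cell.

    `results` maps (cpu_ghz, igp_setting) -> average frames per second.
    """
    reference = results[reference_key]
    return {
        key: round((fps / reference - 1.0) * 100.0, 1)
        for key, fps in results.items()
    }


# Placeholder frame rates for illustration only (NOT measured data).
example = {
    (4.2, "Auto"): 40.0,
    (4.2, 1250): 44.0,
    (4.8, "Auto"): 38.0,
    (4.8, 1400): 50.0,
}

for key, deviation in percent_deviation(example).items():
    print(key, f"{deviation:+.1f}%")
```

A positive value means the configuration is faster than the 4.2 GHz / Auto reference, and a negative value means it is slower.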

Absolute numbers

Percentage Deviation from 4.2 GHz / Auto

Conclusions on Overclocking the IGP

It becomes pretty clear that fixing the frequency of the integrated graphics, for the most part, removes the detrimental effect of overclocking the processor, or at least reduces the performance loss to the point where it falls within standard error. On three of the games, fixing the integrated graphics to 1250 MHz nets a ~10% boost in performance, which for titles like Attila extends to 23% at 1400 MHz. By contrast, GTA V shows only a small gain, indicating that we are perhaps limited in other ways.
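For context, the nominal clock increase over the 1150 MHz that Intel publishes can be worked out directly. The sketch below is only arithmetic on the frequencies listed in this article. It assumes Auto actually holds 1150 MHz, which the previous page suggests it does not always do, so the figures are a nominal comparison rather than a measurement. The helper name `clock_gain_percent` is hypothetical.

```python
BASE_MHZ = 1150  # Intel's published maximum IGP frequency (nominal 'Auto')
IGP_STEPS_MHZ = [1250, 1300, 1350, 1400]  # IGP settings tested in this article


def clock_gain_percent(target_mhz, base_mhz=BASE_MHZ):
    """Percentage increase of target_mhz over base_mhz."""
    return (target_mhz / base_mhz - 1.0) * 100.0


for mhz in IGP_STEPS_MHZ:
    print(f"{mhz} MHz: {clock_gain_percent(mhz):+.1f}% over {BASE_MHZ} MHz")
```

Under that assumption, 1250 MHz is about 8.7% above 1150 MHz and 1400 MHz is about 21.7% above it. The ~10% and 23% gains quoted above are slightly larger than clock scaling alone would give, which would be consistent with Auto not holding 1150 MHz under load.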

103 Comments


Oxford Guy - Tuesday, September 1, 2015 - link

(says the guy with a name like Communism)

HollyDOL - Sunday, August 30, 2015 - link

Well, you might have a point with something here. Even though eye itself can take information very fast and optical nerve itself can transmit them very fast, the electro-chemical bridge (Na-K bridge) between them needs "very long" time before it stabilises chemical levels to be able to transmit another impulse between two nerves. Afaic it takes about 100ms to get the levels back (though I currently have no literature to back that value up) so the next impulse can be transmitted. I suspect there are multitudes of lanes so they are being cycled to get better "frame rate" and other goodies that make it up (tunnel effect for example - narrow field of vision to get more frames with same bandwidth?)...Actually I would like to see a science based article on that topic that would make things straight on this play field. Maybe AT could make an article (together with some opthalmologist/neurologist) to clear that up?

Communism - Monday, August 31, 2015 - link

All latencies between the input and the output directly to the brain add up.

Any deviation on top of that is an error rate that is added on top of that.

Your argument might as well be "Light between the monitor and your retina is very fast traveling, so why would it matter?"

One must take everything into consideration when talking about latency and temporal error.

qasdfdsaq - Wednesday, September 2, 2015 - link

Not to mention, even the best monitors have more than 2ms variance in response time depending on what colours they're showing.

Nfarce - Sunday, August 30, 2015 - link

As one who has been building gaming rigs and overclocking since the Celeron 300->450MHz days of the late 1990s, I'd +1 that. Over the past 15+ years, every new build I did with a new chipset (about every 2-3 years) has shown a diminished return in overclock performance for gaming. And my resolutions have increased over that period as well, further putting more demand on the GPU than CPU (going from 1280x1024 in 1998 to 1600x1200 in 2001 to 1920x1080 in 2007 to 2560x1440p in 2013). So here I am today with an i5 4690K which has successfully been gamed on at 4.7GHz, yet I'm only running it at stock speed because there is ZERO improvement on frames in my benchmarked games (Witcher III, BF4, Crysis 3, Alien Isolation, Project Cars, DiRT Rally). It's just a waste of power and heat and wear and tear. I will overclock it however when running video editing software and other CPU-intensive apps which noticeably helps.

TallestJon96 - Friday, August 28, 2015 - link

Scalling seems pretty good, I'd love to see analysis on the i5-6600k as well.

vegemeister - Friday, August 28, 2015 - link

Not stable for all possible inputs == not stable. And *especially* not stable when problematic inputs are present in production software that actually does something useful.

Beaver M. - Saturday, August 29, 2015 - link

Exactly. Fact of the matter is that proper overclocking takes a LONG LONG time to get stable, unless you get extremely lucky. I sometimes spend months to get it stable. Even when testing with Prime95 like theres no tomorrow, it still wont prove that the system is 100% stable. You also have to test games for hours for several days and of course other applications. But you cant really play games 24/7, so it takes quite some time.

sonny73n - Sunday, August 30, 2015 - link

If you have all power saving features disabled, you only have to worry about stability under load. Otherwise, as CPU voltage and frequency fluctuate depend on each application, it maybe a pain. Also most mobos have issues with RAM together with CPU OCed to certain point.

V900 - Saturday, August 29, 2015 - link

Thats an extremely theoretical definition of "production software".

No professional or production company would ever overclock their machines to begin with.

For the hobbyist overclocker who on a rare occasion needs to encode something in 4K60, the problem is solved by clicking a button in his settings and rebooting.

I really don't see the big deal here.