The Intel Skylake i7-6700K Overclocking Performance Mini-Test to 4.8 GHz

by Ian Cutress on August 28, 2015 2:30 PM EST

Conclusions

The how, what and why questions that surround overclocking often result in answers that either confuse or dazzle, depending on the mind-set of the user listening. At the end of the day, overclocking originated from trying to get extra performance for nothing. Buying a low-end, cheaper processor and changing a few settings (or an onboard timing crystal) would result in the same performance as a more expensive model. When we were dealing with single core systems, the speed increase was immediate. With dual core platforms there was a noticeable difference as well, and overclocking gave the same performance as a high-end component. This was particularly noticeable in games, which would have CPU bottlenecks due to single/dual core design. However, in recent years this has changed.

Intel sells mainstream processors in both dual and quad core flavors, each with a subset that enables hyperthreading and some other distinctions. This affords five platforms – Celeron, Pentium, i3, i5 and i7, going from weakest to strongest. Overclocking is now reserved solely for the most extreme i5 and i7 processors. Overclocking in this sense now means taking the highest performance parts even further, and there is no recourse to go from low end to high end – extra money has to be spent in order to do so.

As an aside, in 2014 Intel released the Pentium G3258, an overclockable dual core processor without hyperthreading. When we tested it, it overclocked to a nice high frequency and performed as expected in single threaded workloads. However, a dual core processor is not a quad core, and even a +50% increase in frequency cannot make up for the +100% or +200% increase in threads offered by the high-end i5 or i7 processors. With software and games now taking advantage of multiple cores, having too few cores is the bottleneck, not frequency. Unfortunately you cannot graft on extra silicon as easily as pressing a few buttons.
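The frequency-versus-cores trade-off can be sketched with a simple Amdahl-style model. This is only an illustration under stated assumptions: the 4.8 GHz dual core and 3.5 GHz quad core figures are hypothetical, the function name is made up for this example, and the model ignores hyperthreading, IPC differences, caches and memory.

```python
def relative_throughput(cores, freq_ghz, parallel_fraction):
    """Idealized throughput relative to one core at 1 GHz.

    Amdahl's law gives the speedup from extra cores; frequency is
    assumed to scale performance linearly (a simplification).
    """
    serial = 1.0 - parallel_fraction
    speedup = 1.0 / (serial + parallel_fraction / cores)
    return freq_ghz * speedup


# Hypothetical parts: a dual core pushed +50% to 4.8 GHz
# versus a quad core left at an assumed 3.5 GHz.
for p in (0.0, 0.5, 0.9, 1.0):
    dual = relative_throughput(2, 4.8, p)
    quad = relative_throughput(4, 3.5, p)
    winner = "dual" if dual > quad else "quad"
    print(f"parallel fraction {p:.1f}: dual={dual:.2f} quad={quad:.2f} -> {winner}")
```

In this toy model the overclocked dual core only stays ahead while the workload is mostly serial; once most of the work can be spread across threads, the quad core wins despite the lower clock.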

One potential avenue is to launch an overclockable i3 processor, using a dual core with hyperthreading, which might play on par with an i5 even though it relies on hyperthreads rather than actual cores. But if it performed, it might draw sales away from the high end overclocking processors, and Intel does not have competition in this space, so I doubt we would see it any time soon.

But what exactly does overclocking the highest performing processor actually achieve? Our results, including all the ones in Bench not specifically listed in this piece, show improvements across the board in all our processor tests.

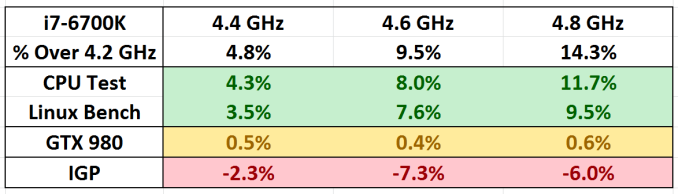

Here we get three very distinct categories of results. The move of +200 MHz rounds to about a 5% jump in frequency, and in our CPU tests the gain is nearer 4% for each step up, and slightly less in our Linux Bench. In both of these there were benchmarks that brought the average down due to other bottlenecks in the system: Photoscan Stage 2 (the complex multithreaded stage) was variable, and in Linux Bench both NPB and Redis-1 gave results that were more DRAM limited. Remove these and the results get closer to the true % gain.
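The arithmetic behind the "about 5%" figure can be checked with a short sketch. The 4.2 GHz starting point and the list of steps are assumptions made for illustration (only the +200 MHz step size and the 4.8 GHz ceiling come from the article), and the 4% measured gain is the approximate figure quoted above rather than a precise result.

```python
def step_gains(freqs_mhz):
    """Ideal percentage frequency gain of each step over the previous one."""
    return [100.0 * (b - a) / a for a, b in zip(freqs_mhz, freqs_mhz[1:])]


def scaling_efficiency(measured_pct, ideal_pct):
    """Fraction of the ideal frequency gain that shows up as performance."""
    return measured_pct / ideal_pct


# Assumed overclocking steps in MHz (the starting point is hypothetical).
freqs = [4200, 4400, 4600, 4800]
measured_gain_pct = 4.0  # approximate per-step gain quoted in the text

for freq, ideal in zip(freqs[1:], step_gains(freqs)):
    eff = scaling_efficiency(measured_gain_pct, ideal)
    print(f"-> {freq} MHz: ideal +{ideal:.2f}%, efficiency {eff:.0%}")
```

Because each +200 MHz step is taken from a higher base, the ideal gain shrinks slightly with every step, so a steady measured gain of around 4% corresponds to a scaling efficiency that creeps upward under these assumptions.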

Meanwhile, all of our i7-6700K overclocked results are now also available in Bench, allowing direct comparison to other processors. Other CPUs when overclocked will be updated in due course.

Moving on to our discrete testing on a GTX 980, increased frequency had little impact on our series of games at 1080p, or even on Shadow of Mordor (SoM) at 4K. Some might argue that this is to be expected, because at high settings the onus is more on the graphics card – but ultimately with a GTX 980 you would be running at 1080p or better at maximum settings where possible.

Finally, the integrated graphics results are a significantly different ball game. When we left the IGP at default frequencies and just overclocked the processor, the results showed a decline in average frame rates despite the higher frequency, which is perhaps counterintuitive. The explanation lies in power delivery budgets: when overclocked, the majority of the power pushes through to the CPU and items are processed quicker. This leaves less of a power budget within the silicon for the integrated graphics, resulting either in lower graphics frequencies to maintain the status quo, or in the increase in graphical data moving over the DRAM-to-CPU bus causing a memory latency bottleneck. Think of it like a see-saw: when you push harder on the CPU side, the IGP side ends up lower. Normally this would be mitigated by increasing the power limit on the processor as a whole in the BIOS; however, in this case this had no effect.
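The see-saw analogy can be illustrated with a toy model of a shared power budget. Everything here is hypothetical: the function, the wattages, the linear power-per-MHz relationship and the graphics clock ceiling are invented for illustration and are not measurements from this test. It also covers only the first of the two explanations offered above (lower graphics frequencies), not the memory latency one.

```python
def igp_frequency(package_budget_w, cpu_power_w, igp_max_mhz, igp_w_per_mhz):
    """Graphics clock the leftover power budget can sustain (toy model).

    Assumes graphics power scales linearly with frequency, which is a
    deliberate simplification.
    """
    remaining_w = max(package_budget_w - cpu_power_w, 0.0)
    return min(igp_max_mhz, remaining_w / igp_w_per_mhz)


BUDGET_W = 90.0        # hypothetical shared package power budget
IGP_MAX_MHZ = 1150.0   # hypothetical graphics clock ceiling
IGP_W_PER_MHZ = 0.02   # hypothetical graphics power cost per MHz

# Hypothetical CPU power draw at stock and at two overclocks.
for label, cpu_w in (("stock", 60.0), ("mild OC", 70.0), ("high OC", 78.0)):
    mhz = igp_frequency(BUDGET_W, cpu_w, IGP_MAX_MHZ, IGP_W_PER_MHZ)
    print(f"{label:8s} CPU {cpu_w:.0f} W -> IGP up to {mhz:.0f} MHz")
```

In this sketch the graphics clock holds at its ceiling until the CPU's share of the budget grows large enough, after which every extra watt spent on the CPU comes directly out of the graphics frequency.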

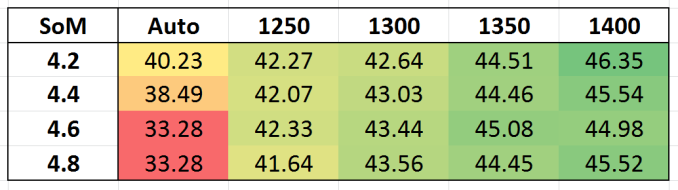

When we fixed the integrated graphics frequencies, however, this issue disappeared.

Taking Shadow of Mordor as the example, raising the graphics frequency using the presets provided on the ASRock motherboard not only gave a boost in performance, but the issue of balancing power between the processor and the graphics also disappeared, and our results were within expected variance.

103 Comments


bill.rookard - Friday, August 28, 2015 - link

I wonder if not having the FIVR on-die has to do with the difference between the Haswell voltage limits and the Skylake limits?

Communism - Friday, August 28, 2015 - link

Highly doubtful, as Ivy Bridge has relatively the same voltage limits.

Morawka - Saturday, August 29, 2015 - link

yea thats a crazy high voltage.. that was even high for 65nm i7 920's

kuttan - Sunday, August 30, 2015 - link

i7 920 is 45nm not 65nm

Cellar Door - Friday, August 28, 2015 - link

Ian, so it seems like the memory controller - even though capable of driving DDR4 to some insane frequencies - seems to error out with large data sets?

It would be interesting to see this behavior with Skylake and DDR3L.

Also it would be interesting to see if the i5-6600K, lacking hyperthreading, would run into the same issues.

Communism - Friday, August 28, 2015 - link

So your sample definitively wasn't stable above 4.5ghz after all then.......

Haswell/Broadwell/Skylake dud confirmed. Waiting for Skylake-E where the "reverse hyperthreading" will be best leveraged with the 6/8 core variant with proper quad channel memory bandwidth.

V900 - Friday, August 28, 2015 - link

Nope, it was stable above 4.5 Ghz...

And no dud confirmed in Broadwell/Skylake.

There is just one specific scenario (4K/60 encoding) where the combination of the software and the design of the processor makes overclocking unfeasible.

Not really a failure on Intels part, since it's not realistic to expect them to design a mass-market CPU according to the whims of the 0.5% of their customers who overclock.

Gigaplex - Saturday, August 29, 2015 - link

If you can find a single software load that reliably works at stock settings, but fails at OC, then the OC by definition is not 100% stable. You might not care and are happy to risk using a system configured like that, but I sure as hell wouldn't.

Oxford Guy - Saturday, August 29, 2015 - link

Exactly. Not stable is not stable.

HollyDOL - Sunday, August 30, 2015 - link

I have to agree... While we are not talking about server stable with ECC and things, either you are rock stable on desktop use or not stable at all. Already failing on one of the test scenarios is not good at all. I wouldn't be happy if there were some hidden issues occurring during compilations, or after a few hours of rendering a scene... or, let's be honest, in the middle of a gaming session with my online guild. As such I am running my 2500k half a GHz lower than what stability testing showed as errorless. Maybe it's excessive, but I like to be on the safe side with my OC, especially since the machine is used for a wide variety of purposes.