The Mobile CPU Core-Count Debate: Analyzing The Real World

by Andrei Frumusanu on September 1, 2015 8:00 AM EST - Posted in

- Smartphones

- CPUs

- Mobile

- SoCs

Overall Analysis & Conclusion

Hopefully we've managed to cover some of the more common use-cases routinely encountered in daily Android usage, and to get a good idea of how applications behave. Some use-cases produced quite expected numbers, but we also stumbled upon some very large surprises that weren't at all obvious.

When I started this piece, my goal was to either confirm or debunk how useful homogeneous 8-core designs would be in the real world. The fact that Chrome, and to a lesser extent Samsung's stock browser, were able to consistently load up to 6-8 concurrent processes while loading a page lends a lot of credence to these 8-core designs, which we otherwise would not have expected to fully use their CPU configurations. In terms of pure computational load, web-page rendering remains one of the heaviest tasks on a smartphone, so it's very encouraging to see that today's web rendering engines are able to make good use of parallelization to spread the load between the available CPU cores.

It's hard to summarize the vast amount of data from the last 16 pages in an orderly and correct manner. After all, we are talking about extremely varied use-cases and time-scales for each scenario. While averaging the metrics over the course of a scenario might seem like a good idea at first, one has to keep in mind that an average can't properly represent cases where load peaks for short durations. It's these short computational bursts that are most often the cause of "lags" and frame-drops. So to better represent the bottlenecks that determine user-visible application speed and performance, we instead use the 90th percentile of the CPU run-queue depths:

90th Percentile Run-Queue Depth Averages

| Scenario | Little Cluster | Big Cluster | Little + Big Clusters |
|---|---|---|---|
| S-Browser - AnandTech Article | 2.27 | 2.19 | 3.87 |
| S-Browser - AnandTech FP | 3.12 | 1.25 | 4.15 |
| Chrome - AnandTech FP | 5.69 | 1.84 | 7.10 |
| Chrome - BBC Frontpage | 5.00 | 2.00 | 6.22 |
| Hangouts Launch | 2.77 | 2.11 | 4.01 |
| Hangouts Writing A Message | 2.80 | 0.05 | 2.57 |
| Reddit Sync Launch | 1.84 | 1.11 | 2.38 |
| Reddit Sync Scrolling | 0.95 | 1.03 | 1.46 |
| Play Store Open & Scroll | 2.87 | 0.78 | 3.45 |
| Play Store App Updates | 3.73 | 5.42 | 8.51 |
| Camera: Launch | 1.45 | 2.73 | 2.98 |
| Camera: Still Snapshot | 4.12 | 0.87 | 4.59 |
| Camera: Video Recording | 5.17 | 2.04 | 5.42 |
| Real Racing 3 Launch | 2.16 | 1.33 | 3.26 |
| Real Racing 3 Playing | 2.09 | 0.89 | 2.96 |
| Modern Combat 5 Playing | 2.09 | 0.73 | 2.68 |

I was wary of creating this table, as it can easily be misinterpreted: because run-queue depth averages are not directly representative of the number of concurrent threads in a given scenario, we lose information when aggregating them for a given cluster or for the whole system. This happens, for example, on the big cluster in the AT article load scenario, where the 90th percentile of the aggregate run-queue depth reaches 2.19 while in reality this figure is composed of 4 medium-high load threads. Readers should thus keep the detailed graphs of the preceding pages in mind when reading the table.
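The behaviour of the "Little + Big Clusters" column, which is consistently lower than the sum of the two per-cluster figures, can be illustrated with a small sketch. The sample values below are made up rather than taken from the traces, and the nearest-rank `percentile` helper is a hypothetical stand-in for whatever percentile method the original analysis used. It shows how a mean smooths over short bursts that the 90th percentile preserves, and why the system-wide 90th percentile is not simply the sum of the per-cluster values when bursts on the two clusters don't coincide.

```python
import math
import statistics


def percentile(samples, p):
    """Nearest-rank percentile: smallest sample with at least p% of samples <= it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


# Made-up run-queue depth samples, one per sampling interval.
little = [0.5, 0.5, 1.0, 0.5, 4.0, 0.5, 1.0, 0.5, 5.0, 0.5]
big = [0.0, 2.0, 0.0, 0.0, 0.5, 0.0, 3.0, 0.0, 0.5, 0.0]
# The system-wide depth is the per-interval sum of both clusters.
total = [lit + bg for lit, bg in zip(little, big)]

for name, series in (("little", little), ("big", big), ("little+big", total)):
    print(f"{name:>10}: mean={statistics.mean(series):.2f} p90={percentile(series, 90):.2f}")

print(f"sum of per-cluster p90s: {percentile(little, 90) + percentile(big, 90):.2f}")
```

With these samples the little cluster's mean depth is 1.40 while its 90th percentile is 4.00, and the combined series reaches a 90th percentile of 4.50 rather than the 6.00 obtained by adding the per-cluster figures. The means, by contrast, do add up (1.40 + 0.60 = 2.00).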

While not directly the goal of the article, the collected data also serves as a perfect case study for heterogeneous big.LITTLE SoCs. We've long seen discussions about what the "ideal" big.LITTLE configuration would be. There are several angles to this: the optimal little and big cluster core counts, and whether we're aiming for performance or power efficiency in each case. For low- to medium-performance threads, we've seen several cases where 4 little cores weren't enough. Web page rendering in Chrome in particular seems to be the killer use-case where actually having two clusters of highly efficient cores makes sense.

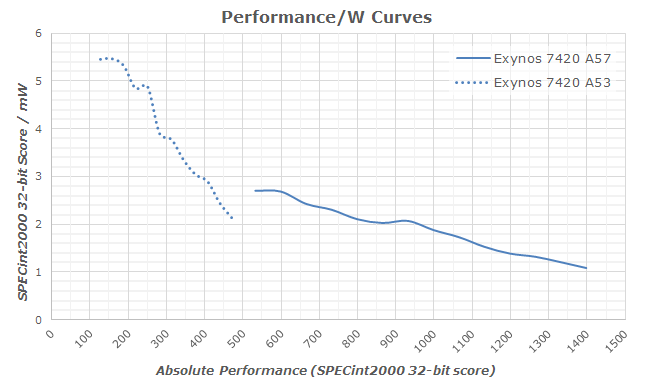

On the high-performance "big" cluster side, the discussion is more about whether 2- or 4-core designs make more sense. I think the decision here is not about performance but rather about power efficiency. A 2-core big cluster would provide more than enough performance for most use-cases, but as we've seen throughout our testing, during interactive use it's more common than not to have 2+ threads placed on the big cluster. So while a 2-core design could handle bursts where ~3-4 threads are placed onto the big cluster, its CPUs would need to run at higher frequencies to provide the same performance as a wider 4-core design. Scaling up in frequency has a quadratically detrimental effect on power efficiency, because higher frequencies require higher operating voltages. At the end of the day, I think the 4-big-core designs are not only the better performing ones but also the more efficient ones.
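The frequency/voltage argument can be made concrete with the textbook dynamic-power relation P = C * V^2 * f. The sketch below is a simplified model under loudly stated assumptions: the voltage/frequency table is invented for illustration (not measured Cortex A57 data), leakage power is ignored, and four busy threads are assumed to split perfectly across however many cores are available. It compares a 4-core cluster at 1.0 GHz against a 2-core cluster at 2.0 GHz delivering the same idealized throughput.

```python
# Hypothetical big-core operating points (GHz -> volts); illustrative only,
# not measured Cortex A57 values.
VF_TABLE = {1.0: 0.80, 2.0: 1.10}


def cluster_dynamic_power(cores, freq_ghz, capacitance=1.0):
    """Relative dynamic power of `cores` busy cores: P = n * C * V^2 * f."""
    volts = VF_TABLE[freq_ghz]
    return cores * capacitance * volts ** 2 * freq_ghz


def throughput(cores, freq_ghz):
    """Idealized throughput, assuming the threads split perfectly across cores."""
    return cores * freq_ghz


# Four busy threads: one thread per core on a 4-core cluster, or two
# time-sliced threads per core on a 2-core cluster clocked twice as high.
configs = {"4-core @ 1.0 GHz": (4, 1.0), "2-core @ 2.0 GHz": (2, 2.0)}

for label, (cores, freq) in configs.items():
    print(f"{label}: throughput={throughput(cores, freq):.1f} "
          f"power={cluster_dynamic_power(cores, freq):.2f}")
```

Under these assumptions the 2-core configuration draws roughly 1.9x the dynamic power (4.84 vs 2.56 in normalized units) for the same work, because the higher clock forces a higher voltage and the V^2 term dominates. Real silicon adds leakage, uncore power and imperfect scaling, so actual ratios will differ.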

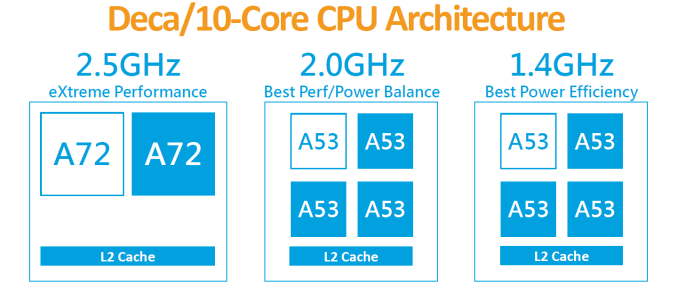

This puts one particular vendor in quite an interesting position: MediaTek. Even if a cluster can't be fully saturated, one can still derive power efficiency advantages from the fact that two small clusters can operate at separate frequencies, and thus at separate efficiency points. I've encountered enough scenarios that would in theory fit the Helio X20's tri-cluster design that I'm starting to think such a design would actually be a very smart choice for current Android devices.
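The benefit of separate clock domains can be sketched with the same simplified model, where the dynamic energy of a piece of work is C * V^2 * cycles. In the hypothetical example below, a heavy and a light thread run on little cores: in one case both share a single cluster clock, in the other each runs on its own cluster. The operating points and thread demands are invented for illustration, and leakage, idle power and migration costs are ignored.

```python
# Hypothetical little-core operating points: (GHz, volts); illustrative only.
VF_TABLE = [(0.6, 0.70), (1.0, 0.80), (1.6, 1.00)]


def lowest_point(required_ghz):
    """Pick the slowest operating point that still meets the required frequency."""
    for freq, volts in VF_TABLE:
        if freq >= required_ghz:
            return freq, volts
    raise ValueError("demand exceeds the highest operating point")


def energy(cycles_g, volts, capacitance=1.0):
    """Dynamic energy for a given amount of work: E = C * V^2 * cycles."""
    return capacitance * volts ** 2 * cycles_g


# Demand of two made-up threads over a 1-second window, in GHz
# (i.e. billions of cycles of work per second).
heavy, light = 1.5, 0.5

# One shared cluster: a single clock domain must satisfy the heaviest thread.
_, v_shared = lowest_point(max(heavy, light))
shared = energy(heavy, v_shared) + energy(light, v_shared)

# Two clusters: each thread's cluster picks its own operating point.
_, v_heavy = lowest_point(heavy)
_, v_light = lowest_point(light)
split = energy(heavy, v_heavy) + energy(light, v_light)

print(f"shared clock: {shared:.3f}  separate clocks: {split:.3f}")
```

With a shared clock, the light thread's work is executed at the voltage dictated by the heavy thread; giving it its own cluster lets it run at a lower voltage, which in this toy case cuts total dynamic energy from 2.000 to about 1.745 normalized units.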

What about more traditional SoC configurations? As mentioned earlier, symmetric 8-core designs such as MediaTek's Helio X10 seem, contrary to expectations, able to take advantage of their higher core counts. While it would be preferable to have higher-performance cores such as the Cortex A57 or A72, one has to keep in mind that these architectures are limited to higher-end SoCs. The 8-little-core designs are mostly targeted at the entry- and mid-level, where adding a second Cortex A53 cluster can be a very cheap way of still providing benefits in everyday usage, particularly in web browsing.

What is clear, though there are corner cases, is that the vast majority of applications seem to be optimally served by quad-core SoCs. This is why traditional 4-core and 4.4 big.LITTLE designs still appear to make the most sense in terms of providing a balanced configuration and making the most use of the hardware at hand. For big.LITTLE, even if there were no use-cases where all cores are used concurrently, it's not a big deal, as what we are aiming for in heterogeneous systems is power efficiency gains.

This is also where the debate about the potential detrimental effects of having more cores comes into play: the fact that a SoC has more cores does not automatically mean it uses more power. As demonstrated in the data, modern power management is advanced enough to make extensive use of fine-grained power-gated idle states, thus eliminating any overhead there might be from simply having more physical cores on the silicon. If there are cases (and as we've seen, there are!) that make use of more cores, then this should be seen purely as an added bonus and icing on the cake.

What about design philosophies with a low CPU core count? Would such designs make sense on Android? This is probably another question our readers will ask themselves when looking at the data. Apple, and more recently Nvidia with its Denver architecture, have both chosen to keep employing large 2-core designs that are strong in single-threaded performance but fall behind in multi-threaded performance.

For Apple, it can be argued that we're dealing with a very different operating system, and it is likely that iOS applications are less threaded than their Android counterparts. But there are cases where this doesn't necessarily hold true: browser rendering engines, for example, can be multi-threaded if adapted to do so, as demonstrated. Native high-end games that already make use of multiple threads are also unlikely to differ in their threading logic between platforms.

While such low core-count designs have higher per-core performance at a given frequency, that is not a direct indicator of the actual performance/W efficiency a single thread would have on these chipsets. We still haven't had a chance to make a proper apples-to-apples comparison of these architectures, so we're limited to theorycrafting with the data currently available to us:

What we see in the use-case analysis is that the number of use-cases where an application is visibly limited by single-threaded performance seems to be very small. In fact, in a large number of the analyzed scenarios, our test device's Cortex A57 cores rarely needed to ramp up to their full frequency beyond short bursts (thermal throttling was not a factor in any of the tests). On the other hand, scenarios with 3-4 high-load threads are not particularly hard to find, and actually appear to be pretty common. For mobile, the choice seems obvious due to the power curve implications. Except for loads so small that it isn't worthwhile to spend the energy to bring a secondary core out of its idle state, one could generalize that if the load can be spread over multiple CPUs, it will always be preferable and more efficient to do so.
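The "spread the load unless it's tiny" rule can be expressed as a break-even calculation. The sketch below assumes a fixed energy cost for waking a second core from its power-gated idle state, and compares running a piece of work on one core at twice the clock (and therefore a higher voltage) against splitting it across two cores at the base clock, so both strategies finish in the same wall time. All constants are hypothetical, chosen only to show the shape of the trade-off, and the static leakage of the extra core while it is awake is ignored.

```python
# Hypothetical constants, in normalized units (energy = C * V^2 * cycles).
C = 1.0             # switched capacitance per cycle (normalized)
V_ONE_CORE = 1.10   # volts for one core running at twice the base clock
V_TWO_CORES = 0.80  # volts for two cores each running at the base clock
WAKE_COST = 0.05    # energy to bring the second core out of power-gated idle


def energy_single(work):
    """Energy to run `work` (billions of cycles) on one core at 2x clock."""
    return C * V_ONE_CORE ** 2 * work


def energy_split(work):
    """Energy to split `work` across two cores at 1x clock, plus one wake-up."""
    return C * V_TWO_CORES ** 2 * work + WAKE_COST


def break_even_work():
    """Amount of work at which both strategies cost the same energy."""
    return WAKE_COST / (C * (V_ONE_CORE ** 2 - V_TWO_CORES ** 2))


for work in (0.01, 0.1, 1.0):
    choice = "spread over 2 cores" if energy_split(work) < energy_single(work) else "keep on 1 core"
    print(f"work={work:<5} single={energy_single(work):.3f} "
          f"split={energy_split(work):.3f} -> {choice}")

print(f"break-even work: {break_even_work():.3f}")
```

Below the break-even amount of work (about 0.088 units with these made-up numbers), the wake-up cost outweighs the voltage savings and the load is better kept on one core; above it, spreading the load wins, and the margin grows with the amount of work.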

In the end, what we should take away from this analysis is that Android devices can make much better use of multi-threading than initially expected. There is very solid evidence that not only are 4.4 big.LITTLE designs validated, but that there are also practical benefits to using 8-core "little" designs over similar single-cluster 4-core SoCs. For the foreseeable future, it seems that vendors who rely on ARM's CPU designs will be well served by continuing to use 4.4 b.L designs. Only MediaTek seems to break from the norm here with its upcoming X20 SoC, and I'm definitely looking forward to seeing how it behaves in the real world. We'll also see some vendors revert to quad-core designs in their custom architectures; while we've yet to get a better picture of how these will behave in terms of performance and power, I think that 4 cores will be a quite reasonable target and sweet spot for vendors to aim for.

157 Comments

nightbringer57 - Tuesday, September 1, 2015

There are several faces to this problem. The inherent issue with multi-core designs is that it is not trivial to develop your application so it uses several cores efficiently. The potential gain in using multi-core designs is that they can do the same job for less power than a single-core design. The article answers the question "can today's typical software environment use several cores efficiently?" with a pretty objective yes. It does not necessarily state that this is superior to simpler cores; it even states that it is not relevant for other environments.

Your computer can handle an order of magnitude more computation needs, but don't forget it is using two orders of magnitude more power. The switch to dual-core processors in the first place (in desktops) was motivated by the fact that the industry hit a wall where it could not raise frequency anymore (heat and power consumption being an issue), whereas two more efficient cores could increase performance significantly while still using less power.

Of course, the sweet spot does vary depending on the targeted power as well as the environment you're working in.

Do Android phones hit this sweet spot? Maybe. But, at least, they are capable of hitting this sweet spot, for that power target and this given environment. That's what this article says.

name99 - Tuesday, September 1, 2015

"Your computer can handle an order of magnitude more computation needs, but don't forget it is using two orders of magnitude more power."

This is simply not true. Apple (and others) are shipping laptops running Broadwell at, what, 4.5W?

IF there were massive value in adding smaller cores to the Broadwell package (e.g. it could drop the average power to 2.5W), wouldn't Intel do that? They could, e.g., add 2 or 4 Silvermont cores and have their own big.LITTLE system. They could even automatically switch threads in the HW if they don't want the OS to be involved, the way they handle DVFS automatically on their newest cores.

What I see when I read through these comments is a collection of people not very familiar with OS scheduling who are happy to interpret "OS can schedule multiple threads" as "app requires multiple cores to function well", and a much smaller collection of professionals who understand that the two have little relationship to each other for very short duration threads.

There's also a whole lot of claims being made here about power savings on the basis of absolutely fsckall evidence --- Andrei shows absolutely no graphs of the power being used during these runs, and it is HYPOTHESIS, not fact, that running say four lightweight threads on four A53 cores would use less energy than aggregating those four threads on a single A57. Maybe it's true, maybe it isn't --- I don't see any reason to simply assert that it's true.

nightbringer57 - Wednesday, September 2, 2015

Well, when Intel ships Broadwell processors at 4.5W, they do consume only a little bit less than an order of magnitude more than your average Cortex-A53 cluster.

Using big.LITTLE configurations requires a lot of precautions at the very beginning of the conception of both cores. You don't just take lower-end cores and add them to the SoC. Both the higher-end and the lower-end core must be conceived as being big.LITTLE compatible.

And, however impressive those processors from Intel were, keep in mind that if they didn't put some lower-power SKUs out, it's probably because they can't. To get into lower power figures, they are still forced to resort to Atom processors. And Atom-branded processors today are... overwhelmingly 4-core models.

Once again, I'm not pretending this article is the final proof that 8 core designs are a must. But it shows that, at least, typical use cases are able to use all cores. Not that this is efficient. But there is potential for efficiency.

name99 - Wednesday, September 2, 2015

You need to be a little more careful with slinging around the term "order of magnitude".

A 4-core A53 cluster running FP on all CPUs (Exynos 5433, 1300MHz) uses about 865mW. That's a factor of 5 from Broadwell's 4.5W, not a factor of ten.

I'm no fan of much of Intel's work and behavior, but I don't think we are well served when we ignore details, when the details hold most of the interesting facts.

prisonerX - Wednesday, September 2, 2015

All you're saying is that currently software needs better single thread performance. Duh!

What everyone else is saying is that you can't get increased performance, nor better power usage, going forward with a single thread performance strategy. Physics has spoken!

It's nothing to do with OS scheduling and everything to do with software architecture. Everything is moving towards increasing parallelism and that will continue.

That's why mobile phones now have 8 cores and will have more because they are not weighed down by legacy architectures.

Jaybus - Tuesday, September 1, 2015

It is worse than that on the development side. Yes, it is non-trivial to develop an app that uses multiple cores efficiently, but it is actually impossible to develop an app that uses multiple cores efficiently on all platforms. Maintaining many different versions optimized for particular platforms is just not plausible when there are so many different platforms.

prisonerX - Tuesday, September 1, 2015

What are you basing that on? Your own bias?

In reality your single Haswell core is going to be slower and use a lot more power in the process.

lopri - Wednesday, September 2, 2015

Do people read?

"I should start with a disclaimer that because the tools required for such an analysis rely heavily on the Linux kernel, that this analysis is constrained to the behaviour of Android devices and doesn't necessarily represent the behaviour of devices on other operating systems, in particular Apple's iOS. As such, any comparisons between such SoCs should be limited to purely to theoretical scenarios where a given CPU configuration would be running Android."

The world does not revolve around Apple. This article has nothing whatsoever to do with Apple products. Furthermore, the article neither claims nor implies that wider fat cores are better or worse than big.LITTLE.

jjj - Tuesday, September 1, 2015

Yet to read the full article, just looked at the more relevant graphs, but I do wish you had tested some heavier web pages (since AT and BBC are not that heavy), some SoCs with only small cores, and looked at power too. Would be very curious about power for a quad A53 vs octa A53 at the same clocks. Testing GPGPU on the midrange SoCs that actually do that would be interesting too.

Really hope next year we get 2xA72 (+some A53s) in $20 SoCs for $150-200 phones with very nice CPU perf. Anyway, will read the full article as soon as I find the free time.

Pissedoffyouth - Tuesday, September 1, 2015

> Yet to read the full article just looked at the more relevant graphs but i do wish you would have tested some heavier web pages

Daily Mail UK comes to mind as the heaviest website ever