The DirectX 12 Performance Preview: AMD, NVIDIA, & Star Swarm

by Ryan Smith on February 6, 2015 2:00 PM EST - Posted in GPUs, AMD, Microsoft, NVIDIA, DirectX 12

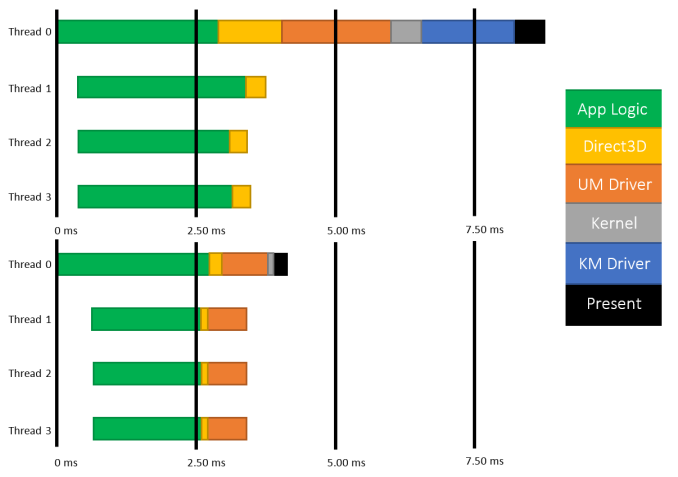

About a year and a half ago AMD kicked off the public half of a race to improve the state of graphics APIs. Dubbed "Mantle", AMD’s in-house API for their Radeon cards stripped away the abstraction and inefficiencies of traditional high-level APIs like DirectX 11 and OpenGL 4, and instead gave developers a means to access the GPU in a low-level, game console-like manner. The impetus: with a low-level API, engine developers could achieve better performance than with a high-level API, sometimes vastly exceeding what DirectX and OpenGL could offer.

While AMD was the first such company to publicly announce their low-level API, they were not the last. 2014 saw the announcement of APIs such as DirectX 12, OpenGL Next, and Apple’s Metal, all of which would implement similar ideas for similar performance reasons. It was a renaissance in the graphics API space after many years of slow progress, and one desperately needed to keep pace with the progress of both GPUs and CPUs.

In the PC graphics space we’ve already seen how early versions of Mantle perform, with Mantle offering some substantial boosts in performance, especially in CPU-bound scenarios. As awesome as Mantle is though, it is currently a de facto proprietary AMD API, which means it can only be used with AMD GPUs; what about NVIDIA and Intel GPUs? For that we turn towards DirectX, Microsoft’s traditional cross-vendor API that will be making the same jump as Mantle, but using a common API for the benefit of every vendor in the Windows ecosystem.

DirectX 12 was first announced at GDC 2014, where Microsoft unveiled the existence of the new API along with their planned goals, a brief demonstration of very early code, and limited technical details about how the API would work. Since then Microsoft has been hard at work on DirectX 12 as part of the larger Windows 10 development effort, culminating in the release of the latest Windows 10 Technical Preview, Build 9926, which is shipping with an early preview version of DirectX 12.

GDC 2014 - DirectX 12 Unveiled: 3DMark 2011 CPU Time: Direct3D 11 vs. Direct3D 12

With the various pieces of Microsoft’s latest API finally coming together, today we will be taking our first look at the performance future of DirectX. The API is stabilizing, video card drivers are improving, and the first DirectX 12 application has been written; Microsoft and their partners are finally ready to show off DirectX 12. To that end, today we’ll be looking at DirectX 12 through Oxide Games’ Star Swarm benchmark, our first DirectX 12 application and a true API efficiency torture test.

Does DirectX 12 bring the same kind of performance benefits we saw with Mantle? Can it resolve the CPU bottlenecking that DirectX 11 struggles with? How well does the concept of a low-level API work for a common API with disparate hardware? Let’s find out!

245 Comments

Mr Perfect - Sunday, February 8, 2015

That's not what he's saying though; he said TDP is some measure of what amount of heat is "wasted" heat. As if there's some way to figure out what part of the 165 watts is doing computational work, and what is just turning into heat without doing any computational work. That's not what TDP measures.

Also, CPUs and GPUs can routinely go past TDP, so I'm not sure where people keep getting the idea that TDP is maximum power draw. It's seen regularly in the benchmarks here at AnandTech. That's usually one of the goals of the power section of reviews: seeing if the manufacturer's TDP calculation of typical power draw holds up in the real world.

Mr Perfect - Sunday, February 8, 2015

Although, now that I think about it, I do remember a time when TDP actually was pretty close to maximum power draw. But then Intel came out with the Netburst architecture and started defining TDP as the typical power used by the part in real world use, since the maximum power draw was so ugly. After a lot of outrage from the other companies, they picked up the same practice so they wouldn't seem to be at a disadvantage in regard to power draw. That was ages ago though, TDP hasn't meant maximum power draw for years.

Strunf - Sunday, February 8, 2015

TDP essentially means your GPU can work at that power input for a long time. In the past the CPU/GPU were close to it because they didn't have throttling, idle states and whatnot. Today they do, and they can go past the TDP for "short" periods of time; with the help of thermal sensors they can adjust the power as they need without risking burning out the CPU/GPU.

YazX_ - Friday, February 6, 2015

Dude, it's total system power consumption, not the video card only.

Morawka - Friday, February 6, 2015

Are you sure you're not looking at factory overclocked cards? The 980 has an 8-pin and a 6-pin connector. You gotta subtract the CPU and motherboard power.

Check any reference review on power consumption:

http://www.guru3d.com/articles_pages/nvidia_geforc...

Yojimbo - Friday, February 6, 2015

Did you notice the 56% greater performance? The rest of the system is going to be drawing more power to keep up with the greater GPU performance. NVIDIA is getting much greater benefit of having 4 cores than 2, for instance. And who knows, maybe the GPU itself was able to run closer to full load. Also, the benchmark is not deterministic, as mentioned several times in the article. It is the wrong sort of benchmark to be using to compare two different GPUs in power consumption, unless the test is run significantly many times. Finally, you said the R9 290X-powered system consumed 14W more in the DX12 test than the GTX 980-powered system, but the list shows it consumed 24W more. Let's not even compare DX11 power consumption using this benchmark, since NVIDIA's performance is 222% higher.

MrPete123 - Friday, February 6, 2015

Win7 will be dominant in businesses for some time, but not on gaming PCs, where this will be of more benefit.

Yojimbo - Friday, February 6, 2015

Most likely the main reasons for consumers not upgrading to Windows 10 will be laziness, comfort, and ignorance.

Murloc - Saturday, February 7, 2015

People who are CPU bottlenecked are not that kind of people, given the amount of money they spend on GPUs.

Frenetic Pony - Friday, February 6, 2015

FREE. Ok. FREE. F and then R and then E and then another E.