The DirectX 12 Performance Preview: AMD, NVIDIA, & Star Swarm

by Ryan Smith on February 6, 2015 2:00 PM EST

Posted in: GPUs, AMD, Microsoft, NVIDIA, DirectX 12

Star Swarm & The Test

For today’s DirectX 12 preview, Microsoft and Oxide Games have supplied us with a newer version of Oxide’s Star Swarm demo. Originally released in early 2014 as a demonstration of Oxide’s Nitrous engine and the capabilities of Mantle, Star Swarm is a massive space combat demo designed to push the limits of high-level APIs and demonstrate the performance advantages of low-level APIs. Because it uses thousands of units and other draw-call-heavy effects, Star Swarm can push over 100K draw calls, a massive workload that causes high-level APIs to simply crumple.

Because Star Swarm generates so many draw calls, it is essentially a best-case scenario test for low-level APIs, exploiting the fact that high-level APIs can’t effectively spread out the draw call workload over several CPU threads. As a result, the performance gains from DirectX 12 in Star Swarm are going to be much greater than in most (if not all) video games. Nonetheless, it’s an effective tool for demonstrating the performance capabilities of DirectX 12 and showcasing how it can better distribute work over multiple CPU threads.
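To make the idea of spreading draw call work over several CPU threads concrete, here is a minimal sketch in Python. It is not Direct3D 12 code and not how Star Swarm or Nitrous is implemented; `record_command_list`, `split_evenly`, and `build_frame` are hypothetical names invented for illustration. It only shows the structure: each worker records its own list of commands, and the lists are then handed over in a fixed order. Python threads will not show the real speedup, so treat it as a structural illustration rather than a benchmark.

```python
from concurrent.futures import ThreadPoolExecutor


def record_command_list(draw_calls):
    """Hypothetical stand-in for recording draw calls into one command list."""
    return [f"draw({obj_id})" for obj_id in draw_calls]


def split_evenly(items, parts):
    """Split items into `parts` contiguous chunks whose sizes differ by at most one."""
    base, extra = divmod(len(items), parts)
    chunks, start = [], 0
    for i in range(parts):
        end = start + base + (1 if i < extra else 0)
        chunks.append(items[start:end])
        start = end
    return chunks


def build_frame(draw_calls, worker_count):
    """Record one command list per worker in parallel and return them in order."""
    chunks = split_evenly(draw_calls, worker_count)
    with ThreadPoolExecutor(max_workers=worker_count) as pool:
        # map() preserves input order, so the lists come back in submission order.
        return list(pool.map(record_command_list, chunks))


if __name__ == "__main__":
    # 100,000 draw calls split across 6 workers (illustrative numbers only).
    frame = build_frame(list(range(100_000)), worker_count=6)
    print([len(command_list) for command_list in frame])
```

The point of the sketch is that no worker needs to touch another worker's list while recording, which is the property that lets a low-level API scale the recording step across cores.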

It should be noted that while Star Swarm itself is a synthetic benchmark, the underlying Nitrous engine is relevant and is being used in multiple upcoming games. Stardock is using the Nitrous engine for their forthcoming Star Control game, and Oxide is using the engine for their own game, set to be announced at GDC 2015. So although Star Swarm is still a best-case scenario, many of its lessons will be applicable to these future games.

As for the benchmark itself, we should also note that Star Swarm is a non-deterministic simulation. The benchmark is based on having two AI fleets fight each other, and as a result the outcome can differ from run to run. The good news is that although it’s not a deterministic benchmark, its RTS mode keeps run-to-run variation low enough to produce reasonably consistent results. Individual runs will still fluctuate somewhat, but the benchmark will reliably demonstrate larger performance trends.
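One common way to quantify run-to-run variation in a non-deterministic benchmark is the coefficient of variation (sample standard deviation divided by the mean) over repeated runs. The sketch below assumes that approach; it is not necessarily how the results in this article were processed, `run_to_run_variation` is a hypothetical helper, and the FPS values are made up purely for illustration.

```python
from statistics import mean, stdev


def run_to_run_variation(fps_runs):
    """Return (mean FPS, coefficient of variation in percent) for repeated runs.

    Needs at least two runs, since the sample standard deviation is used.
    """
    avg = mean(fps_runs)
    cv_percent = stdev(fps_runs) / avg * 100.0
    return avg, cv_percent


if __name__ == "__main__":
    # Made-up FPS values for illustration only; these are not measured results.
    example_runs = [60.0, 62.0, 58.0, 61.0, 59.0]
    avg, cv = run_to_run_variation(example_runs)
    print(f"mean = {avg:.1f} fps, run-to-run variation = {cv:.1f}%")
```

A variation of a few percent would be small next to the large gaps between APIs that a test like this is meant to expose, which is why individual fluctuations do not obscure the broader trends.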

The Test

For today’s preview Microsoft, NVIDIA, and AMD have provided us with the necessary WDDM 2.0 drivers to enable DirectX 12 under Windows 10. The NVIDIA driver is 349.56 and the AMD driver is 15.200. At this time we do not know when these early WDDM 2.0 drivers will be released to the public, though we would be surprised not to see them released by the time of GDC in early March.

In terms of bugs and other known issues, Microsoft has informed us that there are some known memory and performance regressions in the current WDDM 2.0 path that have since been fixed in interim builds of Windows. In particular the WDDM 2.0 path may see slightly lower performance than the WDDM 1.3 path for older drivers, and there is an issue with memory exhaustion. For this reason Microsoft has suggested that a 3GB card is required to use the Star Swarm DirectX 12 binary, although in our tests we have been able to run it on 2GB cards seemingly without issue. Meanwhile DirectX 11 deferred context support is currently broken in the combination of Star Swarm and NVIDIA's drivers, causing Star Swarm to immediately crash, so these results are with D3D 11 deferred contexts disabled.

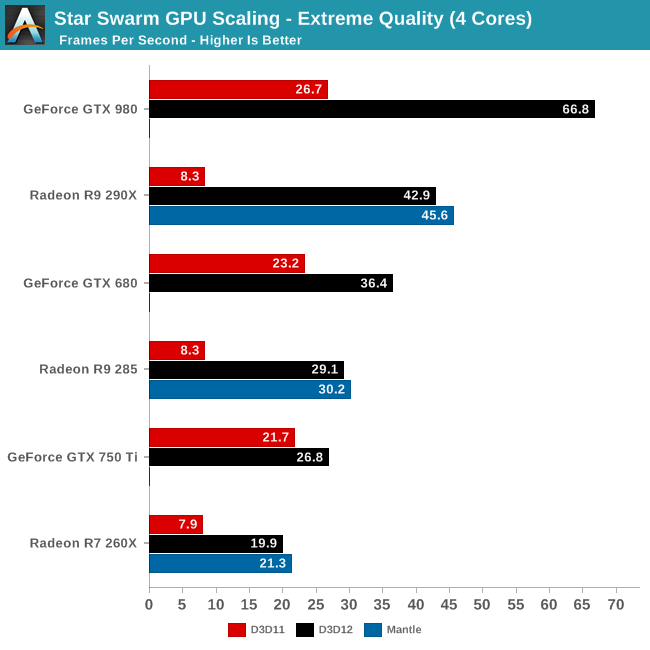

For today’s article we are looking at a small range of cards from both AMD and NVIDIA to showcase both performance and compatibility. For NVIDIA we are looking at the GTX 980 (Maxwell 2), GTX 750 Ti (Maxwell 1), and GTX 680 (Kepler). For AMD we are looking at the R9 290X (GCN 1.1), R9 285 (GCN 1.2), and R7 260X (GCN 1.1). As we mentioned earlier, support for Fermi and GCN 1.0 cards will be forthcoming in future drivers.

Meanwhile on the CPU front, to showcase the performance scaling of Direct3D we are running the bulk of our tests on our GPU testbed with 3 different settings to roughly emulate high-end Core i7 (6 cores), i5 (4 cores), and i3 (2 cores) processors. Unfortunately we cannot control for our 4960X’s L3 cache size; however, that should not be a significant factor in these benchmarks.

DirectX 12 Preview CPU Configurations (i7-4960X)

| Configuration | Emulating |
| --- | --- |
| 6C/12T @ 4.2GHz | Overclocked Core i7 |
| 4C/4T @ 3.8GHz | Core i5-4670K |
| 2C/4T @ 3.8GHz | Core i3-4370 |

Though not included in this preview, AMD’s recent APUs should slot between the 2 and 4 core options thanks to the design of AMD’s CPU modules.

| Component | Details |
| --- | --- |
| CPU: | Intel Core i7-4960X @ 4.2GHz |
| Motherboard: | ASRock Fatal1ty X79 Professional |
| Power Supply: | Corsair AX1200i |
| Hard Disk: | Samsung SSD 840 EVO (750GB) |
| Memory: | G.Skill RipjawZ DDR3-1866 4 x 8GB (9-10-9-26) |
| Case: | NZXT Phantom 630 Windowed Edition |
| Monitor: | Asus PQ321 |
| Video Cards: | AMD Radeon R9 290X, AMD Radeon R9 285, AMD Radeon R7 260X, NVIDIA GeForce GTX 980, NVIDIA GeForce GTX 750 Ti, NVIDIA GeForce GTX 680 |
| Video Drivers: | NVIDIA Release 349.56 Beta, AMD Catalyst 15.200 Beta |
| OS: | Windows 10 Technical Preview 2 (Build 9926) |

Finally, while we’re going to take a systematic look at DirectX 12 from both a CPU standpoint and a GPU standpoint, we may as well answer the first question on everyone’s mind: does DirectX 12 work as advertised? The short answer: a resounding yes.

245 Comments

Jeffro421 - Thursday, February 12, 2015

Something is horribly wrong with your results. I just ran this benchmark, on extreme, with a 270X 4GB and I got 39.61 FPS on DX11. You say a 290X only got 8.3 fps on DX11? http://i.imgur.com/JzX0UAa.png

Ryan Smith - Saturday, February 14, 2015

You ran the Follow scenario. Our tests use the RTS scenario. Follow is a much lighter workload and far from reliable due to the camera swinging around.

0VERL0RD - Friday, February 13, 2015

Been meaning to ask why both cards show vastly different total memory in DirectX diag. Don't recall the article indicating how much memory each card had. Assuming they're equal. Is it normal for Nvidia to not report correct memory or is something else going on?

Ryan Smith - Saturday, February 14, 2015

The total memory reported is physical + virtual. As far as I can tell AMD is currently allocating 4GB of virtual memory, whereas NVIDIA is allocating 16GB of virtual memory.

trisct - Friday, February 13, 2015

MS needs a lot more Windows installs to make the Store take off, but first they need more quality apps and a competitive development stack. The same app on iOS or Android is almost always noticeably smoother with an improved UI (often extra widget behaviors that the Windows tablet versions cannot match). Part of this is maturity of the software, but Microsoft has yet to reach feature parity with the competing development environments, so it's also harder for devs to create those smooth apps in the first place.

NightAntilli - Friday, February 13, 2015

We know Intel has great single core performance. So the lack of benefits for more than 4 cores is not surprising. The most interesting aspect would be to test the CPUs with weak single core performance, like the AMD FX series. Using the FX series rather than (only) the Intel CPUs would be more telling. 4 cores would not be enough to shift the bottleneck to the GPU with the FX CPUs. This would give a much better representation of scaling beyond 4 cores. Right now we don't know if the spreading of the tasks across multiple threads is limited to 4 cores, or if it scales equally well to 6 threads or 8 threads also.

This is a great article, but I can't help feeling that we would've gotten more out of it if at least one AMD CPU was included. Either an FX-6xxx or FX-8xxx.

Ryan Smith - Saturday, February 14, 2015

Ask and ye shall receive: http://www.anandtech.com/show/8968/star-swarm-dire...

NightAntilli - Tuesday, February 17, 2015

Thanks a lot :) The improvements are great.

0ldman79 - Monday, February 16, 2015

One benefit for MS to have (almost) everyone on a single OS is just how many man hours are spent patching the older OS? If they can set up the market to where they can drop support for Vista, 7 and 8 earlier than anticipated they will save themselves a tremendous amount of money.

Blackpariah - Tuesday, February 17, 2015

I'm just hoping the already outdated console hardware in PS4/Xbone won't hold things back too much for the PC folks. On a side note... I'm in a very specific scenario where my new GTX 970, with DX11, is getting 30-35 fps @ 1080p in Battlefield 4 because the CPU is still an old Phenom II X4... while with my older R9 280, on Mantle, the framerate would stay above 50 at all times at almost identical graphic detail & same resolution.