Synology DS1815+ 8-bay Intel Rangeley SMB NAS Review

by Ganesh T S on November 18, 2014 6:30 AM EST

Miscellaneous Aspects and Final Words

In order to keep testing consistent across all 8-bay units, we performed all our expansion / rebuild testing as well as power consumption evaluation with the unit configured in RAID-5. The disks used for benchmarking (Western Digital WD4000FYYZ) were also used in this section. The table below presents the average power consumption of the unit as well as time taken for various RAID-related activities.

Synology DS1815+ RAID Expansion and Rebuild / Power Consumption

| Activity | Duration (HH:MM:SS) | Avg. Power (W) |
|---|---|---|
| Single Disk Init | 00:10:42 | 32.14 |
| JBOD to RAID-1 Migration | 11:17:58 | 42.34 |
| RAID-1 (2D) to RAID-5 (3D) Migration | 35:53:15 | 51.68 |
| RAID-5 (3D) to RAID-5 (4D) Expansion | 25:01:04 | 62.48 |
| RAID-5 (4D) to RAID-5 (5D) Expansion | 23:32:53 | 73.78 |
| RAID-5 (5D) to RAID-5 (6D) Expansion | 23:06:12 | 84.07 |
| RAID-5 (6D) to RAID-5 (7D) Expansion | 24:28:29 | 94.58 |
| RAID-5 (7D) to RAID-5 (8D) Expansion | 27:07:26 | 104.72 |
| RAID-5 (8D) Rebuild | 14:21:12 | 103.44 |

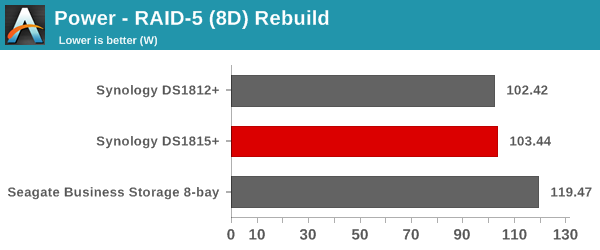

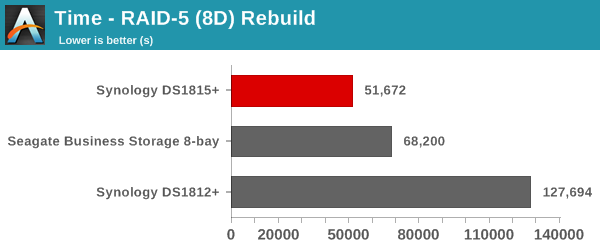

The graphs below show the power consumption and rebuild duration when repairing a RAID-5 volume for the various 8-bay NAS units that have been evaluated before.

Even though the DS1815+ is not as power efficient as the DS1812+, it comes out ahead by a huge margin overall, thanks to the much shorter rebuild duration. That said, it does look like Synology can optimize RAID rebuild and expansion durations further.
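The trade-off between average power and duration can be made concrete by converting a row of the table above into energy (average power multiplied by elapsed time). The following is a minimal sketch in Python; the helper names `duration_hours` and `energy_kwh` are illustrative and not part of any tool used in the review, and only the DS1815+ figures from the table are used.

```python
def duration_hours(hms: str) -> float:
    """Convert an 'HH:MM:SS' duration string to fractional hours."""
    hours, minutes, seconds = (int(part) for part in hms.split(":"))
    return hours + minutes / 60 + seconds / 3600


def energy_kwh(hms: str, avg_watts: float) -> float:
    """Energy in kWh = average power (W) x duration (h) / 1000."""
    return duration_hours(hms) * avg_watts / 1000


# RAID-5 (8D) rebuild row from the table: 14:21:12 at 103.44 W on average.
rebuild_kwh = energy_kwh("14:21:12", 103.44)
print(f"RAID-5 (8D) rebuild: {rebuild_kwh:.2f} kWh")
```

For the rebuild row this works out to roughly 1.48 kWh, which shows why a unit with a higher average draw can still use less energy per rebuild if it finishes sooner.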

Concluding Remarks

The SMB / SOHO / prosumer COTS NAS market is interestingly poised. With the previous generation Atom platforms, NAS vendors had to differentiate themselves with the software. However, with their 22nm silicon, Intel has provided them with multiple options. We have already looked at QNAP using Bay Trail-D with extra focus on the multimedia transcoding and virtualization aspects. Asustor has opted to go the Haswell route, with a Core i3 CPU for the 70-series. With the DSx15+ series, Synology has placed its bets on the Intel Rangeley platform.

The new Rangeley platform has made up for the drawbacks of the previous generation x86 platforms at this price point. Equipped with the Atom C2538, the DS1815+ excels in three areas: multi-client performance, encryption capabilities and power efficiency. Synology's DSM is quite mature and it has no problems in bringing out the potential of Intel's Rangeley for the NAS market. The latest version of DSM brings deeper cloud integration (more cloud vendors supported for backup), better sync control, an advanced multi-platform note-taking solution in Note Station / DS Note and improved security features (such as digital signing for packages). For the SMB market that Synology is targeting with the DS1815+, DSM 5.1 also brings SSD caching to high-availability clusters, VMware VAAI for NFS (in addition to the already existing iSCSI support) and scheduled iSCSI LUN snapshots.

Multi-client performance in terms of average response times is better because of the highly integrated I/O compared to other solutions which use bridge chips and have bottlenecks in connecting to the CPU. The appearance of AES-NI in the Atom-class SoCs has finally delivered power efficient encryption capabilities. Obviously, the 22 nm fabrication process as well as tight I/O integration greatly help in reducing the power consumption of the platform compared to other solutions in the market.

The above advantages aside, there are certain areas where Synology could improve:

- The DS1815+ needs to ship with 4 GB of RAM by default. Users running multiple apps would benefit tremendously.

- Even though the iSCSI feature set is quite advanced and ahead of the competitors, performance for the 'multiple LUNs on RAID' case could do with some improvement.

- In terms of hardware / chassis design, a USB port (even 2.0 would suffice) on the front would be nice to have.

At $1050 for a diskless unit, the pricing is not unreasonable (given the premiums usually associated with Synology units). The Atom C2538 is one of the more powerful Rangeley SoCs and it helps the DS1815+ pack quite a punch for the SMB / SOHO market. We will shortly be reviewing a couple of 8-bay NAS units from other vendors. They will help us get a better understanding of where the DS1815+ stands when contemporary NAS units are taken into consideration.

65 Comments

DigitalFreak - Wednesday, November 19, 2014 - link

Not everyone is poor like you.

chaos215bar2 - Wednesday, November 19, 2014 - link

I see a lot of comments like this, and I can only imagine you're assuming that:

1) The NAS is only being used as a file server with the most basic setup.

2) Updates are not an issue.

I agree that building a custom NAS box is a fun project and can save a lot of money. However, not everyone wants to deal with the complications that can arise from setting up multiple services and keeping them up-to-date.

Say you want an email server. To install and fully configure Synology's Mail Station takes no more than 10 minutes. If you want webmail to go with it, just install a second package. There's almost zero setup required. Sure, you'll have more options on a generic Linux installation, but setting up a fully functional and securely configured email system takes quite a lot of research if you're just doing it one time.

Of course, all of that time spent properly configuring your custom-built server is worthless if you don't keep it up to date. As of DSM 5.1, Synology will automatically install either all updates or just security updates, and you know that the updated components have been tested and work together. I have never had a problem with a service going down due to a Synology update. With full Linux distributions, not so much. Most of the time updates work fine, but I would never trust something as critical as my primary email server to automatic updates.

shodanshok - Friday, November 21, 2014 - link

Hi, while I agree on the simplicity argument (installing postfix, dovecot and roundcube surely requires some time), RedHat and CentOS distros are very good from an update standpoint. I have had very few problems with the many servers (100+) I have administered over the past years, even with automatic updates enabled. Moreover, with the right yum plugin you can install security updates only, if you want.

Nowadays, and with a strong backup strategy, I feel confident enough to enable yum auto-update on all servers except the ones used as hypervisors (I had a single hypervisor with auto-update enabled for testing purposes, and it ran without a problem anyway).

Sadly, with Debian and Ubuntu LTS distros I had some more problems regarding updates, but perhaps it is only an unfortunate coincidence...

Regards,

shodanshok - Tuesday, November 18, 2014 - link

I agree with people saying that units like this are primarily targeted at users who want a clean and simple "off-the-shelf" experience. With units such as the one reviewed, you basically need to insert the disks, power on the device and follow one or two wizards.

That said, a custom-built NAS has a vastly better performance/price ratio. One of our customers bought a PowerEdge R515 (6 core Piledriver @ 3.1+ GHz) with 16 GB of ECC RAM, a PERC H700 RAID card with 512 MB of NVRAM cache memory and a 3-year on-site warranty. Total price: about 1600 euros (+ VAT).

He then installed 8x 2TB WD RE drives and I configured them as an 11+ TB RAID6 volume with thin LVM volumes and an XFS filesystem. It serves both as a backup server (deduplicated via hard links and rsync) and as big storage for non-critical things (e.g. personal files).

Our customer is VERY satisfied with how it works, but hey - face reality: skilled people did all the setup work for him, and (obviously) he paid us...

Beany2013 - Thursday, November 27, 2014 - link

This is about the most sensible comment on this entire review.

If his budget halved and he couldn't necessarily afford support from you on a regular basis (or at least wanted his hourly callout charge to be lowered) I'm guessing you'd be more tempted to push him in the direction of a device like this, though?

(I've been there, done that, and swapped out more than a few Windows SBS/standard+exchange boxes for Syno units over the last few years for this very reason, natch - the Windows license costs themselves pretty much pay for one of these)

eximius - Thursday, December 11, 2014 - link

I have to agree with these previous two comments.

I have an 1813+ sitting next to my heavily modded (aka needed to use a dremel) case with 15 hot swap disks (currently Linux + btrfs + samba & NFS). I have and use (and love) both. There are use cases for both, but I would certainly not hand my custom solution over to someone random and expect it to just work. I have automated updates and reboots (all hail "if [ -f /var/run/reboot-required ]") but occasionally something does not work right. No normal person is going to be able to figure that out in a reasonable amount of time.

Also 30 minutes to install and configure it yourself is total BS. I have saltstack and automated PXE installs at home and 30 minutes is still stretching it for me for a full stack install and configure. Linux + Samba + backup + updates + RAID and/or mdadm and/or zfs and/or btrfs installed and *configured* in 30 minutes is beyond optimistic, even for technical people. $800 does not cover 8 hours of my time, so ya, I recommend Synology for certain (mostly home/SOHO) scenarios.

I can expect my 70 year old dad to be able to keep his synology up to date, but not a Linux or BSD distro. That is just a ridiculous thing to expect.

DustinT - Tuesday, November 18, 2014 - link

Ganesh, thanks for the thoughtful review. I am very interested in seeing how SSD caching affects performance. Take 2 drives out, replace them with 240 GB SSDs and retest. Synology is putting a lot of emphasis on SSD caching, and I will be making my buying decision largely based on that aspect alone.

eximius - Thursday, December 11, 2014 - link

It depends on your use case. For large sequential transfers SSDs are not going to help you very much since a couple of spinning metal drives can easily saturate a gigabit link. If you need a lot more IOPS then an SSD cache will help you out here but only so much, since again the limit is gigabit ethernet (16000 IOPS or so).

*note* this applies to gigabit links; 10+ gigabit ethernet or infiniband connected devices can see an improvement with SSDs.

mervincm - Tuesday, November 18, 2014 - link

Yes, please test the Read and Write Cache effect. In my 1813+ (4GB) on DSM 5.0 I installed a 2 disk Read/Write SSD Cache. Strangely, streaming performance dropped, and since my use case is highly dependent on streaming, I removed the cache. I wonder now if things are better with 5.1 or with the read-only cache.

eximius - Thursday, December 11, 2014 - link

First, your bottleneck is the gigabit LAN. A couple of spinning rust drives can easily saturate a gigabit link so an SSD cache is not going to accelerate a streaming (aka sequential read) operation over gigabit ethernet. If you need more IOPS then an SSD cache will help (gigabit ethernet tops out somewhere around 16000 IOPS), though at the cost of reduced throughput.

IOPS and throughput are at opposite ends of the spectrum, an increase in one means a decrease in the other. If your use case is sequential reads and writes, don't bother with the SSDs. On DAS (direct attached storage) you can improve both IOPS and throughput with an SSD cache since it takes a whole lot of platters to equal the performance of a single 850 pro SSD.

Also note that this problem has nothing to do with Synology, you have the same constraints even if you had 24+ thread CPU(s) and 128 GB+ RAM with <insert favourite redundancy technology here>. Gigabit ethernet is slow, period.
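As a rough back-of-the-envelope check on the gigabit ceiling discussed in this thread, the sketch below divides the raw line rate by an assumed I/O size. Both the I/O sizes and the decision to ignore Ethernet, TCP/IP and SMB/NFS/iSCSI overhead are assumptions made here for illustration, so real-world numbers will be lower. The function name `iops_ceiling` is hypothetical.

```python
GIGABIT_BITS_PER_S = 1_000_000_000  # raw line rate of one gigabit link


def iops_ceiling(link_bits_per_s: float, io_size_bytes: int) -> float:
    """Upper bound on I/O operations per second for a given transfer size.

    Ignores all protocol overhead (an assumption), so this is a best case.
    """
    bytes_per_s = link_bits_per_s / 8
    return bytes_per_s / io_size_bytes


# Assumed I/O sizes in KiB; these are illustrative, not taken from the thread.
for size_kib in (4, 8, 64):
    ceiling = iops_ceiling(GIGABIT_BITS_PER_S, size_kib * 1024)
    print(f"{size_kib:>2} KiB I/O: at most {ceiling:,.0f} IOPS")
```

With 8 KiB transfers the bound is a little over 15,000 IOPS, which is in the same ballpark as the "16000 IOPS or so" figure quoted above; smaller transfers raise the bound and larger ones lower it.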