Intel Xeon E5 Version 3: Up to 18 Haswell EP Cores

by Johan De Gelas on September 8, 2014 12:30 PM EST

LRDIMMs: Capacity and Real World Performance

Having shown that Intel invested a lot of time in improving LRDIMM support, we also wanted to run a few real-world tests to understand when capacity, or higher speed at high DPC (DIMMs per channel), matters.

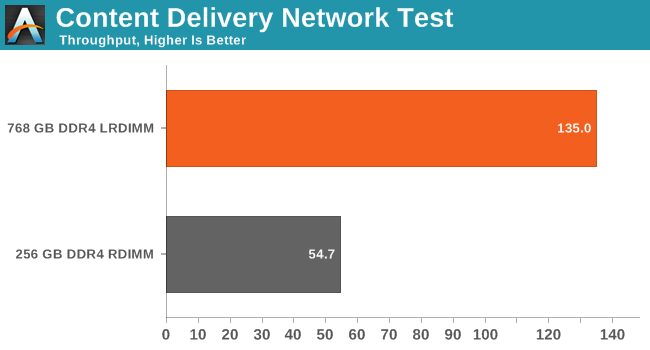

First up is our CDN test. We simulate a CDN with one server and three client machines on a 10 Gbit/s network. Each client machine simulates thousands of users requesting different files. The server runs Ubuntu 13.04 with Apache.

The requested static files originate from sourceforge.org: a mirror of the /a directory, containing 1.4TB of data in 173,000 files. To model a real-world CDN load, two different workloads run simultaneously: one accesses a limited set of files frequently, the other accesses less frequently requested files. Together they simulate users requesting both current and older, less popular files. You can read more about our CDN test here. We used the Xeon E5-2695 v3.
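The two simultaneous workloads can be modeled as a mix of two request streams. The sketch below is a simplified illustration, not the actual test harness: it assumes, hypothetically, that the "current" workload draws uniformly from a small hot set of files and the "archive" workload draws uniformly from the rest. The real hot-set size, popularity distribution, and mix ratio are not given here, so `hot_files` and `hot_share` are placeholders, and `next_file` is a hypothetical helper.

```c
#include <assert.h>
#include <stdint.h>

/* File count of the mirrored /a directory, as stated in the article. */
#define TOTAL_FILES 173000u

/* xorshift64 state; must be seeded with a non-zero value. */
typedef struct {
    uint64_t state;
} rng_t;

/* Marsaglia xorshift64: tiny deterministic PRNG so runs are reproducible. */
uint64_t rng_next(rng_t *r) {
    uint64_t x = r->state;
    x ^= x << 13;
    x ^= x >> 7;
    x ^= x << 17;
    r->state = x;
    return x;
}

/* Uniform double in [0, 1) built from the top 53 bits (2^53 = 9007199254740992). */
double rng_uniform(rng_t *r) {
    return (double)(rng_next(r) >> 11) * (1.0 / 9007199254740992.0);
}

/* Hypothetical helper: index of the next requested file.
 * With probability hot_share the request belongs to the "current" workload
 * and picks uniformly among files [0, hot_files); otherwise it belongs to the
 * "archive" workload and picks uniformly among files [hot_files, TOTAL_FILES).
 * The modulo introduces a negligible bias for ranges this small. */
uint32_t next_file(rng_t *r, uint32_t hot_files, double hot_share) {
    assert(hot_files > 0 && hot_files < TOTAL_FILES);
    if (rng_uniform(r) < hot_share)
        return (uint32_t)(rng_next(r) % hot_files);
    return hot_files + (uint32_t)(rng_next(r) % (TOTAL_FILES - hot_files));
}
```

For example, `next_file(&r, 1000, 0.8)` sends roughly 80% of requests to the first 1,000 files, a hot set that is likely to stay cached, while the remaining requests scatter over the other 172,000 files. That long tail is where memory capacity, and therefore LRDIMMs, can make a difference.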

There is no doubt about it: some applications are all about caching, and LRDIMMs are incredibly useful in those situations. Some HPC workloads reportedly require a lot of memory as well and can be very sensitive to memory bandwidth. Really? Even with a 35MB L3 cache? We decided to find out.

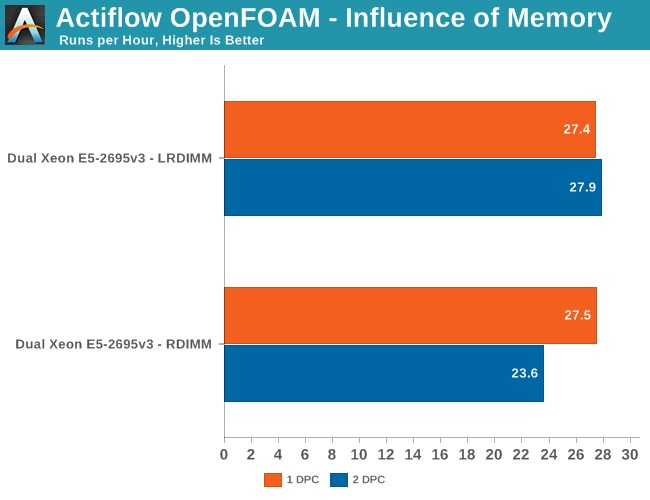

LRDIMMs are just as fast as RDIMMs in a 1DPC configuration. Once you plug in two DIMMs per channel, LRDIMMs outperform RDIMMs by 18%. That is quite surprising, considering that our CPU has an exceptionally large L3. This test clearly shows that there are still applications craving more memory bandwidth: in the case of OpenFOAM, the available bandwidth largely determines how many cores your CPU can keep busy.
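A rough back-of-the-envelope sketch makes the last point concrete. Haswell-EP has four 64-bit DDR4 channels per socket, so theoretical peak bandwidth is channels × data rate × 8 bytes. The DIMM speeds and the per-core demand below are illustrative assumptions, not values measured in this test: if one configuration has to drop from DDR4-2133 to DDR4-1866 at 2DPC, a bandwidth-bound solver can keep fewer of the E5-2695 v3's 14 cores busy. `cores_fed` is a hypothetical helper that ignores caches and assumes perfect scaling.

```c
#include <assert.h>

/* Theoretical peak DRAM bandwidth in GB/s.
 * Each transfer on a 64-bit DDR4 channel moves 8 bytes; mts is the data
 * rate in megatransfers per second (e.g. 2133 for DDR4-2133). */
double peak_bandwidth_gbs(unsigned channels, unsigned mts) {
    return channels * (double)mts * 8.0 / 1000.0;
}

/* Hypothetical helper: how many cores a bandwidth budget can keep busy if
 * each core of a bandwidth-bound solver needs per_core_gbs (an assumed
 * figure), capped at the number of cores on the chip. */
unsigned cores_fed(double bandwidth_gbs, double per_core_gbs, unsigned cores) {
    assert(per_core_gbs > 0.0);
    unsigned fed = (unsigned)(bandwidth_gbs / per_core_gbs);
    return fed < cores ? fed : cores;
}
```

With an assumed demand of 5 GB/s per core, DDR4-2133 (about 68.3 GB/s) keeps 13 cores busy, while DDR4-1866 (about 59.7 GB/s) keeps only 11. Real sustained bandwidth is lower than the theoretical peak, so this only illustrates the trend.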

85 Comments


LostAlone - Saturday, September 20, 2014

Given the difference in size between the two companies, it's not really all that surprising though. Intel are ten times AMD's size, and I have to imagine that Intel's chip R&D budget alone is bigger than the whole of AMD. And that is sad really, because I'm sure most of us were learning our computer science when AMD were setting the world on fire, so it's tough to see our young loves go off the rails. But Intel have the money to spend, and can pursue so many more potential avenues for improvement than AMD, and that's what makes the difference.

Kevin G - Monday, September 8, 2014

I'm actually surprised they released the 18-core chip for the EP line. In the Ivy Bridge generation, it was the 15-core EX die that was harvested for the 12-core models. I was expecting the same thing here with the 14-core models, though more to do with power binning than raw yields.

I guess with the recent TSX erratum, Intel is just dumping all of the existing EX dies into the EP socket. That is a good means of clearing inventory of a notably buggy chip. When Haswell-EX formally launches, it'll be a stepping with the TSX bug resolved.

SanX - Monday, September 8, 2014

You have teased us with the claim that the added FMA instructions double floating point performance. Wow! Is that still possible with FP operations that are already close to the limit of one per clock cycle? This was a good review of integer performance, but please team up with Ian to continue with the FP side.

JohanAnandtech - Monday, September 8, 2014

Ian is working on his workstation-oriented review of the latest Xeon.

Kevin G - Monday, September 8, 2014

FMA is commonplace in many RISC architectures. The reason we're only now seeing it on x86 is that until recently, the ISA only permitted two register operands per instruction, while an FMA needs three inputs.

Improvements in this area may be coming down the line even for legacy code. Intel's micro-op fusion has the potential to take an ordinary multiply and add and fuse them internally into one FMA operation. This type of optimization is something I'd like to see in a future architecture (Skylake?).
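SanX's question is really about throughput per cycle rather than the latency of a single operation. A back-of-the-envelope sketch, assuming the commonly cited configurations of one 256-bit add pipe plus one 256-bit multiply pipe per core on Ivy Bridge versus two 256-bit FMA pipes per core on Haswell, shows where the doubling comes from. The helper names are hypothetical, and the clock used is the nominal base clock; AVX-heavy code may run at lower frequencies.

```c
#include <assert.h>

/* Peak double-precision FLOPs per cycle for one core:
 * number of FP pipes * 64-bit lanes per vector * FLOPs per instruction.
 * An FMA instruction counts as two FLOPs (one multiply plus one add). */
unsigned dp_flops_per_cycle(unsigned fp_units, unsigned vector_bits,
                            unsigned flops_per_op) {
    assert(vector_bits % 64u == 0);
    return fp_units * (vector_bits / 64u) * flops_per_op;
}

/* Theoretical peak GFLOPS for a whole chip at a given clock in GHz. */
double peak_gflops(unsigned cores, double ghz, unsigned flops_per_cycle) {
    return cores * ghz * flops_per_cycle;
}
```

Ivy Bridge's separate add and multiply pipes give `dp_flops_per_cycle(2, 256, 1)` = 8, while Haswell's two FMA pipes give `dp_flops_per_cycle(2, 256, 2)` = 16. For the 14-core E5-2695 v3 at its 2.3 GHz base clock, that is roughly 515 GFLOPS. No individual operation gets faster; the doubling only materializes in code dominated by multiply-add pairs.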

valarauca - Monday, September 8, 2014

The Intel compiler suite, I believe, already converts

```c
x *= y;
x += z;
```

into an FMA operation when confronted with them.

Kevin G - Monday, September 8, 2014

That's with source that is going to be compiled. (And don't get me wrong, that's what a compiler should do!)

Micro-op fusion works on existing binaries, even years-old ones, so no recompile is necessary. However, micro-op fusion may not work in all situations, depending on the actual instruction stream. (Hypothetically, a multiply and an add may have to be adjacent in the instruction stream to be fused, but an ancient compiler could have slipped other instructions in between them to hide execution latencies, so the fusion would never happen in that binary.)

DIYEyal - Monday, September 8, 2014

Very interesting read.

And I think I found a typo on page 5 (power optimization): "It is well known that THE (not needed) Haswell HAS (is/has been) optimized for low idle power."

vLsL2VnDmWjoTByaVLxb - Monday, September 8, 2014

The colors or labeling in your HPC Power Consumption graph don't seem right.

JohanAnandtech - Monday, September 8, 2014

Fixed, thanks for pointing it out.