Apple's Cyclone Microarchitecture Detailed

by Anand Lal Shimpi on March 31, 2014 2:10 AM EST

The most challenging part of last year's iPhone 5s review was piecing together details about Apple's A7 without any internal Apple assistance. I had less than a week to turn the review around and limited access to tools (much less time to develop them on my own) to figure out what Apple had done to double CPU performance without scaling frequency. The end result was an (incorrect) assumption that Apple had simply evolved its first ARMv7 architecture (codename: Swift). Based on the limited information I had at the time I assumed Apple simply addressed some low hanging fruit (e.g. memory access latency) in building Cyclone, its first 64-bit ARMv8 core. By the time the iPad Air review rolled around, I had more knowledge of what was underneath the hood:

As far as I can tell, peak issue width of Cyclone is 6 instructions. That’s at least 2x the width of Swift and Krait, and at best more than 3x the width depending on instruction mix. Limitations on co-issuing FP and integer math have also been lifted as you can run up to four integer adds and two FP adds in parallel. You can also perform up to two loads or stores per clock.

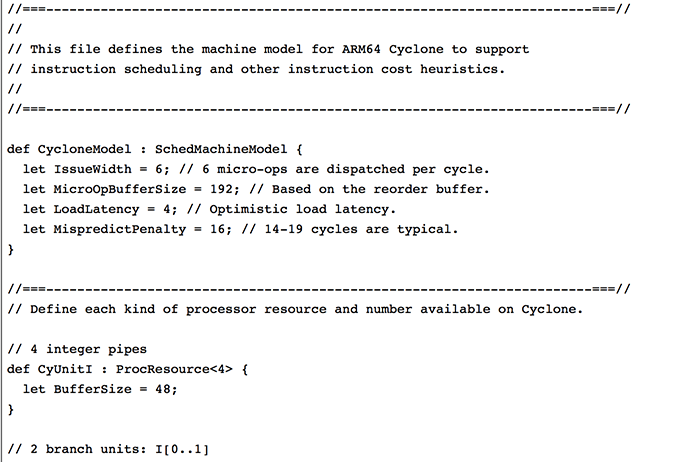

With Swift, I had the luxury of Apple committing LLVM changes that not only gave me the code name but also confirmed the size of the machine (3-wide OoO core, 2 ALUs, 1 load/store unit). With Cyclone however, Apple held off on any public commits. Figuring out the codename and its architecture required a lot of digging.

Last week, the same reader who pointed me at the Swift details let me know that Apple revealed Cyclone microarchitectural details in LLVM commits made a few days ago (thanks again R!). Although I empirically verified many of Cyclone's features in advance of the iPad Air review last year, today we have some more concrete information on what Apple's first 64-bit ARMv8 architecture looks like.

Note that everything below is based on Apple's LLVM commits (and confirmed by my own testing where possible).

| Apple Custom CPU Core Comparison | Apple A6 | Apple A7 |
|---|---|---|
| CPU Codename | Swift | Cyclone |
| ARM ISA | ARMv7-A (32-bit) | ARMv8-A (32/64-bit) |
| Issue Width | 3 micro-ops | 6 micro-ops |
| Reorder Buffer Size | 45 micro-ops | 192 micro-ops |
| Branch Mispredict Penalty | 14 cycles | 16 cycles (14 - 19) |
| Integer ALUs | 2 | 4 |
| Load/Store Units | 1 | 2 |
| Load Latency | 3 cycles | 4 cycles |
| Branch Units | 1 | 2 |
| Indirect Branch Units | 0 | 1 |
| FP/NEON ALUs | ? | 3 |
| L1 Cache | 32KB I$ + 32KB D$ | 64KB I$ + 64KB D$ |
| L2 Cache | 1MB | 1MB |
| L3 Cache | - | 4MB |

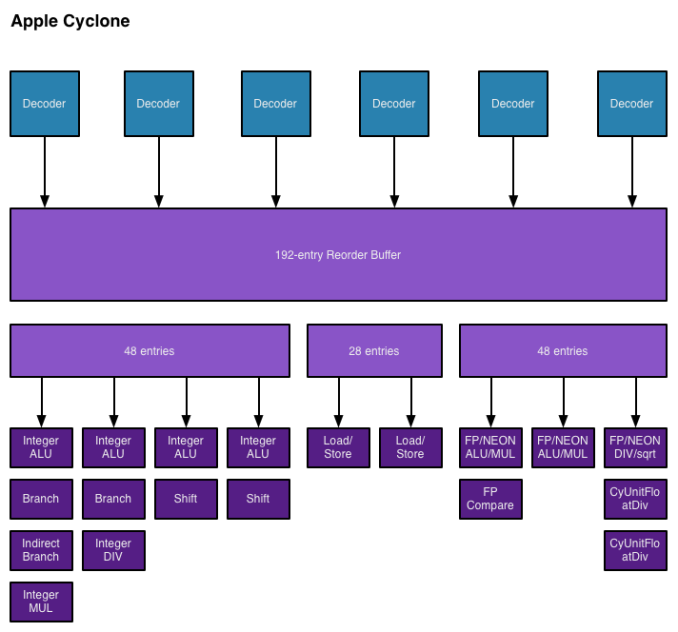

As I mentioned in the iPad Air review, Cyclone is a wide machine. It can decode, issue, execute and retire up to 6 instructions/micro-ops per clock. I verified this during my iPad Air review by executing four integer adds and two FP adds in parallel. The same test on Swift actually yields fewer than 3 concurrent operations, likely because of an inability to issue to all integer and FP pipes in parallel. Similar limits exist with Krait.

I also noted an increase in overall machine size in my initial tinkering with Cyclone. Apple's LLVM commits indicate a massive 192 entry reorder buffer (coincidentally the same size as Haswell's ROB). Mispredict penalty goes up slightly compared to Swift, but Apple does present a range of values (14 - 19 cycles). This also happens to be the same range as Sandy Bridge and later Intel Core architectures (including Haswell). Given how much larger Cyclone is, a doubling of L1 cache sizes makes a lot of sense.
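To put the reorder buffer and mispredict figures in perspective, here is a back-of-the-envelope sketch in Python. It is not taken from Apple's LLVM commits: the helper names are hypothetical, and the model assumes the core issues at its full width every cycle, which real code rarely does. It only divides and multiplies numbers from the comparison table above.

```python
# Figures from the comparison table above; the helper names are hypothetical.
CORES = {
    "Swift": {"issue_width": 3, "rob_entries": 45, "mispredict_cycles": 14},
    "Cyclone": {"issue_width": 6, "rob_entries": 192, "mispredict_cycles": 16},
}


def window_cycles(rob_entries, issue_width):
    """Cycles of peak-rate issue that the reorder buffer can hold in flight."""
    return rob_entries / issue_width


def slots_lost_per_mispredict(mispredict_cycles, issue_width):
    """Upper bound on issue slots wasted while the pipeline refills."""
    return mispredict_cycles * issue_width


if __name__ == "__main__":
    for name, core in CORES.items():
        window = window_cycles(core["rob_entries"], core["issue_width"])
        lost = slots_lost_per_mispredict(
            core["mispredict_cycles"], core["issue_width"]
        )
        print(f"{name}: ROB covers {window:g} cycles at peak issue, "
              f"up to {lost} issue slots lost per mispredict")
```

Under these assumptions Cyclone's 192 entries cover 32 cycles of peak-rate issue against 15 for Swift, while a mispredict can cost up to 96 issue slots against 42. That is one way to see why the wider machine needs both a larger window and good branch prediction.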

On the execution side Cyclone doubles the number of integer ALUs, load/store units and branch units. Cyclone also adds a unit for indirect branches and at least one more FP pipe. Cyclone can sustain three FP operations in parallel (including 3 FP/NEON adds). The third FP/NEON pipe is used for div and sqrt operations; the machine can only execute two FP/NEON muls in parallel.
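The unit counts above can be turned into a simple steady-state throughput bound. The Python sketch below is an illustration under stated assumptions, not Apple's scheduling model: it ignores data dependencies, decode and retire limits, branch and indirect-branch units, and the per-port buffers. The names `CYCLONE_CONSTRAINTS` and `peak_ops_per_cycle` are hypothetical. It treats FP adds and FP muls as sharing the three FP/NEON pipes, with muls further capped at two per cycle.

```python
from fractions import Fraction

CYCLONE_ISSUE_WIDTH = 6

# Each entry: (operation kinds sharing a resource, operations per cycle).
# Counts come from the article; the grouping is an assumption of this sketch.
CYCLONE_CONSTRAINTS = [
    ({"int_alu"}, 4),            # four integer ALUs
    ({"fp_add", "fp_mul"}, 3),   # three FP/NEON pipes in total
    ({"fp_mul"}, 2),             # only two of them can execute muls
    ({"load_store"}, 2),         # two load/store units
]


def peak_ops_per_cycle(mix, constraints, issue_width):
    """Upper bound on sustained ops/cycle for a repeating mix of independent ops.

    `mix` maps an operation kind to how many of that kind appear per iteration.
    """
    total = sum(mix.values())
    if total == 0:
        return Fraction(0)
    # Cycles per iteration is set by the most contended resource.
    cycles = Fraction(total, issue_width)
    for kinds, capacity in constraints:
        demand = sum(mix.get(kind, 0) for kind in kinds)
        cycles = max(cycles, Fraction(demand, capacity))
    return total / cycles


if __name__ == "__main__":
    mixes = {
        "4 int adds + 2 FP adds": {"int_alu": 4, "fp_add": 2},
        "8 int adds": {"int_alu": 8},
        "4 FP muls": {"fp_mul": 4},
    }
    for label, mix in mixes.items():
        bound = peak_ops_per_cycle(mix, CYCLONE_CONSTRAINTS, CYCLONE_ISSUE_WIDTH)
        print(f"{label}: at most {float(bound):g} ops/cycle")
```

With these inputs, the four-integer-add plus two-FP-add mix is the only one of the three that reaches the full six operations per cycle. Integer-only code is capped at four by the ALUs, and FP mul-only code at two.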

I also found references to buffer sizes for each unit, which I'm assuming are the number of micro-ops that feed each unit. I don't believe Cyclone has a unified scheduler ahead of all of its execution units and instead has statically partitioned buffers in front of each port. I've put all of this information into the crude diagram below:

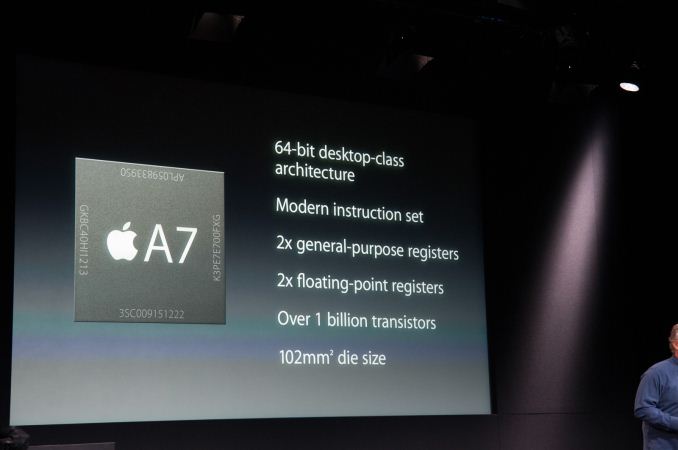

Unfortunately I don't have enough data on Swift to really produce a decent comparison image. With six decoders and nine ports to execution units, Cyclone is big. As I mentioned before, it's bigger than anything else that goes in a phone. Apple didn't build a Krait/Silvermont competitor; it built something much closer to Intel's big cores. At the launch of the iPhone 5s, Apple referred to the A7 as being "desktop class" - it turns out that wasn't an exaggeration.

Cyclone is a bold move by Apple, but not one that is without its challenges. I still find that there are almost no applications on iOS that really take advantage of the CPU power underneath the hood. More than anything Apple needs first party software that really demonstrates what's possible. The challenge is that at full tilt a pair of Cyclone cores can consume quite a bit of power. So for now, Cyclone's performance is really used to exploit race to sleep and get the device into a low power state as quickly as possible. The other problem I see is that although Cyclone is incredibly forward looking, it launched in devices with only 1GB of RAM. It's very likely that you'll run into memory limits before you hit CPU performance limits if you plan on keeping your device for a long time.

It wasn't until I wrote this piece that Apple's codenames started to make sense. Swift was quick, but Cyclone really does stir everything up. The earlier than expected introduction of a consumer 64-bit ARMv8 SoC caught pretty much everyone off guard (e.g. Qualcomm's shift to vanilla ARM cores for more of its product stack).

The real question is where does Apple go from here? By now we know to expect an "A8" branded Apple SoC in the iPhone 6 and iPad Air successors later this year. There's little benefit in going substantially wider than Cyclone, but there's still a ton of room to improve performance. One obvious example would be through frequency scaling. Cyclone is clocked very conservatively (1.3GHz in the 5s/iPad mini with Retina Display and 1.4GHz in the iPad Air). Assuming Apple moves to a 20nm process later this year, it should be possible to get some performance by increasing clock speed without a power penalty. I suspect Apple has more tricks up its sleeve than that however. Swift and Cyclone were two tocks in a row by Intel's definition; a third in 3 years would be unusual but not impossible (Intel sort of committed to doing the same with Saltwell/Silvermont/Airmont in 2012 - 2014).

Looking at Cyclone makes one thing very clear: the rest of the players in the ultra mobile CPU space didn't aim high enough. I wonder what happens next round.

182 Comments

homebrewer - Tuesday, April 1, 2014 - link

Yeah, and by that logic, it would be even more ridiculous to ever use a mouse, a GUI, or more than 640k memory...

homebrewer - Tuesday, April 1, 2014 - link

I remember when the 1" HD came out. Everyone said it was 'neat' but can't hold enough data to be useful. Apple built the iPod with it.

JerryL - Tuesday, April 1, 2014 - link

There's an alternative direction Apple may be going: What's the hardware in those massive data centers it's building? No one really knows - only Apple's own software runs in there. They're certainly not buying off-the-shelf servers to populate their data centers - none of the big guys are any more.

So imagine a clean-sheet design for an Apple data center server. I see at least three technologies that Apple's already shipping that I'd look to apply: An A7 variant or successor for the CPU's (lower power, less cooling); fast on-board SSD as used in all recent laptops; maybe Thunderbolt for connection to disks.

Apple has a long history of using technologies in unexpected places. Laptop technologies were the basis of the Mac Mini, then generations of iMac desktops; some are figuring in the most recent Mac Pro. The rest of the industry initially missed this possibility - they viewed desktops and laptops as entirely separate product segments, each with their own standards (standard big box - mainly full of air for years now - for desktops; 3.5" disks in desktops, 2.5" in laptops; internal expansion of memory and multiple disks for desktops, limited expansion for laptops; different lines of CPU chips). By now everyone realizes that many of those distinctions stopped making sense a long time ago. (That's not to say there are *no* differences - you can afford to use much more power in a desktop than a laptop, so you can put in a more power-hungry CPU. What's new is that you differentiate in your designs when it actually matters, not to match some arbitrary 25-year-old standard.)

Maybe the next wall to be breached is that separating "servers" from other machines.

-- Jerry

OreoCookie - Tuesday, April 1, 2014 - link

That's a very good point: Apple has been preaching to own as much of the stack as possible, at the very least the crucial parts. Since cloud services are becoming increasingly important, Apple should go the way of Facebook and Google, and make its own servers. There are some efforts in this direction (Caldexa, HP's project Moonshot), so maybe this is what Mansfield is up to these days. Certainly, Apple's focus on desktop class performance would enable it to become central to Apple's server architecture.

One of the big problems I have is persistent, distributed storage. All of the solutions seem rather pedestrian and only partial solutions to a bigger problem. Maybe this will be one area of innovation soon.

easp - Tuesday, April 1, 2014 - link

I think you are putting the cart before the horse. You use components designed for one segment in another because they offer better economics, or capabilities.

So, Apple used mobile parts in desktops because they were more compact and power efficient.

Similarly, Google leveraged high-volume commodity parts to fill their datacenters because they were significantly cheaper, and also had a better balance between I/O, memory bandwidth, and CPU.

People are now looking to mobile market components for the datacenter both because of the economies of scale in mobile, and for power consumption.

Apple may well end up using Cyclone in the datacenter, but if they do, it won't be because they designed it with a focus on the datacenter, it will be because decisions that make sense for mobile also make sense for the datacenter. Performance per watt is an important consideration for devices designed to fit in a pocket, or to fill a datacenter. In Apple's case, success at the former is what pays for the latter.

shompa - Friday, April 4, 2014 - link

@JerryL Web/Cloud is one of Apple's weaknesses.

Back in .mac/MobileMe days Apple used Sun servers/Solaris and Oracle stuff. The first huge data center for iCloud used HP and NetApp stuff + Windows Azure (= why it will never work).

It's unlikely that Apple suddenly would start to do its own ARM servers to do stuff. I really wish that Apple started to use its own servers/software for key components like iCloud/Siri/iTunes, but Apple doesn't see that as their key business.

---

We will see a move to ARM architecture in the future. Per MHz, the A7 is faster than Intel's i7 ULV processors. Many IT people don't consider that just 15 years ago RISC had 90% of the server market and a huge part of the workstation market. We had PPC, Alpha, IBM Power, X86, PA-RISC and a bunch more CPUs competing. This led to "Moore's law" where performance doubled every 18 months.

X86 became fast enough, was cheap and had Windows. RISC was always faster, but Sun and other vendors lived in a world where they wanted 4000 dollars for a CPU. With AMD's X86-64 even the server market started to move to X86.

In 2006 Intel released the Core architecture that killed competition between Intel and AMD. Since RISC vendors were already out of business, Intel had no competition. Moore's law died. In 2006 the fastest desktop X86 was a quad core 2.66GHz Core CPU. Today the fastest desktop CPU is a 3.5GHz quad core Intel. Less than 100% increase in 8 years. Even if we count desktop six core Xeons it's about a 150% increase.

ARM that have competition even between different ARM vendors 2007: 416Mhz to Quad core/octa core 2.5ghz in 2014. Just Apple have had 4000% increase in performance in the chips they use.

Intel 150%. ARM/Apple 4000%

Intel believe they don't have to release faster stuff since there is no other X86/pure Windows CPU. Instead of making faster stuff they have optimized profits. Now Intel does the same thing as RISC vendors did: charge 4400 dollar for a 20 core Xeon.

Look at graphic chips where AMD and Nvidia compete. They still follow "Moore's law". People complain that graphic chips cost 700 dollar. Its cheap!

Intels 20 core Xeon is less than 500mm2. AMD/Nvidias highend GPUs are around 500mm2. Since a wafer cost 7500 dollars/28nm it cost AMD/Nvidia about 400 dollars to produce a GPU at 500mm2. The point: If Intel had competition they would be forced to sell 20 core Xeons at 500 dollars. Then Moore's law would be alive.

This arrogance from Intel will kill X86. And it's not one day too early. Every IT professional who has used real 64bit chips with a real 64bit OS and no BIOS knows what I'm talking about. The sad thing is that I'm talking about stuff that real computers have had for almost 25 years. (1990 64bit. OpenBoot/EFI is at least 20 years old. Pure 64bit OS at least 15 years old.) X86 = 64bit extensions = why 64bit code on Windows is SLOWER than 32bit code. About 3% slower. No real 64bit chip has this phenomenon. Not even Apple's A7. Benchmarks show that code compiled in 32bit/64bit gains 20-30% performance by going 64bit. I really hate the Windows myth that 64bit is just more memory. ARM for example has had 38bit addressing for years. Memory is not the issue. It's performance.

I think it's funny that it was John Sculley's Apple that was one of the founding members of what later became ARM. Apple needed chips for their Newton. 15-20 years later these ARM chips generate the most money for Apple and save the IT industry.

BTW. Optimized will always be better. Apple makes its own chips since they can optimize it for its software. Look at A5. Apple used 30% of the die area for DSPs and stuff they wanted incl the noise cancellation for Siri. A6 had the "visual processor". A7 had M7. Imagine a desktop CPU where Apple can put anything they want.

Look at MacPro. Since Apple control the hardware and use a real OS they can dedicate a graphic card for compute. A Firepro have 35 times the calculation power than the Xeons in MacPro. This is what's going to happen with Apples desktop ARMs. Apple have 15 years experience using graphic for acceleration and SIMD/Altivec. Stuff that Windows still cant do since MSFT don't control the hardware.

psyq321 - Wednesday, April 9, 2014 - link

What is it that can be done on a "real OS" regarding compute that I cannot do on a "non real" OS such as Windows or Linux?

I have CUDA, I have OpenCL and on both OS X and Linux/Windows I have to design and optimize my algorithms for GPU compute, otherwise they would suck.

There is no magical pixie dust in OS X that would magically make the GPU and development environment more "compute friendly" than it is on other platforms. If somebody claims otherwise that is just load of marketing nonsense. If your algorithm does not scale or sucks at memory accesses on the GPU it is going to suck on both OS X and Windows/Linux. If not, it is going to go well on both.

And, guess what, if I want I can get an expandable system where I can stack 4 or more GPUs, where on the Mac Pro I am stuck with 2 of them tops. Not to mention the CPU side, where there are readily available Ivy Bridge EP 4S systems that can fit in the workstation form factor, while Mac Pro cannot do more than a single Xeon 2697 v2.

As for NVIDIA/AMD competing vs Intel Xeons, sure - look at NVIDIA's and AMD's "enterprise" GPUs, all of a sudden we are talking about mid-high $$$ figures for a single card, just like Intel's high-end Xeons.

This is because enterprise market is willing to pay high dollar for a solution, not because there is no competition. Server hardware for big data / medical / research was always costing thousands of $$$ or more per piece, the only difference is that every year you get more for your $3/$5/$10K+.

cornchip - Tuesday, April 1, 2014 - link

And will they skip A13?

Systems Analyst - Wednesday, April 2, 2014 - link

First, it seems to me that Apple are at least one year ahead of Qualcomm, and Qualcomm are a year or two ahead of Intel, in mobile computing. It all seems to be about the level of integration achieved. Intel are having to use TSMC to deliver SOFIA. There will be a delay before Intel can achieve this level of integration using their own wafer fabs.

Secondly, it is not all about the CPU. ARM have stated that 2+4 big/little cores makes sense in mobile. This is because most of the processing power is in the GPU. According to NVIDIA, this general idea scales all the way up to HPC; the GPUs provide the real processing power. Apple's A7 chip may well have 4 A53 cores, and use big/little; who, other than Apple, would know?

Thirdly, there is no best CPU design; ARM emphasise diversity; they are not trying to do Apple's job for them. Every different CPU design is a different design point in the design space.

Fourthly, the levels of integration now possible on SoCs, especially at the server level, are so great, and potentially so diverse, that there is no best SoC; they are just different points in the design space.

Fifthly, the desktop market is contracting; this even applies to Apple's Macs. No company can base its future on a contracting market.

PaulInOakland - Wednesday, April 9, 2014 - link

Anand, nice work, ex CPU/IO system guy here so it's nice to see this sort of digging. Quick question. With 6 micro-op issuances (or is that decode), what about resource interlocks? Register collisions? How smart is the compiler?