The AMD Radeon R9 290X Review

by Ryan Smith on October 24, 2013 12:01 AM EST

Posted in: GPUs, AMD, Radeon, Hawaii, Radeon 200

A Note On Crossfire, 4K Compatibility, Power, & The Test

Before we dive into our formal testing, there are a few brief testing notes that bear mentioning.

First and foremost, on top of our normal testing we did some additional Crossfire compatibility testing to see if AMD’s new XDMA Crossfire implementation ran into any artifacting or other issues that we didn’t experience elsewhere. The good news is that outside of the typical scenarios where games simply don’t scale with AFR (something that affects SLI and CF equally), we didn’t see any artifacts in the games themselves. The closest we came to a problem was with the intro videos for Total War: Rome 2, which show black horizontal lines because the cards try to AFR render the video at a higher framerate than it plays at. Once in-game, Rome was relatively fine; “relatively” because it’s one of the games in our suite that doesn’t see any performance benefit from AFR.

Unfortunately AMD’s drivers for 290X are a bit raw when it comes to Crossfire. Of note, when running at a 4K resolution, we had a few instances of loading a game triggering an immediate system reboot. Now we’ve had crashes before, but nothing quite like this. After reporting it to AMD, AMD tells us that they’ve been able to reproduce the issue and have fixed it for the 290X launch drivers, which will be newer than the press drivers we used. Once those drivers are released we’ll be checking to confirm, but we have no reason to doubt AMD at this time.

Speaking of 4K, due to the two-controller nature of the PQ321 monitor we use, there are some teething issues related to using 4K right now. Most games are fine at 4K; however, we have found games that both NVIDIA and AMD have trouble with at one point or another. On the NVIDIA side Metro will occasionally lock up after switching resolutions, and on the AMD side GRID 2 will immediately crash if using the two-controller (4K@60Hz) setup. In the latter case, dropping down to a single controller (4K@30Hz) satisfies GRID while still allowing us to test at 4K resolutions, and with V-sync off it doesn’t have a performance impact versus 60Hz, but it is something AMD and Codemasters will need to fix.

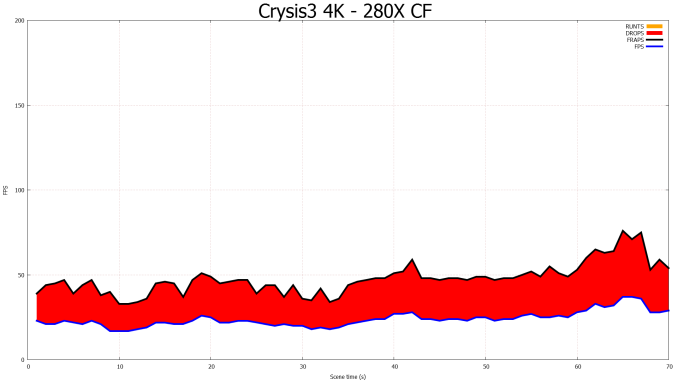

Furthermore we also wanted to offer a quick update on the state of Crossfire on AMD’s existing bridge based (non-XDMA) cards. The launch drivers for the 290X do not contain any further Crossfire improvements for bridge based cards, which means Eyefinity Crossfire frame pacing is still broken for all APIs. Of particular note for our testing, the 280X Crossfire setup ends up in a particularly nasty failure mode, simply dropping every other frame. It’s being rendered, as evidenced by the consumption of the Present call, however as our FCAT testing shows it’s apparently not making it to the master card. This has the humorous outcome of making the frame times rather smooth, but it makes Crossfire all but worthless as the additional frames are never displayed. Hopefully AMD can put a fork in the matter once and for all next month.
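To make the dropped-frame failure mode concrete, here is a minimal Python sketch. It is not FCAT and does not use our captured data: the frame intervals are made-up numbers, and the helper names (`present_times`, `displayed_intervals`, `summarize`) are hypothetical. It only illustrates how discarding every other frame can leave the displayed frame times perfectly even while the delivered frame rate falls to roughly half of what the application believes it is rendering.

```python
from statistics import mean, pstdev


def present_times(intervals_ms):
    """Cumulative timestamps (ms) at which each rendered frame is presented."""
    times, t = [], 0.0
    for dt in intervals_ms:
        t += dt
        times.append(t)
    return times


def displayed_intervals(times_ms, keep_every=1):
    """Intervals (ms) between the frames that actually reach the display."""
    shown = times_ms[::keep_every]
    return [b - a for a, b in zip(shown, shown[1:])]


def summarize(intervals_ms):
    """Average frame rate and frame-time spread for a list of intervals."""
    return {
        "fps": 1000.0 / mean(intervals_ms),
        "jitter_ms": pstdev(intervals_ms),
    }


# Hypothetical AFR trace: frames alternate between short and long gaps.
rendered = [5.0, 28.0] * 30
times = present_times(rendered)

# Every rendered frame is shown: high frame rate, uneven frame times.
all_frames = displayed_intervals(times, keep_every=1)

# Every other frame never reaches the display: even frame times,
# but only about half the frame rate.
half_dropped = displayed_intervals(times, keep_every=2)

print("all frames  :", summarize(all_frames))
print("half dropped:", summarize(half_dropped))
```

In this toy trace the half-dropped case reports zero frame-time spread, which is why a smooth-looking frame time plot on its own says nothing about whether the second card is contributing anything.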

A Note On Testing Methodologies & Sustained Performance

Moving on to the matter of our testing methodology, we want to make note of some changes since our 280X review earlier this month. After having initially settled on Metro: Last Light for our gaming power/temp/noise benchmark, in a spot of poor planning on our part we have discovered that Metro scales poorly on SLI/CF setups, and as a result doesn't push those setups very hard. As such we have switched from Metro to Crysis 3 for our power/temp/noise benchmarking, as Crysis 3 was our second choice and is about as consistent as Metro while scaling very nicely across both AMD and NVIDIA multi-GPU setups. For single-GPU cards the measured impact on noise is minor, as the workloads are similar; however, power consumption will be a bit different due to the difference in CPU workloads between the benchmarks.

We also want to make quick note of our testing methodologies and how they are or are not impacted by temperature based throttling. For years we have done all of our GPU benchmarking by looping gaming benchmarks multiple times, both to combat the inherent run-to-run variation that we see in benchmarking, and more recently to serve as a warm-up activity for cards with temperature based throttling. While these methods have proved sufficient for the Radeon 7000 series, the GeForce 600 series, and even the GeForce 700 series, due to the laws of physics AMD's 95C throttle point takes longer to get to than NVIDIA's 80C throttle point. As a result it's harder to bring the 290X up to its sustained temperatures before the end of our benchmark runs. It will inevitably hit 95C in quiet mode, but not every benchmark runs long enough to reach that before the 3rd or 4th loop.

For the sake of consistency with past results we have not altered our benchmark methodology. However, we wanted to be sure to point out this fact before getting to benchmarking, so that there’s no confusion over how we’re handling the matter. We believe our looping benchmarks run long enough to generally reach sustained performance numbers, but in all likelihood some of our numbers on the shortest benchmarks will skew low. For the next iteration of our benchmark suite we’re most likely going to need to institute a pre-heating phase for all cards to counter AMD’s 95C throttle point.
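As a rough illustration of what a pre-heating phase would change, the Python sketch below runs a looped benchmark with and without discarded warm-up runs. Everything in it is an assumption made for the example: `FakeCard` is a toy model, the 45C starting temperature, 15C rise per run, and the 60/54 fps scores are invented, and `run_looped` is a hypothetical helper rather than our actual test harness. The only thing taken from the discussion above is the 95C throttle point.

```python
from statistics import mean


class FakeCard:
    """Toy card: warms up a fixed amount per run and scores lower once throttling."""

    def __init__(self, throttle_c=95.0, start_c=45.0, rise_per_run_c=15.0):
        self.throttle_c = throttle_c          # temperature at which clocks drop
        self.temp_c = start_c                 # assumed idle temperature
        self.rise_per_run_c = rise_per_run_c  # assumed heating per benchmark run

    def run(self):
        """Run one benchmark pass and return its (made-up) score in fps."""
        fps = 60.0 if self.temp_c < self.throttle_c else 54.0
        # Temperature is held at the throttle point once it is reached.
        self.temp_c = min(self.throttle_c, self.temp_c + self.rise_per_run_c)
        return fps


def run_looped(benchmark, loops=4, preheat_runs=0):
    """Run `benchmark` `loops` times after `preheat_runs` discarded warm-up runs."""
    for _ in range(preheat_runs):
        benchmark()  # warm-up pass, result intentionally thrown away
    scores = [benchmark() for _ in range(loops)]
    return mean(scores), scores


# Cold start: four short loops finish before the toy card reaches 95C.
cold_mean, cold_scores = run_looped(FakeCard().run, loops=4)

# Pre-heated: four discarded runs bring the card to 95C before measuring.
hot_mean, hot_scores = run_looped(FakeCard().run, loops=4, preheat_runs=4)

print("cold start:", cold_mean, cold_scores)
print("pre-heated:", hot_mean, hot_scores)
```

In this toy setup the cold-start loops never observe the throttled state at all, while the pre-heated loops only observe it. A real harness would more likely warm up for a fixed time or until a temperature reading stabilizes rather than for a fixed number of runs.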

The Drivers

The press drivers for the 290X are Catalyst 13.11 Beta v5 (The “v” is AMD’s nomenclature), which identify themselves as being from the driver branch 13.250. These are technically still in the 200 branch of AMD’s drivers, but this is the first appearance of 250, as Catalyst 13.11 Beta v1 was still 13.200. AMD doesn’t offer release notes on these beta drivers, but we found that they offered distinct improvements in GRID 2 and to a lesser extent Battlefield 3, and have updated our earlier results accordingly.

Meanwhile for NVIDIA we’re using the recently released “game ready” 331.58 WHQL drivers.

| Component | Details |
| --- | --- |
| CPU: | Intel Core i7-4960X @ 4.2GHz |
| Motherboard: | ASRock Fatal1ty X79 Professional |
| Power Supply: | Corsair AX1200i |
| Hard Disk: | Samsung SSD 840 EVO (750GB) |
| Memory: | G.Skill RipjawZ DDR3-1866 4 x 8GB (9-10-9-26) |
| Case: | NZXT Phantom 630 Windowed Edition |
| Monitor: | Asus PQ321 |
| Video Cards: | AMD Radeon R9 290X; XFX Radeon R9 280X Double Dissipation; AMD Radeon HD 7970 GHz Edition; AMD Radeon HD 7970; AMD Radeon HD 6970; AMD Radeon HD 5870; NVIDIA GeForce GTX Titan; NVIDIA GeForce GTX 780; NVIDIA GeForce GTX 770 |
| Video Drivers: | NVIDIA Release 331.58; AMD Catalyst 13.11 Beta v1; AMD Catalyst 13.11 Beta v5 |
| OS: | Windows 8.1 Pro |

396 Comments


46andtool - Thursday, October 24, 2013 - link

I don't know where you're getting your information but you're obviously nvidia biased because it's all wrong. AMD is known for using poor reference coolers; once manufacturers like Sapphire and HIS roll out their cards in a couple weeks I'm sure the noise and heat won't be a problem. And the 780ti is poised to be between a 780gtx and a titan, it will not be faster than a 290x, sorry. We already have the 780ti's specs... What Nvidia needs to focus on is dropping its insane pricing.

SolMiester - Monday, October 28, 2013 - link

Sorry bud, but the Ti will be much faster than Titan, otherwise there is no point, hell even the 780OC is enough to edge the Titan. Why are people going on about Titan, it's a once in a blue moon product to fill a void that AMD left open with CUDA dev for prosumers... Full monty with perhaps 7ghz memory, wahey!

Samus - Friday, October 25, 2013 - link

What in the world makes you think the 780Ti will be faster than Titan? That's ridiculous. What's next, a statement that the 760Ti will be faster than the 770?

TheJian - Friday, October 25, 2013 - link

http://www.techradar.com/us/news/computing-compone...

Another shader and more mhz.

http://news.softpedia.com/news/NVIDIA-GeForce-GTX-...

If the specs are true quite a few sites think it will be faster than titan.

http://hexus.net/tech/news/graphics/61445-nvidia-g...

Check the table. 780TI would win in gflops if leak is true. The extra 80mhz+1SMX mean it should either tie or barely beat it in nearly everything.

Even a tie at $650 would be quite awesome at less watts/heat/noise possibly. Of course it will be beaten a week later by a fully unlocked titan ultra or more mhz, or mhz+fully unlocked. NV won't just drop titan. They will make a better one easily. It's not like NV just sat on their butts for the last 8 months. It's comic anyone thinks AMD has won. OK, for a few weeks tops (and not really even now other than price looking at 1080p and the games I mentioned previously).

ShieTar - Thursday, October 24, 2013 - link

It doesn't cost less than a GTX780, it only has a lower MSRP. The actual price for which you can buy a GTX780 is already below 549$ today, so as usual you pay the same price for the same performance with both companies.

And testing 4K gaming is important right now, but it should be another 3-5 years before 4K performance actually impacts sales figures in any relevant way.

And about Titan? Keep in mind that it is 8 months old, still has one SMX disabled (unlike the Quadro K6000), and still uses less power in games than the 290X. So I wouldn't be surprised to see a Titan+ come out soon, with 15 SMX and higher base clocks, and as Ryan puts it in this article "building a refined GPU against a much more mature 28nm process". That should be enough to gain 10%-15% performance in anything restricted by computing power, thus showing a much more clear lead over the 290X.

The only games that the 290X will clearly win are those that are restricted by memory bandwidth. But nVidia have proven with the 770 that they can operate memory at 7GHz as well, so they can increase Titan's bandwidth by 16% through clocks alone.

Don't get me wrong, the 290X looks like a very nice card, with a very good price to it. I just don't think nVidia has any reason to worry, this is just competition as usual, AMD have made their move, nVidia will follow.

Drumsticks - Thursday, October 24, 2013 - link

http://www.newegg.com/Product/ProductList.aspx?Sub...

Searched on Newegg, sorted by lowest price, lowest one was surprise! $650. I don't think Newegg is over $100 off in their pricing with competitors.

46andtool - Thursday, October 24, 2013 - link

http://www.newegg.com/Product/Product.aspx?Item=N8...

you're clearly horrible at searching

TheJian - Friday, October 25, 2013 - link

$580 isn't $550 though right? And out of stock. I wonder how many of these they can actually make seeing how hot it is already in every review pegged 94c. Nobody was able to OC it past 1125. They're clearly pushing this thing a lot already.

ShieTar - Friday, October 25, 2013 - link

Well, color me surprised. I admittedly didn't check the US market, because for more than a decade now, electronics used to be sold in the Euro-Region with a price conversion assumption of 1€=1$, so everything was about 35% more expensive over here (but including 19% VAT of course).

So for this discussion I used our German comparison engines. Both the GTX780 and the R290X are sold for the same price of just over 500€ over here, which is basically 560$+19%VAT. I figured the same price policies would apply in the US, it basically always does.

Well, as international shipping is rarely more than 15$, it would seem like your cheapest way to get a 780 right now is to actually import it from Germany. It's always been the other way around with electronics, interesting to see it the other way around for once.

46andtool - Thursday, October 24, 2013 - link

the price of a 780gtx is not below $649 unless you are talking about a refurbished or open box card.