The AMD Radeon R9 290X Review

by Ryan Smith on October 24, 2013 12:01 AM EST - Posted in GPUs, AMD, Radeon, Hawaii, Radeon 200

A Note On Crossfire, 4K Compatibility, Power, & The Test

Before we dive into our formal testing, there are a few brief testing notes that bear mentioning.

First and foremost, on top of our normal testing we did some additional Crossfire compatibility testing to see whether AMD’s new XDMA Crossfire implementation ran into any artifacting or other issues that we didn’t experience elsewhere. The good news is that, outside of the typical scenarios where games simply don’t scale with AFR (something that affects SLI and CF equally), we didn’t see any artifacts in the games themselves. The closest we came to a problem was with the intro videos for Total War: Rome 2, which show black horizontal lines because the cards try to AFR render the video at a higher framerate than it plays at. Once in-game, Rome was relatively fine; “relatively” because it’s one of the games in our suite that doesn’t see any performance benefit from AFR.

Unfortunately AMD’s drivers for the 290X are a bit raw when it comes to Crossfire. Of note, when running at 4K, there were a few instances where loading a game triggered an immediate system reboot. We’ve had crashes before, but nothing quite like this. After we reported the issue, AMD told us that they were able to reproduce it and have fixed it in the 290X launch drivers, which will be newer than the press drivers we used. Once those drivers are released we’ll be checking to confirm the fix, but we have no reason to doubt AMD at this time.

Speaking of 4K, due to the two-controller nature of the PQ321 monitor we use, there are some teething issues with 4K right now. Most games are fine at 4K, but we have found games that both NVIDIA and AMD have trouble with at one point or another. On the NVIDIA side Metro will occasionally lock up after switching resolutions, and on the AMD side GRID 2 will immediately crash when using the two-controller (4K@60Hz) setup. In the case of the latter, dropping down to a single controller (4K@30Hz) satisfies GRID while still allowing us to test at 4K, and with V-sync off it has no performance impact versus 60Hz, but it is something AMD and Codemasters will need to fix.

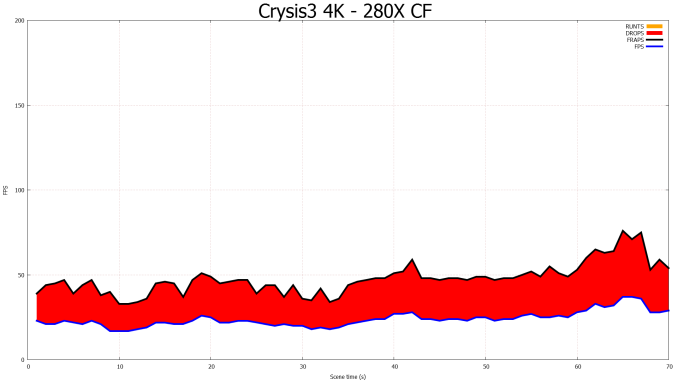

We also wanted to offer a quick update on the state of Crossfire on AMD’s existing bridge-based (non-XDMA) cards. The launch drivers for the 290X do not contain any further Crossfire improvements for bridge-based cards, which means Eyefinity Crossfire frame pacing is still broken for all APIs. Of particular note for our testing, the 280X Crossfire setup ends up in an especially nasty failure mode, simply dropping every other frame. Those frames are being rendered, as evidenced by the consumption of the Present call, but as our FCAT testing shows they are apparently not making it to the master card. This has the humorous outcome of making the frame times rather smooth, but it makes Crossfire all but worthless, as the additional frames are never displayed. Hopefully AMD can put a fork in the matter once and for all next month.
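To illustrate why a setup that drops every other frame can look smooth on a frame-time graph while delivering only half the frames, here is a minimal Python sketch. It assumes we already have, for each frame, the timestamp at which its Present call completed and a flag saying whether the frame actually reached the display (roughly the kind of information FCAT's colored overlay is used to recover). The function names and the synthetic 100 fps data are hypothetical and are not part of our FCAT tooling.

```python
from statistics import mean, pstdev

def frame_stats(times_ms):
    """Return (average FPS, frame-time standard deviation in ms) for a list of timestamps."""
    intervals = [b - a for a, b in zip(times_ms, times_ms[1:])]
    return 1000.0 / mean(intervals), pstdev(intervals)

def rendered_vs_displayed(present_ms, displayed):
    """Compare what the game rendered (every Present) with what reached the screen.

    present_ms: hypothetical Present-completion timestamps in milliseconds.
    displayed:  parallel list of booleans, True if that frame was actually shown.
    """
    rendered = frame_stats(present_ms)
    shown = [t for t, ok in zip(present_ms, displayed) if ok]
    return rendered, frame_stats(shown)

# Synthetic run: a frame is presented every 10 ms, but only every other one is displayed.
present_ms = [i * 10.0 for i in range(101)]
displayed = [i % 2 == 0 for i in range(101)]

(r_fps, r_sd), (d_fps, d_sd) = rendered_vs_displayed(present_ms, displayed)
print(f"rendered: {r_fps:.0f} fps, displayed: {d_fps:.0f} fps, display jitter: {d_sd:.1f} ms")
# prints "rendered: 100 fps, displayed: 50 fps, display jitter: 0.0 ms"
```

The displayed frames arrive at perfectly even 20 ms intervals, so the frame times look smooth, yet the on-screen framerate is half of what the Present counter suggests.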

A Note On Testing Methodologies & Sustained Performance

Moving on to the matter of our testing methodology, we want to note some changes since our 280X review earlier this month. After initially settling on Metro: Last Light for our gaming power/temp/noise benchmark, we discovered, in a spot of poor planning on our part, that Metro scales poorly on SLI/CF setups and as a result doesn't push those setups very hard. As such we have switched from Metro to Crysis 3 for our power/temp/noise benchmarking, as Crysis 3 was our second choice and is about as consistent as Metro while scaling very nicely across both AMD and NVIDIA multi-GPU setups. For single-GPU cards the impact on noise is minimal, as the workloads are similar; however, power consumption will be a bit different due to the difference in CPU workloads between the two benchmarks.

We also want to make a quick note of our testing methodologies and how they are or are not impacted by temperature-based throttling. For years we have done all of our GPU benchmarking by looping gaming benchmarks multiple times, both to combat the inherent run-to-run variation that we see in benchmarking and, more recently, to serve as a warm-up for cards with temperature-based throttling. While these methods have proved sufficient for the Radeon 7000 series, the GeForce 600 series, and even the GeForce 700 series, AMD's 95C throttle point simply takes longer to reach than NVIDIA's 80C throttle point, due to the laws of physics. As a result it's harder to bring the 290X up to its sustained temperatures before the end of our benchmark runs. It will inevitably hit 95C in quiet mode, but not every benchmark runs long enough to reach that before the 3rd or 4th loop.

For the sake of consistency with past results we have not altered our benchmark methodology. However, we wanted to point out this fact before getting to the benchmarks, so that there’s no confusion over how we’re handling the matter. We believe our looping benchmarks generally run long enough to reach sustained performance numbers, but in all likelihood some of our numbers on the shortest benchmarks will skew high, since the card has not yet reached its throttle point. For the next iteration of our benchmark suite we’re most likely going to need to institute a pre-heating phase for all cards to counter AMD’s 95C throttle point.
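A pre-heating phase like the one described above could be as simple as repeating a benchmark loop until consecutive results stop changing. The Python sketch below assumes a hypothetical `run_benchmark` callable that returns the average framerate of one loop, plus an arbitrary 1% convergence tolerance and a 10-loop cap; it illustrates the idea and is not our actual test harness.

```python
from typing import Callable, List

def run_until_sustained(run_benchmark: Callable[[], float],
                        tolerance: float = 0.01,
                        max_loops: int = 10) -> List[float]:
    """Repeat a benchmark loop until two consecutive results differ by less than
    `tolerance` (as a fraction of the previous result), or until `max_loops` runs.

    The earlier loops act as a pre-heating phase; the last result is treated as
    the sustained figure. `run_benchmark` is a hypothetical callable.
    """
    results = [run_benchmark()]
    for _ in range(max_loops - 1):
        results.append(run_benchmark())
        prev, last = results[-2], results[-1]
        if abs(last - prev) / prev < tolerance:
            break
    return results

# Hypothetical card: starts at 60 fps and loses clock speed each loop as it heats up,
# settling around 52 fps once it reaches its throttle temperature.
simulated = iter([60.0, 57.0, 54.5, 52.4, 52.1, 52.0, 52.0])
results = run_until_sustained(lambda: next(simulated))

print(f"loops run: {len(results)}, sustained: {results[-1]:.1f} fps, "
      f"first loop was {results[0] / results[-1] - 1:.0%} faster")
# prints "loops run: 5, sustained: 52.1 fps, first loop was 15% faster"
```

A fixed-tolerance check like this adapts to cards that heat up at different rates, which is the problem a fixed loop count runs into when one vendor throttles at 80C and the other at 95C.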

The Drivers

The press drivers for the 290X are Catalyst 13.11 Beta v5 (the “v” is AMD’s nomenclature), which identify themselves as being from driver branch 13.250. These are technically still in the 200 branch of AMD’s drivers, but this is the first appearance of 250, as Catalyst 13.11 Beta v1 was still 13.200. AMD doesn’t offer release notes for these beta drivers, but we found that they offered distinct improvements in GRID 2 and, to a lesser extent, Battlefield 3, and we have updated our earlier results accordingly.

Meanwhile for NVIDIA we’re using the recently released “game ready” 331.58 WHQL drivers.

| Component | Configuration |
|---|---|
| CPU | Intel Core i7-4960X @ 4.2GHz |
| Motherboard | ASRock Fatal1ty X79 Professional |
| Power Supply | Corsair AX1200i |
| Hard Disk | Samsung SSD 840 EVO (750GB) |
| Memory | G.Skill RipjawZ DDR3-1866 4 x 8GB (9-10-9-26) |
| Case | NZXT Phantom 630 Windowed Edition |
| Monitor | Asus PQ321 |
| Video Cards | AMD Radeon R9 290X, XFX Radeon R9 280X Double Dissipation, AMD Radeon HD 7970 GHz Edition, AMD Radeon HD 7970, AMD Radeon HD 6970, AMD Radeon HD 5870, NVIDIA GeForce GTX Titan, NVIDIA GeForce GTX 780, NVIDIA GeForce GTX 770 |
| Video Drivers | NVIDIA Release 331.58, AMD Catalyst 13.11 Beta v1, AMD Catalyst 13.11 Beta v5 |
| OS | Windows 8.1 Pro |


Blamcore - Friday, October 25, 2013 - link

Wow, I was just remarking yesterday that NV fanbois had sunk to the level of Apple fanbois, when I was seeing the argument "you just like AMD because you can't afford NV" on a few boards. Now here is the Apple fanbois' famous argument: "my company is better because they have a higher profit margin." Gratz, your unreasonable bias just went up a level! I know, you aren't a fanboy, you are really a business expert here to recommend that a company should gain market share by releasing a card roughly equal to what its competitor had out for months and pricing it the same as they do! Maybe they could have asked $650 if they had released it last January.

puppies - Saturday, October 26, 2013 - link

R+D costs come from the sale price of the card. Are you trying to claim a $300 GPU costs $300 in materials? R+D costs are also offset by the fact that shrinking the process lets the manufacturer get more chips per wafer each time. Look at Intel and AMD: their chips don't go up in price each time they get faster, they stay at the same price point. The last 2 cards I have bought have been Nvidia, but the next one will be AMD at this rate. I expect a 660TI to be faster and more energy efficient than a 560TI and at the same price point WHEN IT IS RELEASED, and I think a lot of people are in the same boat. Nvidia is trying to push people into spending more each time they release a new model lineup, and it stinks.

I don't care if a 660 is faster than a 560TI, forcing people to move down the GPU lineup just smacks of NVIDIA price gouging.

Samus - Thursday, October 24, 2013 - link

I have to disagree with you, Berzerker. Although his post clearly "overpromotes" the 290, it is incredible value when you consider it is faster and cheaper (by hundreds of dollars) than the Titan.

- GeForce 660TI owner

Laststop311 - Thursday, October 24, 2013 - link

For people that value a quiet computer, this card is trash.

Spunjji - Friday, October 25, 2013 - link

For people that value a quiet computer, all stock coolers are useless. People that value a truly quiet computer won't even be playing at this end of the GPU market.

Samus - Friday, October 25, 2013 - link

This card is a great candidate for water cooling since the back of the PCB is essentially empty. Water cooling the face side is cheaper/easier, and this card can clearly use it.

HisDivineOrder - Friday, October 25, 2013 - link

He didn't say "silent." He said "quiet." I'd argue the Titan/780/690 coolers were all "quiet," but not "silent." Since he said quiet, I don't think it's unreasonable to expect a certain level of "quiet" in the $500+ range of discrete cards.

Nenad - Friday, October 25, 2013 - link

The 780 with the stock cooler is not useless, and it IS quiet (it is not 'silent'). BTW, going by the posted numbers, it seems the 290X will be TWICE as noisy as the GTX 780?

ballfeeler - Thursday, October 24, 2013 - link

Methinks Berzerker7 is just salty and perhaps partial to nvidia. Nothing itchy wrote is inaccurate, including the $550 price that Salty-Berzerker7 claimed was $600.

- Fastest card? Yup

- Free game? Yup

- Pooped all over Titan? Yup

Do not be salty mr. Berzerker7. AMD just roundhouse kicked nvidia square in the gonads with performance above Titan for half the price.

Shark321 - Thursday, October 24, 2013 - link

At 1080p it's actually slower than Titan if you average all reviews across the sites, with some reviews showing it even slightly slower than the 780. It's also the loudest card ever produced after 30 minutes of playing (9.6 sone in Battlefield 3 according to PCGamesExtreme). With this noise it's not acceptable, and there will be no other coolers for the time being.