The AMD Radeon R9 290X Review

by Ryan Smith on October 24, 2013 12:01 AM EST

Posted in: GPUs, AMD, Radeon, Hawaii, Radeon 200

A Note On Crossfire, 4K Compatibility, Power, & The Test

Before we dive into our formal testing, there are a few brief testing notes that bear mentioning.

First and foremost, on top of our normal testing we did some additional Crossfire compatibility testing to see if AMD’s new XDMA Crossfire implementation ran into any artifacting or other issues that we didn’t experience elsewhere. The good news there is that outside of the typical scenarios where games simply don’t scale with AFR – something that affects SLI and CF equally – we didn’t see any artifacts in the games themselves. The closest we came to a problem was with the intro videos for Total War: Rome 2, which have black horizontal lines due to the cards trying to AFR render said video at a higher framerate than it played at. Once in-game Rome was relatively fine; relatively because it’s one of the games we have that doesn’t see any performance benefit from AFR.

Unfortunately AMD’s drivers for the 290X are a bit raw when it comes to Crossfire. Of note, when running at 4K resolution, we had a few instances where loading a game triggered an immediate system reboot. Now we’ve had crashes before, but nothing quite like this. After we reported it, AMD told us that they’ve been able to reproduce the issue and have fixed it for the 290X launch drivers, which will be newer than the press drivers we used. Once those drivers are released we’ll be checking to confirm, but we have no reason to doubt AMD at this time.

Speaking of 4K, due to the two-controller nature of the PQ321 monitor we use, there are some teething issues related to using 4K right now. Most games are fine at 4K; however, we have found games that both NVIDIA and AMD have trouble with at one point or another. On the NVIDIA side Metro will occasionally lock up after switching resolutions, and on the AMD side GRID 2 will immediately crash if using the two-controller (4K@60Hz) setup. In the case of the latter, dropping down to a single controller (4K@30Hz) satisfies GRID while allowing us to test at 4K resolutions, and with V-sync off it doesn’t have a performance impact versus 60Hz, but it is something AMD and Codemasters will need to fix.

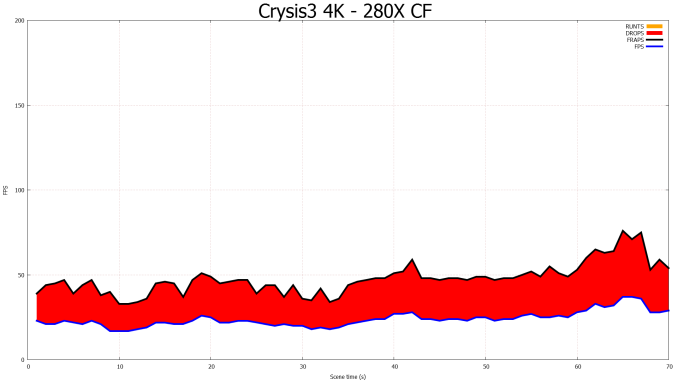

Furthermore we also wanted to offer a quick update on the state of Crossfire on AMD’s existing bridge based (non-XDMA) cards. The launch drivers for the 290X do not contain any further Crossfire improvements for bridge based cards, which means Eyefinity Crossfire frame pacing is still broken for all APIs. Of particular note for our testing, the 280X Crossfire setup ends up in a particularly nasty failure mode, simply dropping every other frame. It’s being rendered, as evidenced by the consumption of the Present call, however as our FCAT testing shows it’s apparently not making it to the master card. This has the humorous outcome of making the frame times rather smooth, but it makes Crossfire all but worthless as the additional frames are never displayed. Hopefully AMD can put a fork in the matter once and for all next month.
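To make the failure mode concrete, here is a minimal Python sketch of how rendered and displayed frame rates diverge when every other frame is dropped. This is not FCAT and not our analysis tooling; the function name `summarize_frames`, the trace layout, and the numbers are all hypothetical and chosen only for illustration.

```python
from statistics import mean


def summarize_frames(present_times_ms, displayed):
    """Compare what the game rendered with what reached the display.

    present_times_ms: time (ms) at which each frame's Present call was consumed.
    displayed: parallel list of booleans, True if the frame was actually shown.
    """
    # Intervals between consecutive rendered frames.
    rendered = [b - a for a, b in zip(present_times_ms, present_times_ms[1:])]
    # Intervals between consecutive frames that made it to the screen.
    shown = [t for t, d in zip(present_times_ms, displayed) if d]
    shown_intervals = [b - a for a, b in zip(shown, shown[1:])]
    return {
        "rendered_fps": 1000.0 / mean(rendered),
        "displayed_fps": 1000.0 / mean(shown_intervals),
        "dropped_fraction": displayed.count(False) / len(displayed),
        "displayed_interval_spread_ms": max(shown_intervals) - min(shown_intervals),
    }


# Hypothetical trace: a frame is rendered every 10 ms, every other one is dropped.
times = [i * 10.0 for i in range(10)]
flags = [i % 2 == 0 for i in range(10)]
stats = summarize_frames(times, flags)
print(stats)
```

In this made-up trace the displayed intervals are perfectly even, which is why the frame times look smooth, yet the displayed frame rate is only half of the rendered one, so the second card contributes nothing visible.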

A Note On Testing Methodologies & Sustained Performance

Moving on to the matter of our testing methodology, we want to make note of some changes since our 280X review earlier this month. After having initially settled on Metro: Last Light for our gaming power/temp/noise benchmark, in a spot of poor planning on our part we have discovered that Metro scales poorly on SLI/CF setups, and as a result doesn't push those setups very hard. As such we have switched from Metro to Crysis 3 for our power/temp/noise benchmarking, as Crysis 3 was our second choice and has a similar degree of consistency to it as Metro while scaling very nicely across both AMD and NVIDIA multi-GPU setups. For single-GPU cards the impact on noise is measurably minor, as the workloads are similar, however power consumption will be a bit different due to the difference in CPU workloads between the benchmarks.

We also want to make quick note of our testing methodologies and how they are or are not impacted by temperature based throttling. For years we have done all of our GPU benchmarking by looping gaming benchmarks multiple times, both to combat the inherent run-to-run variation that we see in benchmarking, and more recently to serve as a warm-up activity for cards with temperature based throttling. While these methods have proved sufficient for the Radeon 7000 series, the GeForce 600 series, and even the GeForce 700 series, due to the laws of physics AMD's 95C throttle point takes longer to get to than NVIDIA's 80C throttle point. As a result it's harder to bring the 290X up to its sustained temperatures before the end of our benchmark runs. It will inevitably hit 95C in quiet mode, but not every benchmark runs long enough to reach that before the 3rd or 4th loop.

For the sake of consistency with past results we have not altered our benchmark methodology. However we wanted to be sure to point out this fact before getting to benchmarking, so that there’s no confusion over how we’re handling the matter. Consequently we believe our looping benchmarks run long enough to generally reach sustained performance numbers, but in all likelihood some of our numbers on the shortest benchmarks will skew low. For the next iteration of our benchmark suite we’re most likely going to need to institute a pre-heating phase for all cards to counter AMD’s 95C throttle point.
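As a rough illustration of what such a pre-heating phase could look like, the Python sketch below separates warm-up loops from sustained loops. It is not our benchmark harness: the function name `split_warmup`, the data layout, and every number are hypothetical, and only the 95C threshold comes from the discussion above.

```python
from statistics import mean


def split_warmup(loops, throttle_temp_c=95.0):
    """Split benchmark loops into warm-up and sustained groups.

    loops: list of (avg_fps, end_temp_c) tuples in run order.
    A loop counts as sustained only if an earlier loop already ended at or
    above the throttle temperature, i.e. the card started that loop hot.
    """
    warmup, sustained = [], []
    hot = False
    for avg_fps, end_temp_c in loops:
        (sustained if hot else warmup).append(avg_fps)
        if end_temp_c >= throttle_temp_c:
            hot = True
    return warmup, sustained


# Hypothetical numbers, not measurements from this review.
loops = [(62.0, 84.0), (60.5, 91.0), (58.0, 95.0), (57.0, 95.0), (57.2, 95.0)]
warmup, sustained = split_warmup(loops)
all_loops_avg = mean(fps for fps, _ in loops)
if sustained:
    print(f"all loops: {all_loops_avg:.2f} fps, sustained only: {mean(sustained):.2f} fps")
else:
    print("card never reached its throttle point; run more loops")
```

The point of the sketch is only that averaging every loop and averaging the post-warm-up loops can give different answers when a benchmark is short relative to the time the card needs to reach its throttle point.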

The Drivers

The press drivers for the 290X are Catalyst 13.11 Beta v5 (The “v” is AMD’s nomenclature), which identify themselves as being from the driver branch 13.250. These are technically still in the 200 branch of AMD’s drivers, but this is the first appearance of 250, as Catalyst 13.11 Beta v1 was still 13.200. AMD doesn’t offer release notes on these beta drivers, but we found that they offered distinct improvements in GRID 2 and to a lesser extent Battlefield 3, and have updated our earlier results accordingly.

Meanwhile for NVIDIA we’re using the recently released “game ready” 331.58 WHQL drivers.

| Component | Configuration |
|---|---|
| CPU: | Intel Core i7-4960X @ 4.2GHz |
| Motherboard: | ASRock Fatal1ty X79 Professional |
| Power Supply: | Corsair AX1200i |
| Hard Disk: | Samsung SSD 840 EVO (750GB) |
| Memory: | G.Skill RipjawZ DDR3-1866 4 x 8GB (9-10-9-26) |
| Case: | NZXT Phantom 630 Windowed Edition |
| Monitor: | Asus PQ321 |
| Video Cards: | AMD Radeon R9 290X<br>XFX Radeon R9 280X Double Dissipation<br>AMD Radeon HD 7970 GHz Edition<br>AMD Radeon HD 7970<br>AMD Radeon HD 6970<br>AMD Radeon HD 5870<br>NVIDIA GeForce GTX Titan<br>NVIDIA GeForce GTX 780<br>NVIDIA GeForce GTX 770 |
| Video Drivers: | NVIDIA Release 331.58<br>AMD Catalyst 13.11 Beta v1<br>AMD Catalyst 13.11 Beta v5 |
| OS: | Windows 8.1 Pro |

396 Comments


TheJian - Friday, October 25, 2013 - link

Incorrect. Part of the point of gsync is when you can do 200fps in a particular part of the game they can crank up detail and USE the power you have completely rather than making the whole game for say 60fps etc. Then when all kinds of crap is happening on screen (50 guys shooting each other etc) they can drop the graphics detail down some to keep things smooth. Gsync isn't JUST frame rate. You apparently didn't read the anandtech live blog eh? You get your cake and can eat it too (stutter free, no tearing, smooth and extra power used when you have it available).

MADDER1 - Friday, October 25, 2013 - link

If Gsync drops the detail to maintain fps like you said, then you're really not getting the detail you thought you set. How is that having your cake and eating it too?

Cellar Door - Friday, October 25, 2013 - link

How so? If Mantle gets 760gtx performance in BF4 from a 260X ..will you switch then?

Animalosity - Sunday, October 27, 2013 - link

No. You are sadly mistaken sir.

Antiflash - Thursday, October 24, 2013 - link

I usually prefer Nvidia cards, but they have it well deserved when they decided to price GK110 to the stratosphere just "because they can" and had no competition. That's a poor way to treat your customers and taking advantage of fanboys. Full implementations of Tesla and Fermi were always priced around $500. Pricing Kepler GK110 at $650+ was stupid. It's silicon after all, you should get more performance for the same price each year. Not more performance at a premium price as Nvidia tried to do this generation. AMD is not doing anything extraordinary here, they are just not following Nvidia's price gouging practices, and $550 is in line with historical market prices for their flagship GPU. We would not have been having this discussion if Nvidia had done the same with GK110.

inighthawki - Thursday, October 24, 2013 - link

"It's silicon after all, you should get more performance for the same price each year"

So R&D costs come from where, exactly? Not sure why people always forget that there is actual R&D that goes into these types of products, it's not just some $5 of plastic and silicon + some labor and manufacturing costs. Like when they break down phones and tablets and calculate costs they never account for this. As if their engineers are basically just selecting components on newegg and plugging them together.

jecastejon - Thursday, October 24, 2013 - link

R&D. Is R&D tied only to a specific Nvidia card? AMD, like others, also invests a lot in R&D with every product generation, even if they are not successful. Nvidia will have to do a reality check with their pricing and be loyal to their fans and the market. Today's advantages don't last for too long.

Antiflash - Thursday, October 24, 2013 - link

NVIDIA's logic. Kepler refresh: 30% more performance => 100% increase in price

AMD's logic. GCN refresh: 30% more performance => 0% increase in price

I can't see how this is justified by R&D of just a greedy company squishing its more loyal customer base.

Antiflash - Thursday, October 24, 2013 - link

Just for clarification. price comparison between cards at its introduction comparing NVIDIA's 680 vs Titan and AMD's 7970 vs 290x

TheJian - Friday, October 25, 2013 - link

AMD way=ZERO PROFITS company going broke, debt high, 6Bil losses in 10yrs

NV way=500-800mil profits per year so you can keep your drivers working.

Your love of AMD's pricing is dumb. They are broke. They have sold nearly everything they have or had, fabs, land, all that is left is the company itself and IP.

AMD should have priced this card at $650 period. Also note, NV hasn't made as much money as 2007 for 6 years. They are not gouging you or they would make MORE than before in 2007 right? Intel, Apple, MS are gouging you as they all make more now than then (2007 was pretty much highs for a lot of companies, down since). People like you make me want to vomit as you just are KILLING AMD, which in turn will eventually cost me dearly buying NV cards as they will be the only ones with real power in the next few years. AMD already gave up the cpu race. How much longer you think they can fund the gpu race with no profits? 200mil owed to GF in Dec 31, so the meager profit they made last Q and any they might have made next Q is GONE. They won't make 200mil profit next Q...LOL. Thanks to people like you asking for LOW pricing and free games.

You don't even understand you are ANTI-AMD...LOL. Your crap logic is killing them (and making NV get 300mil less profit than 2007). The war is hurting them both. I'd rather have AMD making 500mil and NV making 1B than what we get now AMD at ZERO and NV at 550mil.