The AMD Radeon R9 290X Review

by Ryan Smith on October 24, 2013 12:01 AM EST - Posted in

- GPUs

- AMD

- Radeon

- Hawaii

- Radeon 200

Bioshock Infinite

Bioshock Infinite is Irrational Games’ latest entry in the Bioshock franchise. Though it’s based on Unreal Engine 3, making it our obligatory UE3 game, Irrational has added a number of effects that make the game rather GPU-intensive at its highest settings. As an added bonus it includes a built-in benchmark composed of several scenes, a rarity among UE3 games, so we can easily get a good representation of Bioshock’s performance.

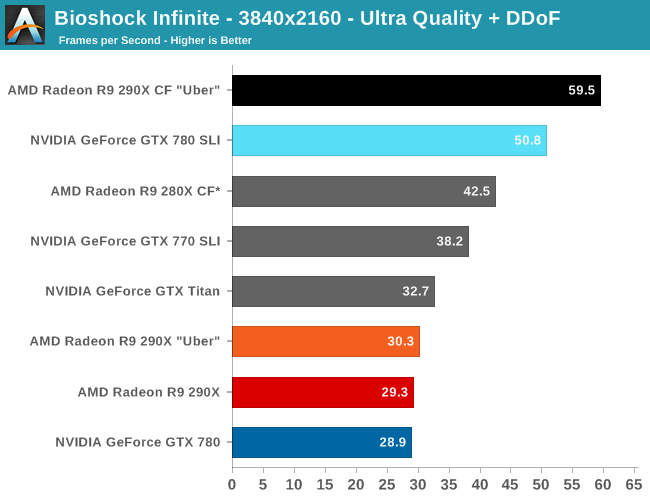

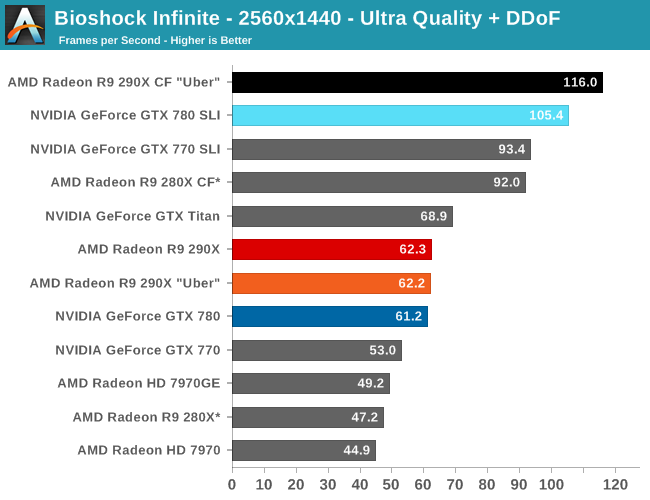

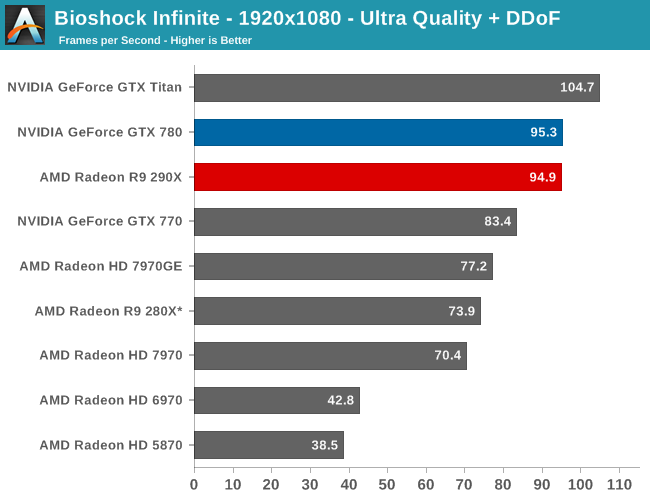

Bioshock, the first of the games AMD allowed us to publish results for, is a straight-up brawl between the 290X and the GTX 780 at 2560. The 290X’s performance advantage here is just 2%, much smaller than the leads it enjoyed earlier and essentially leaving the two cards tied. This also makes Bioshock one of the few games in which the 290X can’t match GTX Titan. At 2560 every card of 290X/GTX 780 class or better can exceed 60fps despite the heavy computational load of the depth of field effect, which makes the 290X the first single-GPU AMD card that can pull this off.

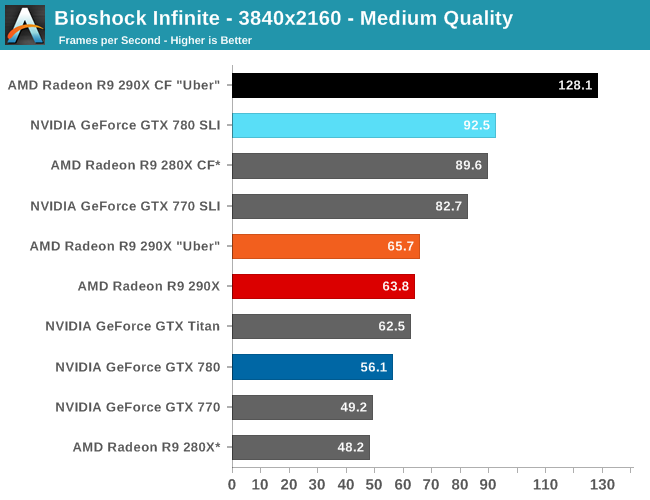

Meanwhile at 4K the results are split depending on the quality setting we’re looking at. At Ultra quality the 290X and GTX 780 are again tied, but neither is above 30fps. Drop down to Medium quality, however, and we get framerates above 60fps again, while the 290X finally pulls away from the GTX 780, beating it by 14% and even edging out GTX Titan. As with so many of the games we’re looking at today, in our opinion the higher resolution doesn’t justify the loss in quality, but it presents another scenario where the 290X demonstrates superior 4K performance.

For no-compromises 4K gaming we once again turn our gaze towards the 290X CF and GTX 780 SLI, and here AMD does very well for itself. While AMD and NVIDIA are nearly tied at the single-GPU level (keep in mind we’re in uber mode for CF, so the uber 290X has a slight performance edge in single-GPU mode), with multiple GPUs in play AMD sees better scaling from AFR and consequently better overall performance. At 95% the 290X achieves a nearly perfect scaling factor here, while GTX 780 SLI achieves only 65%. Curiously, this is better for AMD and worse for NVIDIA than the scaling factors we see at 2560, which are 86% and 72% respectively.
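To make the scaling figures concrete: a scaling factor is conventionally read as the extra performance the second card adds relative to one card, so 95% scaling means the pair delivers 1.95 times the single-card framerate, while 65% means 1.65 times. The short Python sketch below assumes that conventional definition; the function names and the 30fps baseline are hypothetical illustrations, not values taken from our results.

```python
def scaling_factor(single_gpu_fps: float, multi_gpu_fps: float) -> float:
    """Extra performance from adding GPUs, as a fraction of the single-GPU result.

    Assumed conventional definition: 0.95 means the multi-GPU setup renders
    1.95x the single-GPU framerate.
    """
    if single_gpu_fps <= 0:
        raise ValueError("single-GPU framerate must be positive")
    return multi_gpu_fps / single_gpu_fps - 1.0


def expected_fps(single_gpu_fps: float, scaling: float) -> float:
    """Framerate implied by a single-GPU result and a given scaling factor."""
    return single_gpu_fps * (1.0 + scaling)


if __name__ == "__main__":
    # Hypothetical 30fps single-GPU baseline, chosen only to illustrate the arithmetic.
    base = 30.0
    for label, scaling in (("95% scaling", 0.95), ("65% scaling", 0.65)):
        print(f"{label}: {expected_fps(base, scaling):.1f}fps")
```

From the same hypothetical 30fps starting point, the gap between 95% and 65% scaling is roughly 9fps, which is why scaling efficiency can decide a 4K matchup between two cards that are nearly tied on their own.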

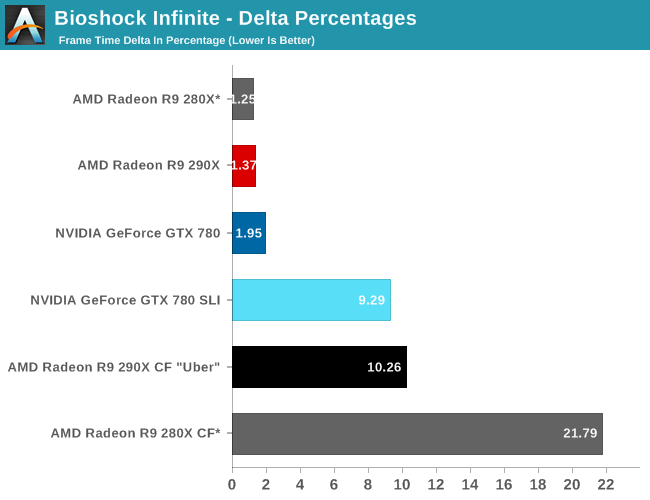

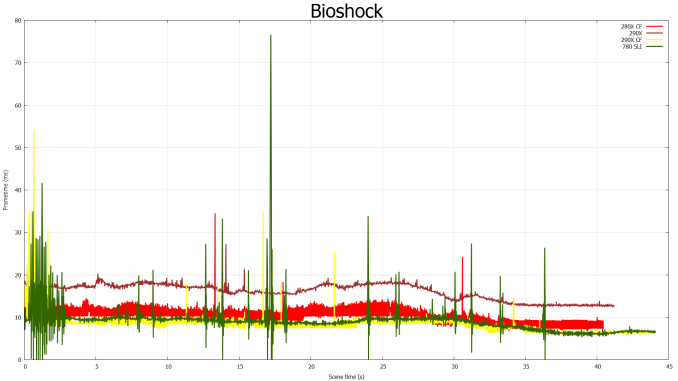

Moving on to our FCAT measurements, it’s interesting to see just how greatly improved the frame pacing is for the 290X versus the 280X in CrossFire, even with the frame pacing fixes in place for the 280X. Whereas the 280X has deltas in excess of 21%, the 290X brings those deltas down to 10%, better than halving the variance in this game. Consequently the frame time consistency we’re seeing goes from acceptable but measurably worse than NVIDIA’s to essentially equal. In fact 10% is outright stunning for a multi-GPU setup, as we rarely see frame times this consistent on such setups.
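The delta percentage is not defined in this section. One common way to express frame pacing variance, assumed here purely for illustration and not necessarily the exact FCAT-derived formula behind these charts, is the average absolute change between consecutive frame times divided by the average frame time. A minimal Python sketch of that assumed definition:

```python
from statistics import mean


def delta_percentage(frame_times_ms: list[float]) -> float:
    """Average frame-to-frame change as a percentage of the average frame time.

    ASSUMED definition for illustration only; not necessarily the review's exact metric.
    """
    if len(frame_times_ms) < 2:
        raise ValueError("need at least two frame times")
    # Absolute difference between each frame time and the one that follows it.
    deltas = [abs(later - earlier) for earlier, later in zip(frame_times_ms, frame_times_ms[1:])]
    return 100.0 * mean(deltas) / mean(frame_times_ms)


if __name__ == "__main__":
    even = [16.7, 16.7, 16.7, 16.7]    # hypothetical, perfectly paced frames
    uneven = [15.0, 18.0, 15.0, 18.0]  # hypothetical alternating short/long frames
    print(f"even:   {delta_percentage(even):.1f}%")
    print(f"uneven: {delta_percentage(uneven):.1f}%")
```

Under this assumed definition, perfectly even frame times score 0%, while the alternating 15ms/18ms sequence scores about 18%, the kind of uneven cadence that AFR multi-GPU rendering is prone to.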

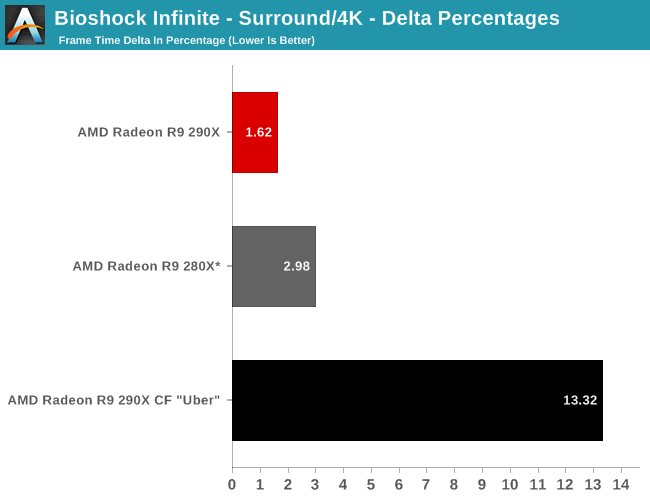

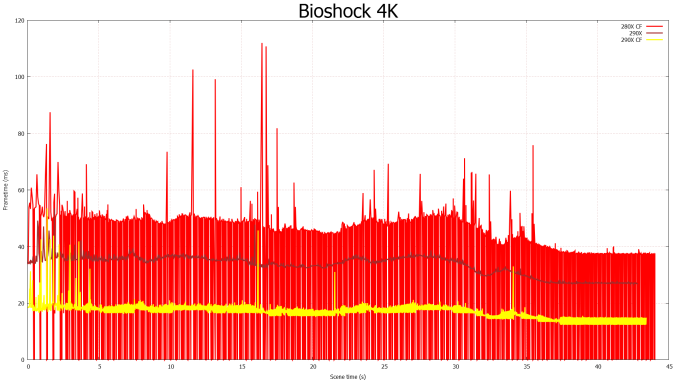

Finally, for 4K gaming our variance increases a bit, but not immensely so. Despite the heavier rendering workload and the greater demands of moving these large frames around, the delta percentage stays at 13%.

396 Comments


TheJian - Friday, October 25, 2013

Incorrect. Part of the point of G-Sync is that when you can do 200fps in a particular part of the game, they can crank up the detail and USE the power you have completely, rather than making the whole game for, say, 60fps. Then when all kinds of crap is happening on screen (50 guys shooting each other, etc.) they can drop the graphics detail down some to keep things smooth. G-Sync isn't JUST frame rate. You apparently didn't read the AnandTech live blog, eh? You get your cake and can eat it too (stutter free, no tearing, smooth, and extra power used when you have it available).

MADDER1 - Friday, October 25, 2013

If G-Sync drops the detail to maintain fps like you said, then you're really not getting the detail you thought you set. How is that having your cake and eating it too?

Cellar Door - Friday, October 25, 2013

How so? If Mantle gets GTX 760 performance in BF4 from a 260X... will you switch then?

Animalosity - Sunday, October 27, 2013

No. You are sadly mistaken, sir.

Antiflash - Thursday, October 24, 2013

I've usually preferred Nvidia cards, but they had this coming when they decided to price GK110 in the stratosphere just "because they can," with no competition. That's a poor way to treat your customers, and it takes advantage of fanboys. Full implementations of Tesla and Fermi were always priced around $500. Pricing Kepler GK110 at $650+ was stupid. It's silicon after all, you should get more performance for the same price each year, not more performance at a premium price as Nvidia tried to do this generation. AMD is not doing anything extraordinary here; they are just not following Nvidia's price-gouging practices, and $550 puts their flagship GPU at historical market prices. We would not be having this discussion if Nvidia had done the same with GK110.

inighthawki - Thursday, October 24, 2013

"It's silicon after all, you should get more performance for the same price each year"

So where exactly do the R&D costs come from? Not sure why people always forget that actual R&D goes into these types of products; it's not just some $5 piece of plastic and silicon plus some labor and manufacturing costs. It's like when they break down phones and tablets and calculate costs: they never account for this, as if their engineers were just selecting components on Newegg and plugging them together.

jecastejon - Thursday, October 24, 2013

R&D. Is R&D tied only to a specific Nvidia card? AMD, like others, also invests a lot in R&D with every product generation, even when those products are not successful. Nvidia will have to do a reality check on their pricing and be loyal to their fans and the market. Today's advantages don't last too long.

Antiflash - Thursday, October 24, 2013

NVIDIA's logic. Kepler refresh: 30% more performance => 100% increase in price

AMD's logic. GCN refresh: 30% more performance => 0% increase in price

I can't see how this is justified by R&D; it's just a greedy company squeezing its most loyal customer base.

Antiflash - Thursday, October 24, 2013

Just for clarification: the price comparison is between cards at their introduction, NVIDIA's 680 vs. Titan and AMD's 7970 vs. 290X.

TheJian - Friday, October 25, 2013

AMD way = ZERO PROFITS, company going broke, debt high, 6Bil losses in 10yrs

NV way = 500-800mil profits per year so you can keep your drivers working.

Your love of AMD's pricing is dumb. They are broke. They have sold nearly everything they have or had, fabs, land, all that is left is the company itself and IP.

AMD should have priced this card at $650, period. Also note, NV hasn't made as much money as in 2007 for 6 years. They are not gouging you, or they would make MORE than they did in 2007, right? Intel, Apple, and MS are gouging you, as they all make more now than then (2007 was pretty much the high point for a lot of companies, down since). People like you make me want to vomit, as you are just KILLING AMD, which in turn will eventually cost me dearly buying NV cards, as they will be the only ones with real power in the next few years. AMD already gave up the CPU race. How much longer do you think they can fund the GPU race with no profits? 200mil is owed to GF by Dec 31, so the meager profit they made last Q and any they might make next Q is GONE. They won't make 200mil profit next Q...LOL. Thanks to people like you asking for LOW pricing and free games.

You don't even understand that you are ANTI-AMD...LOL. Your crap logic is killing them (and making NV get 300mil less profit than in 2007). The war is hurting them both. I'd rather have AMD making 500mil and NV making 1B than what we get now: AMD at ZERO and NV at 550mil.