The AMD Radeon R9 290X Review

by Ryan Smith on October 24, 2013 12:01 AM EST - Posted in

- GPUs

- AMD

- Radeon

- Hawaii

- Radeon 200

Metro: Last Light

As always, kicking off our look at performance is 4A Games’ latest entry in their Metro series of subterranean shooters, Metro: Last Light. The original Metro: 2033 was a graphically punishing game for its time, and Metro: Last Light is punishing in its own right. On the other hand it scales well with resolution and quality settings, so it’s still playable on lower end hardware.

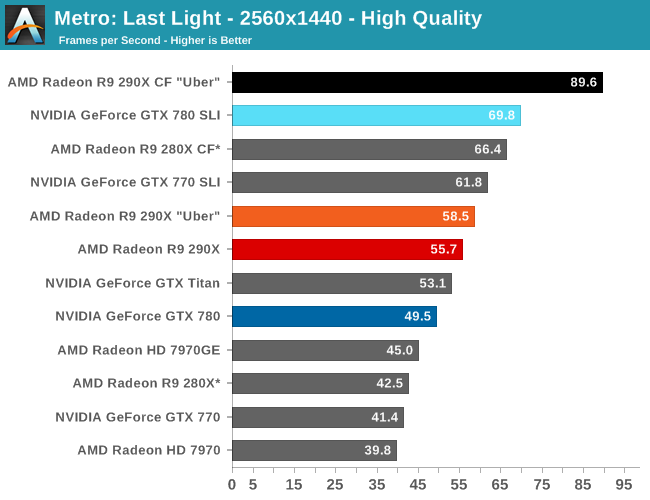

For the bulk of our analysis we’re going to be focusing on our 2560x1440 results, as we expect the 290X to be used primarily with monitors at this resolution. A single 290X may have the horsepower to drive 4K in at least some situations, but given the current costs of 4K monitors that’s going to be a much different usage scenario.

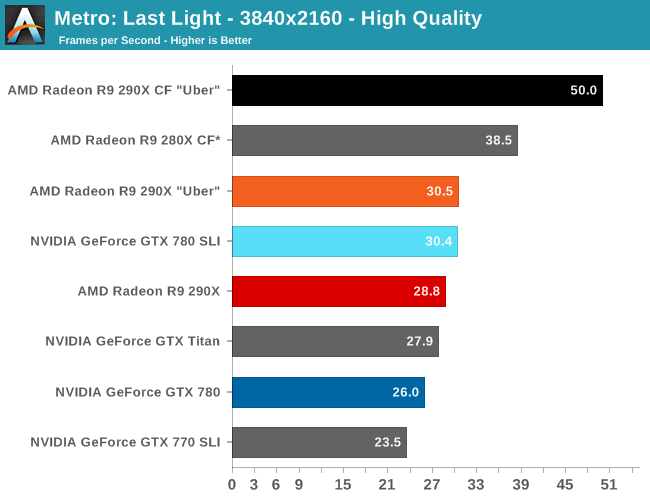

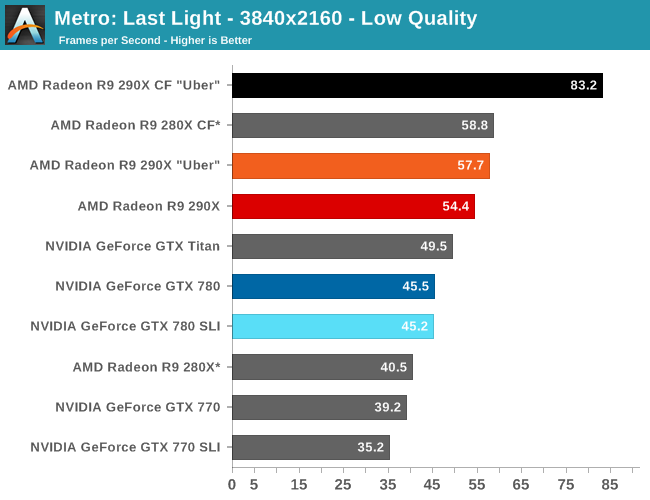

With that said, to cover 4K we’ve thrown in results for most games at both a high quality setting and a lower quality setting that makes it practical to run at 4K off of a single card. Given current monitor prices it won’t make a ton of sense to try to go with reduced quality settings just to save $550 – and consequently we may not keep the lower quality benchmarks around for future articles – but for the purposes of looking at a new GPU it’s useful to be able to look at single-GPU performance at framerates that are actually playable.

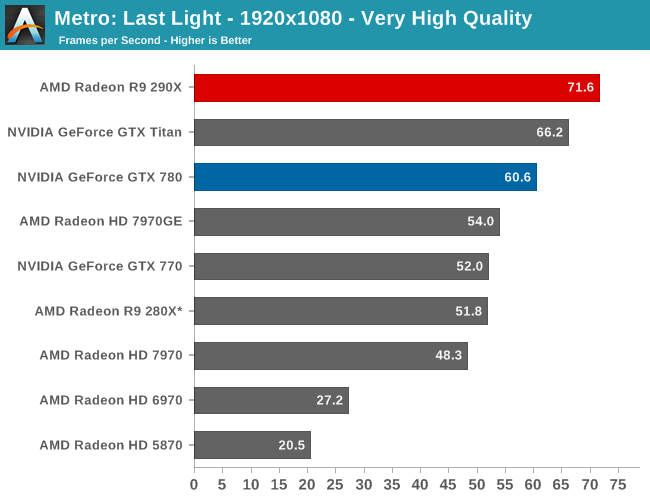

Starting off with Metro at 2560, the 290X hits the ground running on our first benchmark. At 55fps it’s just a bit shy of hitting that 60fps average we love to cling to, but among all of our single-GPU cards it is the fastest, beating even the traditional powerhouse that is GTX Titan. Consequently the performance difference between 290X and GTX 780 (290X’s real competition) is even greater, with the 290X outpacing the GTX 780 by 13%, all the while being $100 cheaper. As we’ll see these results are a bit better than the overall average, but all told we’re not too far off. For as fast as GTX 780 is, 290X is going to be appreciably (if not significantly) faster.
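To make the arithmetic behind that 13% figure concrete, here is a minimal sketch that backs out the GTX 780 frame rate implied by the two numbers quoted above (55fps for the 290X and a 13% lead). The function names are illustrative, and because both inputs are rounded the result is only an approximation, not a value read off the chart.

```python
def lead_pct(fps_a: float, fps_b: float) -> float:
    """Percentage by which card A's average frame rate exceeds card B's."""
    return (fps_a / fps_b - 1.0) * 100.0


def implied_baseline_fps(fps: float, lead: float) -> float:
    """Frame rate of the slower card implied by a faster card's fps and lead (%)."""
    return fps / (1.0 + lead / 100.0)


# Figures quoted in the text; both are rounded, so the output is approximate.
r9_290x_fps = 55.0
lead_over_gtx780 = 13.0

gtx780_fps = implied_baseline_fps(r9_290x_fps, lead_over_gtx780)
print(f"Implied GTX 780 average: {gtx780_fps:.1f} fps")
```

Under these rounded inputs the GTX 780 would land a little under 49fps at this setting.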

290X also does well for itself compared to the Tahiti based 280X. At 2560 the 290X’s performance advantage stands at 31%, which as we alluded to earlier is greater than the increase in die size, offering solid proof that AMD has improved their performance per mm² of silicon despite the fact that they’re still on the same 28nm manufacturing process. That 31% does come at a price increase of 83%, however, which although normal for this price segment serves as a reminder that the performance increases offered by the fastest video cards with the biggest GPUs do not come cheaply.
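The trade-off between the 31% performance gain and the 83% price increase can be expressed as a relative performance-per-dollar ratio. The sketch below uses only those two percentages from the text; the function name is illustrative, and it treats this single Metro result as the performance measure, which is an assumption rather than an overall average.

```python
def relative_perf_per_dollar(perf_gain_pct: float, price_increase_pct: float) -> float:
    """Performance per dollar of the faster card relative to the cheaper one.

    A result below 1.0 means the faster card delivers less performance per dollar.
    """
    return (1.0 + perf_gain_pct / 100.0) / (1.0 + price_increase_pct / 100.0)


# 290X versus 280X at 2560 in Metro: Last Light, per the figures in the text.
ratio = relative_perf_per_dollar(perf_gain_pct=31.0, price_increase_pct=83.0)
print(f"290X performance per dollar relative to 280X: {ratio:.2f}x")
```

In other words, on this benchmark the 290X delivers a bit under three quarters of the 280X's performance per dollar.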

Meanwhile for one final AMD comparison, let’s quickly look at the 290X in uber mode. As the 290X is unable to sustain the power/heat workload of a 1000MHz Hawaii GPU for an extended period of time, at its stock (quiet) settings it has to pull back on performance in order to meet reasonable operational parameters. Uber mode on the other hand represents what 290X and the Hawaii GPU can do when fully unleashed; the noise costs won’t be pretty (as we’ll see), but in the process it builds on 290X’s existing leads and increases them by another 5%. And that’s really going to be one of the central narratives for 290X once semi-custom and fully-custom cards come online: despite being a fully enabled part, 290X does not give us everything Hawaii is truly capable of.

Moving on, let’s talk about multi-GPU setups and 4K. Metro is a solid reminder that not every game scales similarly across different GPUs, and for that matter that not every game is going to significantly benefit from multi-GPU setups. Metro for its part isn’t particularly hospitable to multi-GPU cards, with the best setup scaling by only 53% at 2560. This is better than some games that won’t scale at all, but it won’t be as good as those games that see a near-100% performance improvement. This is also why we dropped Metro as a power benchmark, as this level of scaling is a poor showcase for the power/temp/noise characteristics of a pair of video cards under full load.

The real story here of course is that it’s another strong showing for AMD at both 2560 and 4K. At 2560 the 290X CF sees better performance scaling than the GTX 780 SLI – 53% versus 41% – further extending the 290X’s lead. Bumping the resolution up to 4K makes things even more lopsided in AMD’s favor, as at this point the NVIDIA cards essentially fail to scale (picking up just 17%) while the 290X sees an even greater scaling factor of 63%. As such for those few who can afford to seriously chase 4K gaming, the 290X is the only viable option in this scenario. And at 50fps average for 4K at high quality, 4K gaming at reasonable (though not maximum) quality settings is in fact attainable when it comes to Metro.
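To illustrate how better scaling extends a lead, the following sketch compounds the 13% single-GPU lead quoted earlier for 2560 with the 53% and 41% scaling figures above. The function name is illustrative, and the inputs are the rounded percentages from the text, so the result is an estimate rather than a measured value.

```python
def multi_gpu_lead_pct(single_lead_pct: float,
                       scaling_a_pct: float,
                       scaling_b_pct: float) -> float:
    """Lead of dual-card setup A over dual-card setup B, in percent.

    single_lead_pct: A's single-card lead over B.
    scaling_a_pct / scaling_b_pct: gain each vendor gets from its second card.
    """
    a = (1.0 + single_lead_pct / 100.0) * (1.0 + scaling_a_pct / 100.0)
    b = 1.0 + scaling_b_pct / 100.0
    return (a / b - 1.0) * 100.0


# 2560 figures from the text: 13% single-card lead, 53% CF vs 41% SLI scaling.
lead_2560 = multi_gpu_lead_pct(13.0, 53.0, 41.0)
print(f"Estimated 290X CF lead over GTX 780 SLI at 2560: {lead_2560:.1f}%")
```

With these inputs the dual-card lead works out to roughly 23%, up from 13% for a single card.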

Meanwhile for single-GPU configurations, 4K is viable, but only at Metro’s lowest quality levels. This will be the first of many games where such a thing is possible, and the first of many games where going up to 4K in this manner further improves on AMD’s lead. Again, we’re not of the opinion that 4K at these low quality settings is a good way to play games, but it does provide some insight into, and validation of, AMD’s claims that their hardware is better suited for 4K gaming.

396 Comments

Spunjji - Friday, October 25, 2013 - link

Word.

extide - Thursday, October 24, 2013 - link

That doesn't mean that AMD can't come up with a solution that might even be compatible with G-Sync... Time will tell..

piroroadkill - Friday, October 25, 2013 - link

That would not be in NVIDIA's best interests. If a lot of machines (AMD, Intel) won't support it, why would you buy a screen for a specific graphics card? Later down the line, maybe something like the R9 290X comes out, and you can save a TON of money on a high performing graphics card from another team.

It doesn't make sense.

For NVIDIA, their best bet at getting this out there and making the most money from it, is licencing it.

Mstngs351 - Sunday, November 3, 2013 - link

Well it depends on the buyer. I've bounced between AMD and Nvidia (to be upfront I've had more Nvidia cards) and I've been wanting to step up to a larger 1440 monitor. I will be sure that it supports Gsync as it looks to be one of the more exciting recent developments.

So although you are correct that not a lot of folks will buy an extra monitor just for Gsync, there are a lot of us who have been waiting for an excuse. :P

nutingut - Saturday, October 26, 2013 - link

Haha, that would be something for the cartel office then, I figure.

elajt_1 - Sunday, October 27, 2013 - link

This doesn't prevent AMD from making something similar, if Nvidia decides to not make it open.

hoboville - Thursday, October 24, 2013 - link

Gsync will require you to buy a new monitor. Dropping more money on graphics and smoothness will apply at the high end and for those with big wallets, but for the rest of us there's little point to jumping into Gsync.

In 3-4 years when IPS 2560x1440 has matured to the point where it's both mainstream (cheap) and capable of delivering low-latency ghosting-free images, then Gsync will be a big deal, but right now only a small percentage of the population have invested in 1440p.

The fact is, most people have been sitting on their 1080p screens for 3+ years and probably will for another 3 unless those same screens fail--$500+ for a desktop monitor is a lot to justify. Once the monitor upgrades start en masse, then Gsync will be a market changer because AMD will not have anything to compete with.

misfit410 - Thursday, October 24, 2013 - link

G-String didn't kill anything, I'm not about to give up my Dell Ultrasharp for another Proprietary Nvidia tool.

anubis44 - Tuesday, October 29, 2013 - link

Agreed. G-sync is a stupid solution to a non-existent problem. If you have a fast enough frame rate, there's nothing to fix.

MADDER1 - Thursday, October 24, 2013 - link

Mantle could be for either higher frame rate or more detail. Gsync sounds like just frame rate.