The AMD Radeon R9 290X Review

by Ryan Smith on October 24, 2013 12:01 AM EST

Posted in: GPUs, AMD, Radeon, Hawaii, Radeon 200

Meet The Radeon R9 290X

Now that we’ve had a chance to discuss the features and the architecture of GCN 1.1 and Hawaii, we can finally get to the hardware itself: AMD’s reference Radeon R9 290X.

Other than the underlying GPU and the livery, the reference 290X is actually not a significant deviation from the reference design for the 7970. There are some changes that we’ll go over, but for better and for worse AMD’s reference design is not much different from the $550 card we saw almost 2 years ago. For cooling in particular this means AMD is delivering a workable cooler, but it’s not one that’s going to compete with the efficient-yet-extravagant coolers found on NVIDIA’s GTX 700 series.

Starting as always from the top, the 290X measures in at 10.95”. The PCB itself is a bit shorter at 10.5”, but like the 7970 the metal frame/baseplate that is affixed to the board adds a bit of length to the complete card. Meanwhile AMD’s shroud sports a new design, one which is shared across the 200 series. Functionally it’s identical to the 7970’s shroud, being made of similar material and ventilating in the same manner.

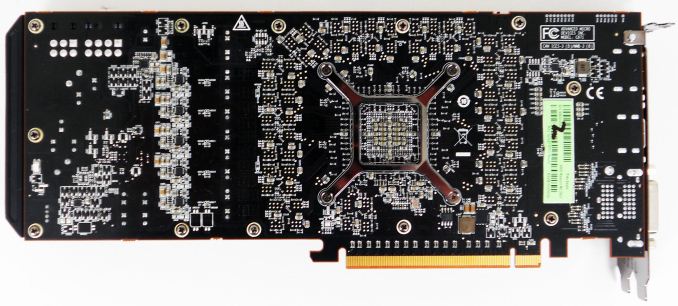

Flipping over to the back of the card quickly, you won’t find much here. AMD has placed all 16 RAM modules on the front of the PCB, so the back of the PCB is composed of resistors, pins, mounting brackets, and little else. AMD continues to go without a backplate here as the backplate is physically unnecessary and takes up valuable breathing room in Crossfire configurations.

Pulling off the top of the shroud, we can see in full detail AMD’s cooling assembly, including the heatsink, radial fan, and the metal baseplate. Other than angling the far side of the heatsink, this heatsink is essentially unchanged from the one on the 7970. AMD is still using a covered aluminum block heatsink designed specifically for use in blower designs, which runs most of the length of the card between the fan and PCIe bracket. Connecting the heatsink to the GPU is an equally large vapor chamber cooler, which is in turn mounted to the GPU using AMD’s screen printed, high performance phase change TIM. Meanwhile the radial fan providing airflow is the same 75mm diameter fan we first saw in the 7970. Consequently the total heat capacity of this cooler will be similar, but not identical, to the one on the 7970; with AMD running the 290X at a hotter 95C versus the 80C average of the 7970, this same cooler is actually able to move more heat despite being otherwise no more advanced.
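To put a rough number on why the same cooler moves more heat at a higher GPU temperature, here is a minimal sketch using a simple steady-state model in which heat flow scales with the gap between GPU temperature and intake air temperature. The 25C intake temperature and the constant thermal resistance are assumptions for illustration, not figures from our testing.

```python
def relative_heat_flow(gpu_temp_c: float, baseline_temp_c: float,
                       ambient_c: float = 25.0) -> float:
    """Ratio of heat moved at gpu_temp_c versus baseline_temp_c by one cooler.

    Assumes Q = (T_gpu - T_ambient) / R_thermal with R_thermal unchanged
    between the two cases. ambient_c is an assumed intake temperature.
    """
    if gpu_temp_c <= ambient_c or baseline_temp_c <= ambient_c:
        raise ValueError("GPU temperatures must be above ambient")
    return (gpu_temp_c - ambient_c) / (baseline_temp_c - ambient_c)


# 290X target temperature (95C) versus the 7970's average (80C)
ratio = relative_heat_flow(95.0, 80.0)
print(f"Same cooler, hotter GPU: about {ratio:.2f}x the heat moved")
```

Under these assumptions the cooler moves roughly a quarter more heat at 95C than at 80C. A different intake temperature shifts the exact ratio but not the direction.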

Moving on, though we aren’t able to take apart the card for pictures (we need it intact for future articles), we wanted to quickly go over the power and RAM specs for the 290X. For power delivery AMD is using a traditional 5+1 power phase setup, with power delivery being driven by their newly acquired IR 3567B controller. This will be plenty to drive the card at stock, but hardcore overclockers looking to attach the card to water or other exotic cooling will likely want to wait for something with a more robust power delivery system. Meanwhile despite the 5GHz memory clockspeed for the 290X, AMD has actually equipped the card with everyone’s favorite 6GHz Hynix R0C modules, so memory controller willing there should be quite a bit of memory overclocking headroom to play with. 16 of these modules are located around the GPU on the front side of the PCB, with thermal pads connecting them to the metal baseplate for cooling.
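As a back-of-the-envelope look at what that memory headroom could mean, the sketch below estimates peak memory bandwidth at the stock 5GHz data rate and at the modules' rated 6GHz. It assumes each of the 16 GDDR5 modules contributes a 32-bit interface and treats the quoted "GHz" figures as effective per-pin data rates in Gbps; the function and constant names are ours, and whether the memory controller can actually reach 6GHz is a separate question.

```python
MODULES = 16          # module count from the card's layout
BITS_PER_MODULE = 32  # assumption: GDDR5 in its standard 32-bit mode


def bandwidth_gbs(data_rate_gbps: float, modules: int = MODULES,
                  bits_per_module: int = BITS_PER_MODULE) -> float:
    """Peak memory bandwidth in GB/s for a given per-pin data rate."""
    bus_width_bits = modules * bits_per_module
    return bus_width_bits * data_rate_gbps / 8  # bits to bytes


stock = bandwidth_gbs(5.0)  # shipping memory clock
rated = bandwidth_gbs(6.0)  # what the modules are rated for
headroom_pct = (rated / stock - 1) * 100

print(f"Stock: {stock:.0f} GB/s, rated: {rated:.0f} GB/s "
      f"({headroom_pct:.0f}% theoretical headroom)")
```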

Perhaps the biggest change for the 290X as opposed to the 7970 is AMD’s choice for balancing display connectivity versus ventilation. With the 6970 AMD used a half-slot vent to fit a full range of DVI, HDMI, and DisplayPorts, only to drop the second DVI port on the 7970 and thereby utilize a full slot vent. With the 290X AMD has gone back once more to a stacked DVI configuration, which means the vent is once more back down to a bit over half a slot in size. At this point both AMD and NVIDIA have successfully shipped half-slot vent cards at very high TDPs, so we’re not the least bit surprised that AMD has picked display connectivity over ventilation, as a half-slot vent is proving to be plenty capable in these blower designs. Furthermore based on NVIDIA and AMD’s latest designs we wouldn’t expect to see full size vents return for these single-GPU blowers in the future, at least not until someone finally gets rid of space-hogging DVI ports entirely.

Top: R9 290X. Bottom: 7970

With that in mind, the display connectivity for the 290X utilizes AMD’s new reference design of 2x DL-DVI-D, 1x HDMI, and 1x DisplayPort. Compared to the 7970 AMD has dropped the two Mini DisplayPorts for a single full-size DisplayPort, and brought back the second DVI port. Note that unlike some of AMD’s more recent cards these are both physically and electrically DL-DVI ports, so the card can drive 2 DL-DVI monitors out of the box; the second DVI port isn’t just for show. The high cost of DisplayPort to DL-DVI adapters made the single DVI port on the 7970 an unpopular choice in some corners of the world, so this change should make DVI users happy, particularly those splurging on the popular and cheap 2560x1440 Korean IPS monitors (the cheapest of which lack anything but DVI).

But as a compromise of this design – specifically, making the second DVI port full DL-DVI – AMD had to give up the second DisplayPort, which is why the full sized DisplayPort is back. This does mean that compared to the 7970 the 290X has lost some degree of display flexibility, however, as DisplayPorts allow for both multi-monitor setups via MST and for easy conversion to other port types via DVI/HDMI/VGA adapters. With this configuration it’s not possible to drive 6 fully independent monitors on the 290X; the DisplayPort will get you 3, and the DVI/HDMI ports the other 3, but due to the clock generator limits on the 200 series the 3 monitors on the DVI/HDMI ports must be timing-identical, precluding them from being fully independent. On the other hand this means that the PC graphics card industry has effectively settled the matter of DisplayPort versus Mini DisplayPort, with DisplayPort winning by now being the port style of choice for both AMD and NVIDIA. It’s not how we wanted this to end up – we still prefer Mini DisplayPort as it’s equally capable but smaller – but at least we’ll now have consistency between AMD and NVIDIA.
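The six-monitor restriction described above can be written down as a small layout check. This is purely an illustrative sketch: the `Display` class and `check_six_monitor_layout` function are hypothetical names of ours, not part of any AMD tool, and the check only encodes the limits stated above (up to 3 monitors from the DisplayPort, up to 3 from the DVI/HDMI ports, and matching timings when all 3 DVI/HDMI ports are in use). It makes no claim about how smaller layouts behave.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Display:
    connector: str  # "DP", "DVI", or "HDMI"
    timing: tuple   # (width, height, refresh_hz), a stand-in for full timings


def check_six_monitor_layout(displays):
    """Return a list of problems with a proposed layout (empty if none)."""
    problems = []
    dp = [d for d in displays if d.connector == "DP"]
    legacy = [d for d in displays if d.connector in ("DVI", "HDMI")]
    if len(dp) > 3:
        problems.append("DisplayPort output drives at most 3 monitors")
    if len(legacy) > 3:
        problems.append("DVI/HDMI ports drive at most 3 monitors")
    # Only the case stated in the text: all 3 DVI/HDMI ports in use.
    if len(legacy) == 3 and len({d.timing for d in legacy}) > 1:
        problems.append("the 3 DVI/HDMI monitors must be timing-identical")
    return problems


hd = (1920, 1080, 60)
dp_side = [Display("DP", hd), Display("DP", (1920, 1200, 60)),
           Display("DP", (2560, 1600, 60))]
ok_layout = dp_side + [Display("DVI", hd), Display("DVI", hd),
                       Display("HDMI", hd)]
bad_layout = dp_side + [Display("DVI", hd), Display("DVI", (2560, 1440, 60)),
                        Display("HDMI", hd)]

print(check_six_monitor_layout(ok_layout))
print(check_six_monitor_layout(bad_layout))
```

The first layout passes because the three DVI/HDMI monitors share one timing; the second is flagged because one DVI monitor runs a different mode.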

Moving on, AMD’s dual BIOS functionality is back once again for the 290X, and this time it has a very explicit purpose. The 290X will ship with two BIOSes, a “quiet” BIOS and an “uber” BIOS, selectable with the card’s BIOS switch. The difference between the two BIOSes is that the quiet BIOS ships with a maximum fan speed of 40%, while the uber BIOS ships with a maximum fan speed of 55%. The quiet BIOS is the default BIOS for the 290X, and based on our testing will hold the noise levels of the card equal to or less than those of the reference 7970.

AMD Radeon Family Cooler Comparison: Noise & Power

| Card | Load Noise - Gaming | Estimated TDP |
| --- | --- | --- |
| Radeon HD 7970 | 53.5dB | 250W |
| Radeon R9 290X Quiet | 53.3dB | 300W |
| Radeon R9 290X Uber | 58.9dB | 300W |

However because of the high power consumption and heat generation of the underlying Hawaii GPU, in quiet mode the card is unable to sustain its full 1000MHz boost clock for more than a few minutes; there simply isn’t enough cooling occurring at 40% to move 300W of heat. We’ll look at power, temp, and noise in full a bit later in our benchmark section, but average sustained clockspeeds are closer to 900MHz in quiet mode. Uber mode and its 55% fan speed on the other hand is fast enough (and just so) to move enough air to keep the card at 1000MHz in all non-TDP limited workloads. The tradeoff there is that the last 100MHz of clockspeed is going to be incredibly costly from a noise perspective, as we’ll see. The reference 290X would not have been a viable product if it didn’t ship with quiet mode as the default BIOS.
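To give a sense of scale for that tradeoff, the short sketch below compares the clockspeed gained by uber mode against the increase in measured noise, using the gaming load figures from our cooler comparison table. Converting a decibel difference to a sound power ratio is standard (10^(ΔdB/10)), but treat the result as a rough illustration: perceived loudness does not track sound power one-for-one, and the 900MHz quiet mode figure is an approximate average.

```python
def sound_power_ratio(db_delta: float) -> float:
    """Convert a decibel difference into a sound power ratio."""
    return 10 ** (db_delta / 10)


quiet_db, uber_db = 53.3, 58.9        # load noise, gaming
quiet_mhz, uber_mhz = 900.0, 1000.0   # approximate sustained clocks

db_delta = uber_db - quiet_db
power_ratio = sound_power_ratio(db_delta)
clock_gain_pct = (uber_mhz / quiet_mhz - 1) * 100

print(f"Uber vs. quiet: +{clock_gain_pct:.1f}% clockspeed for "
      f"+{db_delta:.1f}dB, about {power_ratio:.1f}x the sound power")
```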

Finally, let’s wrap things up by talking about miscellaneous power and data connectors. With AMD having gone with bridgeless (XDMA) Crossfire for the 290X, the Crossfire connectors that have adorned high-end AMD cards for years are now gone. Other than the BIOS switch, the only thing you will find at the top of the card are the traditional PCIe power sockets. AMD is using the traditional 6pin + 8pin setup here, which combined with the PCIe slot power is good for delivering 300W to the card, which is what we estimate to be the card’s TDP limit. Consequently overclocking boards are all but sure to go the 8pin + 8pin route once those eventually arrive.
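For reference, the 300W figure follows directly from the per-source limits in the PCI Express specification: 75W from the slot, 75W from a 6pin connector, and 150W from an 8pin connector. A minimal sketch of the arithmetic, with dictionary and function names of our own choosing:

```python
# In-spec power limits per source, in watts
PCIE_LIMITS_W = {"slot": 75, "6pin": 75, "8pin": 150}


def board_power_limit(connectors):
    """In-spec power available from the slot plus the listed connectors."""
    return PCIE_LIMITS_W["slot"] + sum(PCIE_LIMITS_W[c] for c in connectors)


reference = board_power_limit(["6pin", "8pin"])     # reference 290X
overclocking = board_power_limit(["8pin", "8pin"])  # likely custom boards

print(f"6pin + 8pin: {reference}W, 8pin + 8pin: {overclocking}W")
```

An 8pin + 8pin board would raise the in-spec ceiling to 375W, which is the extra margin overclocking-focused designs would be after.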

396 Comments


DMCalloway - Thursday, October 24, 2013

Once again, against the Titan it's $450 cheaper, not $100. Against the gtx 780 it is a wash on performance at a cheaper price point. Eight months late to the game I'll agree on, however it took time to get in bed with Sony and Micro$oft which was needed if they (AMD) ever hope to get to the point of being able to release 'at a competitive time'. I'm amazed that they are still viable after the financial losses they suffered with the whole Intel paying OEM's to not release their current cpu gen. along side AMD's business. Sure, AMD won the law suit but the financial losses in market share was in the billions, Intel jumped ahead a gen. and the damage was done. Realistically, I believe AMD chose wisely to focus on the console market because the 7970ghz pushed hard wasn't really that far behind a stock gtx780.

Bloodcalibur - Thursday, October 24, 2013

Ever wonder why the Titan costs $350 more than their own GTX 780 while having only a small margin of improvement?

Oh, right, compute performance.

anubis44 - Thursday, October 24, 2013

and in some cases, the R9 290X is as much as 23% faster in 4K resolution than the Titan, or in the words of HardOCP: "at Ultra HD 4K it (R9 290X) just owns the GeForce GTX TITAN."

Bloodcalibur - Thursday, October 24, 2013

Once again, Titan is a gaming/workstation hybrid, that's why it costs $350 more than their own GTX 780 with only a small FPS improvement in gaming.

TheJian - Friday, October 25, 2013

Depends on the games chosen. For instance All 4K:

Guru3d:

Tombraider tied 4k 40fps (they consider this BARELY playable-though advise 60fps)

MOH Warfighter Titan wins 7%

Bioshock Infinite Titan wins 10% (33fps to 30, but again not going to be playable min in teens?)

BF3 TIE (32fps, again avg, so not playable)

The only victory at 4K is Hitman absolution here. So clearly it depends on what your settings are and what games you play. Also note the fps at 4K at hardocp. They can't max settings and every game is a sacrifice of some stuff (or a lot). Even at 2560 Kyle notes all were unplayable with everything on with avg's at 22fps and mins 12fps for all 3 basically...ROFL. How useful is it to win (or even lose) at a res you can't play at?

http://www.techpowerup.com/reviews/AMD/R9_290X/24....

Techpowerup tests all the way to 5760x1080, quoting that unless not tested. Here we go again...LOL

World of Warcraft domination for 780 & Titan (over 20% faster on titan 5760!)

SKYRIM - Both Titan and 780 win 5760

Starcraft2 only went to 2560 but again clean sweep for 780/Titan both over 10%

Splintercell blacklist clean sweep at 2560 & 5760 for Titan AND 780 (>20% for titan both res)

Farcry3 (titan and 780 wins at 5760 but at 22fps who cares...LOL but 10% faster than 290x)

black ops 2 (only went to 2560, but titan wins all res)

Metro TIE (26fps, again neither playable)

Crysis 3 Titan over 10% win (25fps vs. 22, but neither playable...LOL)

At hardocp, metro, tombraider, bf3, and crysis 3 were all UNDER 25fps min on both cards with most coming in at 22fps or so on both. I wish they would benchmark at what they find is PLAYABLE, but even then I'm against 4K if I have to turn all kinds of stuff off in the first place. Only farcry3 was tested at above 30fps...LOL. You need TWO cards for 4K gaming. PERIOD. If you have the money to buy a 4K monitor or two monitors you probably have the cash to do it right and buy 2 cards. Steampowered survey shows this as most have 2 cards above 1920x1200! Bragging about 4K gaming on this card (or even titan) is ridiculous as it just ends up in an exercise of turning crap off that devs wanted me to SEE. I wouldn't care if 290x was 50% faster than Titan if you're running 22fps who cares? Neither is playable. You've proven NOTHING. If we jump off a 100 story building I'll beat you to the bottom...Yeah but umm...We're both still dead right? So what's the point no matter who wins that game?

Funfact: techspot.com tombraider comment (2560/1080p both tested-4xSSAA+16af)

"We expected AMD to do better in Tomb Raider since they supported the title's development, but the R9 290X was 12% slower than the GTX Titan and 3% slower than the GTX 780"

LOL. I hope they do better with BF4 AMD enhancements. Resident Evil 6 shows titan win also.

http://www.techspot.com/review/727-radeon-r9-290x/...

Tomshardware 4K quote:

"In Gaming At 3840x2160: Is Your PC Ready For A 4K Display?, I concluded that you’d want at least two GeForce GTX 780s for 4K. And although the R9 290X is faster than even the $1000 Titan, I maintain that you need a pair in order to crank your settings up to where they should be."

That was their ARMA quote...But it applies to all 4K...TWO CARDS. But their benchmarks are really low compared to everyone else for Titan in the same games. It's like took 10-15% off Titan's scores. IE, Bioshock infinite at guru3d shows titan winning 10%, but at toms losing by 20% same game, same res...WTF? That's odd right? Skyrim shows NV domination at 4k (780 also). Almost 20% faster for Titan & 780 (they tied) over Uber. Of course they turned off ALL AA modes to get it playable. Again, you can't just judge 4K by one site's games. Clearly you can find the exact opposite at 4K and come back down to reality (a res you can actually play at above 30fps) and titan is smacking them in a ton of games (far more wins than losses). I could find a ton more if needed but you should get the point. TITAN isn't OWNED at 4K and usually when it is as toms says of Metro "the win is largely symbolic though", yeah at 30fps avg it is pointless even turned down!

bronopoly - Thursday, October 24, 2013

Why shouldn't one of the cards you mentioned be bought for 1080p? I don't know about you, but I prefer to get 120 FPS in games so it matches my monitor w/ lightboost enabled.

Bloodcalibur - Thursday, October 24, 2013

Except the Titan is a gaming/workstation hybrid due to its computing ability. Anyone who bought a Titan just for gaming is retarded and paid $350 more than they would have on a 780. Titan shouldn't be compared to 290X for gaming. It's a good card for those who do both gaming and a little bit of computing.

looncraz - Thursday, October 24, 2013

Install a new cooler and the last two of those problems vanish... and you've saved hundreds... you could afford to build a stand-alone water-cooling loop just for the 290x and still have money to spare for a nice dinner.

teiglin - Thursday, October 24, 2013

I haven't finished reading the article yet, but isn't that more than a little hyperbolic? It just means NVIDIA will have to cut back on the amount it gouges for GK110. The fact that it was able to leave the price high for so long is nearly all good for them--it's just a matter of how quickly they adjust their pricing to match.

It will be nice to have a fair fight again at the high-end for a single card.

bill5 - Thursday, October 24, 2013

Heh, I'm the biggest AMD fanboy around, but these top two comments almost smell like marketing.

It's a great card, and the Titan was deffo highly overpriced, but Nvidia can just make some adjustments on price and compete. That 780 Ti they showed will surely be something in that vein.