The AMD Radeon R9 290X Review

by Ryan Smith on October 24, 2013 12:01 AM EST

Posted in: GPUs, AMD, Radeon, Hawaii, Radeon 200

Synthetics

As always we’ll also take a quick look at synthetic performance. The 290X shouldn’t pack any great surprises here since it’s still GCN, and as such bound to the same general rules for efficiency, but we do have the additional geometry processors and additional ROPs to occupy our attention.

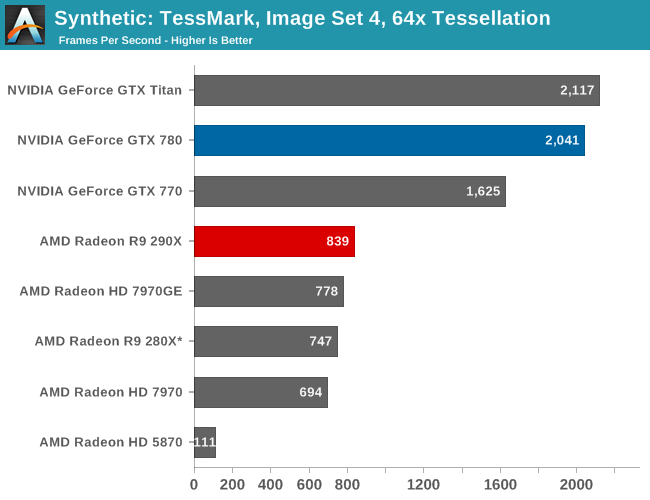

Right off the bat then, the TessMark results are something of a head scratcher. Whereas NVIDIA’s performance here has consistently scaled well with the number of SMXes, AMD’s seeing minimal scaling from those additional geometry processors on Hawaii/290X. Clearly TessMark is striking another bottleneck on 290X beyond simple geometry throughput, though it’s not absolutely clear what that bottleneck is.

This is a tessellation-heavy benchmark as opposed to a simple massive geometry benchmark, so we may be seeing a tessellation bottleneck rather than a geometry bottleneck, as tessellation requires its own set of heavy lifting to generate the necessary control points. The 12% performance gain is much closer to the 11% memory bandwidth gain than anything else, so it may be that the 280X and 290X are having to go off-chip to store tessellation data (we are after all using a rather extreme factor), in which case it’s a memory bandwidth bottleneck. Real world geometry performance will undoubtedly be better than this – thankfully for AMD this is the pathological tessellation case – but it does serve as a reminder of how much more tessellation performance NVIDIA is able to wring out of Kepler. Still, the nearly 8x increase in tessellation performance since the 5870 shows that AMD has at least come a long way in 4 years, and considering the performance in our tessellation-enabled games, AMD doesn’t seem to be hurting for tessellation performance in the real world right now.
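To make the bandwidth comparison concrete, here is a minimal sketch of the arithmetic. Only the 12% TessMark gain and the 11% bandwidth gain come from the text above. The bus widths, memory data rates, and geometry processor counts are assumptions taken from public spec sheets, and the "closest gain" heuristic is just an illustration of the reasoning, not a way to prove where the bottleneck is.

```python
# Illustrative only: which resource increase best matches the observed gain?
# Bus widths, data rates and geometry processor counts are ASSUMED from
# public spec sheets; they are not stated in the article text.

def bandwidth_gbs(bus_width_bits, data_rate_gbps):
    """Peak memory bandwidth in GB/s: bus width (bits) * per-pin rate (Gbps) / 8."""
    return bus_width_bits * data_rate_gbps / 8

def percent_gain(new, old):
    """Relative improvement of new over old, in percent."""
    return (new / old - 1) * 100

def closest_resource(observed_gain, candidate_gains):
    """Name of the resource whose gain is nearest to the observed gain."""
    return min(candidate_gains,
               key=lambda name: abs(candidate_gains[name] - observed_gain))

bw_280x = bandwidth_gbs(384, 6.0)   # assumed 384-bit bus at 6 Gbps
bw_290x = bandwidth_gbs(512, 5.0)   # assumed 512-bit bus at 5 Gbps

candidate_gains = {
    "memory bandwidth": percent_gain(bw_290x, bw_280x),
    "geometry processors": percent_gain(4, 2),  # assumed 2 -> 4 units
}

observed_tessmark_gain = 12.0  # from the article
likely_bottleneck = closest_resource(observed_tessmark_gain, candidate_gains)

for name, gain in candidate_gains.items():
    print(f"{name}: +{gain:.1f}%")
print("closest match:", likely_bottleneck)
```

Under these assumed figures the bandwidth gain works out to about 11%, which sits far closer to the observed 12% than a doubling of geometry hardware would.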

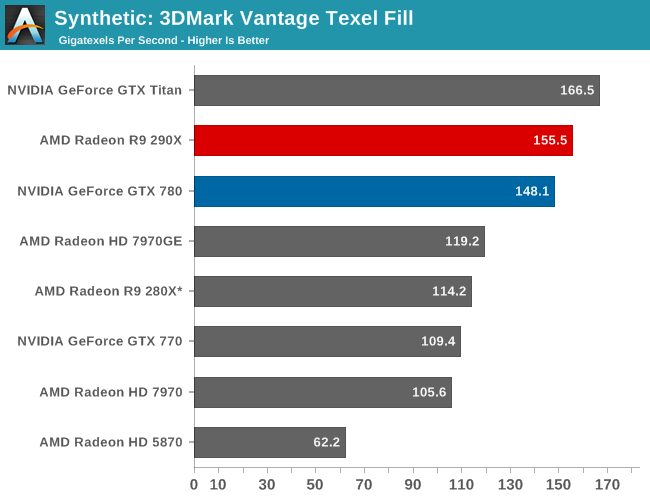

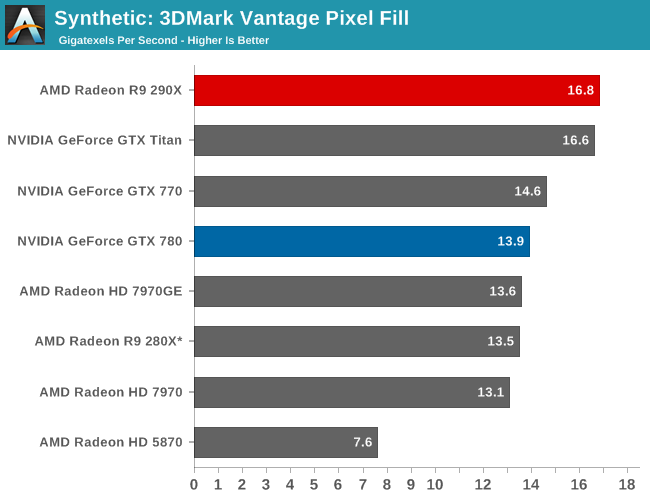

Moving on, we have our 3DMark Vantage texture and pixel fillrate tests, which present our cards with massive amounts of texturing and color blending work. These aren’t results we suggest comparing across different vendors, but they’re good for tracking improvements and changes within a single product family.

Looking first at texturing performance, we can see that it is scaling essentially 1:1 with what the theoretical numbers say it should. 36% better texturing performance over 280X is almost exactly in line with the increased number of texture units versus 280X, at the very least proving that 290X isn’t having any trouble feeding the increased number of texture units in this scenario.
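As a rough check on that scaling claim, theoretical texel rate can be approximated as texture units multiplied by core clock. The sketch below assumes 128 texture units for the 280X and 176 for the 290X, both at a 1.0 GHz clock. Those values come from public spec sheets rather than this article, and the 290X may not hold that clock under load. Only the 36% measured gain comes from the text.

```python
# Illustrative only: theoretical texel fill rate vs. the measured gain.
# Texture unit counts and clocks are ASSUMED from public spec sheets.

def texel_rate_gtexels(texture_units, clock_ghz):
    """Peak bilinear texel rate in GTexels/s, assuming one texel per unit per clock."""
    return texture_units * clock_ghz

rate_280x = texel_rate_gtexels(128, 1.0)  # assumed 128 TMUs at 1.0 GHz
rate_290x = texel_rate_gtexels(176, 1.0)  # assumed 176 TMUs at 1.0 GHz

theoretical_gain = (rate_290x / rate_280x - 1) * 100  # percent
measured_gain = 36.0                                  # from the article

# Fraction of the theoretical speedup that actually shows up in the test.
scaling_efficiency = (1 + measured_gain / 100) / (1 + theoretical_gain / 100)

print(f"theoretical gain: +{theoretical_gain:.1f}%")
print(f"measured gain:    +{measured_gain:.1f}%")
print(f"scaling efficiency: {scaling_efficiency:.3f}")
```

With these assumed inputs the measured result lands within a couple of percent of the theoretical ceiling, which is what near 1:1 scaling looks like.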

Meanwhile for our pixel fill rates the results are a bit more in the middle, reflecting the fact that this test is a mix of ROP bottlenecking and memory bandwidth bottlenecking. Remember, AMD doubled the ROPs versus 280X, but only gave it 11% more memory bandwidth. As a result the ROPs’ ability to perform is going to depend in part on how well color compression works and what can be recycled in the L2 cache, as anything else means a trip to the VRAM and running into those lesser memory bandwidth gains. Though the 290X does get something of a secondary benefit here, which is that unlike the 280X it doesn’t have to go through a memory crossbar and any inefficiencies/overhead it may add, since the number of ROPs and memory controllers is perfectly aligned on Hawaii.
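The tug of war between ROP throughput and memory bandwidth can be sketched as a simple "minimum of two ceilings" model. Everything numeric here is an assumption for illustration: the ROP counts (32 and 64), the 1.0 GHz clock, the bandwidth figures (288 and 320 GB/s), and especially the 8 bytes of traffic per blended pixel (a 4-byte read plus a 4-byte write). The model also ignores color compression and L2 cache hits, so real results should come out higher than the bandwidth ceiling suggests.

```python
# Illustrative only: pixel fill rate as the lower of a ROP ceiling and a
# memory bandwidth ceiling. All hardware figures are ASSUMED from public
# spec sheets; bytes_per_pixel is a hypothetical traffic estimate.

def rop_ceiling_gpixels(rops, clock_ghz):
    """Peak ROP throughput in GPixels/s, assuming one pixel per ROP per clock."""
    return rops * clock_ghz

def bandwidth_ceiling_gpixels(bandwidth_gbs, bytes_per_pixel):
    """Pixels/s the memory bus can sustain with no compression or caching."""
    return bandwidth_gbs / bytes_per_pixel

def pixel_fill_gpixels(rops, clock_ghz, bandwidth_gbs, bytes_per_pixel=8):
    """Achievable fill rate is limited by whichever ceiling is lower."""
    return min(rop_ceiling_gpixels(rops, clock_ghz),
               bandwidth_ceiling_gpixels(bandwidth_gbs, bytes_per_pixel))

fill_280x = pixel_fill_gpixels(rops=32, clock_ghz=1.0, bandwidth_gbs=288.0)
fill_290x = pixel_fill_gpixels(rops=64, clock_ghz=1.0, bandwidth_gbs=320.0)
fill_gain = (fill_290x / fill_280x - 1) * 100

print(f"280X: {fill_280x:.1f} GPixels/s")
print(f"290X: {fill_290x:.1f} GPixels/s")
print(f"modelled gain: +{fill_gain:.1f}%")
```

In this toy model the 280X is limited by its ROPs while the 290X runs into the bandwidth ceiling, so the modelled gain falls between the 11% bandwidth increase and the 100% ROP increase, which matches the "in the middle" behavior described above.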

396 Comments


ninjaquick - Thursday, October 24, 2013

so 4-5% faster than Titan?

Drumsticks - Thursday, October 24, 2013

If the 780Ti is $599, then that means the 780 should see at least a $150 (nearly 25%!) price drop, which is good with me.

DMCalloway - Thursday, October 24, 2013

So, what you are telling me is Nvidia is going to stop laughing-all-the-way-to-the-bank and price the 780ti for less than current 780 prices? Current 780 owners are going to get HOT and flood the market with used 780's.

dragonsqrrl - Thursday, October 24, 2013

Why is it that this is only ever the case when Nvidia performs a massive price drop? Nvidia price drop = early adopters getting screwed (even though 780 has been out for ~6 months now). AMD price drop = great value for enthusiasts, go AMD! ... lolz.

Minion4Hire - Thursday, October 24, 2013

Titan is a COMPUTE card. A poor man's (relatively speaking) proper compute solution. The fact that it is also a great gaming card is almost incidental. No one needs a 6GB frame buffer for gaming right now. The Titan comparisons are nearly meaningless.

The "nearly" part is the unknown 780 TI. Nvidia could enable the remaining CUs on 780 to at least give the TI comparable performance to Titan. But who cares that Titan is $1000? It isn't really relevant.

ddriver - Thursday, October 24, 2013

Even much cheaper radeons completely destroy the titan as well as every other nvidia gpu in compute. Do not be fooled by a single, poorly implemented test; the nvidia architecture plainly sucks in double precision performance.

ShieTar - Thursday, October 24, 2013

Since "much cheaper" Radeons tend to deliver 1/16th DP performance, you seem to not really know what you are talking about. Go read up on a relevant benchmark suite on professional and compute cards, e.g. http://www.tomshardware.com/reviews/best-workstati... The only tasks where AMD cards shine are those implemented in OpenCL.

ddriver - Thursday, October 24, 2013

"Much cheaper" relative to the price of the titan, not entry level radeons... You clutched onto a straw and drowned...

OpenCL is THE open and portable industry standard for parallel computing. Did you expect radeons to shine at .. CUDA workloads? LOL. I'd say OpenCL performance is all I really need; it has been a while since I played or cared about games.

Pontius - Tuesday, October 29, 2013

I'm in the same boat as you ddriver, all I care about is OpenCL in these articles. I go straight to that section usually =)

TheJian - Friday, October 25, 2013

You're neglecting the fact that everything you can do professionally in OpenCL you can already do faster in CUDA. CUDA is taught in 600+ universities for a reason. It is in over 200 pro apps and has been funded for 7+ years, unlike OpenCL, which is funded by a broke company hoping people will catch on one day :) Anandtech refuses to show CUDA (gee, they do have an AMD portal after all...LOL) but it exists and is ultra fast. You really can't name a pro app that doesn't have direct support or support via plugin for CUDA. And if you're buying NV and running OpenCL instead of CUDA (like Anand shows, calling it compute crap) you're an idiot. Why don't they run Premiere instead of Sony crap for video editing? Because CUDA has worked great in it for years. Same with Photoshop etc... You didn't look at the folding@home DP benchmark here in this review either, I guess. 2.5x faster than 290X. As you can see, it depends on what you do and the app you use. I consider F@H a stupid use of electricity but that's just me...LOL. Find anything where OpenCL (or any AMD stuff, DirectX, OpenGL) beats CUDA. Compute doesn't just mean OpenCL, it means CUDA too! Dumb sites just push OpenCL because it's OPEN...LOL. People making money use CUDA and generally buy Quadro or Tesla (they own 90% of the market for a reason, or people would just buy Radeons, right?).

http://www.anandtech.com/show/7457/the-radeon-r9-2...

DP in F@H here. Titan sort of wins, right? 2.5x or so over 290X :) It's comic that both here and Tom's use a bunch of junk synthetic crap (bitmining, which ASICs do now, Basemark junk, F@H, etc.) to show how good AMD is, but forget you can do real work with CUDA (heck, even bitmining can be done with CUDA).

When you say compute, I think CUDA, not OpenCL on NV. As soon as you toss in CUDA the compute story changes completely. Unfortunately even Tom's refuses to pit OpenCL vs. CUDA, just like here at Anandtech (but that's because both love OpenCL and hate proprietary stuff). But at least they show you in ShieTar's link (which craps out, remove the . at the end of the link) that Titan kills even the top Quadro cards (it's a Tesla, remember, for $1500 off). It's 2x+ faster than Quadros in almost everything they tested. So yeah, Titan is very worth it for people who do PRO stuff AND game.

http://www.tomshardware.com/reviews/best-workstati...

For the lazy, fixed ShieTar's link.

All these sites need to do is fire up 3ds Max, Cinema 4D, Blender, Adobe (pick your app: After Effects, Premiere, Photoshop) and pit CUDA vs. OpenCL. Just pick an OpenCL plugin for AMD (luxrender) and Octane/furryball etc. for NV, then run the tests. Does AMD pay all these sites to NOT do this? I comment and ask on every workstation/vid card article etc. at Tom's; they never respond...LOL. They run pure CUDA, then pure OpenCL, but act like they never meet. They run crap like Basemark for photo/video editing OpenCL junk (you can't make money on that), instead of running Adobe and choosing OpenCL (or DirectX/OpenGL) for AMD and CUDA for NV. Anandtech runs Sony Vegas, which a quick google shows has tons of problems with NV. Heck, pit Sony/AMD vs. Adobe/NV. You can run the same tests in both on video, though it would be better to just use Adobe for both, but they won't do that until AMD gets done optimizing for the next rev...ROFL. Can't show AMD in a bad light here...LOL. OpenCL sucks compared to CUDA (proprietary or not...just the truth).