Intel's Xeon E5-2600 V2: 12-core Ivy Bridge EP for Servers

by Johan De Gelas on September 17, 2013 12:00 AM EST

Rendering Performance: Cinebench

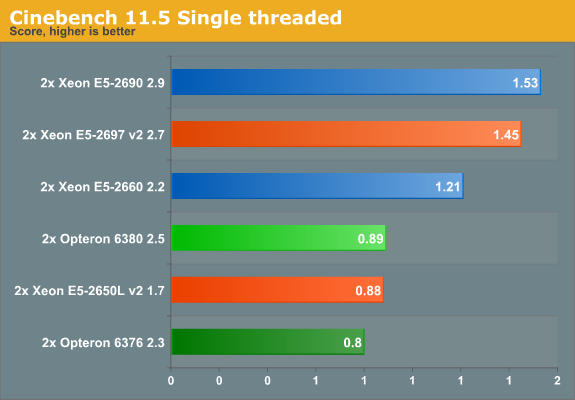

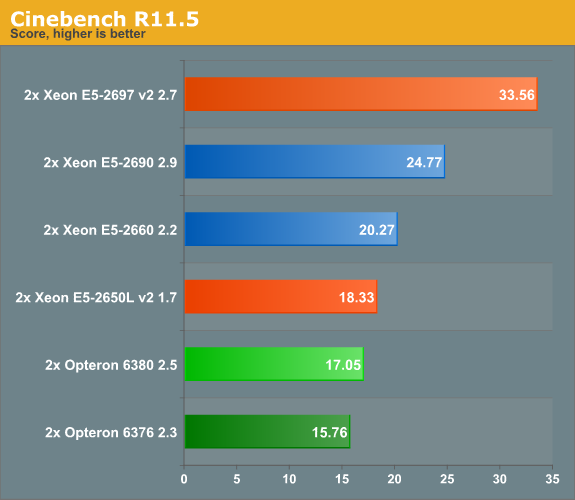

Cinebench, based on MAXON's CINEMA 4D software, is probably one of the most popular benchmarks around, largely because it is easy to run on your own home machine. The benchmark supports up to 64 threads, more than enough for our 24- and 32-thread test servers. First we test single-threaded performance to evaluate the performance of each core.

Cinebench achieves an IPC between 1.4 and 1.8 and is mostly dominated by SSE2 code. There is little improvement in that area, and the older 2.9-3.8GHz E5-2690 has the clock speed advantage over the new 2.7-3.5GHz E5-2697 v2. Also of note is that in this type of code, one Piledriver core again needs a 50% higher clock speed to keep up with the Ivy Bridge core.

Once we run up to 48 threads, the new Xeon can outperform its predecessor by a wide margin of ~35%. It is interesting to compare this with the results of the Core i7-4960X, which uses the same die as the "budget" Xeon E5s (15MB L3 cache dies). The six-core chip at 3.6GHz scores 12.08. It is clear that choosing cores over clock speed is a good strategy in rendering farms: the dual E5-2650L offers 50% better performance in a power budget that is more or less the same (2x70W vs 130W).
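The "more or less the same" power budget can be made concrete with a quick back-of-the-envelope calculation. The sketch below uses only the figures quoted above (50% better performance, 2x70W vs 130W) and treats TDP as a stand-in for actual power draw, which is an assumption: real consumption under Cinebench will differ. The function name `perf_per_watt_ratio` is ours, purely for illustration.

```python
def perf_per_watt_ratio(perf_ratio: float, tdp_a_watts: float, tdp_b_watts: float) -> float:
    """Relative performance per TDP watt of system A versus system B.

    perf_ratio is A's score divided by B's score. TDP is used as a rough
    proxy for power draw (an assumption, not a measurement).
    """
    power_ratio = tdp_a_watts / tdp_b_watts
    return perf_ratio / power_ratio


# Figures quoted in the text: dual E5-2650L (2 x 70 W) vs. one 130 W six-core chip.
dual_xeon_tdp = 2 * 70
six_core_tdp = 130
perf_ratio = 1.5  # "50% better performance"

ratio = perf_per_watt_ratio(perf_ratio, dual_xeon_tdp, six_core_tdp)
print(f"TDP ratio: {dual_xeon_tdp / six_core_tdp:.2f}x")
print(f"Performance per TDP watt: {ratio:.2f}x")
```

Under these assumptions the dual Xeon setup has about 8% more TDP to work with, so its advantage per TDP watt comes out at roughly 1.39x instead of 1.5x.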

70 Comments


mczak - Tuesday, September 17, 2013

Yes, that's surprising indeed. I wonder how large the difference in die size is (though the reason for two dies might have more to do with power draw).

zepi - Tuesday, September 17, 2013

How about adding turbo frequencies to the SKU comparison tables? That'd make comparing the SKUs a bit easier, as that is sometimes a more representative figure depending on the load these babies are run under.

JarredWalton - Tuesday, September 17, 2013

I added Turbo speeds to all SKUs as well as linking the product names to the various detail pages at AMD/Intel. Hope that helps! (And there were a few clock speed errors before that are now corrected.)

zepi - Wednesday, September 18, 2013

Appreciated!

zepi - Wednesday, September 18, 2013

For most server buyers things are not this simple, but for armchair sysadmins this might do: http://cornflake.softcon.fi/export/ivyexeon.png

ShieTar - Tuesday, September 17, 2013

"Once we run up to 48 threads, the new Xeon can outperform its predecessor by a wide margin of ~35%. It is interesting to compare this with the Core i7-4960x results , which is the same die as the "budget" Xeon E5s (15MB L3 cache dies). The six-core chip at 3.6GHz scores 12.08."

What I find most interesting here is that the Xeon manages to show a factor 23 between multi-threaded and single-threaded performance, a very good scaling for a 24-thread CPU. The 4960X only manages a factor of 7 with its 12 threads. So it is not merely a question of "cores over clock speed", but rather hyperthreading seems to not work very well on the consumer CPUs in the case of Cinebench. The same seems to be true for the Sandy Bridge and Haswell models as well.

Do you know why this is? Is hyperthreading implemented differently for the Xeons? Or is it caused by the different OS used (Windows 2008 vs Windows 7/8)?

JlHADJOE - Tuesday, September 17, 2013

^ That's very interesting. Made me look over the Xeon results and yes, they do appear to be getting close to a 100% increase in performance for each thread added.

psyq321 - Tuesday, September 17, 2013

Hyperthreading is the same. However, the HCC version of IvyTown has two separate memory controllers and more features enabled (direct cache access, different prefetchers, etc.). So it might scale better.

I am achieving a 1.41x speed-up with a dual Xeon 2697 v2 setup, compared to my old dual Xeon 2687W setup. This is so close to the "ideal" 1.5x scaling that it is pretty amazing. And the 2687W was running at a slightly higher clock in all-core turbo.

So, I must say I am very happy with the IvyTown upgrade.

garadante - Tuesday, September 17, 2013

It's not 24 threads, it's 48 threads for that scaling. 2x physical CPUs with 12 cores each, for 24 physical cores and a total of 48 logical cores.

Kevin G - Tuesday, September 17, 2013

Actually if you run the numbers, the scaling factor from 1 to 48 threads is actually 21.9. I'm curious what the result would have been with Hyperthreading disabled as that can actually decrease performance in some instances.
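For anyone who wants to redo the arithmetic discussed in this thread, here is a minimal sketch that normalizes each multi-threaded scaling factor by the number of physical cores. The inputs are only the figures quoted in the comments above (a factor of 21.9 across 24 physical cores for the dual Xeon, and a factor of about 7 across the six cores of the 4960X); the function name `scaling_per_core` is made up for illustration. Keep in mind that single-threaded runs typically benefit from higher turbo clocks than all-core runs, so this is a rough indicator, not a clean measure of Hyperthreading gains.

```python
def scaling_per_core(scaling_factor: float, physical_cores: int) -> float:
    """Multi-threaded speed-up over single-threaded, divided by physical cores.

    A value of 1.0 would mean each core contributes exactly one
    single-thread's worth of throughput.
    """
    if physical_cores <= 0:
        raise ValueError("physical_cores must be positive")
    return scaling_factor / physical_cores


# Figures as quoted in the comments (not re-measured here).
xeon_per_core = scaling_per_core(21.9, 24)  # dual E5-2697 v2: 2 x 12 cores
i7_per_core = scaling_per_core(7.0, 6)      # Core i7-4960X: six cores

print(f"Dual Xeon: {xeon_per_core:.2f} per physical core")
print(f"4960X:     {i7_per_core:.2f} per physical core")
```

Dividing by physical cores instead of logical threads puts both chips on the same footing, which is the point of the 24-core versus 48-thread correction above.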