Samsung SSD 840 EVO Review: 120GB, 250GB, 500GB, 750GB & 1TB Models Tested

by Anand Lal Shimpi on July 25, 2013 1:53 PM EST. Posted in: Storage, SSDs, Samsung, TLC, Samsung SSD 840

Performance Consistency

In our Intel SSD DC S3700 review I introduced a new method of characterizing performance: looking at the latency of individual operations over time. The S3700 promised a level of performance consistency that was unmatched in the industry, and as a result needed some additional testing to show that. We don't have consistent IO latency with SSDs because all controllers inevitably have to do some amount of defragmentation or garbage collection in order to continue operating at high speeds. When and how an SSD decides to run its defrag and cleanup routines directly impacts the user experience. Frequent (borderline aggressive) cleanup generally results in more stable performance, while delaying cleanup can result in higher peak performance at the expense of much lower worst-case performance. The graphs below tell us a lot about the architecture of these SSDs and how they handle internal defragmentation.

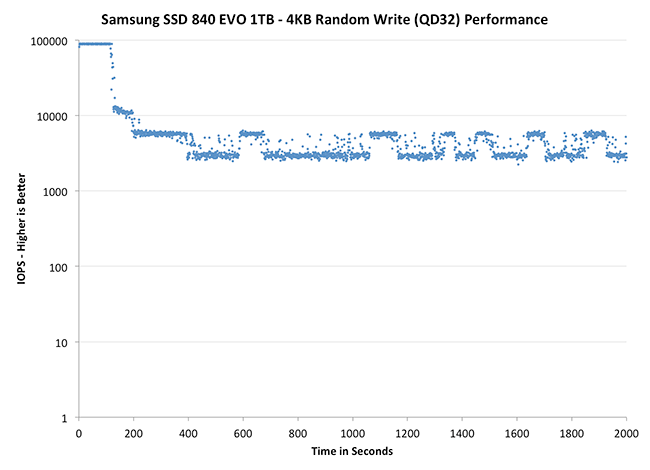

To generate the data below I took a freshly secure-erased SSD and filled it with sequential data. This ensures that all user-accessible LBAs have data associated with them. Next I kicked off a 4KB random write workload across all LBAs at a queue depth of 32 using incompressible data. I ran the test for just over half an hour, nowhere near as long as we run our steady state tests but enough to give me a good look at drive behavior once all spare area filled up.

I recorded instantaneous IOPS every second for the duration of the test. I then plotted IOPS vs. time and generated the scatter plots below. Each set of graphs features the same scale. The first two sets use a log scale for easy comparison, while the last set of graphs uses a linear scale that tops out at 40K IOPS for better visualization of differences between drives.
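A per-second IOPS log like the one described above can also be boiled down to a few consistency numbers. The following is only a minimal sketch of that idea, not the tooling used for this review: the function name `summarize_iops`, the `steady_start` parameter, and the sample trace are all hypothetical and chosen purely for illustration.

```python
from statistics import mean, median, pstdev


def summarize_iops(samples, steady_start=None):
    """Summarize per-second IOPS readings.

    samples[i] is the IOPS recorded during second i of the run.
    If steady_start is given, only samples from that second onward
    are summarized (for example, to look at steady state only).
    """
    window = samples if steady_start is None else samples[steady_start:]
    if not window:
        raise ValueError("no samples in the selected window")
    avg = mean(window)
    return {
        "min": min(window),        # worst second in the window
        "median": median(window),
        "max": max(window),        # best second in the window
        "mean": avg,
        # Coefficient of variation: lower means more consistent IO.
        "cv": pstdev(window) / avg if avg else float("inf"),
    }


# Hypothetical trace: a fast fresh-drive phase, then an oscillating phase.
trace = [40000] * 5 + [2000, 8000, 2000, 8000]
full_run = summarize_iops(trace)
steady_state = summarize_iops(trace, steady_start=5)
print(full_run)
print(steady_state)
```

The minimum and the coefficient of variation are the figures that map most directly onto what the scatter plots show: how low the worst seconds go, and how widely the points are spread.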

The high level testing methodology remains unchanged from our S3700 review. Unlike in previous reviews, however, I did vary the percentage of the drive that I filled/tested depending on the amount of spare area I was trying to simulate. The buttons are labeled with the advertised user capacity had the SSD vendor decided to use that specific amount of spare area. If you want to replicate this on your own, all you need to do is create a partition smaller than the total capacity of the drive and leave the remaining space unused to simulate a larger amount of spare area. The partitioning step isn't absolutely necessary in every case but it's an easy way to make sure you never exceed your allocated spare area. It's a good idea to do this from the start (e.g. secure erase, partition, then install Windows), but if you are working backwards you can always create the spare area partition, format it to TRIM it, then delete the partition. Finally, this method of creating spare area works on the drives we've tested here, but other controllers may not behave the same way.
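As a rough aid for the partitioning approach described above, the arithmetic can be sketched as follows. This is an illustrative helper only: the name `partition_size_mib` and the example capacity are hypothetical, and the result describes extra unpartitioned space on top of whatever spare area the drive already reserves internally.

```python
def partition_size_mib(total_mib: int, spare_percent: int) -> int:
    """Return the partition size (MiB) that leaves spare_percent of the
    user-visible capacity unpartitioned.

    Integer arithmetic is used so the result never rounds up past the
    requested limit.
    """
    if not 0 <= spare_percent < 100:
        raise ValueError("spare_percent must be in the range 0..99")
    return total_mib * (100 - spare_percent) // 100


# Hypothetical drive exposing 100,000 MiB to the OS, leaving 25% unused.
size = partition_size_mib(100_000, 25)
print(size)
```

Creating the partition at this size from a freshly secure-erased drive keeps the remaining LBAs untouched, which is what lets the controller treat them as additional spare area.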

The first set of graphs shows the performance data over the entire 2000 second test period. In these charts you'll notice an early period of very high performance followed by a sharp dropoff. What you're seeing in that case is the drive allocating new blocks from its spare area, then eventually using up all free blocks and having to perform a read-modify-write for all subsequent writes (write amplification goes up, performance goes down).
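The link between exhausted free blocks and rising write amplification can be illustrated with a deliberately simplified model. This is a textbook-style approximation and not a description of any specific controller: it assumes that every block reclaimed by garbage collection still holds the same fraction of valid data, which must be copied elsewhere before the block can be erased. The function name and parameter are hypothetical.

```python
def write_amplification(valid_fraction: float) -> float:
    """Estimate write amplification under a simplified GC model.

    valid_fraction is the share of a reclaimed block that still holds
    valid data. Reclaiming one block costs valid_fraction of a block in
    relocation writes and frees (1 - valid_fraction) of a block for new
    host writes, so NAND writes per host write are 1 / (1 - valid_fraction).
    """
    if not 0.0 <= valid_fraction < 1.0:
        raise ValueError("valid_fraction must be in the range [0, 1)")
    return 1.0 / (1.0 - valid_fraction)


# More spare area generally means reclaimed blocks hold less valid data.
for fraction in (0.0, 0.5, 0.75):
    print(fraction, write_amplification(fraction))
```

Under this model, a drive with plenty of free blocks sits near a factor of 1, while a full drive whose reclaimed blocks are mostly valid data pays several NAND writes for every host write, which is consistent with the sharp dropoff in the charts.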

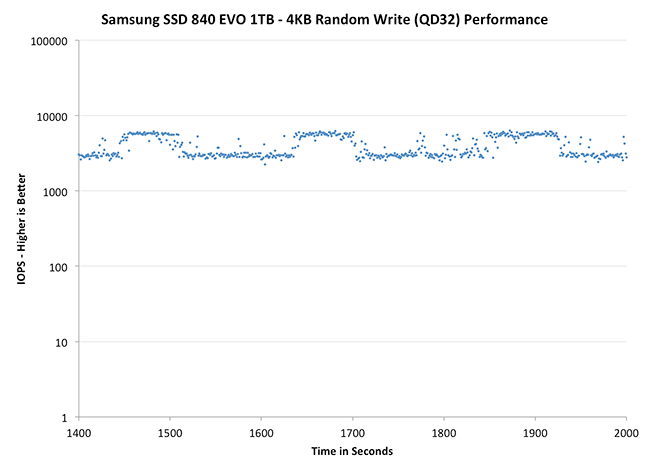

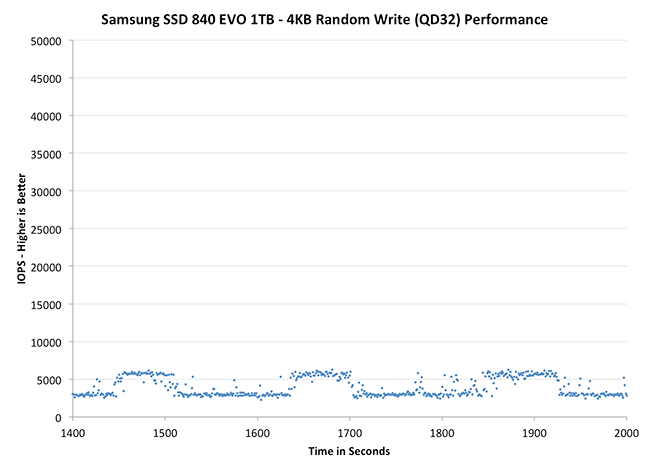

The second set of graphs zooms in to the beginning of steady state operation for the drive (t=1400s). The third set also looks at the beginning of steady state operation but on a linear performance scale. Click the buttons below each graph to switch source data.

[Interactive graph set. Drives compared: Crucial M500 960GB, Samsung SSD 840 EVO 1TB, Samsung SSD 840 EVO 250GB, SanDisk Extreme II 480GB, Samsung SSD 840 Pro 256GB. Spare area: Default]

Thanks to the EVO's higher default over-provisioning, you actually get better consistency out of the EVO than the 840 Pro out of the box. Granted, you can get similar behavior out of the Pro if you simply don't use all of the drive. The big comparison is against Crucial's M500, where the EVO does a bit better. SanDisk's Extreme II, however, remains the better performer from an IO consistency perspective.

[Interactive graph set. Drives compared: Crucial M500 960GB, Samsung SSD 840 EVO 1TB, Samsung SSD 840 EVO 250GB, SanDisk Extreme II 480GB, Samsung SSD 840 Pro 256GB. Spare area: Default]

[Interactive graph set. Drives compared: Crucial M500 960GB, Samsung SSD 840 EVO 1TB, Samsung SSD 840 EVO 250GB, SanDisk Extreme II 480GB, Samsung SSD 840 Pro 256GB. Spare area: Default]

Zooming in, we see very controlled and frequent GC patterns on the 1TB drive, something we don't see in the 840 Pro. The 250GB drive looks a bit more like a clustered random distribution of IOs, but minimum performance is still much better than on the 840 Pro with its standard OP.

TRIM Validation

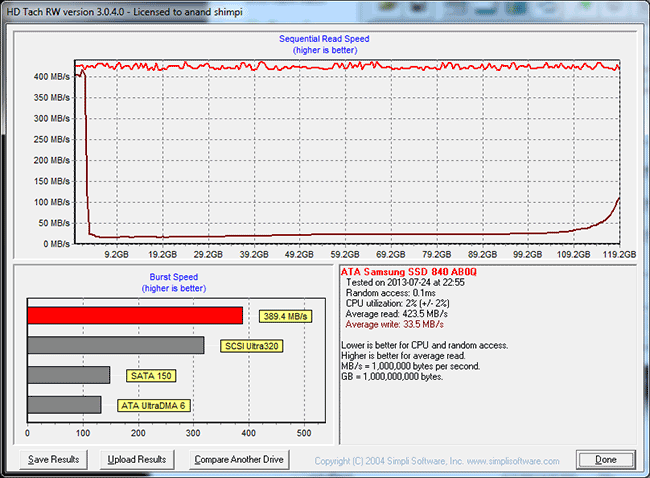

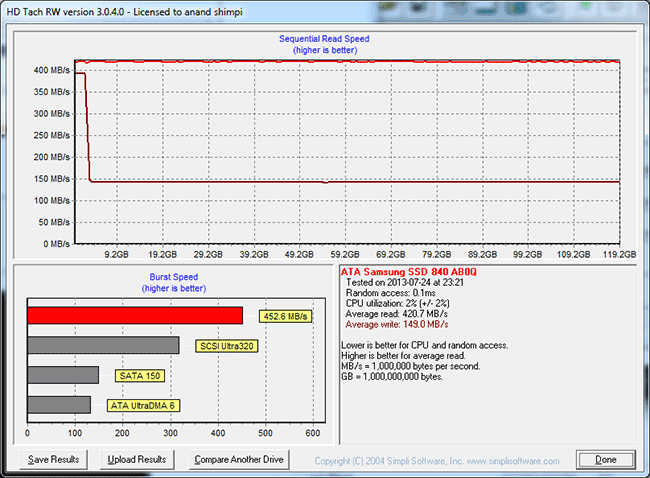

Our performance consistency test actually replaces our traditional TRIM test in terms of looking at worst case scenario performance, but I wanted to confirm that TRIM was functioning properly on the EVO so I dusted off our old test for another go. The test procedure remains unchanged: fill the drive with sequential data, run a 4KB random write test (QD32, 100% LBA range) for a period of time (30 minutes in this case) and use HDTach to visualize the impact on write performance:

Minimum performance drops down to around 30MB/s, eugh. Although the EVO can be reasonably consistent, you'll still want to leave some free space on the drive to ensure that performance always stays high (I recommend 15 - 25% if possible).

A single TRIM pass (quick format under Windows 7) fully restores performance as expected:

The short period of time at 400MB/s is just TurboWrite doing its thing.

137 Comments

Sivar - Thursday, July 25, 2013 - link

Really well-written article. I have to admit, while most of Samsung's products are crap, their 840 and later SSDs are not bad at all.

(The 830, while not prone to electronic failure, was built really poorly. Its SATA connector would snap off if you tilted your head the wrong way while looking at it).

Coup27 - Thursday, July 25, 2013 - link

Samsung have gotten into the world position they are in today by selling crap. I have used plenty of 830's and I have never had an issue with the SATA connector so I have no idea what you are doing with it.

Coup27 - Thursday, July 25, 2013 - link

Haven't ^^ (why is there no edit button?)

piroroadkill - Thursday, July 25, 2013 - link

So you accidentally broke a SATA connector, and now that's suddenly a flaw? I have two Samsung 830 256GB in my system, and somehow I didn't break the SATA connectors... I also fitted 4x Samsung 830 256GB to a server at work.. and somehow I didn't break the SATA connectors..

HisDivineOrder - Saturday, July 27, 2013 - link

True, this. SATA connectors are poorly designed, but that's the fault of the people who made the spec, not the specific one in the 830. I'm not saying it can't break. I've had SATA connectors break on a variety of devices. None of them were my 830, but I'm not saying it's impossible or whatever. I've seen WD, Seagate, and Hitachi drives all have a problem with the connector, though. Seems like SATA and HDMI were designed to make the connection as loose and easily broken as possible. I guess that gives them some small percentage of people buying all new product to replace something on said product that's small and plastic...

mmaenpaa - Thursday, July 25, 2013 - link

Good article once again Anand, and very good performance for this price range.

Regarding Torx, I believe this is one of the main reasons why it is used:

"By design, Torx head screws resist cam-out better than Phillips head or slot head screws. Where Phillips heads were designed to cause the driver to cam out, to prevent overtightening, Torx heads were designed to prevent cam-out. The reason for this was the development of better torque-limiting automatic screwdrivers for use in factories. Rather than rely on the tool slipping out of the screw head when a torque level is reached, thereby risking damage to the driver tip, screw head and/or workpiece, the newer driver design achieves a desired torque consistently. The manufacturer claims this can increase tool bit life by ten times or more"

(from https://en.wikipedia.org/wiki/Torx)

BR,

Markku

hybrid2d4x4 - Thursday, July 25, 2013 - link

For what it's worth, my experience with screws is consistent with your post. I've never had a Torx screw slip out, which is definitely not the case with Phillips or the square or flathead varieties. I'd like to see them used more often.

piroroadkill - Thursday, July 25, 2013 - link

Agreed. I love Torx. Phillips and Pozidriv are the terrible bastard children of the screw universe. Always slipping and burring. Ugh. If everything was replaced with totally cam-out free designs like Torx, allen head, robertson screw.. etc, etc.. then I'd be more than happy.

psuedonymous - Thursday, July 25, 2013 - link

I'd LOVE for Torx to be used more often. They're much easier to work with (not once have I had a Torx screw fall off the screwdriver and roll under the desk), the screwheads are more robust, and they frankly look a lot nicer than Phillips or Pozidriv. It'd make pulling apart laptops all day a darn sight less onerous if Torx were the standard rather than Phillips.

camramaan - Friday, February 14, 2014 - link

But then there would be less security in other areas of the mechanical world... not everyone can carry a bunch of Torx bits everywhere they go, so breaking into, or disassembling something built with Torx is more time laborious and pre-planned. I fully understand the sentiments, but the development of alternative screw heads was more for security than ease of use.