NVIDIA’s GeForce GTX Titan Review, Part 2: Titan's Performance Unveiled

by Ryan Smith & Rahul Garg on February 21, 2013 9:00 AM EST

Titan’s Compute Performance, Cont

With Rahul having covered the basis of Titan’s strong compute performance, let’s shift gears a bit and take a look at real world usage.

On top of Rahul’s work with Titan, as part of our 2013 GPU benchmark suite we put together a larger number of compute benchmarks to try to cover real world usage, including the old standards of gaming usage (Civilization V) and ray tracing (LuxMark), along with several new tests. Unfortunately that got cut short when we discovered that OpenCL support is currently broken in the press drivers, which prevents us from using several of our tests. We still have our CUDA and DirectCompute benchmarks to look at, but a full look at Titan’s compute performance on our 2013 GPU benchmark suite will have to wait for another day.

For their part, NVIDIA of course already has OpenCL working on GK110 with Tesla. The issue is that somewhere between that and bringing up GK110 for Titan by integrating it into NVIDIA’s mainline GeForce drivers – specifically the new R314 branch – OpenCL support was broken. As a result we expect this will be fixed in short order, but it’s not something NVIDIA checked for ahead of the press launch of Titan, and it’s not something they could fix in time for today’s article.

Unfortunately this means that comparisons with Tahiti will be few and far between for now. Most significant cross-platform compute programs are OpenCL based rather than DirectCompute, so short of games and a couple other cases such as Ian’s C++ AMP benchmark, we don’t have too many cross-platform benchmarks to look at. With that out of the way, let’s dive into our condensed collection of compute benchmarks.

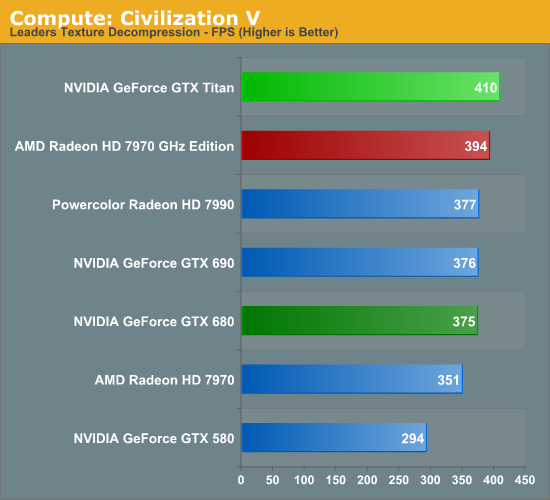

We’ll once more start with our DirectCompute game example, Civilization V, which uses DirectCompute to decompress textures on the fly. Civ V includes a sub-benchmark that exclusively tests the speed of their texture decompression algorithm by repeatedly decompressing the textures required for one of the game’s leader scenes. While DirectCompute is used in many games, this is one of the only games with a benchmark that can isolate the use of DirectCompute and its resulting performance.

Note that for 2013 we have changed the benchmark a bit, moving from using a single leader to using all of the leaders. As a result the reported numbers are higher, but they’re also not going to be comparable with this benchmark’s use from our 2012 datasets.

Civilization V launched in 2010, and graphics cards have become significantly more powerful since then, far outpacing growth in the CPUs that feed them. As a result we’ve rather quickly drifted from being GPU bottlenecked to being CPU bottlenecked, as we see both in our Civ V game benchmarks and our DirectCompute benchmarks. For high-end GPUs the performance difference is rather minor; the gap between GTX 680 and Titan for example is 45fps, or just less than 10%. Still, it’s at least enough to get Titan past the 7970GE in this case.

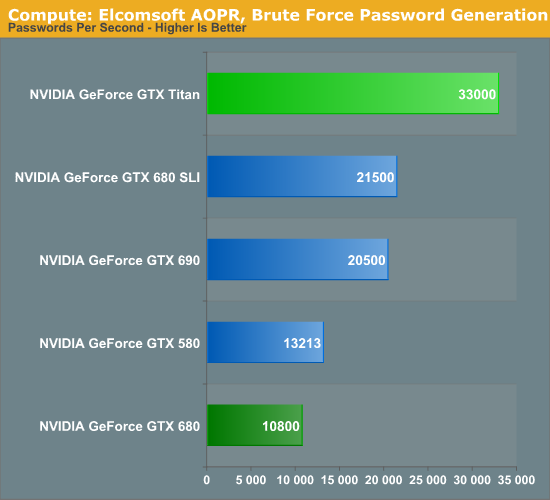

Our second test is one of our new tests, utilizing Elcomsoft’s Advanced Office Password Recovery utility to take a look at GPU password generation. AOPR has separate CUDA and OpenCL kernels for NVIDIA and AMD cards respectively, which means it doesn’t follow the same code path on all GPUs but it is using an optimal path for each GPU it can handle. Unfortunately we’re having trouble getting it to recognize AMD 7900 series cards in this build, so we only have CUDA cards for the time being.

Password generation and other forms of brute force crypto are an area where the GTX 680 is particularly weak, thanks to the various compute aspects that have been stripped out in the name of efficiency. As a result it ends up below even the GTX 580 in these benchmarks, never mind AMD’s GCN cards. But with Titan/GK110 offering NVIDIA’s full compute performance, it rips through this task. In fact it more than doubles performance from both the GTX 680 and the GTX 580, indicating that the huge performance gains we’re seeing are coming from not just the additional functional units, but from architectural optimizations and new instructions that improve overall efficiency and reduce the number of cycles needed to complete work on a password.
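For readers unfamiliar with the workload, here is a minimal sketch of what a brute force password search does: generate every candidate, hash it, and compare against the target. This is not AOPR's algorithm. The function name `brute_force`, the lowercase-only alphabet, and the single SHA-1 hash are all assumptions chosen for illustration, and real document formats generally use far more expensive key derivation than one hash. It does show why the task is so parallel-friendly: every candidate can be tested independently of the others.

```python
import hashlib
from itertools import product
from string import ascii_lowercase


def brute_force(target_hex, alphabet=ascii_lowercase, max_len=4):
    """Try every candidate up to max_len characters; return the match or None.

    Hypothetical helper for illustration only. A single SHA-1 stands in
    for whatever key derivation a real recovery tool has to run.
    """
    for length in range(1, max_len + 1):
        # product() enumerates every string of this length over the alphabet.
        for chars in product(alphabet, repeat=length):
            candidate = "".join(chars)
            digest = hashlib.sha1(candidate.encode("utf-8")).hexdigest()
            if digest == target_hex:
                return candidate
    return None


if __name__ == "__main__":
    target = hashlib.sha1(b"gpu").hexdigest()
    print(brute_force(target))
```

On a GPU the inner loop is what gets spread across thousands of threads, each hashing its own slice of the candidate space.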

Altogether at 33K passwords/second Titan is not just faster than GTX 680, but it’s faster than GTX 690 and GTX 680 SLI, making this a test where one big GPU (and its full compute performance) is better than two smaller GPUs. It will be interesting to see where the 7970 GHz Edition and other Tahiti cards place in this test once we can get them up and running.
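To put a rate like 33K passwords/second in perspective, the rough sketch below estimates how long it would take to exhaust a keyspace at a fixed rate. The 33,000 figure comes from the result above; the alphabet sizes, password lengths, and the helper names `keyspace` and `seconds_to_exhaust` are assumptions for illustration, and the estimate ignores the fact that a real search usually stops well before the last candidate.

```python
def keyspace(alphabet_size, max_len):
    """Number of candidate passwords of length 1..max_len."""
    return sum(alphabet_size ** n for n in range(1, max_len + 1))


def seconds_to_exhaust(candidates, rate_per_sec):
    """Worst-case time to try every candidate at a constant rate."""
    return candidates / rate_per_sec


if __name__ == "__main__":
    RATE = 33_000  # passwords/second, Titan's result in this test

    # Assumed scenarios: lowercase only (26) and letters plus digits (62).
    for alphabet_size, max_len in ((26, 6), (62, 6), (62, 8)):
        total = keyspace(alphabet_size, max_len)
        hours = seconds_to_exhaust(total, RATE) / 3600
        print(f"{alphabet_size} symbols, up to {max_len} chars: "
              f"{total:,} candidates, about {hours:,.1f} hours")
```

The keyspace grows exponentially with password length, which is why a doubling of throughput buys less than one extra character of search depth.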

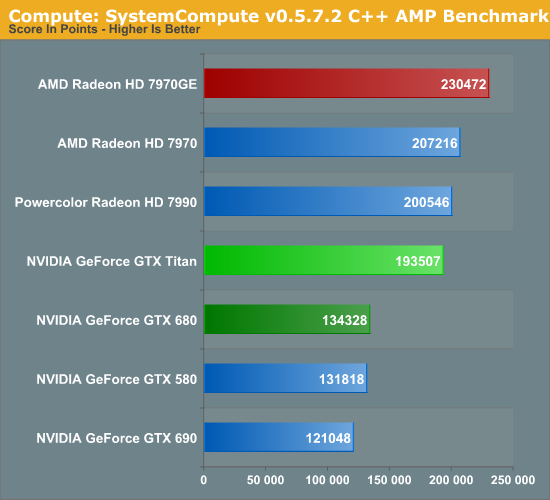

Our final test in our abbreviated compute benchmark suite is our very own Dr. Ian Cutress’s SystemCompute benchmark, which is a collection of several different fundamental compute algorithms. Rahul went into greater detail on this back in his look at Titan’s compute performance, but I wanted to go over it again quickly with the full lineup of cards we’ve tested.

Surprisingly, for all of its performance gains relative to GTX 680, Titan still falls notably behind the 7970GE here. Given Titan’s theoretical performance and the fundamental nature of this test we would have expected it to do better. But without additional cross-platform tests it’s hard to say whether this is something where AMD’s GCN architecture continues to shine over Kepler, or if perhaps it’s a weakness in NVIDIA’s current DirectCompute implementation for GK110. Time will tell on this one, but in the meantime this is the first solid sign that Tahiti may be more of a match for GK110 than it’s typically given credit for.

337 Comments

CeriseCogburn - Saturday, February 23, 2013 - link

Here you are arac, some places can do things this place claims it cannot.

See the massive spanking amd suffers.

http://www.bit-tech.net/hardware/2013/02/21/nvidia...

That's beyond a 40% lead for the nvidia Titan above and beyond the amd flagship. LOL

No problem. No cpu limited crap. I guess some places know how to test.

TITAN 110 min 156 max

7970ghz 72 min 94 max

TheJian - Sunday, February 24, 2013 - link

Jeez, I wish I had read your post before digging up my links. Yours is worse than mine making my point on skyrim even more valid.

In your link the GTX670 takes out the 7970ghz even at 2560x1200. I thought all these dumb NV cards were bandwidth limited ;) Clear separation on all cards in this "cpu limited" benchmark on ALL resolutions.

Hold on let me wrap my head around this...So with your site, and my 3 links to skyrim benchmarks in my posts (one of them right here at anandtech telling how to add gfx, their 7970ghz article), 3/4 of them showing separations according to their GPU class...Doesn't that mean they are NOT cpu bound? Am I missing something here? :) Are you wondering if Ryan benched skyrim with the hi-res pack after it came out, found it got smacked around by NV and dropped it? I mean he's claiming he tested it right above your post and found skyrim cpu limited. Is he claiming he didn't think adding a HI-RES PACK that's official would NOT add graphical slowdowns? This isn't a LOW-RES pack right?

http://www.anandtech.com/show/6025/radeon-hd-7970-...

Isn't that Ryan's article:

"We may have to look at running extra graphics effects (e.g. TrSSAA/AAA) to thin the herd in the future."...Yep I think that's his point. PUT IN THE FREAKIN PACK. Because Skyrim didn't just become worthless as a benchmark as TONS are playing it, unlike Crysis Warhead and Dirt Showdown. Which you can feel free to check the server link I gave, nobody playing Warhead today either. I don't think anyone ever played Showdown to begin with (unlike warhead which actually was fun in circa 2008).

http://www.vgchartz.com/game/23202/crysis-warhead/

Global sales .01mil...That's a decimal point right?

http://www.vgchartz.com/game/70754/dirt-showdown/

It hasn't reached enough sales to post the decimal point. Heck xbox360 only sold 140K units globally. Meanwhile:

http://www.vgchartz.com/game/49111/the-elder-scrol...

2.75million sold (that's not a decimal any more)! Which one should be in the new game suite? Mods and ratings are keeping this game relevant for a long time to come. That's the PC sales ONLY (which is all we're counting here anyway).

http://elderscrolls.wikia.com/wiki/Official_Add-on...

The high-res patch is an OFFICIAL addon. Can't see why it's wrong to benchmark what EVERYONE would download to check out that bought the game, released feb 2012. Heck benchmark dawnguard or something. It came Aug 2012. I'm pretty sure it's still selling and being played. PCper, techpowerup, anandtech's review of the 7970ghz and now this bit-tech.net site. Skyrim's not worth benching but all 4 links show what to do (up the gfx!) and results come through fine and 3 sites show NV winning (your site of course the one of the four that ignores the game - hmm, sort of shows my bias comment doesn't it?). No cpu limit at 3 other sites who installed the OFFICIAL pack I guess, but you can't be bothered to test a HI-RES pack that surely stresses a gpu harder than without? What are we supposed to believe here?

Looks like you may have a point Cerise.

Thanks for the link BTW:

http://www.bit-tech.net/hardware/2013/02/21/nvidia...

You can consider witcher 2 added as a 15th benchmarkable game you left out Ryan. Just wish they'd turn on ubersampling. As mins are ~55 for titan here even at 2560x1600. Clearly with it on this would be a NON cpu limited game too (it isn't cpu limited even off). Please refrain from benchmarking games with less than a 100K units in sales. By definition that means nobody is playing them OR buying them right? And further we can extrapolate that nobody cares about their performance. Can anyone explain why skyrim with hires (and an addon that came after) is excluded but TWO games with basically ZERO sales are in here as important games that will be hanging with us for a few years?

CeriseCogburn - Tuesday, February 26, 2013 - link

Yes, appreciate it thanks, and your links I'll be checking out now.

They already floated the poster vote article for the new game bench lineup, and what was settled upon already was Never Settle heavily flavored, so don't expect anything but the same or worse here.

That's how it goes and there's a lot of pressure and PC populism and that great 2 week yearly vacation, and certainly attempting to prop a dying amd ship that "enables" this whole branch of competition for review sites is certainly not ignored. A hand up, a hand out, give em hand !

lol

Did you see where Wiz there at TPU in Titan review mentioned nVidia SLI plays 18 of 19 in house game tests and amd CF fails on 6 of them... currently fails on 6 of 19.

" NVIDIA has done a very good job here in the past, and out of the 19 games in our test suite, SLI only fails in F1 2012. Compare that to 6 out of 19 failed titles with AMD CrossFire. "

http://www.techpowerup.com/reviews/NVIDIA/GeForce_...

So the amd fanboys have a real problem recommending 79xx rather 7xxx or 6xxx doubled or tripled up as an alternative with equal or better cost and "some performance wins" when THIRTY THREE PERCENT OF THE TIME AMD CF FAILS.

I'm sorry, I was supposed to lie about that and claim all of amd's driver issues are behind it and it's all equal and amd used to have problems and blah blah blah the green troll company has driver issues too and blah blah blah...

CeriseCogburn - Tuesday, February 26, 2013 - link

Oh man, investigative reporting....lol

" http://www.vgchartz.com/game/23202/crysis-warhead/

Global sales .01mil...That's a decimal point right?

http://www.vgchartz.com/game/70754/dirt-showdown/

It hasn't reached enough sales to post the decimal point. Heck xbox360 only sold 140K units globally. Meanwhile:

http://www.vgchartz.com/game/49111/the-elder-scrol...

2.75million sold (that's not a decimal any more)! Which one should be in the new game suite? "

Well it's just a mad, mad, amd world ain't it.

You have a MASSIVE point there.

Excellent link, that's a bookmark.

Zingam - Thursday, February 21, 2013 - link

GeForce Titan "That means 1/3 FP32 performance, or roughly 1.3TFLOPS"

Playstation 4 "High-end PC GPU (also built by AMD), delivering 1.84TFLOPS of performance"

Can somebody explain to me how that above could be? GeForce Titan $999 graphics card has much lesser performance than what would be in basically (if I understand properly) an APU by AMD for $500 for the full system??? I doubt that Sony will accept $1000 or more loss but what I find even more doubtful that an APU could have that much performance.

Please, somebody clarify!

chizow - Thursday, February 21, 2013 - link

1/3 FP32 is double-precision FP64 throughput for Titanic. The PS4 must be quoting single-precision FP32 throughput and 1.84TFlops is nothing impressive in that regard. I believe GT200/RV670 were producing numbers in that range for single-precision FLOPs.

Blazorthon - Thursday, February 21, 2013 - link

You are correct about PS4 quoting single precision and such, but I'm sure that you're wrong about GT200 being anywhere near 1.8TFLOPS in single precision. That number is right around the Radeon 7850.

chizow - Saturday, February 23, 2013 - link

GT200 was around 1TFlop, I was confused because the same gen cards (RV670) were in the 1.2-1.3TFLOP range due to AMD's somewhat overstated VLIW5 theoretical peak numbers. Cypress for example was ~2.5TFlops so I wasn't too far off the mark in quoted TFLOPs.

But yes if PS4 is GCN the performance would be closer to a 7850 in an apples to apples comparison.

frogger4 - Thursday, February 21, 2013 - link

Yep, the quoted number for the PS4 is the single precision performance. It's just over the single precision FP for the HD7850 at 1.76TFLOPS, and it has one more compute unit, so that makes sense. The double precision for Pitcairn GPUs is 1/16th of that.

The single precision performance for the Titan is (more than) three times the 1.3Tflop double precision number. Hope that clears it up!

StealthGhost - Thursday, February 21, 2013 - link

Why are the settings/resolution used for, at least Battlefield 3, not consistent with those used in previous tests on GPUs, most directly those in Bench? Makes it harder to compare.

Bench is such a great tool, it should be constantly updated and completely relevant, not discarded like it seems to be with these tests.