NVIDIA’s GeForce GTX Titan Review, Part 2: Titan's Performance Unveiled

by Ryan Smith & Rahul Garg on February 21, 2013 9:00 AM EST

Battlefield 3

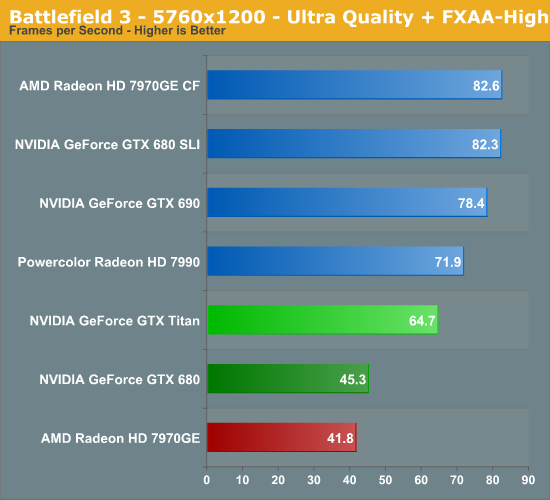

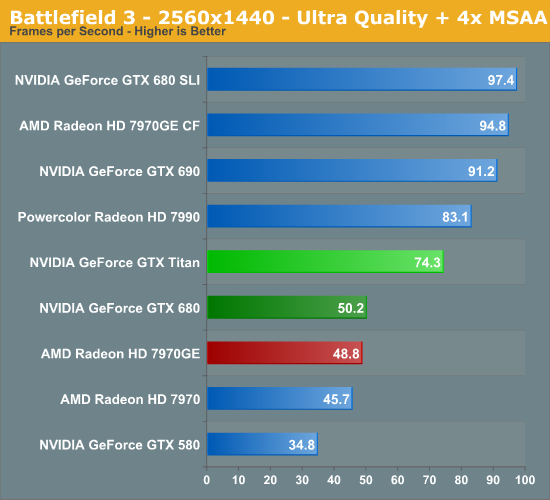

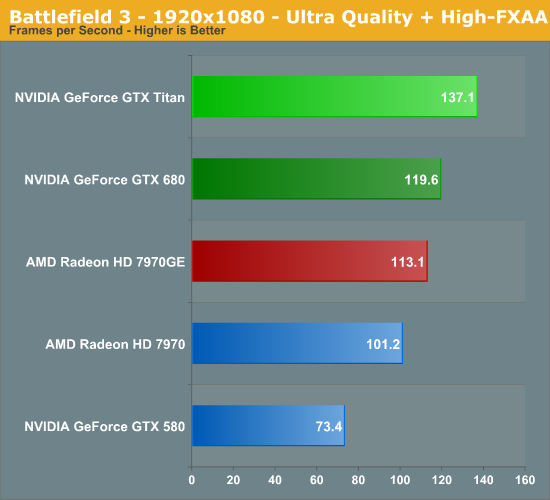

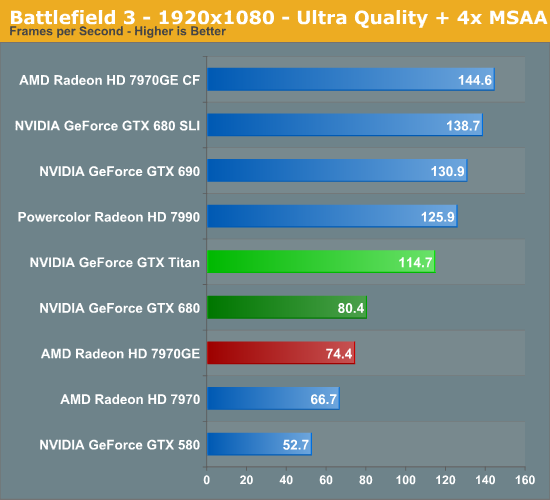

The final action game in our benchmark suite is Battlefield 3, DICE’s 2011 multiplayer military shooter. Its ability to pose a significant challenge to GPUs has been dulled somewhat by time and drivers, but it’s still a challenge if you want to hit the highest settings at the highest resolutions at the highest anti-aliasing levels. Furthermore, while we can crack 60fps in single player mode, our rule of thumb here is that multiplayer framerates will dip to half our single player framerates, so even a high single player framerate may not be high enough.

AMD and NVIDIA have gone back and forth in this game over the past year, and as of late NVIDIA has held a very slight edge with the GTX 680. That means Titan has ample opportunity to push well past the 7970GE, besting AMD’s single-GPU contender by 52% at 2560. Even the GTX 680 is left well behind, with Titan clearing it by 48%.

This is enough to get Titan to 74fps at 2560 with 4xMSAA, which is just fast enough to make BF3 playable at those settings with a single GPU. Otherwise, by the time we drop to 1920, even the 120Hz gamers should be relatively satisfied.
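To put those percentages in context, here is a rough sketch of the arithmetic behind them. It takes only the figures quoted in the text (74fps for Titan at 2560, leads of 52% and 48%, and the halve-it rule of thumb for multiplayer) and works backwards. The function names are our own, and the derived framerates are implied values, not readings from the charts.

```python
# Back-of-the-envelope arithmetic using only figures quoted in the text.
# Derived framerates are implied by the stated percentages, not measured.

TITAN_FPS_2560 = 74.0  # Titan at 2560 with 4xMSAA, per the text


def implied_baseline_fps(faster_fps: float, lead_percent: float) -> float:
    """Framerate of the slower card, given the faster card's framerate and lead."""
    return faster_fps / (1.0 + lead_percent / 100.0)


def multiplayer_estimate(single_player_fps: float) -> float:
    """Rule of thumb from the text: multiplayer dips to half of single player."""
    return single_player_fps / 2.0


hd7970ge_fps = implied_baseline_fps(TITAN_FPS_2560, 52)  # Titan leads by 52%
gtx680_fps = implied_baseline_fps(TITAN_FPS_2560, 48)    # Titan leads by 48%
titan_multiplayer_fps = multiplayer_estimate(TITAN_FPS_2560)

if __name__ == "__main__":
    print(f"Implied 7970GE at 2560:  {hd7970ge_fps:.1f} fps")
    print(f"Implied GTX 680 at 2560: {gtx680_fps:.1f} fps")
    print(f"Titan multiplayer floor: {titan_multiplayer_fps:.1f} fps")
```

By this estimate the 7970GE and GTX 680 both land at roughly 49-50fps in single player, which the same rule of thumb would halve again for multiplayer, while Titan's 74fps leaves it with an estimated 37fps in the worst case.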

Moving on, as always multi-GPU cards end up being faster, but not necessarily immensely so. 22% for the GTX 690 and just 12% for the 7990 are smaller leads than we’ve seen elsewhere.

337 Comments


chizow - Friday, February 22, 2013 - link

Idiot...has the top end card cost 2x as much every time? Of course not!!! Or we'd be paying $100K for GPUs!!!

CeriseCogburn - Saturday, February 23, 2013 - link

Stop being an IDIOT.

What is the cost of the 7970 now, vs what I paid for it at release, you insane gasbag ?

You seem to have a brainfart embedded in your cranium, maybe you should go propose to Charlie D.

chizow - Saturday, February 23, 2013 - link

It's even cheaper than it was at launch, $380 vs. $550, which is the natural progression....parts at a certain performance level get CHEAPER as new parts are introduced to the market. That's called progress. Otherwise there would be NO INCENTIVE to *upgrade* (look this word up please, it has meaning).

You will not pay the same money for the same performance unless the part breaks down, and semiconductors under normal usage have proven to be extremely venerable components. People expect progress, *more* performance at the same price points. People will not pay increasing prices for things that are not essential to life (like gas, food, shelter), this is called the price inelasticity of demand.

This is a basic lesson in business, marketing, and economics applied to the semiconductor/electronics industry. You obviously have no formal training in any of the above disciplines, so please stop commenting like a ranting and raving idiot about concepts you clearly do not understand.

CeriseCogburn - Saturday, February 23, 2013 - link

They're ALREADY SOLD OUT STUPID IDIOT THEORIST.

LOL

The true loser, an idiot fool, wrong before he's done typing, the "education" is his brainwashed fried gourd Charlie D OWNZ.

chizow - Sunday, February 24, 2013 - link

And? There's going to be some demand for this card just as there was demand for the 690, it's just going to be much lower based on the price tag than previous high-end cards. I never claimed anything otherwise.

I outlined the expectations, economics, and buying decisions in general for the tech industry and in general, they hold true. Just look around and you'll get plenty of confirmation where people (like me) who previously bought 1, 2, 3 of these $500-650 GPUs are opting to pass on a single Titanic at $1000.

Nvidia's introduction of an "ultra-premium" range is an unsustainable business model because it assumes Nvidia will be able to sustain this massive performance lead over AMD. Not to mention they will have a harder time justifying the price if their own next-gen offering isn't convincingly faster.

CeriseCogburn - Tuesday, February 26, 2013 - link

You're not the nVidia CEO nor their bean counter, you whacked out fool.

You're the IDIOT that babbles out stupid concepts with words like "justifying", as you purport to be an nVidia marketing hired expert.

You're not. You're a disgruntled indoctrinated crybaby who can't move on with the times, living in a false past, and waiting for a future not here yet.

Oxford Guy - Thursday, February 21, 2013 - link

The article's first page has the word luxury appearing five times. The blurb, which I read prior to reading the article's first page has luxury appearing twice.

That is 7 uses of the word in just a bit over one page.

Let me guess... it's a luxury product?

CeriseCogburn - Tuesday, February 26, 2013 - link

It's stupid if you ask me. But that's this place, not very nVidia friendly after their little didn't get the new 98xx fiasco, just like Tom's.

A lot of these top tier cards are a luxury, not just the Titan, as one can get by with far less, the problem is, the $500 cards fail often at 1920x resolution, and this one perhaps can be said to have conquered just that, so here we have a "luxury product" that really can't do it's job entirely, or let's just say barely, maybe, as 1920X is not a luxury resolution.

Turn OFF and down SOME in game features, and that's generally, not just extreme case.

People are fools though, almost all the time. Thus we have this crazed "reviews" outlook distortion, and certainly no such thing as Never Settle.

We're ALWAYS settling when it comes to video card power.

araczynski - Thursday, February 21, 2013 - link

too bad there's not a single game benchmark in that whole article that I give 2 squirts about. throw in some RPG's please, like witcher/skyrim.

Ryan Smith - Thursday, February 21, 2013 - link

We did test Skyrim only to ultimately pass on it for a benchmark. The problem with Skyrim (and RPGs in general) is that they're typically CPU limited. In this case our charts would be nothing but bar after bar at roughly 90fps, which wouldn't tell us anything meaningful about the GPU.