NVIDIA’s GeForce GTX Titan Review, Part 2: Titan's Performance Unveiled

by Ryan Smith & Rahul Garg on February 21, 2013 9:00 AM EST

Crysis: Warhead

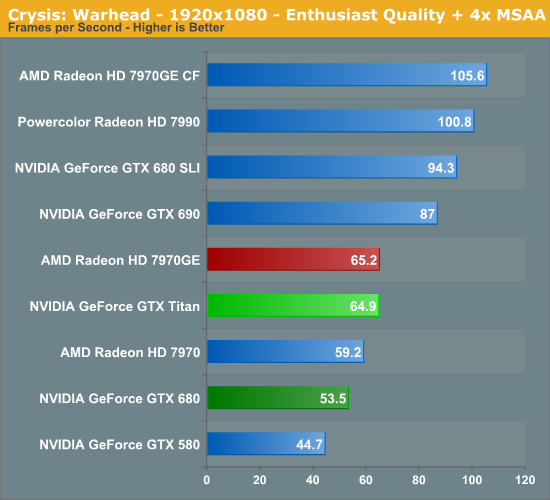

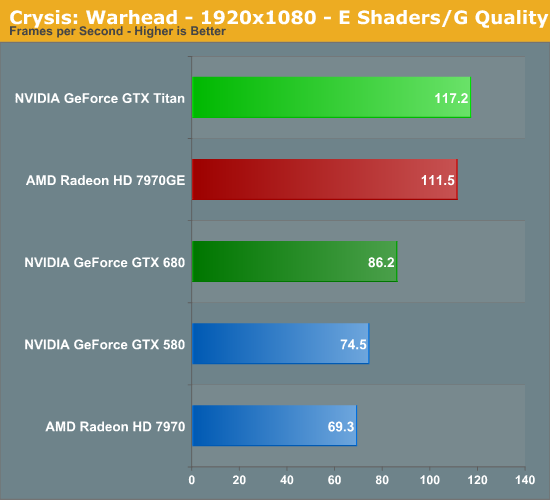

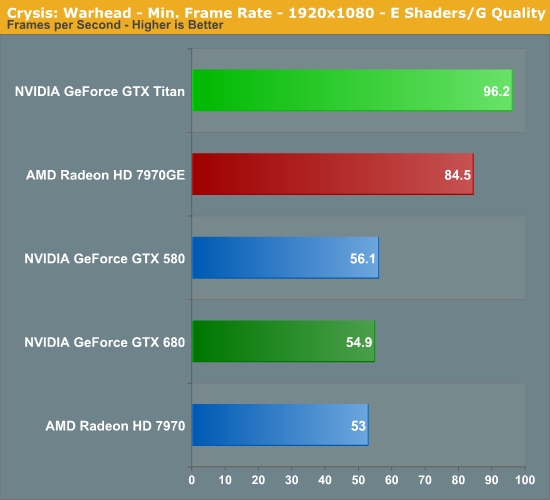

Up next is our legacy title for 2013, Crysis: Warhead. The stand-alone expansion to 2007’s Crysis, at over 4 years old Crysis: Warhead can still beat most systems down. Crysis was intended to be future-looking as far as performance and visual quality goes, and it has clearly achieved that. We’ve only finally reached the point where single-GPU cards have come out that can hit 60fps at 1920 with 4xAA.

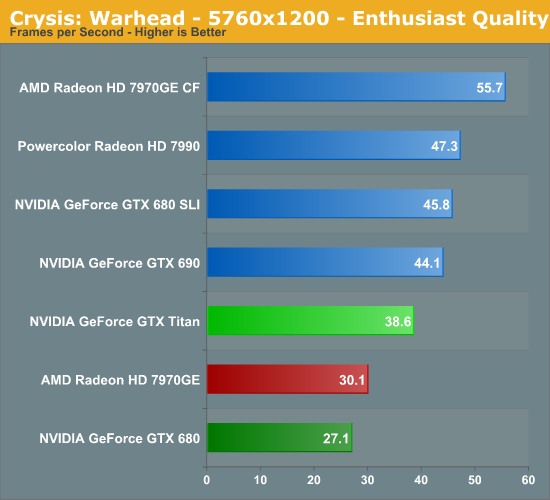

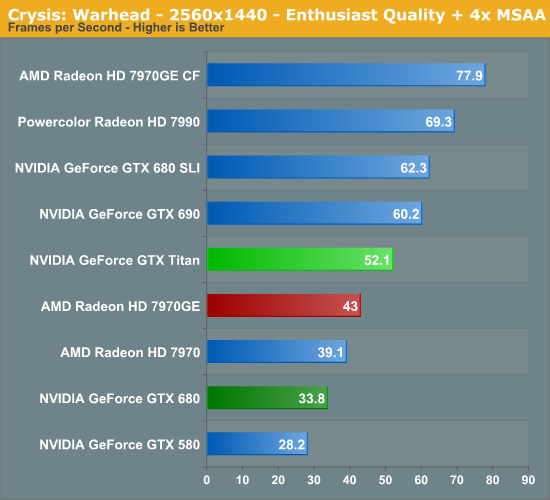

At 2560 we still have a bit of a distance to go before any single-GPU card can crack 60fps. Short of that, Titan is the winner as expected. Leading the GTX 680 by 54%, this is Titan’s single biggest win over its predecessor, actually exceeding the theoretical performance advantage based on the increase in functional units alone. For some reason GTX 680 never did gain much in the way of performance here versus the GTX 580, and while it’s hard to argue that Titan has reversed that, it has at least corrected some of the problem in order to push more than 50% out.
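For a rough sense of what that theoretical advantage looks like, the sketch below multiplies shader count by base clock for each card. The core counts and clocks are assumptions taken from NVIDIA's public specifications rather than from this page, and the estimate ignores boost clocks, memory bandwidth and ROP throughput, so treat it as a back-of-the-envelope figure rather than the exact method behind the comparison above.

```python
# Back-of-the-envelope estimate of Titan's theoretical shader advantage.
# Assumption: core counts and base clocks are NVIDIA's published specs,
# not figures taken from this page.

def shader_throughput(cuda_cores: int, base_clock_mhz: float) -> float:
    """Relative throughput proxy: cores x clock (arbitrary units)."""
    return cuda_cores * base_clock_mhz


def percent_lead(a: float, b: float) -> float:
    """How much larger a is than b, as a percentage."""
    return (a / b - 1.0) * 100.0


titan = shader_throughput(2688, 837)    # GTX Titan (GK110)
gtx680 = shader_throughput(1536, 1006)  # GTX 680 (GK104)

theoretical_lead = percent_lead(titan, gtx680)
measured_lead = 54.0  # Crysis: Warhead at 2560, as quoted in the text

print(f"Theoretical lead: {theoretical_lead:.1f}%")
print(f"Measured lead:    {measured_lead:.1f}%")
print(f"Measured exceeds theoretical: {measured_lead > theoretical_lead}")
```

Under these assumptions the theoretical lead comes out in the mid-40% range, which is consistent with the observation that a 54% win is larger than the unit count and clocks alone would predict.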

In the meantime, with GTX 680’s languid performance, this has been a game the latest Radeon cards have regularly cleared. For whatever reason they’re a good match for Crysis, meaning even with all its brawn, Titan can only clear the 7970GE by 21%.

On the other hand, our multi-GPU cards are a mixed bag. Once more Titan loses to both, but the GTX 690 only leads by 15% thanks to GK104’s aforementioned weak Crysis performance. Meanwhile the 7990 takes a larger lead at 33%.

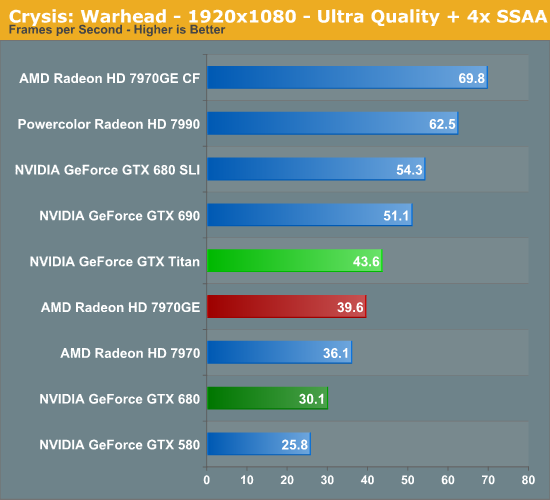

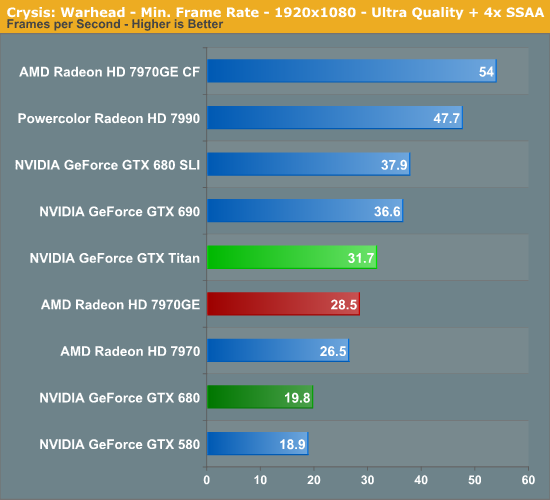

I’d also note that we’ve thrown in a “bonus round” here just to see when Crysis will be playable at 1080p with its highest settings and with 4x SSAA for that picture-perfect experience. As it stands AMD multi-GPU cards can already cross 60fps, but for everything else we’re probably a generation off yet before Crysis is completely and utterly conquered.

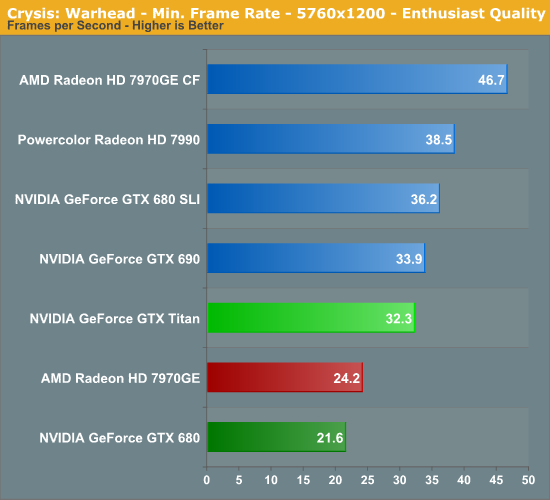

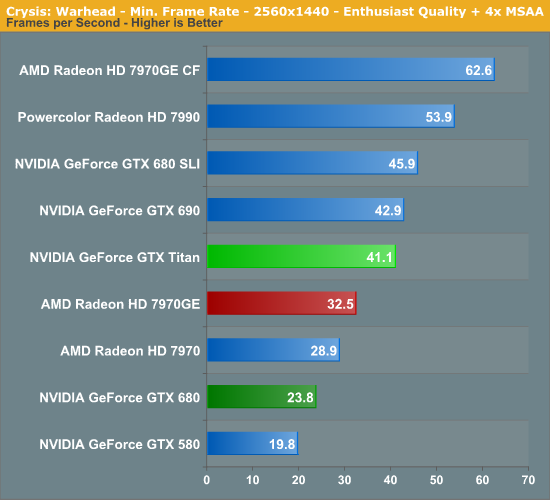

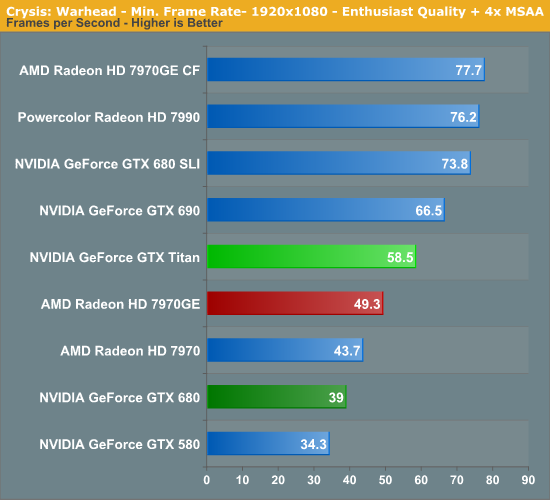

Moving on, we once again have minimum framerates for Crysis.

When it comes to Titan, the relative improvement in minimum framerates over GTX 680 is nothing short of obscene. Whatever it was that was holding back GTX 680 is clearly having a hard time slowing down Titan, leading to Titan offering 71% better minimum framerates. There’s clearly much more going on here than just an increase in functional units.

Meanwhile, though Titan’s gains here over the 7970GE aren’t quite as high as they were with the GTX 680, the lead over the 7970GE still grows a bit to 26%. As for our multi-GPU cards, this appears to be a case where SLI is struggling; the GTX 690 is barely faster than Titan here. Though at 31% faster than Titan, the 7990 doesn’t seem to be faltering much.

337 Comments

Ryan Smith - Thursday, February 21, 2013 - link

PCI\VEN_10DE&DEV_1005&SUBSYS_103510DE

I have no idea what a Tesla card's would be, though.

alpha754293 - Thursday, February 21, 2013 - link

I don't suppose you would know how to tell the computer/OS that the card has a different PCI DevID other than what it actually is, would you?

NVIDIA Tesla C2075 PCI\VEN_10DE&DEV_1096

Hydropower - Friday, February 22, 2013 - link

PCI\VEN_10DE&DEV_1022&SUBSYS_098210DE&REV_A1

For the K20c.
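The hardware IDs quoted in this thread follow the Windows PCI identifier layout: a vendor ID, a device ID, and optionally a subsystem field (subsystem ID followed by subsystem vendor ID) and a revision. As a minimal sketch, and assuming that layout holds for every string, they can be split apart like this; `parse_pci_hardware_id` is a hypothetical helper name, not part of any driver or OS tool.

```python
import re

# Matches the KEY_HEX fields of a Windows-style PCI hardware ID.
_FIELD = re.compile(r"(VEN|DEV|SUBSYS|REV)_([0-9A-Fa-f]+)")


def parse_pci_hardware_id(hardware_id: str) -> dict:
    """Hypothetical helper: split a PCI hardware ID string into its fields."""
    if not hardware_id.upper().startswith("PCI\\"):
        raise ValueError(f"not a PCI hardware ID: {hardware_id!r}")

    fields = {key: value.upper() for key, value in _FIELD.findall(hardware_id)}
    result = {
        "vendor": fields.get("VEN"),
        "device": fields.get("DEV"),
        "revision": fields.get("REV"),
    }

    # Assumption: SUBSYS is 8 hex digits, subsystem ID then subsystem vendor.
    subsys = fields.get("SUBSYS")
    if subsys is not None and len(subsys) == 8:
        result["subsystem"] = subsys[:4]
        result["subsystem_vendor"] = subsys[4:]
    return result


# The three IDs posted in the comments above.
posted_ids = {
    "GTX Titan": r"PCI\VEN_10DE&DEV_1005&SUBSYS_103510DE",
    "Tesla C2075": r"PCI\VEN_10DE&DEV_1096",
    "Tesla K20c": r"PCI\VEN_10DE&DEV_1022&SUBSYS_098210DE&REV_A1",
}

for card, hardware_id in posted_ids.items():
    print(card, parse_pci_hardware_id(hardware_id))
```

All three strings share vendor `10DE` (NVIDIA) and differ in the device field, which is the part the question above is about.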

brucethemoose - Thursday, February 21, 2013 - link

"This TDP limit is 106% of Titan’s base TDP of 250W, or 265W. No matter what you throw at Titan or how you cool it, it will not let itself pull more than 265W sustained."

The value of the Titan isn't THAT bad at stock, but 106%? Is that a joke!?

Throw in an OC for OC comparison, and this card is absolutely ridiculous. Take the 7970 GE... 1250MHz is a good, reasonable 250MHz OC on air, a nice 20%-25% boost in performance.

The Titan review sample is probably the best case scenario and can go 27MHz past turbo speed, 115MHz past base speed, so maybe 6%-10%. That $500 performance gap starts shrinking really, really fast once you OC, and for god sakes, if you're the kind of person who's buying a $1000 GPU, you shouldn't intend to leave it at stock speeds.

I hope someone can voltmod this card and actually make use of a waterblock, but there's another issue... Nvidia is obviously setting a precedent. Unless they change this OC policy, they won't be seeing any of my money anytime soon.

JarredWalton - Thursday, February 21, 2013 - link

As someone with a 7970GE, I can tell you unequivocally that 1250MHz on air is not at all a given. My card can handle many games at 1150MHz, but with other titles and applications (say, running some compute stuff) I'm lucky to get stability for more than a day at 1050MHz. Perhaps with enough effort playing with voltage mods and such I could improve the situation, but I'm happier living with a card for a couple years that doesn't crap out because of excessively high voltages.

CeriseCogburn - Saturday, February 23, 2013 - link

"After a few hours of trial and error, we settled on a base of the boost curve of 980MHz, resulting in a peak boost clock of a mighty 1,123MHz; a 12 per cent increase over the maximum boost clock of the card at stock.

Despite the 3GB of GDDR5 fitted on the PCB's rear lacking any active cooling it too proved more than agreeable to a little tweaking and we soon had it running at 1,652MHz (6.6GHz effective), a healthy ten per cent increase over stock.

With these 12-10 per cent increases in clock speed our in-game performance responded accordingly."

http://www.bit-tech.net/hardware/2013/02/21/nvidia...

Oh well, 12 is 6 if it's nVidia bash time, good job mr know it all.

Hrel - Thursday, February 21, 2013 - link

YES! 1920x1080 has FINALLY arrived. It only took 6 years from when it became mainstream but it's FINALLY here! FINALLY! I get not doing it on this card, but can you guys PLEASE test graphics cards, especially laptop ones, at 1600x900 and 1280x720. A lot of the time when on a budget playing games at a lower resolution is a compromise you're more than willing to make in order to get decent quality settings. PLEASE do this for me, PLEASE!

JarredWalton - Thursday, February 21, 2013 - link

Um... we've been testing 1366x768, 1600x900, and 1920x1080 as our graphics standards for laptops for a few years now. We don't do 1280x720 because virtually no laptops have that as their native resolution, and stretching 720p to 768p actually isn't a pleasant result (a 6.7% increase in resolution means the blurring is far more noticeable). For desktop cards, I don't see much point in testing most below 1080p -- who has a desktop not running at least 1080p native these days? The only reason for 720p or 900p on desktops is if your hardware is too old/slow, which is fine, but then you're probably not reading AnandTech for the latest news on GPU performance.

colonelclaw - Thursday, February 21, 2013 - link

I must admit I'm a little bit confused by Titan. Reading this review gives me the impression it isn't a lot more than the annual update to the top-of-the-line GPU from Nvidia.

What would be really useful to visualise would be a graph plotting the FPS rates of the 480, 580, 680 and Titan along with their release dates. From this I think we would get a better idea of whether or not it's a new stand out product, or merely this year's '780' being sold for over double the price.

Right now I genuinely don't know if I should be holding Nvidia in awe or calling them rip-off merchants.

chizow - Friday, February 22, 2013 - link

From AnandTech's 7970 Review, you can see relative GPU die sizes:

http://images.anandtech.com/doci/5261/DieSize.png

You'll also see the prices of these previous flagships have been mostly consistent, in the $500-650 range (except for a few outliers like the GTX 285 which came in hard economic times and the 8800Ultra, which was Nvidia's last ultra-premium card).

You can check some sites that use easy performance rating charts, like computerbase.de to get a quick idea of relative performance increases between generations, but you can quickly see that going from a new generation (not half-node) like G80 > GT200 > GF100 > GK100/110 should offer 50%+ increase, generally closer to the 80% range over the predecessor flagship.

Titan would probably come a bit closer to 100%, so it does outperform expectations (all of Kepler line did though), but it certainly does not justify the 2x increase in sticker price. Nvidia is trying to create a new Ultra-premium market without giving even a premium alternative. This all stems from the fact they're selling their mid-range part, GK104, as their flagship, which only occurred due to AMD's ridiculous pricing of the 7970.