The Vishera Review: AMD FX-8350, FX-8320, FX-6300 and FX-4300 Tested

by Anand Lal Shimpi on October 23, 2012 12:00 AM EST

Power Consumption

With Vishera, AMD was in a difficult position: it had to drive performance up without blowing through its 125W TDP. As the Piledriver cores were designed to do just that, Vishera benefited. Remember that Piledriver was predominantly built to take this new architecture into mobile. I went through the details of what makes Piledriver different from its predecessor (Bulldozer), but as far as power consumption is concerned, AMD moved to a different type of flip-flop in Piledriver that increased complexity on the design/timing end but decreased active power considerably. Basically, it made more work for AMD but resulted in a more power efficient chip without moving to a dramatically different architecture or new process node.

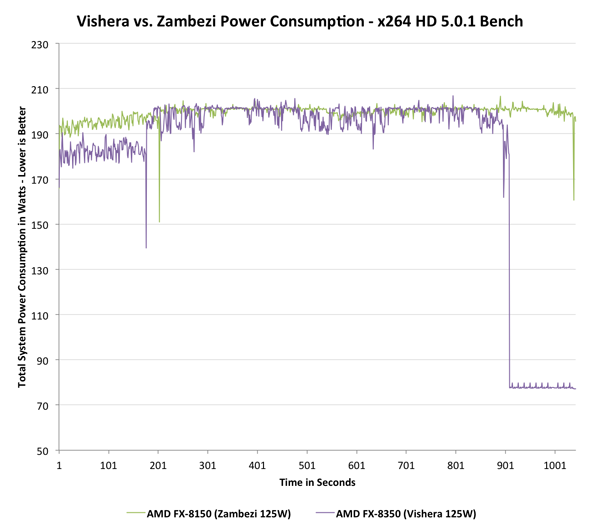

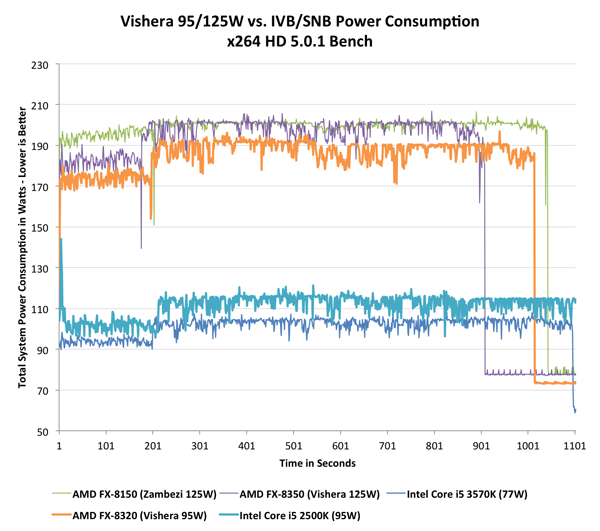

In mobile, AMD used these power saving gains to put Piledriver in mobile APUs, a place where Bulldozer never went. We saw this with Trinity, and surprisingly enough it managed to outperform the previous Llano generation APUs while improving battery life. On desktops, however, AMD used the power savings offered by Piledriver to drive clock speeds up, thus increasing performance without increasing power consumption. Since peak power didn't go up, overall power efficiency actually improves with Vishera over Zambezi. The chart below shows total system power consumption while running both passes of the x264 HD (5.0.1) benchmark:

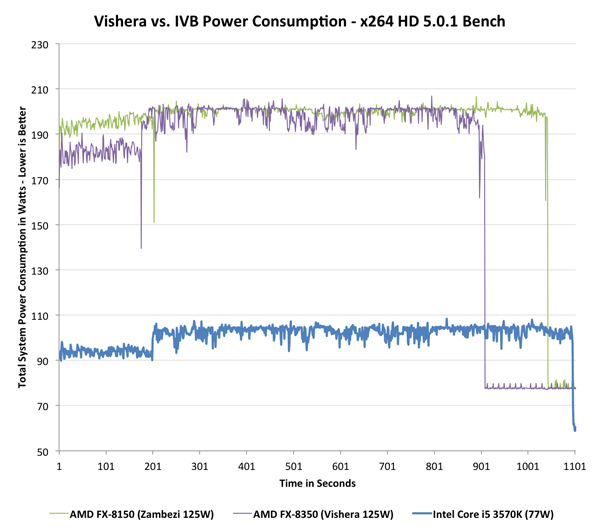

In the first pass Vishera actually draws a little less power, but once we get to the heavier second encode pass the two curves are mostly indistinguishable (Vishera still drops below Zambezi regularly). Vishera uses its extra frequency and IPC tweaks to complete the task sooner, and drive down to idle power levels, thus saving energy overall. The picture doesn't look as good though if we toss Ivy Bridge into the mix. Intel's 77W Core i5 3570K is targeted by AMD as the FX-8350's natural competitor. The 8350 is priced lower and actually outperforms the 3570K in this test, but it draws significantly more power:

The platforms aren't entirely comparable, but Intel maintains a huge power advantage over AMD. With the move to 22nm, Intel dropped power consumption over an already more power efficient Sandy Bridge CPU at 32nm. While Intel drove power consumption lower, AMD kept it constant and drove performance higher. Even if we look at the FX-8320 and toss Sandy Bridge into the mix, the situation doesn't change dramatically:

Sandy Bridge obviously consumes more than Ivy Bridge, but the gap between Vishera and either of the two Intel platforms is significant. As I mentioned earlier, this particular test runs quicker on Vishera; however, the test would have to be much longer in order to really give AMD the overall efficiency advantage.
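The trade-off between finishing sooner and drawing more power comes down to energy being power multiplied by time. The following is only a rough Python sketch of that accounting over a fixed window in which a system runs the task at load power and then idles. The helper name `energy_wh` and every wattage and duration below are hypothetical placeholders, not measurements from this review.

```python
def energy_wh(load_w, idle_w, task_s, window_s):
    """Energy in watt-hours over a fixed window: run at load, then idle."""
    if task_s > window_s:
        raise ValueError("task does not finish inside the window")
    joules = load_w * task_s + idle_w * (window_s - task_s)
    return joules / 3600.0  # 1 Wh = 3600 J


# Hypothetical figures for illustration only (not taken from the charts).
# A faster, hotter system versus a slower, cooler one over the same window.
fast_hot = energy_wh(load_w=200.0, idle_w=70.0, task_s=80.0, window_s=120.0)
slow_cool = energy_wh(load_w=120.0, idle_w=60.0, task_s=100.0, window_s=120.0)

print(f"fast/hot:  {fast_hot:.2f} Wh")
print(f"slow/cool: {slow_cool:.2f} Wh")
```

With placeholder numbers like these, the quicker system still uses more energy in total, because the time it saves is too small to offset its higher load power.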

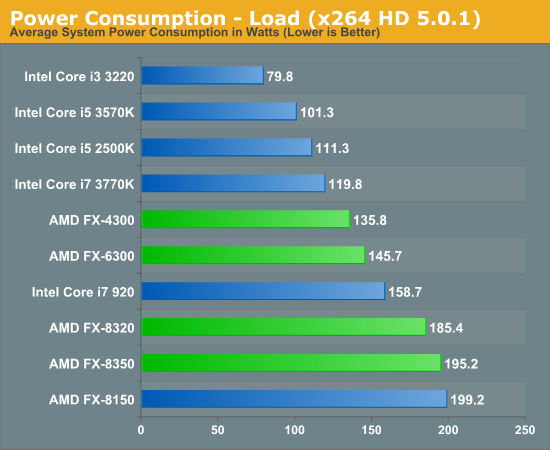

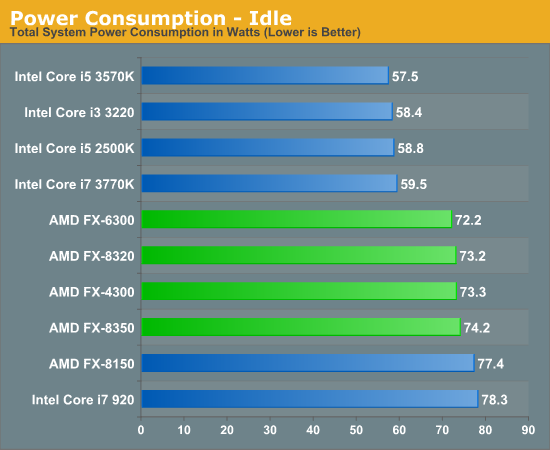

If we look at average power over the course of the two x264 encode passes, the results back up what we've seen above:

As more client PCs move towards smaller form factors, power consumption may become just as important as the single threaded performance gap. For those building in large cases this shouldn't be a problem, but for small form factor systems you'll want to go Ivy Bridge.

Note that idle power consumption can be competitive, but will obviously vary depending on the motherboard used (the Crosshair Formula V is hardly the lowest power AM3+ board available):

250 Comments


apache1649 - Friday, November 29, 2013 - link

Also X is not the only option. There are other, more functional, less bulky alternatives.

Taft12 - Tuesday, October 23, 2012 - link

Ad hominem fallacy. Address his arguments, not the slang.

Windows is inappropriate for many important purposes, it says so right there in the EULA.

jabber - Tuesday, October 23, 2012 - link

Indeed, reading a lot of comments over the past 18 months you would think AMD were still pushing their old K6-2 CPUs from the turn of the century.

Build an AMD machine or an Intel one and average Joe Customer isn't going to notice.

If honest, most of us here wouldn't either probably.

CeriseCogburn - Tuesday, October 30, 2012 - link

Funny how the same type of thing could be said in the video card wars, but all those amd fanboys won't say it there !

Isn't that strange, how the rules change, all for poor little crappy amd the loser, in any and every direction possible, even in opposite directions, so long as it fits the current crap hand up amd needs to "get there" since it's never "beenthere". LOL

whatthehey - Tuesday, October 23, 2012 - link

We've heard all of this before, and while much of what you say is true, and ignoring the idiotic "Windoze" comments not to mention the tirade on "evil Intel", Anand sums it up quite clearly:

Vishera performance isn't terrible but it's not great either. It can beat Intel in a few specific workloads (which very few people will ever run consistently), but in common workloads (lightly threaded) it falls behind by a large margin. All of this would be fine, were it not for the fact that Vishera basically sucks down a lot of power in comparison to Ivy Bridge and Sandy Bridge. Yes, that's right: even at 32nm with Sandy Bridge, Intel beats Vishera hands down.

If we assume Anand's AMD platform is a bit heavy on power use by 15W (which seems kind as it's probably more like 5-10W extra at most), then we have idle power slightly in Intel's favor but load power favors Intel by 80W. 80W in this case is 80% more power than the Intel platform, which means AMD is basically using a lot more energy just to keep up (and the Sandy Bridge i5-2500K uses about 70W less).

So go ahead and "save" all that money with your performance-for-dollar champion where you spend $200 on the CPU, $125 on the motherboard (because you still need a good motherboard, not some piece of crap), coming to $325 total for the core platform. Intel i5-3570K goes for $220 most of the time (e.g. Amazon), but you can snag it for just $190 (plus $10 shipping) from MicroCenter right now. As for motherboards, a decent Z77 motherboard will also set you back around $125.

So if we go with a higher class Intel motherboard, pay Newegg pricing on all parts, and go with a cheaper (lower class) AMD motherboard, we're basically talking $220 for the FX-8350 (price gouging by Newegg), $90 for a mediocre Biostar 970 chipset motherboard, and a total of $310. If we go Intel it's $230 for the i5-3570K, and let's go nuts and get the $150 Gigabyte board, bringing us to $380. You save $70 in that case (which is already seriously biased since we're talking high-end Gigabyte vs. mainstream Biostar).

Now, let's just go with power use of 60W Intel vs. 70W AMD, and if you never push the CPUs you only would spend about $8.75 extra per year leaving the systems on 24/7. Turn them off most of the day (8 hours per day use) and we're at less than $3 difference in power costs per year. Okay, fine, but why get a $200+ CPU if you're going to be idle and power off 2/3 of the day?

Let's say you're an enthusiast (which Beenthere obviously tries to be, even with the heavy AMD bias), so you're playing games, downloading files, and doing other complex stuff where your PC is on all the time. Hell, maybe you're even running Linux with a server on the system, so it's both loaded moderately to heavily and powered on 24/7! That's awesome, because now the AMD system uses 80W more power per day, which comes out to $70 in additional power costs per year. Oops. All of your "best performance-for-the-dollar" make believe talk goes out the window.
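The yearly power-cost figures in this comment can be reproduced with a short Python sketch. The electricity rate of $0.10 per kWh is an assumption: the comment never states a rate, but that value is consistent with its $8.75, under $3, and $70 figures. The function name `annual_cost_usd` is illustrative.

```python
def annual_cost_usd(extra_watts, hours_per_day=24.0, usd_per_kwh=0.10):
    """Yearly cost of drawing `extra_watts` more for `hours_per_day` hours.

    usd_per_kwh=0.10 is an assumed rate, not one stated in the comment.
    """
    kwh_per_year = extra_watts * hours_per_day * 365 / 1000.0
    return kwh_per_year * usd_per_kwh


# 60W vs. 70W at idle is a 10W gap; 80W is the load gap used in the comment.
idle_always_on = annual_cost_usd(10)                  # on 24/7
idle_8h = annual_cost_usd(10, hours_per_day=8)        # on 8 hours a day
load_always_on = annual_cost_usd(80)                  # loaded 24/7

print(f"idle gap, 24/7:   ${idle_always_on:.2f} per year")
print(f"idle gap, 8h/day: ${idle_8h:.2f} per year")
print(f"load gap, 24/7:   ${load_always_on:.2f} per year")
```

Note that the 80W case assumes the machine is under load every hour of the year, so it is an upper bound; lowering `hours_per_day` for the load gap shrinks the cost in proportion.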

Even the areas where AMD leads (e.g. x264), they do so by a small to moderate margin but use almost twice as much power. x264 is 26% faster on the FX-8350 compared to i5-3570K, but if you keep your system for even two years you could buy the i7-3770K (FX is only 3% faster in that case) and you'd come out ahead in terms of overall cost.
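The "come out ahead" claim is a break-even argument: an upfront saving divided by the extra running cost per year. A minimal Python sketch follows, using the $70 platform saving and the roughly $70 per year of extra power cost quoted earlier in this comment. The yearly figure holds only under the comment's own always-loaded assumption, and the helper name `break_even_years` is illustrative.

```python
def break_even_years(upfront_saving_usd, extra_cost_per_year_usd):
    """Years until extra running costs cancel an upfront saving."""
    if extra_cost_per_year_usd <= 0:
        raise ValueError("extra yearly cost must be positive")
    return upfront_saving_usd / extra_cost_per_year_usd


platform_saving = 70.0   # cheaper AMD build, per the comment's pricing
extra_power_cost = 70.0  # per year, per the comment's 24/7 load scenario

years = break_even_years(platform_saving, extra_power_cost)
# Extra purchase price that two years of that power cost would cover.
two_year_budget = 2 * extra_power_cost

print(f"break-even after {years:.1f} year(s)")
print(f"two years of extra power cost: ${two_year_budget:.0f}")
```

With a lighter duty cycle the yearly cost falls and the break-even point moves out accordingly.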

The only reason to get the AMD platform is if you run a specific workload where AMD is faster (e.g. x264), or if you're going budget and buying the FX-4300 and you don't need performance. Or if you're a bleeding heart liberal with some missing brain cells that thinks that support one gigantic corporation (AMD) makes you a good person while supporting another even more gigantic corporation (Intel) makes you bad. Let's not use products from any of the largest corporations in the world in that case, because every one of them is "evil and law violating" to some extent. Personally, I'm going to continue shopping at Walmart and using Intel CPUs until/unless something clearly better comes along.

DarkXale - Tuesday, October 23, 2012 - link

I would also add in the cost of getting a 100W more powerful power supply (at least), the cost of better cooling (either via better/more fans or a better case), and the 'cost' of having a system with a higher noise profile.

Finally - Tuesday, October 23, 2012 - link

That talk suffers from the same inability to consider any other viewpoint but that of the hardware fetishist.

If you are fapping to benchmarks in your free time you are the 1%.

The other 99% couldn't care less which company produced their CPU, GPU or whatever is working the "magic" inside their PC.

dananski - Tuesday, October 23, 2012 - link

I agree with you but stopped reading at "uses 80W more power per day" because you have ruined your trustworthiness with unit fail.

CeriseCogburn - Tuesday, October 30, 2012 - link

Hey idiot, he got everything correct except saying 80W more every second of the day, and suddenly, you the brilliant critic, no doubt, discount everything else.

Well guess what genius - if you can detect an error, and that's all you got, HE IS LARGELY CORRECT, AND EVEN CORRECT ON THE POINT concerning the unit error you criticized.

So who the gigantic FOOL is that completely ruined their own credibility by being such a moronic freaking idiot parrot, that no one should pay attention to ?

THAT WOULD BE YOU, DUMB DUMB !

Here's a news flash for all you skum sucking doofuses : Just because someone gets some minor grammatical or speech perfection issue written improperly, THEY DON'T LOSE A DAMN THING AND CERTAINLY NOT CREDIBILITY WHEN YOU FRIKKIN RETARDS CANNOT PROVE A SINGLE POINT OF THE MANY MADE INCORRECT !

It really would be nice if you babbling idiots stopped doing it. but you do it because it's stupid, it's irritating, it's incorrect, and you've seen a hundred other jerk offs like ourself pull that crap, and you just cannot resist, because that's all you've got, right ?

LOL - now you may complain about caps.

Siana - Thursday, October 25, 2012 - link

It looks like extra 10W in idle test could be largely or solely due to mainboard. There is no clear evidence to what extent and whether at all the new AMD draws more power than Intel at idle.

A high end CPU and low utilization (mostly idle time) is in fact a very useful and common case. For example, as a software developer, i spend most time reading and writing code (idle), or testing the software (utilization: 15-30% CPU, effectively two cores tops). However, in between, software needs to be compiled, and this is unproductive time which i'd like to keep as short as possible, so i am inclined to choose a high-end CPU. For GCC compiler on Linux, new AMD platform beats any i5 and a Sandy Bridge i7, but is a bit behind Ivy Bridge i7.

Same with say a person who does video editing, they will have a lot of low-utilization time too just because there's no batch job their system could perform most of the time. The CPU isn't gonna be the limiting factor while editing, but when doing a batch job, it's usually h264 export, they may also have an advantage from AMD.

In fact every task i can think of, 3D production, image editing, sound and music production, etc, i just cannot think of a task which has average CPU utilization of more than 50%, so i think your figure of 80Wh/day disadvantage for AMD is pretty much unobtainable.

And oh, no one in their right mind runs an internet-facing server as their desktop computer, for a variety of good reasons, so while Linux is easy to use as a server even at home, it ends up a limited-scope, local server, and again, the utilization will be very low. However, you are much less likely to be bothered by the services you're providing due to the sheer number of integer cores. In case you're wondering, in order to saturate a well-managed server running Linux based on up to date desktop components, no connection you can get at home will be sufficient, so it makes sense to co-locate your server at a datacenter or rent theirs. Datacenters go to great lengths to not be connected to a single point, which in your case is your ISP, but to have low-latency connections to many Internet nodes, in order to enable the servers to be used efficiently.

As for people who don't need a high end system, AMD offers better on-die graphics accelerator and at the lower end, the power consumption difference isn't gonna be big in absolute terms.

And oh, "downloading files" doesn't count as "complex stuff", it's a very low CPU utilization task, though i don't think this changes much apropos the argument.

And i don't follow that you need a 125$ mainboard for AMD, 60$ boards work quite well, you generally get away with cheaper boards for AMD than for Intel obviously even when taking into account somewhat higher power-handling capacity of the board needed.

The power/thermal advantage of course extends to cooling noise, and it makes sense to pay extra to keep the computer noise down. However, the CPU is just so rarely the culprit any longer, with GPU of a high-end computer being the noise-maker number one, vibrations induced by harddisk number two, and only to small extent the CPU and its thermal contribution.

Hardly anything of the above makes Piledriver the absolute first-choice CPU, however it's not a bad choice still.

Finally, the desktop market isn't so important, the margins are terrible. The most important bit for now for AMD is the server market. Obviously the big disadvantage vs. Intel with power consumption is there, and is generally important in server market, however with virtualization, AMD can avoid a sharp performance drop-off and allow up to about 1/3rd more VMs to be deployed per CPU package because of higher number of integer cores, which can offset higher power consumption per package per unit of performance. I think they're onto something there, they have a technology they use on mobile chips now which allows them to sacrifice top frequency but reduce surface area and power consumption. If they make a server chip based on that technology, with high performance-per-watt and 12 or more cores, that is very well within the realm of the possible and could very well be a GREAT winner in that market.