Samsung Galaxy Note 2 Review (T-Mobile) - The Phablet Returns

by Brian Klug on October 24, 2012 9:00 AM EST

The original Galaxy Note I played with was an AT&T model, and as a result was based around the same platform (I call a platform the combination of SoC and baseband) as the Skyrocket, which was AT&T’s SGS2 with LTE. That platform was Qualcomm’s Fusion 2 chipset, the very popular combination of an APQ8060 SoC (45nm dual core Scorpion at 1.5 GHz with Adreno 220 graphics) and MDM9x00 for baseband (Qualcomm’s 45nm first generation multimode LTE solution). The US-bound Galaxy S 3 variants were built around the successor of that platform, which was Qualcomm’s MSM8960 SoC (28nm dual core Krait at 1.5 GHz with Adreno 225 graphics and an onboard 2nd gen LTE baseband). The result was quick time to market with the latest and greatest silicon, improvements to performance, onboard LTE without two modems, and lower power consumption.

The Galaxy Note 2 does something different, and finally brings Samsung’s Exynos line of SoCs into devices bound for the USA, where air interfaces are a combination of LTE, WCDMA, and CDMA2000. It’s clear that the Note 2 was on a different development cycle, and this time the standalone 28nm LTE baseband I’ve been talking about forever was available for use. That part is MDM9x15, the same as what’s in the iPhone 5, Optimus G, One X+, and a bunch of other upcoming handsets. If you haven’t read our other reviews where I’ve talked about this, the reason it matters is that MDM9x15 is now natively voice enabled (MDM9x00 was not unless you ran with a Fusion platform), smaller, and lower power than its predecessor. The result is that there’s finally a multimode FDD-LTE, TDD-LTE, WCDMA (up to DC-HSPA+), EVDO (up to EVDO Rev.B) and TD-SCDMA baseband out there which doesn’t require going with a two chip solution. I could go on for pages about how this is primarily an engineering decision at this point, but the availability of MDM9x15 is why we see OEMs starting to finally ship handsets based around SoCs other than Qualcomm’s and also include LTE at the same time.

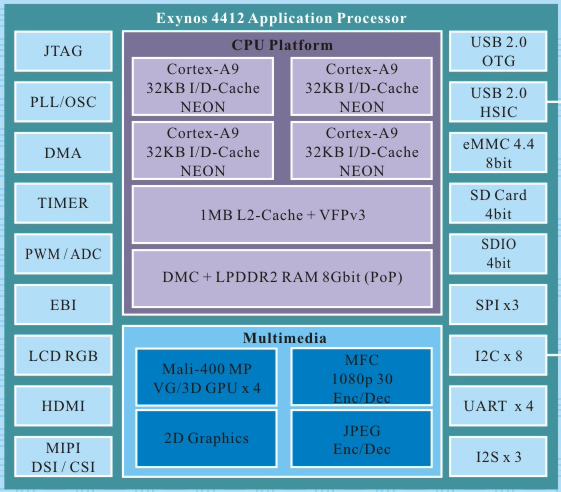

Anyhow, for a lot of people this will be the first time experiencing Samsung’s own current Exynos 4 flagship, Exynos 4412, which is of course four ARM Cortex A9 cores at a maximum of 1.6 GHz alongside ARM Mali-400MP4 graphics, built on Samsung’s 32nm HK-MG process. To the best of my knowledge, the Note 2 continues to use a 2x32 bit LPDDR2 memory interface, same as the international Galaxy S 3, though PCDDR3 is also a choice for Exynos 4412.

I’ve put together a table with specifications of the Note 2 and some other recent devices for comparison.

| Physical Comparison | Apple iPhone 5 | Samsung Galaxy S 3 (USA) | Samsung Galaxy Note (USA) | Samsung Galaxy Note 2 |
|---|---|---|---|---|
| Height | 123.8 mm (4.87") | 136.6 mm (5.38") | 146.8 mm | 151.1 mm |
| Width | 58.6 mm (2.31") | 70.6 mm (2.78") | 82.9 mm | 80.5 mm |
| Depth | 7.6 mm (0.30") | 8.6 mm (0.34") | 9.7 mm | 9.4 mm |
| Weight | 112 g (3.95 oz) | 133 g (4.7 oz) | 178 g | 180 g |
| CPU | 1.3 GHz Apple A6 (Dual Core Apple Swift) | 1.5 GHz MSM8960 (Dual Core Krait) | 1.5 GHz APQ8060 (Dual Core Scorpion) | 1.6 GHz Samsung Exynos 4412 (Quad Core Cortex A9) |
| GPU | PowerVR SGX 543MP3 | Adreno 225 | Adreno 220 | Mali-400MP4 |
| RAM | 1 GB LPDDR2 | 2 GB LPDDR2 | 1 GB LPDDR2 | 2 GB LPDDR2 |
| NAND | 16, 32, or 64 GB integrated | 16/32 GB NAND with up to 64 GB microSDXC | 16 GB NAND with up to 32 GB microSD | 16/32/64 GB NAND (?) with up to 64 GB microSDXC |
| Camera | 8 MP with LED Flash + 1.2 MP front facing | 8 MP with LED Flash + 1.9 MP front facing | 8 MP with LED Flash + 2 MP front facing | 8 MP with LED Flash + 1.9 MP front facing |
| Screen | 4" 1136 x 640 LED backlit LCD | 4.8" 1280 x 720 HD SAMOLED | 5.3" 1280 x 800 HD SAMOLED | 5.5" 1280 x 720 HD SAMOLED |
| Battery | Internal 5.45 Whr | Removable 7.98 Whr | Removable 9.25 Whr | Removable 11.78 Whr |
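To put the screen row in perspective, nominal pixel density can be worked out from resolution and diagonal size. The sketch below is only illustrative arithmetic: the function name `pixels_per_inch` is made up for this example, and the result ignores subpixel layout, so it says nothing about how sharp a given SAMOLED matrix looks in practice.

```python
import math


def pixels_per_inch(width_px, height_px, diagonal_in):
    """Nominal pixel density: diagonal length in pixels over diagonal in inches."""
    return math.hypot(width_px, height_px) / diagonal_in


# Resolution and diagonal for each device in the comparison
# (the iPhone 5 panel is 1136 x 640).
devices = {
    "Apple iPhone 5": (1136, 640, 4.0),
    "Samsung Galaxy S 3 (USA)": (1280, 720, 4.8),
    "Samsung Galaxy Note (USA)": (1280, 800, 5.3),
    "Samsung Galaxy Note 2": (1280, 720, 5.5),
}

for name, (width, height, diagonal) in devices.items():
    print(f"{name}: {pixels_per_inch(width, height, diagonal):.0f} ppi")
```

The Note 2 spreads the same 1280 x 720 grid as the Galaxy S 3 over a larger panel, so its nominal density comes out lower.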

The Galaxy Note 2 is also one of the first handsets on the market other than Nexus devices to ship running Android 4.1. This puts it at a definite advantage in some tests as we’ll show in a moment, both due to improvements from Project Butter and what appear to be even newer Mali-400 drivers. I pulled the Note 1 out of my drawer and updated it to Android 4.0.1 and ran all the same tests again.

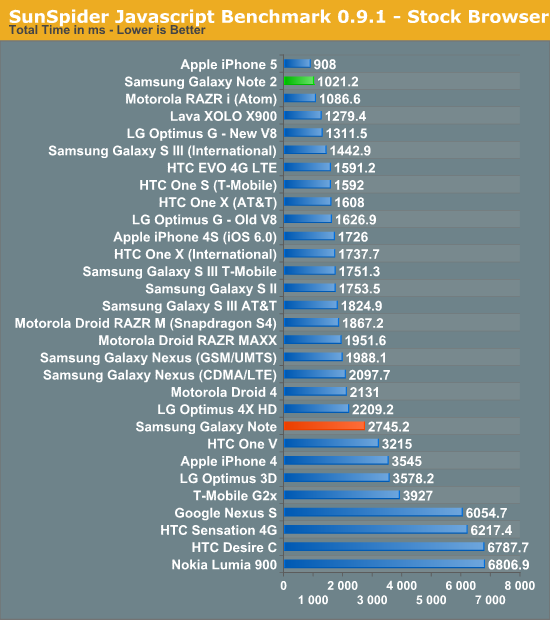

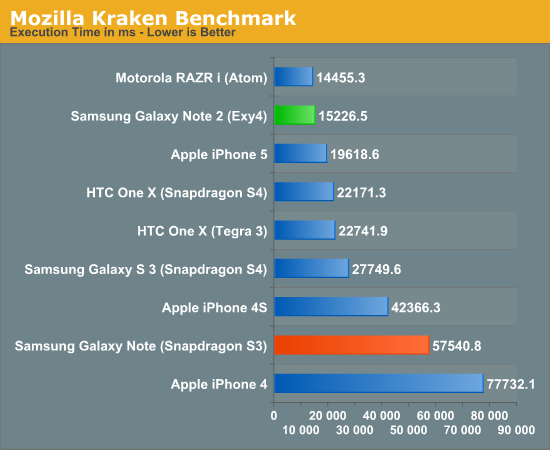

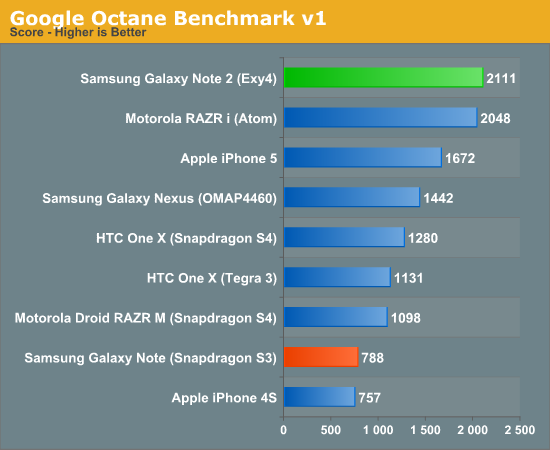

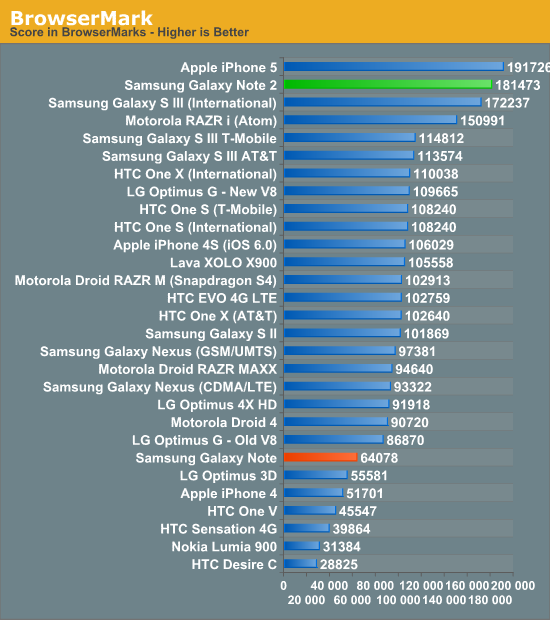

First up are some of the usual JavaScript performance tests which are run in the stock browser. Anand added a few in, and personally I think we’ve got almost an abundance of JavaScript performance emphasis right now. Again this is strongly influenced by the V8 JIT (Just In Time Compilation) library bundled with the stock browser on Android. OEMs spend a lot of time here optimizing V8 to the nuances of their particular architecture which can make a substantial difference in scores.

The usual disclosure here is that Android benchmarking is still a non-deterministic beast due to garbage collection, and I’m still not fully satisfied with everything that is available out there, but we have to make do with what we’ve got for the moment.
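One common way to cope with that kind of run-to-run variance is to repeat a test several times and report the median together with the spread. The sketch below illustrates the idea only; it is not a description of how the numbers in this review were gathered, and `run_benchmark` and `example_workload` are hypothetical names standing in for a real benchmark pass.

```python
import statistics
import time


def run_benchmark(workload, runs=5):
    """Time `workload` several times and summarise the spread of the timings."""
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        workload()
        timings.append(time.perf_counter() - start)
    return {
        "runs": runs,
        "median_s": statistics.median(timings),
        "min_s": min(timings),
        "max_s": max(timings),
    }


def example_workload():
    # Hypothetical stand-in for one pass of a real benchmark.
    sum(i * i for i in range(100_000))


summary = run_benchmark(example_workload, runs=5)
print(summary)
```

A wide gap between the minimum and maximum is a hint that something like a garbage collection pause landed inside one of the runs, which is why the median tends to be a steadier figure than any single result.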

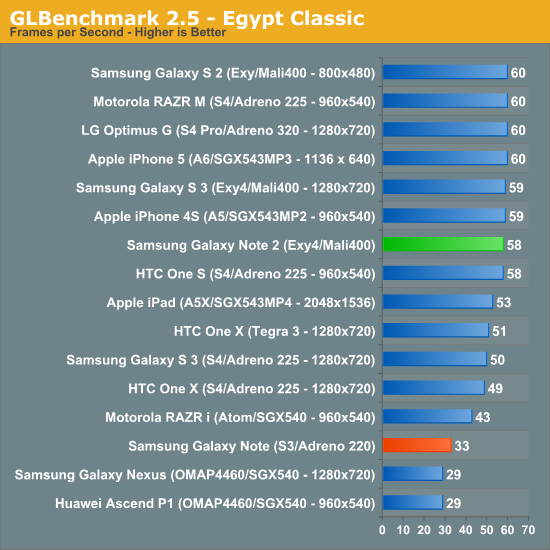

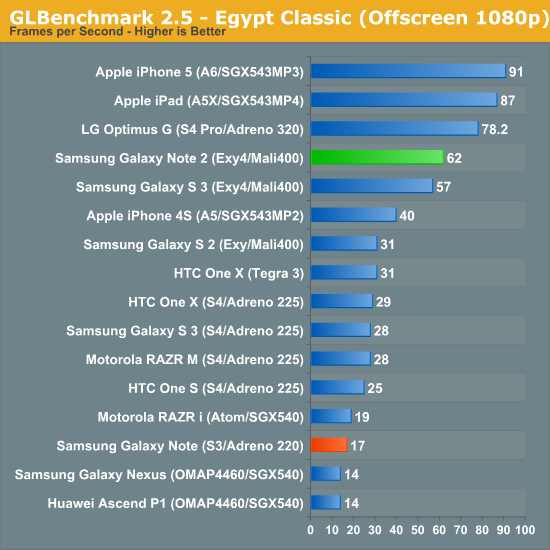

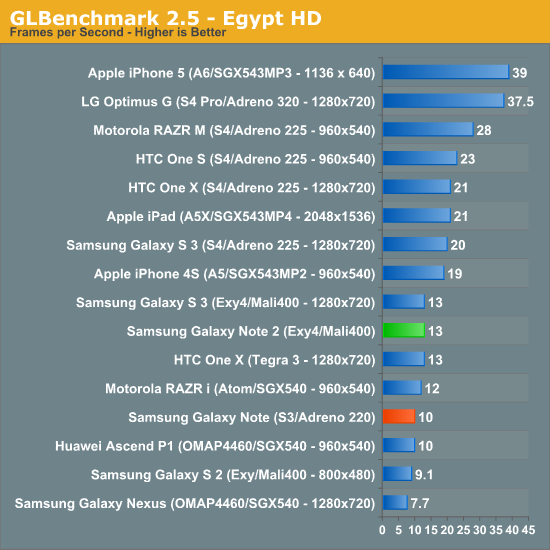

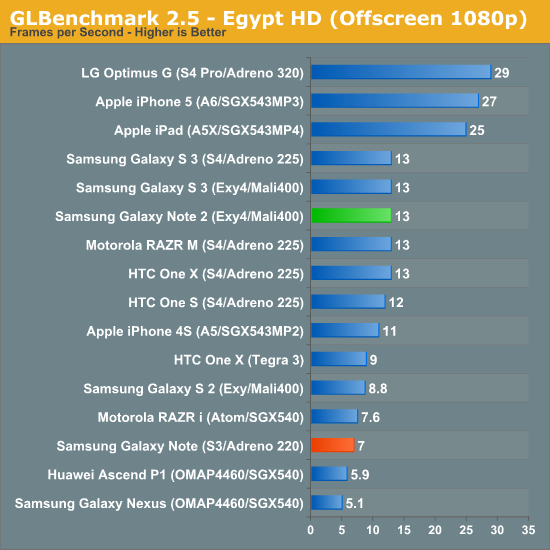

Next up is GLBenchmark 2.5.1 which now includes a beefier gameplay simulation test called Egypt HD alongside the previous Egypt test which is now named Egypt Classic. Offscreen resolution gets a bump to 1080p as well.

Here we see Mali-400 MP4 performing basically the same as I saw in the International Galaxy S 3, which is no surprise; it is after all the same SoC. Other than a slight bump in the Egypt Classic offscreen performance numbers, there aren’t any surprises. We see Exynos 4412 putting up a good fight, but Adreno 320 in APQ8064 is still something to look out for on the horizon. I'd run Taiji as well but we'd basically just see vsync at this point.

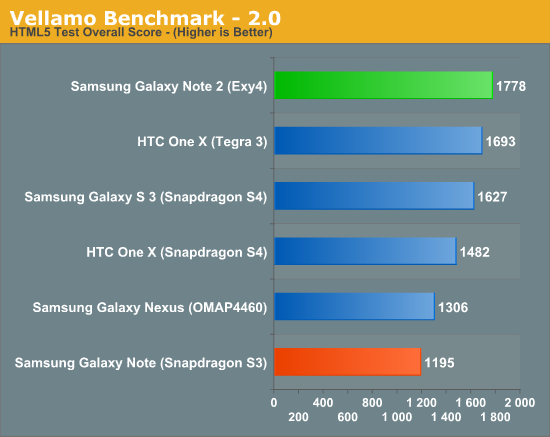

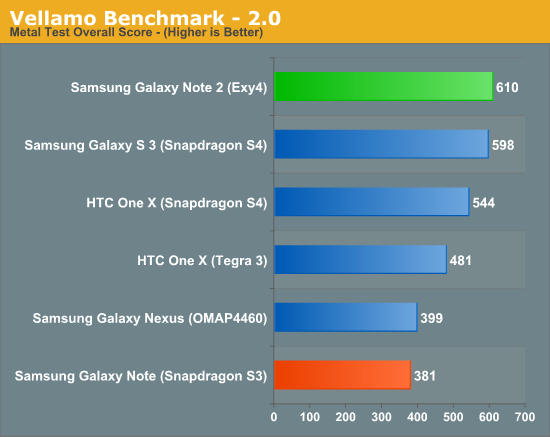

Vellamo 2.0.1 is a new version of the previously well-received Vellamo test, which Qualcomm initially developed for in-house performance regression testing and checkin, which OEMs later adopted for their own testing, and which was finally released on the Google Play Store. This is the first time the 2.0 version of Vellamo has made an appearance here, and after vetting it and spending time on the phone with its makers I feel just the same way about 2.0 as I did about 1.0. There’s still the disclosure that this is Qualcomm’s benchmark, and that stigma will only go away after the app is open sourced for myself and others to code review. From what deconstruction of the APK I’ve done, and further inspection of the included JS, though, I’m confident there’s no blatant cheating going on; it isn’t worth it.

Vellamo 2’s biggest new thing is the inclusion of a new ‘metal’ test which, as the name implies, includes some native tests. This is C code compiled with just the standard Android compiler and the -O2 optimization flag into both ARMv7 and x86 code. There’s Dhrystone for integer benchmarking, Linpack (native), Branch-K, Stream 5.9, RamJam, and a storage subtest.

Exynos 4412 and Android 4.1 are definitely a potent combination, one that puts the Note 2 close to the top, if not at the top, in a ton of CPU bound tests. My go-to application with lots of threading is still Chrome for Android, which regularly lights up four core devices completely. Even though our testing is done in the stock browser (since this almost always has the faster, platform-specific V8 library), my subjective tests are in Chrome, and the Note 2 feels very quick.

131 Comments

The0ne - Wednesday, October 24, 2012

Don't worry, I'm 40 years old myself and screen specs are very important for me due to my aging eyes. I've since replaced all my LCDs with 30" IPS ones, e-readers and tablets have at least x800 and now this phone if I decide to buy one (if pricing is right).

I was glad to read that statement in the review as well. It definitely put a smile onto my face to actually read it and have someone share the perspective. Mind you my eyes aren't too bad being .75 off but it does make a huge difference having a good screen to look at.

jjj - Wednesday, October 24, 2012

Not a fan of the S3 but for some reason I kinda like this one.

The weight seems rather high, after all most of the time the phone sits in a pocket, hope they get rid of some layers of glass in future models. Maybe by then we also see Corning's Willow Glass and the flexible Atmel touch sensor (not controller) for a thinner bezel.

It does feel a bit outdated already with quad Krait devices announced and dual core A15 arriving soon hopefully (Gigaom had some numbers for the A15 based Chromebook and they look impressive)

enezneb - Wednesday, October 24, 2012

That 11 million contrast ratio is just amazing; a true testament to the potential of AMOLED.

I could foresee Samsung improving their color calibration standards for the next generation of flagships seeing how they're under considerable pressure from the likes of SLCD2 and Apple's retina display. Paired with this new pentile matrix, ultra-high ppi displays in the range of 400 may be possible as well (a la SLCD3).

Looks like next year will be another exciting year for mobile display technology once again.

schmitty338 - Wednesday, October 24, 2012

You can change the colour calibration to be more 'natural' in the TouchWiz software on the Note II.

Also, personally I don't see the need for 'more accurate' colours on a phone. Maybe if you are a pro photog who reviews pics on their phone, but otherwise, I love the colourful pop of AMOLED displays. Even the low res pentile AMOLED of my old galaxy S (original...getting the Note 2 soon) looks great for media. Text, not so much, due to the low res and pentile.

slysly - Wednesday, October 24, 2012

Why would colour accuracy ever not be important? I thought the point of a big phone is to make it easier to consume all sorts of media, from websites to photos to movies. Wouldn't more accurate colours be better for all of these activities? To me, it's a bit like saying, I don't see the need to eat delicious food during lunch, or I don't need to be with a beautiful woman on the weekdays. Why settle for something markedly inferior?

Calista - Thursday, October 25, 2012

Is your screen properly colour calibrated? Are your walls painted in a neutral colour to avoid a colour cast? Are your lamps casting a specified type of light and shielded to avoid glare? Do you use high-quality blinds to prevent sunlight?

This is only a few of the things to consider when dealing with calibration. And a cellphone is unable to deal with any of them unless it stays in the lab.

So for a cellphone the criteria is:

Is it bright enough?

Does it look pleasing to the eye, overly saturated or not?

PeteH - Thursday, October 25, 2012

Eh, depends what you do with it. I can understand wanting an accurate representation of a photo you're taking.

And given the option between an accurate display and a less accurate display (all other things being equal) I think most people would opt for the more accurate option. Note that I'm not saying they would choose it as better visually (people seem to be suckers for over saturated displays).

Zink - Wednesday, October 24, 2012

Great review. Just the right amount of detail and I really like your perspective on day to day use.

Mbonus - Wednesday, October 24, 2012

Battery Life Question: I have noticed that since I have started using audio streaming apps my battery has taken quite a hit. I wonder if that could be added to your battery life benchmarks?

It might not matter for devices like this where you have a large storage upgrade ability, but some other devices are forcing us to the cloud where streaming matters.

Great and thorough review as always!

Brian Klug - Wednesday, October 24, 2012

So with streaming apps and such, even though the bitrate is low, if they're not very bursty (e.g. download and fill a big buffer, then wait 30 seconds or minutes, then repeat) they can hold the phone in CELL_DCH on UMTS or the appropriate equivalent on other air interface types, and that's what really burns power. It's time spent in that connected state that really destroys things.

This is actually why I do the tethering test as well (which has a streaming audio component), but I'm beholden to whether or not the review unit that I'm sampled is provisioned for tethering or not, which is the real problem.

-Brian