Intel's Haswell Architecture Analyzed: Building a New PC and a New Intel

by Anand Lal Shimpi on October 5, 2012 2:45 AM EST

Decoupled L3 Cache

With Nehalem, Intel introduced an on-die L3 cache behind a smaller, low latency private L2 cache. Intel then maintained two separate clock domains for the CPU (core + uncore) and a third for what was, at the time, an off-die integrated graphics core. The core clock referred to the CPU cores, while the uncore clock controlled the speed of the L3 cache. Intel believed that its L3 cache wasn't incredibly latency sensitive and could run at a lower frequency and burn less power. Core CPU performance typically mattered more to most workloads than L3 cache performance, so Intel was OK with the tradeoff.

In Sandy Bridge, Intel revised its beliefs and moved to a single clock domain for the core and uncore, while keeping a separate clock for the now on-die processor graphics core. Intel now felt that race to sleep was a better philosophy for dealing with the L3 cache and it would rather keep things simple by running everything at the same frequency. Obviously there were performance benefits, but there was one major downside: with the CPU cores and L3 cache running in lockstep, there was concern over what would happen if the GPU ever needed to access the L3 cache while the CPU (and thus L3 cache) was in a low frequency state. The options were either to force the CPU and L3 cache into a higher frequency state together, or to keep the L3 cache at a low frequency even when it was in demand to prevent waking up the CPU cores. Ivy Bridge saw the addition of a small graphics L3 cache to mitigate this situation, but ultimately giving the on-die GPU independent access to the big, primary L3 cache without worrying about power concerns was a big issue for the design team.

When it came time to define Haswell, the engineers went back to Nehalem's three clock domains. Ronak (Nehalem & Haswell architect, insanely smart guy) tells me that the switching between designs is simply a product of the team learning more about the architecture and understanding the best balance. I think it tells me that these guys are still human and don't always have the right answer for the long term without some trial and error.

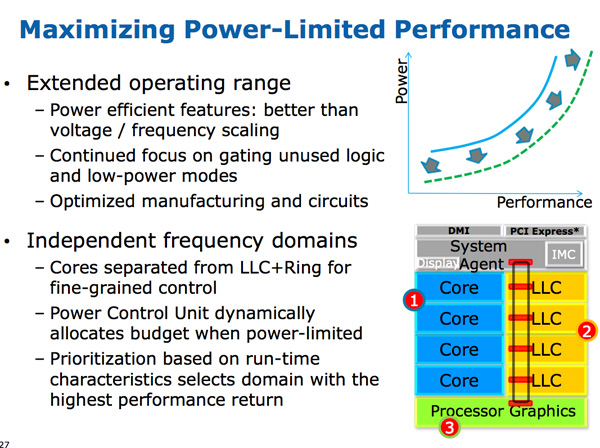

The three clock domains in Haswell are roughly the same as what they were in Nehalem; they just all happen to be on the same die. The CPU cores all run at the same frequency, the on-die GPU runs at a separate frequency, and now the L3 + ring bus are in their own independent frequency domain.

Now that CPU requests to L3 cache have to cross a frequency boundary, there will be a latency impact to L3 cache accesses. Sandy Bridge had an amazingly fast L3 cache; Haswell's L3 accesses will be slower.
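
To make the latency point concrete, here is a minimal Python sketch of how a clock-domain crossing and a slower ring clock would each add to L3 latency in wall-clock terms. The cycle counts, the frequencies, and the `l3_latency_ns` helper are illustrative assumptions, not Haswell or Sandy Bridge measurements.

```python
def l3_latency_ns(l3_cycles: int, l3_ghz: float, crossing_cycles: int = 0) -> float:
    """Return the wall-clock latency of one L3 access in nanoseconds.

    l3_cycles       -- cycles the access takes, counted in the L3/ring clock
    l3_ghz          -- frequency of the L3/ring clock domain in GHz
    crossing_cycles -- extra cycles assumed for crossing a clock-domain
                       boundary (0 when cores and L3 share one clock)
    """
    if l3_ghz <= 0:
        raise ValueError("l3_ghz must be positive")
    # One cycle at f GHz lasts 1/f nanoseconds.
    return (l3_cycles + crossing_cycles) / l3_ghz


if __name__ == "__main__":
    # Hypothetical numbers chosen only to show the shape of the tradeoff.
    shared_clock = l3_latency_ns(30, 3.0)                    # cores and L3 in lockstep
    crossed = l3_latency_ns(30, 3.0, crossing_cycles=3)      # same clock speed, plus a crossing
    slow_ring = l3_latency_ns(30, 2.0, crossing_cycles=3)    # crossing plus a slower ring clock
    print(shared_clock, crossed, slow_ring)
```

In this toy model the crossing penalty is paid on every access, and any time the ring runs slower than the cores the gap widens further, which is the cost being traded for the power savings.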

The benefit is obviously power. If the GPU needs to fire up the ring bus to give/get data, it no longer has to drive up the CPU core frequency as well. Furthermore, Haswell's power control unit can dynamically allocate budget between all areas of the chip when power limited.
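
The power argument can be sketched with a toy model of the two clocking schemes. Everything here is hypothetical: the `Demand` type, the `coupled_clocks` and `decoupled_clocks` functions, and the frequencies are made up for illustration and do not describe Intel's actual power control logic. The coupled case models only the first of the two Sandy Bridge options described earlier (raising the cores and L3 together).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Demand:
    core_ghz: float  # frequency the CPU cores need for their own work
    ring_ghz: float  # frequency the L3 + ring bus needs (e.g. for GPU traffic)


def coupled_clocks(d: Demand) -> dict:
    """Shared core/L3 clock: both run at whichever demand is higher."""
    shared = max(d.core_ghz, d.ring_ghz)
    return {"core": shared, "ring": shared}


def decoupled_clocks(d: Demand) -> dict:
    """Independent domains: each runs at the frequency it actually needs."""
    return {"core": d.core_ghz, "ring": d.ring_ghz}


if __name__ == "__main__":
    # Assumed scenario: cores nearly idle while the GPU hammers the L3.
    gpu_heavy = Demand(core_ghz=0.8, ring_ghz=3.0)
    print("coupled:  ", coupled_clocks(gpu_heavy))
    print("decoupled:", decoupled_clocks(gpu_heavy))
```

With the cores nearly idle and the GPU busy, the coupled scheme drags the cores up to the ring's frequency, while the decoupled scheme leaves them in their low frequency state.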

Although L3 latency is up in Haswell, there's more access bandwidth offered to each slice of the L3 cache. There are now dedicated pipes for data and non-data accesses to the last level cache.

Haswell's memory controller is also improved, with better write throughput to DRAM. Intel has been quietly telling the memory makers to push for even higher DDR3 frequencies in anticipation of Haswell.

245 Comments

A5 - Friday, October 5, 2012

8 years is a loooooong time in this space, and yes you (and most people here) are in the minority.

Notebooks have been outselling desktops for several years, and in 2011 smartphone shipments were higher than all PC form-factors combined. It's pretty clear where the big bucks are going, and it isn't desktop PCs.

flamethrower - Friday, October 5, 2012

In 8 years you'll have 50-inch OLED TVs on your walls. What's going to drive them? Possibly a computer integrated into them.

Peanutsrevenge - Friday, October 5, 2012

We'll just be using large screens, keyboards and mice wirelessly connected to our ultra portable devices.

The desktop will likely still exist for people like us who frequent this site, however its role will be far more specialised, possibly more as our personal cloud servers than our PCs.

yankeeDDL - Friday, October 5, 2012

Wow. Thanks for the excellent article: I really enjoyed it.

The thought of having a processor of the power level of Ivy Bridge in my mobile phone blows my mind.

Honestly though, I really can't see how the volume of CPUs for desktop PCs and servers is going to drop so dramatically, that Intel will need the volume generated by mobile, to "survive".

Yes, of course more volume will help, but 8 years from now, even if the mobiles will have such kind of computational power, I would imagine that a Desktop would have 10~20x that performance, as it is today.

It's true that today's CPUs are typically more powerful than the average user ever needs, but raise the hand who wouldn't trade his CPU for one 10x faster (in the same power envelope) ...

That said, 10W still seems like a lot to fit in a mobile: who knows the power consumption of high-end mobile CPUs today? (quad-core Krait CPU, for example, or even Tegra3)

dagamer34 - Friday, October 5, 2012

Intel's real problem is that the power needed for "good enough" computing in a typical desktop CPU came a couple of years ago and is rapidly approaching in mobile. With more and more tasks being offloaded to the cloud, battery life is becoming a stronger and stronger focus.

What's sad is that because AMD isn't the major player it once was, Intel has taken its eye off the ball, revving Atom with only minor tweaks and having a laissez faire approach to GPU performance. It's only recently that mobile starting to dominate in the minds of consumers, and Intel's lack of any major design wins (the RAZR I doesn't count), have forced Intel to push as hard as it is now.

sp3x0ps - Friday, October 5, 2012

Where is the iPhone 5 review? I need details!! arghh.

Demon-Xanth - Friday, October 5, 2012

Atom was targeted to UMPCs, but quickly took over low power embedded systems that don't need much power but do run Windows.

tipoo - Friday, October 5, 2012

Poor Via.

dgingeri - Friday, October 5, 2012

"Within 8 years many expect all mainstream computing to move to smartphones, or whatever other ultra portable form factor computing device we're carrying around at that point."

They said the same thing about laptops. Sure, laptops hold about 60-65% of the market these days, but the desktop is still very much around, and is the preferred platform for PC gamers and HTPC applications. They're far more flexible than any mobile form factor.

Smartphones also have the severe disadvantage of a very small screen. Even the largest are too small for most people to deal with. On top of that, actually surfing the net on those tiny screens is an exercise in frustration for many people. I try to tap on a link, only to get the link next to it, or above it, or below it, or possibly having my stupid phone just select the text instead of following the link.

Smartphones have their niche. There's no doubt there, but they are not going to be anyone's mainstream device unless they have needle thin fingers and 20/10 vision.

Anand Lal Shimpi - Friday, October 5, 2012

I agree with the notebook/desktop comparison - these form factors won't go away. I should have said the majority of mainstream client computing goes to smartphones. And solving the display and input problems is easy: wireless display (WiDi/Miracast) and wireless keyboard/mouse (or a dock that does both over wires if you'd rather that).

Take care,

Anand