The NVIDIA GeForce GTX 660 Review: GK106 Fills Out The Kepler Family

by Ryan Smith on September 13, 2012 9:00 AM EST

Power, Temperature, & Noise

As always, we’re wrapping up our look at a video card’s stock performance with a look at power, temperature, and noise. Unlike GTX 660 Ti, which was a harvested GK104 GPU, GTX 660 is based on the brand-new GK106 GPU, which will have interesting repercussions for power consumption. Scaling down a GPU by disabling functional units often has diminishing returns, so GK106 will effectively “reset” NVIDIA’s position as far as power consumption goes. As a reminder, NVIDIA’s power target here is a mere 115W, while their TDP is 140W.

GeForce GTX 660 Series Voltages

| Ref GTX 660 Ti Load | Ref GTX 660 Ti Idle | Ref GTX 660 Load | Ref GTX 660 Idle |
|---|---|---|---|
| 1.175v | 0.975v | 1.175v | 0.875v |

Stopping to take a quick look at voltages, even with a new GPU nothing has changed at load. NVIDIA’s standard voltage remains at 1.175v, the same as we’ve seen with GK104. However, idle voltages are much lower, with the GK106-based GTX 660 idling at 0.875v versus 0.975v for the various GK104 desktop cards. As we’ll see later, this is an important distinction for GK106.

Up next, before we jump into our graphs, let’s take a look at the average core clockspeed during our benchmarks. Because of GPU Boost, the boost clock alone doesn’t give us the whole picture, so we’ve recorded the clockspeed of our GTX 660 during each of our benchmarks when running it at 1920x1200 and computed the average clockspeed over the duration of the benchmark.

GeForce GTX 600 Series Average Clockspeeds

| | GTX 670 | GTX 660 Ti | GTX 660 |
|---|---|---|---|
| Max Boost Clock | 1084MHz | 1058MHz | 1084MHz |
| Crysis | 1057MHz | 1058MHz | 1047MHz |
| Metro | 1042MHz | 1048MHz | 1042MHz |
| DiRT 3 | 1037MHz | 1058MHz | 1054MHz |
| Shogun 2 | 1064MHz | 1035MHz | 1045MHz |
| Batman | 1042MHz | 1051MHz | 1029MHz |
| Portal 2 | 988MHz | 1041MHz | 1033MHz |
| Battlefield 3 | 1055MHz | 1054MHz | 1065MHz |
| Starcraft II | 1084MHz | N/A | 1080MHz |
| Skyrim | 1084MHz | 1045MHz | 1084MHz |
| Civilization V | 1038MHz | 1045MHz | 1067MHz |

With an official boost clock of 1033MHz and a maximum boost of 1084MHz on our GTX 660, we see clockspeeds regularly vary between the two points. For the most part our average clockspeeds are slightly ahead of NVIDIA’s boost clock, while in CPU-heavy workloads (Starcraft II, Skyrim), we can almost sustain the maximum boost clock. Ultimately this means that the GTX 660 is spending most of its time near or above 1050MHz, which will have repercussions when it comes to overclocking.
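As a rough check on the "near or above 1050MHz" observation, the GTX 660 column of the clockspeed table can be summarized in a few lines of Python. This is only a sketch: the variable names are ours, and it takes an unweighted mean of the per-game averages, which is an assumption on our part because the benchmarks do not all run for the same length of time.

```python
from statistics import mean

# Average GTX 660 core clocks (MHz) per benchmark, copied from the table above.
gtx660_avg_clock_mhz = {
    "Crysis": 1047,
    "Metro": 1042,
    "DiRT 3": 1054,
    "Shogun 2": 1045,
    "Batman": 1029,
    "Portal 2": 1033,
    "Battlefield 3": 1065,
    "Starcraft II": 1080,
    "Skyrim": 1084,
    "Civilization V": 1067,
}

BOOST_CLOCK_MHZ = 1033      # NVIDIA's official boost clock
MAX_BOOST_CLOCK_MHZ = 1084  # maximum boost observed on our card

# Unweighted mean of the per-game averages (assumption: every game counts equally).
overall_mean_mhz = mean(list(gtx660_avg_clock_mhz.values()))

# Games whose average clock is strictly above the official boost clock.
above_boost = [game for game, mhz in gtx660_avg_clock_mhz.items()
               if mhz > BOOST_CLOCK_MHZ]

# Games that sustain the maximum boost clock on average.
at_max_boost = [game for game, mhz in gtx660_avg_clock_mhz.items()
                if mhz == MAX_BOOST_CLOCK_MHZ]

print(f"Mean of per-game averages: {overall_mean_mhz:.1f} MHz")
print(f"Games above the boost clock: {len(above_boost)} of {len(gtx660_avg_clock_mhz)}")
print(f"Games at maximum boost: {at_max_boost}")
```

A time-weighted figure would need the raw clockspeed logs rather than the per-game averages, so the result here should be read as an approximation.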

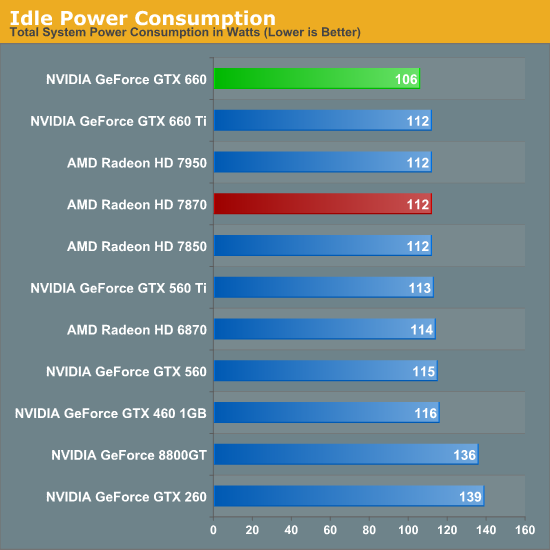

Starting as always with idle power, we immediately see an interesting outcome: GTX 660 has the lowest idle power usage. And it’s not just one or two watts either, but rather a 6W (at the wall) difference between the GTX 660 and both the Radeon HD 7800 series and the GTX 600 series. All of the current 28nm GPUs have offered refreshingly low idle power usage, but with the GTX 660 we’re seeing NVIDIA cut into what was already a relatively low idle power usage and shrink it even further.

NVIDIA’s claim is that their idle power usage is around 5W, and while our testing methodology doesn’t allow us to isolate the video card, our results corroborate a near-5W value. The biggest factors here seem to be a combination of die size and idle voltage; we naturally see a reduction in idle power usage as we move to smaller GPUs with fewer transistors to power up, but NVIDIA’s idle voltage of 0.875v is also 0.1v below GK104’s idle voltage and 0.075v lower than GT 640 (GK107)’s idle voltage. The combination of these factors has pushed the GTX 660’s idle power usage to the lowest point we’ve ever seen for a GPU of this size, which is quite an accomplishment. I suspect the real payoff will be in the mobile space though, as even with Optimus, mobile GPUs have to spend some time idling, which is another opportunity to save power.

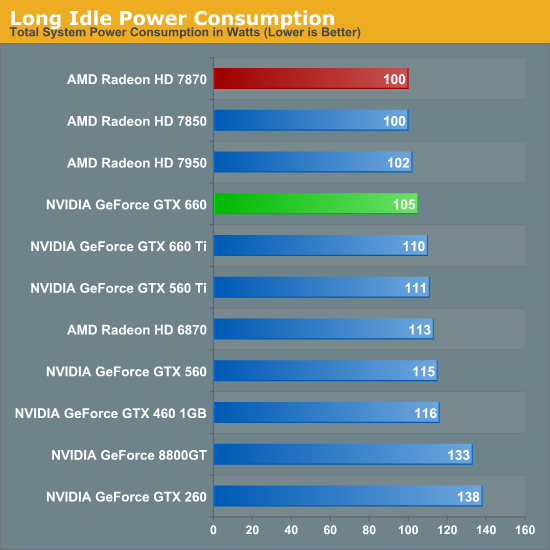

At this point the only area in which NVIDIA doesn’t outperform AMD is in the so-called “long idle” scenario, where AMD’s ZeroCore Power technology gets to kick in. 5W is nice, but next-to-0W is even better.

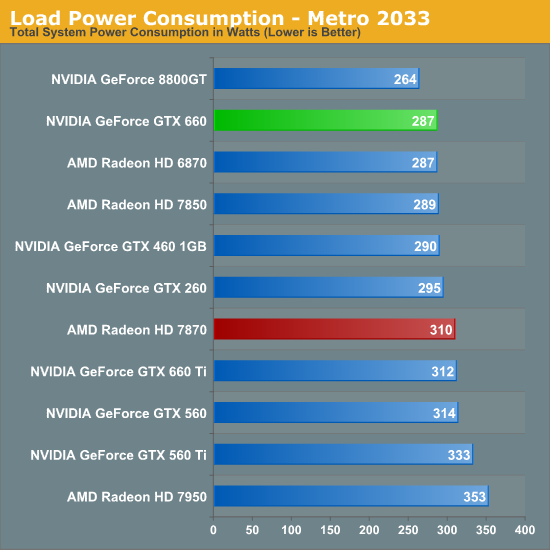

Moving on to load power consumption, given NVIDIA’s focus on efficiency with the Kepler family it comes as no great surprise that NVIDIA continues to hold the lead here. The gap between GTX 660 and 7870 isn’t quite as large as the gap we saw between GTX 680 and 7970, but NVIDIA still has a convincing lead, with the GTX 660 consuming 23W less at the wall than the 7870. This puts the GTX 660 at around the power consumption of the 7850 (a card with a similar TDP) or the GTX 460. On AMD’s part, Pitcairn is a more petite (and less compute-heavy) part than Tahiti, which means AMD doesn’t face nearly the disparity here that they do at the high end.

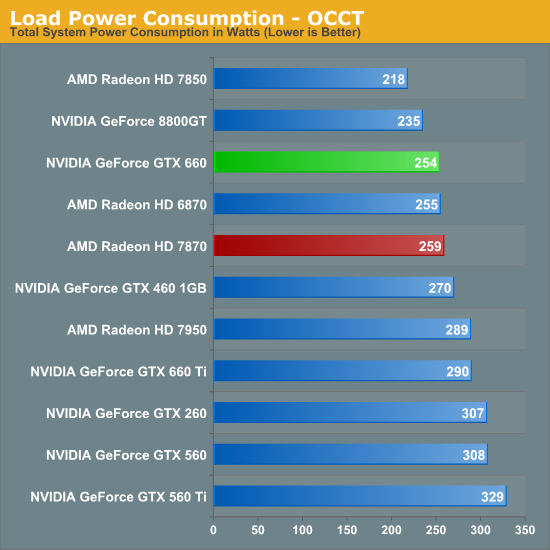

OCCT on the other hand has the GTX 660 and 7870 much closer, thanks to AMD’s much more aggressive throttling through PowerTune. This is one of the only times where the GTX 660 isn’t competitive with the 7850 in some fashion, though based on our experience our Metro results are more meaningful than our OCCT results right now.

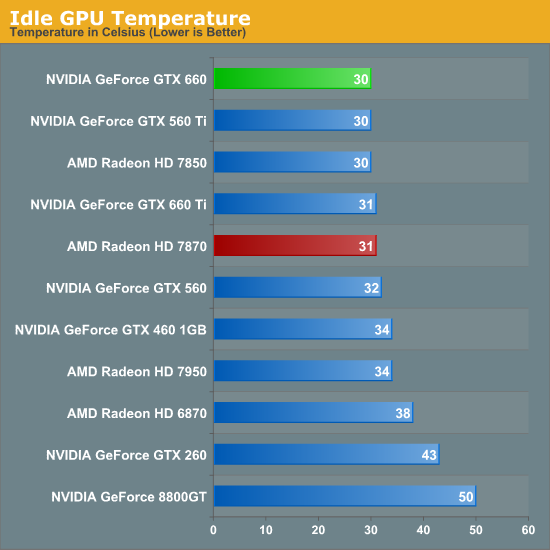

As for idle temperatures, there are no great surprises. A good blower can hit around 30C in our testbed, and that’s exactly what we see.

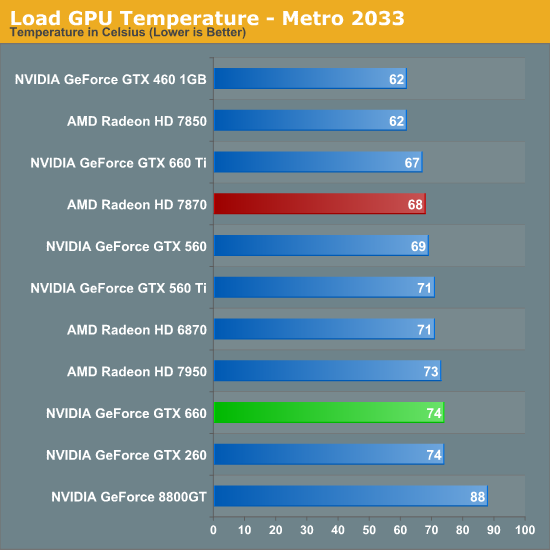

Temperatures under Metro look good enough, though despite their power advantage NVIDIA can’t keep up with the blower-equipped 7800 series. At the risk of spoiling our noise results, the 7800 series doesn’t do significantly worse for noise, so it’s not immediately clear why the GTX 660 is 6C warmer here. Our best guess would be that the GTX 660’s cooler just isn’t quite up to the potential of the 7800 series’ reference cooler.

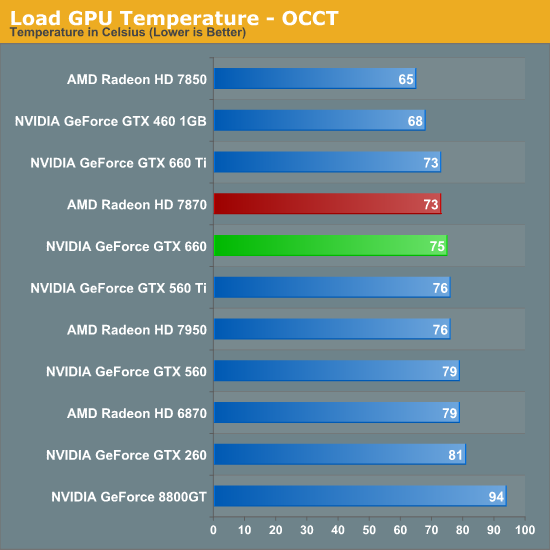

OCCT actually closes the gap between the 7870 and the GTX 660 rather than widening it, which is the opposite of what we would expect given our earlier temperature data. Reaching the mid-70s, neither card is particularly cool, but both are still well below their thermal limits, meaning there’s plenty of thermal headroom to play with.

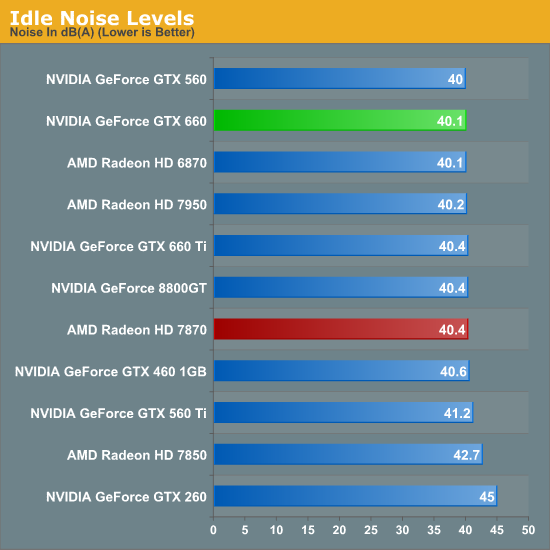

Last but not least we have our noise tests, starting with idle noise. Again there are no surprises here; the GTX 660’s blower is solid, producing no more noise than any other standard blower we’ve seen.

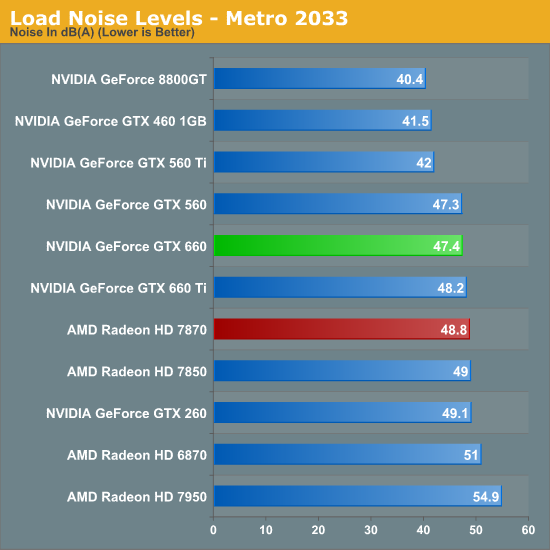

While the GTX 660 couldn’t beat the 7870 on temperatures under Metro, it can certainly beat the 7870 when it comes to noise. The difference isn’t particularly great – just 1.4dB – but every bit adds up, and 47.4dB is historically very good for a blower. However, the use of a blower on the GTX 660 means that NVIDIA still can’t match the glory of the GTX 560 Ti or GTX 460; for that we’ll have to take a look at retail cards with open air coolers.

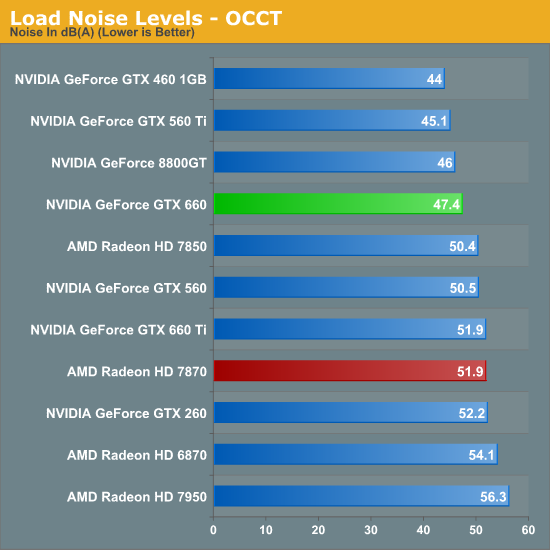

Similar to how AMD’s temperature lead eroded with OCCT, AMD’s slight loss in load noise testing becomes a much larger gap under OCCT. A 4.5dB difference is now solidly in the realm of noticeable, and further reinforces the fact that the GTX 660 is the quieter card under both normal and extreme situations.
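To put the 1.4dB and 4.5dB gaps in perspective, recall that the decibel scale is logarithmic. The sketch below converts a level difference into the corresponding sound power and sound pressure ratios using the standard decibel definitions; the function names are ours, and the ratios describe physical quantities rather than perceived loudness, which does not track either one directly.

```python
def power_ratio(delta_db: float) -> float:
    """Ratio of sound power for a level difference given in dB."""
    return 10 ** (delta_db / 10)


def pressure_ratio(delta_db: float) -> float:
    """Ratio of sound pressure for a level difference given in dB."""
    return 10 ** (delta_db / 20)


# Level differences quoted in the text: Metro (1.4dB) and OCCT (4.5dB).
for label, delta_db in (("Metro", 1.4), ("OCCT", 4.5)):
    print(f"{label}: {delta_db}dB -> "
          f"power x{power_ratio(delta_db):.2f}, "
          f"pressure x{pressure_ratio(delta_db):.2f}")
```

By these definitions the OCCT gap corresponds to a much larger physical difference than the Metro gap, which fits with it being the more noticeable of the two.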

We’ll be taking an in-depth look at some retail cards later today with our companion retail card article, but with those results already in hand we can say that despite the use of a blower the “reference” GTX 660 holds up very well. Open air coolers can definitely beat a blower with the usual drawbacks (that heat has to go somewhere), but when a blower is only hitting 47dB, you already have a fairly quiet card. So even a reference GTX 660 (as unlikely as it is to appear in North America) looks good all things considered.

147 Comments

Margalus - Thursday, September 13, 2012

you say the stock 660 looks bad when compared to an overclocked 7870? what a shock that is!

I guess it's always fair to say an nvidia card is bad when comparing the stock reference nv card to overclocked versions of it's nearest amd competitor..

Patflute - Friday, September 14, 2012

Be fair and over clock both...

poohbear - Thursday, September 13, 2012

well after reading this im still have with my Gigabyte OC gtx 670 i got 2 months ago for $388. I will NOT be upgrading for 3 years & im confident my GTX 670 will still be in the upper segment in 3 years (like my 5870 that i upgraded from), so @ $130/yr its a great deal.

poohbear - Thursday, September 13, 2012

erm, i meant i'm still happy*. sucks that u can't edit on these comments.:p

KineticHummus - Friday, September 14, 2012

i had no idea what you meant with your "im still happy" edit until I went back to read your original statement again. somehow I mentally replaced the "have" with "happy" lol. reading fail for me...

distinctively - Thursday, September 13, 2012

Looks like the 660 is getting a nasty little spanking from the 7870 when you look around at all the reviews. The GK 106 appears to loose in just about every metric compared to Pitcairn.

Locateneil - Thursday, September 13, 2012

I just built a PC with 3770K and Asus Z77-v Pro, I was think to buy GTX 670 for my system but now I am now confused if it is better to go with 2 GTX 660 in SLI?

Ryan Smith - Friday, September 14, 2012

Our advice has always been to prefer a single more powerful card over a pair of weaker cards in SLI. SLI is a great mechanism to extend performance beyond what a single card can provide, but its inconsistent performance and inherent drawbacks (need for SLI profiles and microstuttering) means that it's not a good solution for when you can have a single, more powerful GPU.

knghtwhosaysni - Thursday, September 13, 2012

Do you guys think you could show frametimes like techreport does in your reviews? It can show some deficiencies in rendering that average FPS doesn't, like with Crysis 2 http://techreport.com/review/23527/nvidia-geforce-...

It's nice that techreport does it, but I think Anandtech is the first stop for a lot of people who are looking for benchmarks, and I think if you guys showed this data in your own reviews then it would really push AMD and Nvidia to iron out their latency spike problems.

Ryan Smith - Friday, September 14, 2012

We get asked this a lot. I really like Scott's methodology there, so if we were to do this I'd want to do more than just copy him by finding some way to do better than him (which is no easy task).

To that end I find FRAPS to be at a higher level than I'd like. It's measuring when frames are handed off to the GPU rather than when the GPU actually finishes the frame. These times are strongly correlated, but I'd rather have more definitive low-level data from the GPU itself. If we could pull that off then frametimes are definitely something we'd look in to.