EVGA GeForce GTX 680 Classified Review: Pushing GTX 680 To Its Peak

by Ryan Smith on July 20, 2012 12:00 PM EST

Power, Temperature, & Noise

Wrapping up our look at the stock performance characteristics of the GTX 680 Classified, we have what’s perhaps our most important results section: power, temperature, & noise.

When it comes to custom cards these results can often make or break a card, depending on the niche it’s going for. The GTX 680 Classified is a high performing card, but it’s not a card shooting for performance at any cost, so we expect to find some kind of reasonable balance here.

GeForce GTX 600 Series Voltages

| EVGA GTX 680C Boost Load | Ref GTX 680 Boost Load | EVGA GTX 680C Idle |
|---|---|---|
| 1.175v | 1.175v | 0.987v |

Note that at its stock settings EVGA must follow NVIDIA’s defaults, so the GTX 680 Classified has the same 1.175v load voltage as the reference GTX 680. This is where binning comes into play, as EVGA needs to identify GK104 GPUs that can reach 1100MHz+ at 1.175v in order to make the GTX 680 Classified a viable product.

EVGA GTX 680 Classified Average Clockspeed

| Game | Clockspeed |
|---|---|
| Crysis: Warhead | 1188MHz |
| Metro 2033 | 1211MHz |
| DiRT 3 | 1211MHz |
| Shogun 2 | 1162MHz |
| Batman: Arkham City | 1188MHz |
| Portal 2 | 1211MHz |
| Battlefield 3 | 1188MHz |
| Starcraft II | 1188MHz |
| Skyrim | 1211MHz |
| Civilization V | 1188MHz |

Meanwhile, looking at the GTX 680 Classified’s median clockspeed during our benchmarks, we can see that our earlier speculation about the impact of such a high power target was true. The maximum boost bin on our card is 1201MHz (versus 1110MHz for our reference card), and with the exception of Shogun 2, the GTX 680 Classified is almost constantly at 1201MHz or the temperature-induced 1188MHz during our tests. With Shogun 2 that drops down to 1162MHz. With such a high power target the resulting clockspeeds are very consistent for a GTX 680 card.
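To make the consistency claim concrete, the per-game figures can be tallied in a few lines of Python. This is only a sketch: the clockspeeds are copied from the table above, the 1110MHz reference figure is the maximum boost bin quoted in the text, and the function and variable names are our own invention for illustration.

```python
from collections import Counter
from statistics import mean

# Per-game average clockspeeds (MHz), copied from the table above.
classified_clocks_mhz = {
    "Crysis: Warhead": 1188,
    "Metro 2033": 1211,
    "DiRT 3": 1211,
    "Shogun 2": 1162,
    "Batman: Arkham City": 1188,
    "Portal 2": 1211,
    "Battlefield 3": 1188,
    "Starcraft II": 1188,
    "Skyrim": 1211,
    "Civilization V": 1188,
}

# Maximum boost bin of our reference GTX 680, as quoted in the text (MHz).
REFERENCE_MAX_BOOST_MHZ = 1110


def summarize_clocks(clocks):
    """Return how often each clockspeed occurs, the mean, and the slowest game."""
    values = list(clocks.values())
    slowest_game = min(clocks, key=clocks.get)
    return {
        "histogram": Counter(values),
        "mean_mhz": mean(values),
        "slowest_game": slowest_game,
        # How far the slowest game still sits above the reference card's top bin.
        "slowest_over_reference_pct": (clocks[slowest_game] / REFERENCE_MAX_BOOST_MHZ - 1) * 100,
    }


summary = summarize_clocks(classified_clocks_mhz)
for clock, games in sorted(summary["histogram"].items(), reverse=True):
    print(f"{clock}MHz: {games} game(s)")
print(f"Mean: {summary['mean_mhz']:.1f}MHz")
print(f"Slowest: {summary['slowest_game']}, "
      f"{summary['slowest_over_reference_pct']:.1f}% above the reference max boost")
```

Nine of the ten games land in one of two bins, and even the slowest result stays above the reference card's maximum boost bin.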

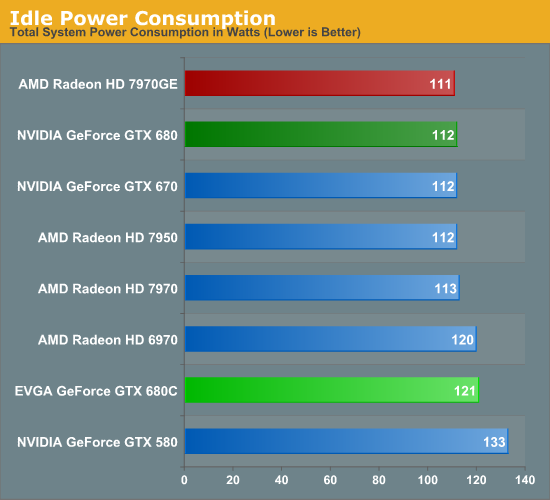

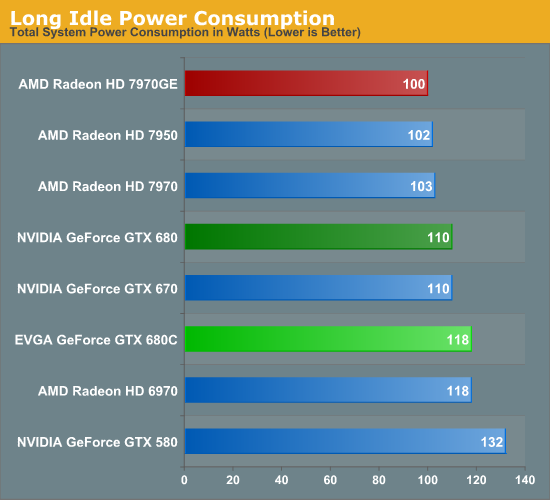

Moving on to power consumption, there are no great surprises here for the GTX 680 Classified when it comes to idle power consumption. With the additional VRM phases and more importantly 8 extra GDDR5 memory chips, idle power consumption has to go up. The impact is that idle power consumption rises by 8-9W, which would push the total idle power consumption of the card up to around 25W. Note however that this is still better than the GTX 580.
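The arithmetic behind that estimate can be sketched as follows. Note the assumption: we treat the 8-9W increase as if it applied directly to the card, ignoring power supply losses at the wall, and the function name is hypothetical.

```python
def implied_reference_idle_w(classified_idle_w, increase_range_w):
    """Back out the reference card's idle power from the Classified's estimate.

    Assumes the measured increase maps one-to-one onto the card itself,
    which ignores PSU inefficiency at the wall.
    """
    low_increase, high_increase = increase_range_w
    return classified_idle_w - high_increase, classified_idle_w - low_increase


# ~25W estimated for the Classified, with an 8-9W rise over the reference card.
reference_idle_range_w = implied_reference_idle_w(25, (8, 9))
print(f"Implied reference idle power: {reference_idle_range_w[0]}-{reference_idle_range_w[1]}W")
```

Under that assumption the reference GTX 680 would idle at roughly 16-17W.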

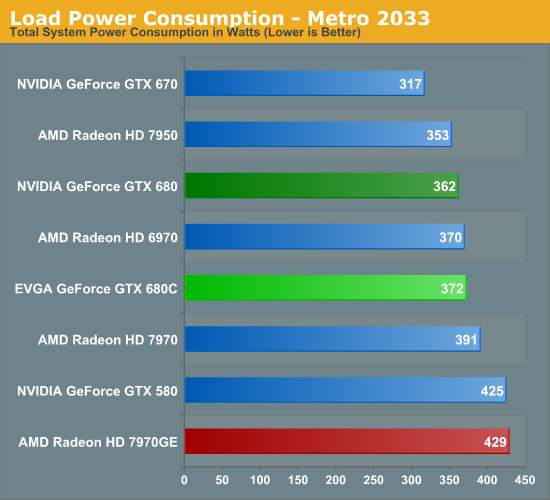

Load power on the other hand looks very good. In fact it’s much better than we were expecting. Despite the additional memory chips and the factory overclock, under Metro power consumption only rises 10W at the wall. This is still less than the 7970, let alone the 7970GE.

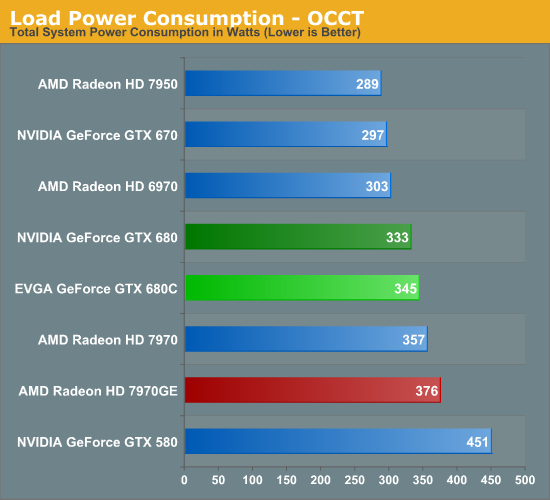

The story with OCCT is much the same. Here we can see that power at the wall increases by all of 12W, which is roughly the remaining difference between the GTX 680 Classified and the 7970. Ultimately the binning process necessary for EVGA to assign GK104 GPUs to their various products has pushed the very best GK104 GPUs into the Classified. The end result is that even at NVIDIA’s standard voltage of 1.175v, the GK104 GPU in our card ends up consuming less power than a more typical GK104 GPU, which allows the GTX 680 Classified to partially offset the higher power consumption of the additional RAM, additional VRM phases, and the factory overclock.

The final result is that the GTX 680 Classified still consumes a bit more power than the reference GTX 680, but not immensely so. Furthermore depending on whether we’re looking at Metro or OCCT, the GTX 680 Classified still draws 30-50W less than the next-nearest competitor, the 7970GE.

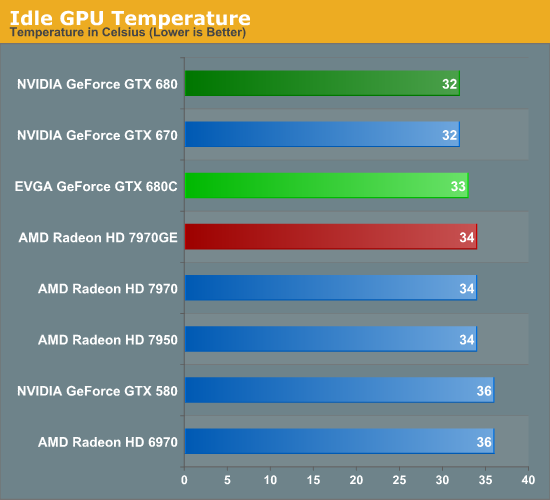

Moving on to temperatures, since EVGA has equipped the GTX 680 Classified with what’s functionally a larger version of the GTX 680’s blower, there are no grand surprises here. The GTX 680 Classified idles at 33C, which so far appears to be typical for these latest iterations of high-performance video cards.

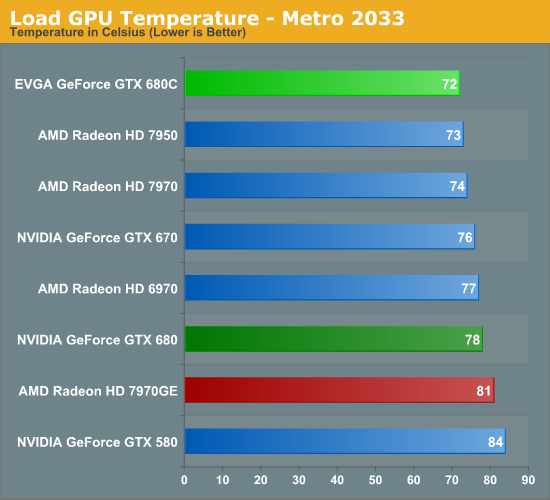

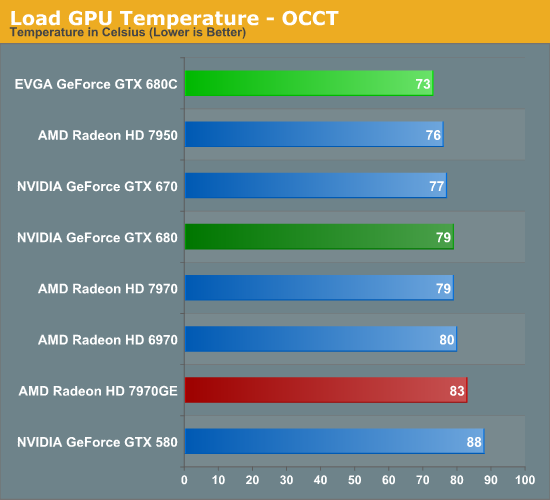

Load GPU temperatures on the other hand are clearly benefitting not only from the larger cooler of the GTX 680 Classified, but also its lower GPU power consumption. With both OCCT and Metro the GTX 680 Classified is 6C cooler than the reference GTX 680. Blowers aren’t known as the most effective coolers, so to reach the low 70s like this is definitely impressive.

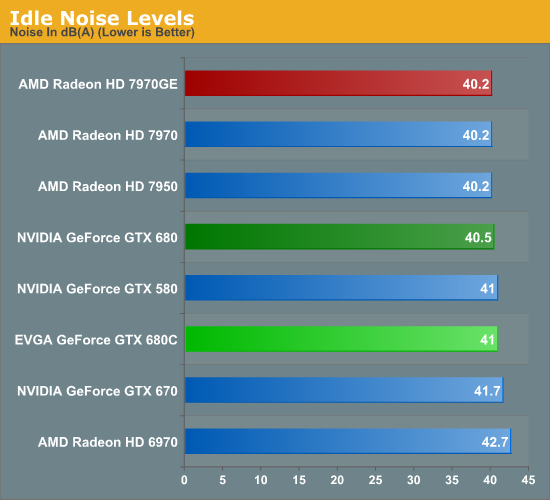

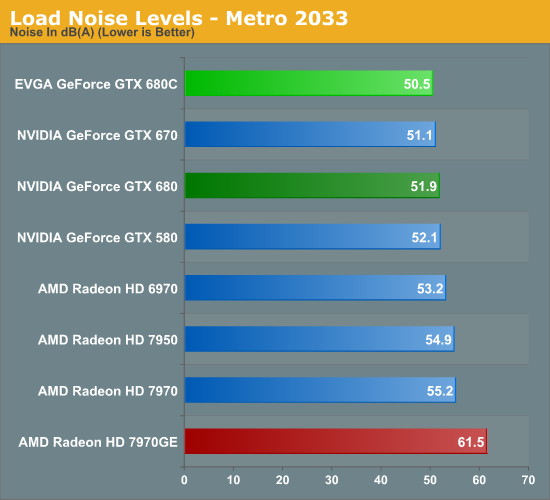

Last but not least we have our look at noise levels. Starting with idle noise, the GTX 680 Classified makes no significant tradeoff for its size. Idle noise ends up being a hair higher than the reference GTX 680, but not noticeably so.

With load noise levels on the other hand we find ourselves once again impressed. The larger cooler and larger fan mean that the GTX 680 Classified generates less noise than even the reference GTX 680, which was already a fairly quiet card for its performance level. We had expected EVGA to make some kind of temperature/noise tradeoff here but there’s none to be found. They have managed to lower both at the same time.

Ultimately it’s clear that while EVGA has engineered the GTX 680 Classified for heavy overclocking, they haven’t sacrificed reference performance to get there. When it comes to both temperatures and noise, the GTX 680 Classified is suitably superior to the reference GTX 680. The fact that EVGA did all of this with a blower makes it all the more impressive, since we typically only see gains like these by switching to an open air cooler. Granted these improvements are largely a consequence of the need to overbuild the card for the kind of heavy overclocking it was designed for, but if you do run at stock there are definitely benefits to be realized.

75 Comments


Belard - Saturday, July 21, 2012 - link

Its been a few years... so I was a bit off on the price ;P

I've owned 3DFx, GF2/3/4/5/7 series and ATIs 9800Pro/4670.

I paid $190 for the GF7600GT with the extra large cooler to reduce noise (Exhaust heat out the back)... and I laughed when the reviewers complained about the dual-slot being a "problem"... WTF?! Blowing heat out is better than blowing heat off the GPU and having it stay inside the case.

After that, I spent $85 on the ATI 4670 with the HIS blower... With the way PC gaming is, I don't see the value of spending a dime over $200. And considering its been 3 years since the ATI 5000 series, the 7850 should be a $150 card at the most.

Yes, I'm planning on the PS4 to replace my PC gaming and to rid me of Windows. NO PC games = Why use Windows?

RussianSensation - Saturday, July 21, 2012 - link

Console gaming has its appeals. Sitting on a nice couch in front of a 50-60 inch LED/Plasma after a long-day's of work is often more comfortable than gaming on a chair at a desk. However, that PS4 won't be $150, probably more like $400-500.

Visual - Monday, July 23, 2012 - link

Eh, what does your screen have to do with the rest of the hardware?

I've been playing my PC games on a couch 2m away from a 47" TV for the last 5 years, a lot of them with a wireless XBOX360 controller as well, at least when I feel the extra precision of a mouse is not needed, and always at a resolution and details settings much better than the console alternative. I only play exclusives on the actual XBOX360. There is no way in hell I will ever consider console gaming a serious option.

CeriseCogburn - Tuesday, July 24, 2012 - link

The same type of brainfart had the guy spewing nVidia has nothing below the $400 gtx670 worth buying.

Thank you for adding a dose of reality.

CeriseCogburn - Sunday, July 29, 2012 - link

The 4850 has been below $100 for a long, long time. Brand new it has been $60 for a year.

Now it's $40 with a special aftermarket HS

http://www.ebay.com/itm/ASUS-ATI-Radeon-HD-4850-EA...

Whatever, you're all screwy on numbers, as it makes it easy to moan and whine.

will54 - Saturday, July 21, 2012 - link

I read somewhere that the GTX 660 will be coming out in August and than they are going to focus on the 700 series. Not positive but I think I read on Toms Hardware.

shin0bi272 - Sunday, July 22, 2012 - link

WTF Anand? I post a link as a reply and its instantly marked as spam? that's bull shit.

poohbear - Friday, July 20, 2012 - link

why do you benchmark shogun 2 @ 1600p using Ultra Quality and then in 1200p you benchmark it @ very high quality? why did you drop the detail level exactly? makes no sense.

Ryan Smith - Friday, July 20, 2012 - link

Because it was utterly unplayable at 5760x1200 at Ultra, even with 2 video cards. I'm all for bogging down a video card, but there has to be a limit.

poohbear - Friday, July 20, 2012 - link

no i mean u dropped the quality when u went down to 1980x1200. why did u do that? not many people really pay attention to 5760x1200, most of us are on 1080p (according to Steam hardware survey).