NVIDIA GeForce GTX 690 Review: Ultra Expensive, Ultra Rare, Ultra Fast

by Ryan Smith on May 3, 2012 9:00 AM EST

Battlefield 3

Its popularity aside, Battlefield 3 may be the most interesting game in our benchmark suite for a single reason: it’s the first AAA DX10+ game. It’s been 5 years since the launch of the first DX10 GPUs, and 3 whole process node shrinks later we’ve finally reached the point where games use DX10’s functionality as a baseline rather than as an addition. Not surprisingly, BF3 is one of the best looking games in our suite, but as with past Battlefield games, that beauty comes with a high performance cost.

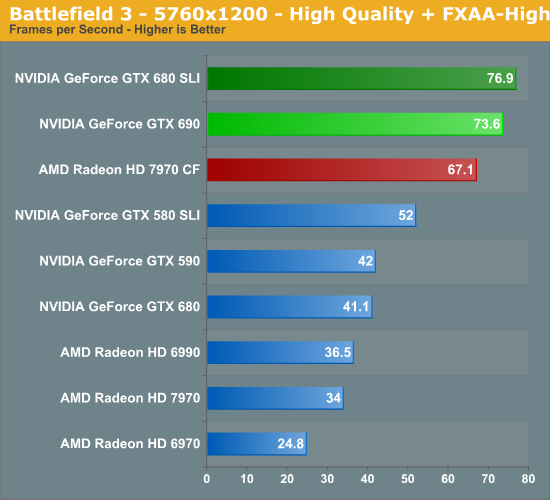

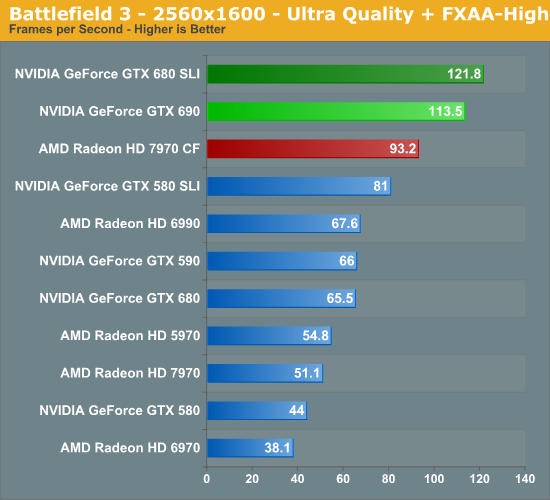

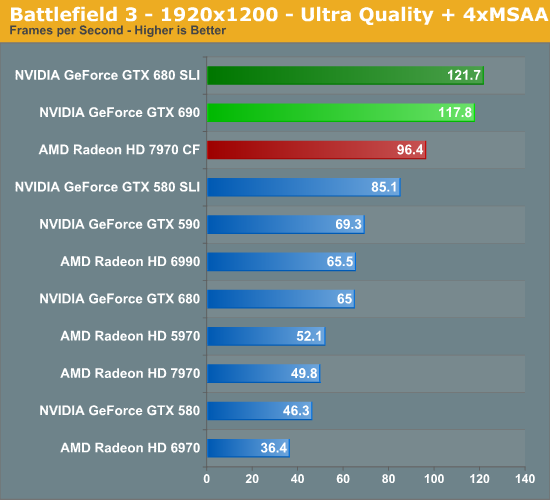

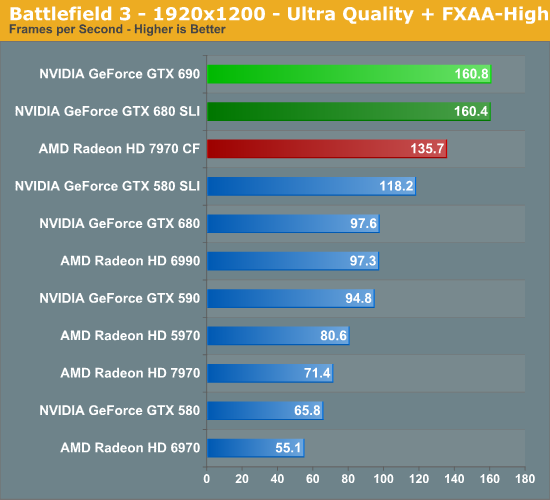

Battlefield 3 has been NVIDIA’s crown jewel: a widely played multiplayer game with a clear lead for NVIDIA hardware. Adding multi-GPU to the picture doesn’t change that, and the GTX 690 once again takes a very clear lead over the 7970CF at all resolutions. With that said, we see something very interesting at 5760, where NVIDIA’s lead shrinks by quite a bit: what was a 21% lead at 2560 is only a 10% lead at 5760. So far we haven’t seen any strong evidence of NVIDIA being VRAM limited with only 2GB of VRAM, and while this isn’t strong evidence that the situation has changed, it does warrant consideration. After all, if anything is going to be VRAM limited, it’s BF3.

Meanwhile, compared to the GTX 680 SLI, the GTX 690 is doing okay here. It’s only achieving 93% of the GTX 680 SLI’s performance at 2560, but for some reason it gains ground at 5760, closing the gap to 96% of the performance of the dual video card setup.

200 Comments


von Krupp - Saturday, May 5, 2012

Not precisely. That $350 performance point? It used to be a $200 performance point. Similarly, that $350 point will turn into a $400 performance point. So, assuming I maintain the price tier, graphics returns for my dollar are gradually tapering off. I look at the performance I was getting out of my 7800 GT at 1280x1024, and it wasn't worth upgrading to a newer card, period, because of Windows XP, my single core CPU, and the fact that I was already maxing out every game I had and still getting decent frame rates. I think the key factor is that I do not care if I dip below 60 frames, as long as I'm above 30 and getting reasonable frame times. I also know that consoles extend the life of PC hardware. The 7800GT is a 20-pipe version of the GTX, which is in turn the GPU found in the PS3. Devs have gotten much better at optimization in titles that matter to me.

CeriseCogburn - Saturday, May 5, 2012

You spend well over $1,600 on a decent system. It makes no sense to spend all that money, then buy monitors the cards in question cannot successfully drive in the 3-year-old Crysis, let alone in well over half the benchmarks in this article, without turning DOWN the settings.

You cannot turn up DX11 tessellation; keep it on medium.

You cannot turn up MSAA past 4X, and you'd better keep it at 2X.

You had better turn down your visual distance in game.

That is the reality, even with all the moaning about "all the console ports" "holding us back".

I get it; the obvious problem is that none of you seem to, because you want to moan and pretend that spending $1,000.00 or more on a monitor alone is "how it's done", while you whine that you cannot even afford $500 for a single video card.

These cards successfully drive 1920X1080 monitors in the benchmarks, but just barely - and if you turn the eye candy up, they cannot do it.

CeriseCogburn - Saturday, May 5, 2012

Thanks for telling everyone how correct I am by doing a pure 100% troll attack after you and yours could not avoid the facts. Your mommy, if you knew who she was, must be very disappointed.

geok1ng - Sunday, May 6, 2012

This card was not built for 2560x1600 gaming; a single 680 is more than enough for that. The 690 was built for 5760x1200 gaming.

I would like to see triple 30" tests. Nothing like gaming at 7680x1600 to feel that you are spending your VGA money well.

CeriseCogburn - Sunday, May 6, 2012

You can use cards 2 generations back for that, but like these cards, you will be turning down most and near all of the eye candy, and be stuck tweaking and clocking, and jittering and wishing you had more power. These cards cannot handle 1920X at current "console port" games unless you turn them down, and that goes ESPECIALLY for the AMD cards that suck at extreme tessellation and have more issues with anything above 4XAA, and often at 4XAA.

The 5770 is an eyefinity card and runs 5760X1200 too.

I guess none of you will ever know until you try it, and it appears none of you have spent the money and become disappointed turning down the eye candy settings - so blabbering about resolutions is all you have left.

_vor_ - Tuesday, May 8, 2012

"... blabbering..." Pot, meet kettle.

CeriseCogburn - Sunday, May 6, 2012

They cost $400 to $2,000 plus, not $150 like the 242 1080p. Thanks for playing.

hechacker1 - Monday, May 7, 2012

Nope, you can already get IPS, 27", 2560x1440 panels (the same that Apple uses) for $400. They're rare, but currently they are building them in batches of 1000 to see how strong demand is for them.

Sure the 120Hz will sort of go to waste due to the slow IPS switching speed, but it will accept that signal with 0 input lag.

The only problem is that only the 680 seems to have a RAMDAC fast enough to do 120Hz. Radeons tend to cap out at 85Hz.

marine73 - Monday, May 7, 2012

After checking Newegg, it would seem that, unfortunately for Nvidia, this will be another piece of vaporware. Perhaps they should scale the Keplers to 22nm and contract Intel to fab them, since TSMC has major issues with 28nm. Just a thought.

marine73 - Monday, May 7, 2012

I guess I should retract my comments about TSMC as other customers are not experiencing supply issues with 28nm parts. Apparently the issues are with Nvidia's design, which may require another redo. I'm guessing AMD will be out with their 8000 series before Nvidia gets their act together. Sad because I have used several generations of Nvidia cards and was always happy with them.