NVIDIA GeForce GTX 680 Review: Retaking The Performance Crown

by Ryan Smith on March 22, 2012 9:00 AM EST

Theoreticals

As with any new architecture, we want to take a few moments to look at theoretical performance. These numbers shouldn’t be taken too seriously for cross-vendor comparisons, but they often tell us a good deal about the architectural improvements that occur from one generation to the next.

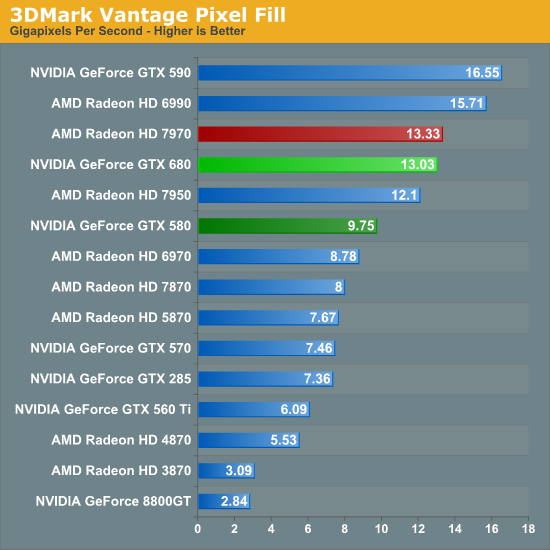

Our first theoretical test is perhaps the most perplexing: 3DMark Vantage’s pixel fill test. Typically this test is memory bandwidth bound as the nature of the test has the ROPs pushing as many pixels as possible with as little overhead as possible, which in turn shifts the bottleneck to a mix of ROP performance and the memory bandwidth needed to feed those ROPs.

Compared to the GTX 580, the GTX 680 has almost exactly the same amount of memory bandwidth (192GB/sec) and only 86% of the theoretical ROP performance (32Gpix vs. the GTX 580’s 37Gpix). In short, it shouldn’t outperform the GTX 580 here, and yet it outperforms the 580 by 33%.

Why does it do this? That’s the hard thing to answer. As we mentioned in our look at GK104’s architecture, NVIDIA did make some minor incremental improvements to their ROPs coming from GF114, such as slightly improved compression and improved polygon merging. One of those may very well be the contributing factor, particularly the compression improvements, since this test is typically memory bandwidth bottlenecked. Alternatively, it’s interesting to note that the performance difference between the two video cards is almost identical to the difference in their core clocks. GTX 560 Ti’s results tend to blow a hole in this theory, but it bears consideration.
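The paper numbers behind that comparison can be reproduced with a few lines of arithmetic. The sketch below is illustrative only: the ROP counts, core clocks, bus widths and memory data rates are the commonly published reference specs for the two cards, assumed here rather than taken from the text above, and the `Card` class and its method names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    """Reference specs (assumed public figures, not stated in the article text)."""
    name: str
    rops: int          # render output units
    core_mhz: float    # base core clock in MHz
    bus_bits: int      # memory bus width in bits
    mem_mtps: float    # effective memory data rate in MT/s

    def pixel_fill_gpix(self) -> float:
        # One pixel per ROP per clock, expressed in Gpixels/sec.
        return self.rops * self.core_mhz / 1000.0

    def bandwidth_gbs(self) -> float:
        # Bytes per transfer times transfers per second, in GB/sec.
        return self.bus_bits / 8 * self.mem_mtps / 1000.0


GTX580 = Card("GTX 580", rops=48, core_mhz=772.0, bus_bits=384, mem_mtps=4008.0)
GTX680 = Card("GTX 680", rops=32, core_mhz=1006.0, bus_bits=256, mem_mtps=6008.0)

for card in (GTX580, GTX680):
    print(f"{card.name}: {card.pixel_fill_gpix():.1f} Gpix/s, "
          f"{card.bandwidth_gbs():.1f} GB/s")

rop_ratio = GTX680.pixel_fill_gpix() / GTX580.pixel_fill_gpix()
clock_ratio = GTX680.core_mhz / GTX580.core_mhz
print(f"ROP throughput ratio (680/580): {rop_ratio:.2f}")
print(f"Core clock ratio (680/580):     {clock_ratio:.2f}")
```

On these assumed figures the GTX 680 lands at a little under 90% of the GTX 580’s theoretical pixel rate with essentially identical memory bandwidth, while the core clock ratio comes out at about 1.30, which is why the clock speed explanation is tempting.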

In any case, it’s an interesting turn of events and hopefully one that isn’t simply an edge case. As we’ve seen in our benchmarks, GTX 680 has strong performance – even if its lead compared to the 7970 diminishes with resolution – but compared to the GTX 580 in particular it needs strong ROP performance across all games in order to deliver good performance at high resolutions and with anti-aliasing.

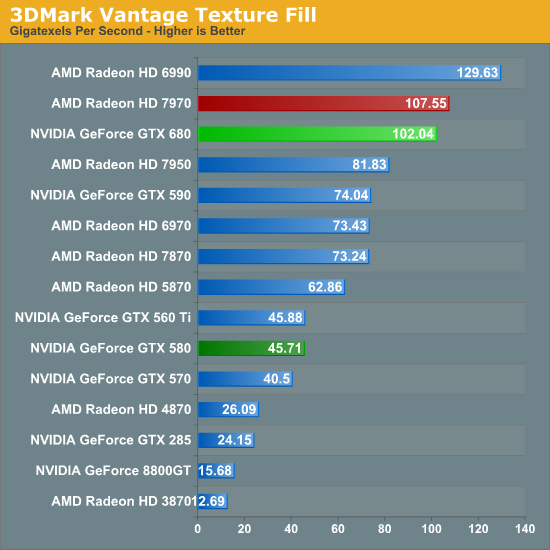

Our second theoretical test is 3DMark Vantage’s texture fill test, which to no surprise has the GTX 680 handily clobbering all prior NVIDIA cards. NVIDIA’s inclusion of 128 texture units on GK104 versus 64 on their previous generation GPUs gives the GTX 680 far better texturing performance. The 30%+ core clock difference only serves to further widen the gap.
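As a rough check on how those two factors compound, the sketch below multiplies texture units by core clock. The texture unit counts come from the paragraph above; the core clocks (772MHz for GTX 580, 1006MHz for GTX 680) are assumed reference specs, and the function name is hypothetical. It models peak rate only, assuming one texel per unit per clock.

```python
def texel_rate_gtexels(texture_units: int, core_mhz: float) -> float:
    """Peak texel rate in Gtexels/sec, assuming one texel per unit per clock."""
    return texture_units * core_mhz / 1000.0


# Texture unit counts are from the text; clocks are assumed reference specs.
gtx580_rate = texel_rate_gtexels(64, 772.0)
gtx680_rate = texel_rate_gtexels(128, 1006.0)

unit_ratio = 128 / 64          # twice the texture units
clock_ratio = 1006.0 / 772.0   # roughly 30% higher core clock
combined = gtx680_rate / gtx580_rate

print(f"GTX 580: {gtx580_rate:.1f} Gtexels/s")
print(f"GTX 680: {gtx680_rate:.1f} Gtexels/s")
print(f"Combined advantage: {combined:.2f}x "
      f"({unit_ratio:.0f}x units times {clock_ratio:.2f}x clock)")
```

The theoretical gap works out to roughly 2.6x; measured results in a synthetic test need not match that figure exactly.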

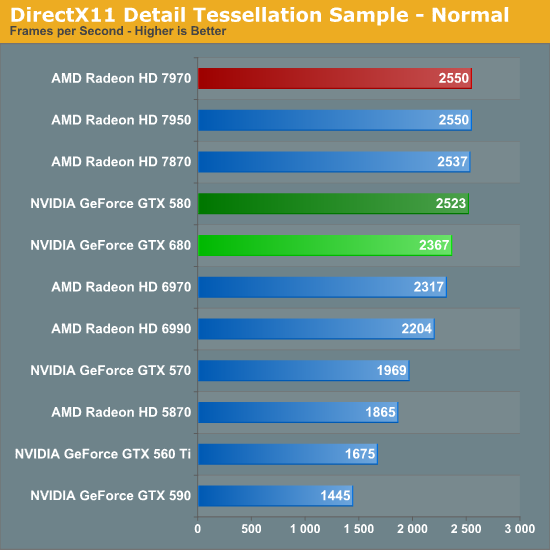

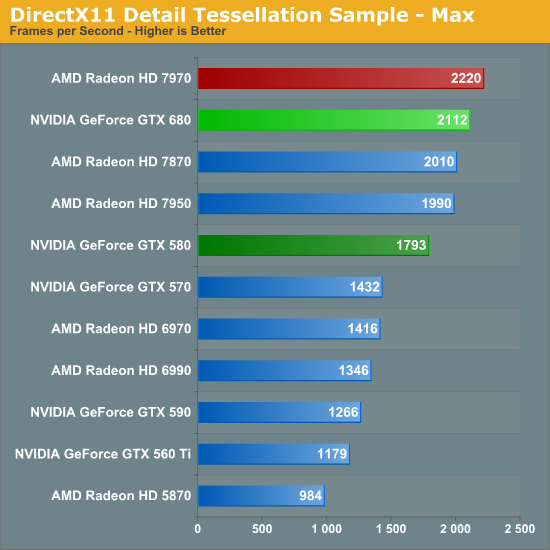

Our third theoretical test is the set of settings we use with Microsoft’s Detail Tessellation sample program out of the DX11 SDK. Overall while NVIDIA didn’t make any significant changes to their tessellation hardware (peak triangle rate is still 4/cycle), they have been working on further improving performance at absurdly high tessellation factors. You can see some of this in action at the max factor setting, but even then we’re running into a general performance wall since the Detail Tessellation program can’t go to the absolute highest tessellation factors NVIDIA’s hardware supports.
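Even with the per-clock rate unchanged, the absolute peak triangle rate still moves with the core clock. A minimal sketch, using the 4 triangles/cycle figure from the paragraph above and assumed reference core clocks (772MHz and 1006MHz); the function name is hypothetical:

```python
def peak_triangle_rate_gtris(tris_per_clock: int, core_mhz: float) -> float:
    """Peak setup rate in billions of triangles per second."""
    return tris_per_clock * core_mhz / 1000.0


TRIS_PER_CLOCK = 4  # from the text; identical on both cards

gtx580_tris = peak_triangle_rate_gtris(TRIS_PER_CLOCK, 772.0)   # assumed clock
gtx680_tris = peak_triangle_rate_gtris(TRIS_PER_CLOCK, 1006.0)  # assumed clock

print(f"GTX 580: {gtx580_tris:.2f} Gtris/s")
print(f"GTX 680: {gtx680_tris:.2f} Gtris/s")
print(f"Gain from clock alone: {gtx680_tris / gtx580_tris - 1:.0%}")
```

Any gain beyond what the clock provides would have to come from the efficiency work at high tessellation factors rather than from the peak rate.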

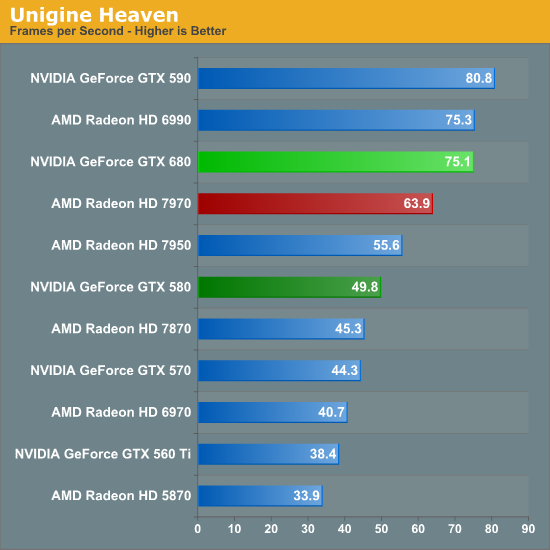

Our final theoretical test is Unigine Heaven 2.5, a benchmark that straddles the line between a synthetic benchmark and a real-world benchmark as the engine is licensed but no notable DX11 games have been produced using it yet. In any case the Heaven benchmark is notable for its heavy use of tessellation, which means it’s largely a proxy test for tessellation performance. Here we can see the GTX 680 shoot well ahead of the GTX 580 – by more than we saw in the DX11 Detail Tessellation sample – but at the same time there’s a lot more going on in Heaven than just tessellation.

Honestly at this point in time I’m not sure just how much more tessellation performance is going to matter. Until DX11 is the baseline API for games, tessellation is still an add-on feature, which means it’s being used to add fine detail to specific models rather than being used on everything in a game world. This demands good tessellation at high factors but at the same time it’s subject to diminishing returns on the improvement to image quality as triangles reach single pixel sizes and smaller. To that end I’m still waiting to see the day where we see tessellation scale similarly to textures – that is by using full MIP chaining of displacement maps – at which point we can evaluate tessellation performance similar to texture performance when it comes to both measuring the performance hit and evaluating the difference in image quality.
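The diminishing-returns argument can be made concrete with an idealized model. The sketch below assumes a triangle patch whose edges are each split into `tess_factor` segments, which yields `tess_factor ** 2` sub-triangles. This is a simplification and not the exact partitioning the DX11 tessellator produces, and the patch's on-screen area is a hypothetical value chosen purely for illustration.

```python
def pixels_per_triangle(patch_area_px: float, tess_factor: int) -> float:
    """Average on-screen pixels per sub-triangle under uniform subdivision."""
    if tess_factor < 1:
        raise ValueError("tess_factor must be at least 1")
    # Splitting every edge into n segments produces n**2 sub-triangles.
    return patch_area_px / (tess_factor ** 2)


PATCH_AREA_PX = 2048.0  # hypothetical screen-space area of one patch

for factor in (1, 2, 4, 8, 16, 32, 64):  # 64 is the DX11 maximum factor
    area = pixels_per_triangle(PATCH_AREA_PX, factor)
    note = "  <- sub-pixel triangles" if area < 1.0 else ""
    print(f"factor {factor:>2}: {area:8.2f} px per triangle{note}")
```

In this model each doubling of the factor quadruples the triangle count, so a patch of this size goes from comfortably visible triangles to sub-pixel ones within the last couple of steps, and it is at that point that the extra geometry stops buying visible detail.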

404 Comments

CeriseCogburn - Sunday, March 25, 2012 - link

They get to show amd "catching up" so they like it. They get to try to puke out Kepler's 2G ram and make amd's 3G shine, so they "can't resist" - and when frame rates fall below playable, "they all of a sudden" "don't care", even when the puking attempt fails. They haven't been able to resist since the 580 w 1.5G vs 2G 6950/6970 it was a great blame the low ram game for any changes.

Then they checked 6950 1G 2G and 2G was slower...but so what.

Now 2G Kepler has put the ram lie to rest even in triple monitor gaming... but any lesser win or loss or slimming margin can still be blamed on that, it gets people "buying the amd card" and they get real frustrated here when they can't figure out why Nvidia is winning when they don't believe it should be. It's always expressed in the article how shocked they are. So ram is a convenient scapegoat. It's always used a "future proofing" notion as well, though no evidence has ever surfaced for that.

_vor_ - Sunday, March 25, 2012 - link

What's with all the nerdrage? Do you work for NVIDIA?

formulav8 - Sunday, March 25, 2012 - link

Get over yourself already. NVidia doesn't even like You. Can't believe how people feel about a stinking stupid corporation.

CeriseCogburn - Tuesday, March 27, 2012 - link

It's not about a corporation it's about facts guy. Facts mean my friends and my readers get the best they can get for the buck they are paying.

Just because amd is behind and therefore lies are told, does not mean the truth should not shine through !

The truth shall shine through !

AnnonymousCoward - Sunday, March 25, 2012 - link

Personally, I don't care if the card has 64kB of RAM. Or 8 million stream processors. Performance, cost, power, and noise are what matter.

And back to my point: performance in the 20-50fps range at 2560x1600 4xAA is meaningless and not a criteria for judgment.

CeriseCogburn - Tuesday, March 27, 2012 - link

I never disagreed with that point I merely explained why things are done in such and such a way while ignoring other things.

It's not difficult at all.

Zephyr66z0r - Sunday, March 25, 2012 - link

Well I understand 'some' of the tech behind the GTX680 one thing stands out. 256bit bus width, when you see that with nvidia its along the lines of GTX560.... so does that mean there's going be a 384bit (mid-high) or 512bit(high-enth, 256bit + 256bit + 2 GPU) card/s coming out?

I can't wait, anyone done SLi with it yet?

dmnwlv - Sunday, March 25, 2012 - link

First off, I think nVidia has done a good job with the new GTX680.

However I do not need a game that is already running at 100+ frames to be even faster.

It needs to be fast at where it counts - games that are still running slow at 60 fps and below.

For this, of 3 relevant games, nVidia is faster at just one of them. Experience (if you also remember) has shown that the results could be very different once frames for some settings/games hit below 60fps.

Hence I cannot agree with all the big f about GTX680 is so much faster fuss.

You guys are led by the heart (much alike ati fanboys you used to call) than the brain.

And all other compute tests are non-relevant to me (and majority of you to be honest).

gramboh - Monday, March 26, 2012 - link

What about a little game (that several million people play) called Battlefield 3? NV has a massive lead with the GTX 680 over the 7970/7950. AT only benches single player, but the game is even more punishing in 64 player multiplayer. Having a smooth framerate at max detail with 4X AA/16X AF is a big competitive advantage and makes the game significantly more enjoyable.

Kind of disappointed the card isn't faster in Witcher 2, which I think has the best graphics of a single player game.

CeriseCogburn - Tuesday, March 27, 2012 - link

Have all of you people repeating that FUD forgotten Shogun 2 Total War ?

It's the hardest game in the bench set according to anandtech...

How is it that THE HARDEST GAME that Nvidia swept top to bottom at every resolution is suddenly and completely forgotten about, while we hear these other FUD declarations ?

How does that work, just repeat what some other mistaken fudder spewed ?