NVIDIA GeForce GTX 680 Review: Retaking The Performance Crown

by Ryan Smith on March 22, 2012 9:00 AM EST

GPU Boost: Turbo For GPUs

Now that we’ve had a chance to take a look at the Kepler architecture, let’s jump into features. We’ll start with the feature that’s going to have the biggest impact on performance: GPU Boost.

Much like we’ve seen with CPUs in previous years, GPUs are reaching a point where performance is being limited by overall power consumption. Until the last couple of years GPU power consumption has been allowed to slowly drift up with each generation, allowing for performance to scale to an incredible degree. However for many of the same reasons NVIDIA has been focusing on efficiency in general, GPUs are being pressured to do more without consuming more.

The problem of course is compounded by the fact that there is a wide range of possible workloads for a GPU, much like there is for a CPU. With the need to design video cards around specific TDPs for both power supply and heat dissipation reasons, the goal becomes one of maximizing your performance inside of your assigned TDP.

The answer to that problem in the CPU space is turbo boosting – that is increasing the clockspeed of one or more CPU cores so long as the chip as a whole remains at or under its TDP. By using turbo, Intel and AMD have been able to both maximize the performance of lightly threaded applications by boosting a handful of cores to high speeds, and at the same time maximize heavily threaded performance by boosting a large number of cores by little to none. For virtually any CPU-bound workload the CPU can put itself into a state where the appropriate execution units are making the most of their TDP allocation.

Of course in the GPU world things aren’t that simple – for starters we don’t have a good analogy for a lightly threaded workload – but the concept is similar. GPUs need to be able to run demanding tasks such as Metro 2033 or even pathological applications like FurMark while staying within their designated TDPs, and at the same time they need to be sure to deliver good performance for compute applications and games that aren’t quite so demanding. Or put another way, tasks that are GPU limited but aren’t maxing out every aspect of the GPU need to be able to get good performance without being held back by the need to keep heavy workloads in check.

In 2010 AMD took a stab at this scenario with PowerTune, which was first introduced on the Radeon HD 6900 series. With PowerTune AMD could set their clockspeeds relatively high, and should any application demand too much of the GPU, PowerTune would throttle down the GPU in order to avoid going over its TDP. In essence with PowerTune the GPU could be clocked too high, and simply throttled down if it tried to draw too much power. This allowed lighter workloads to operate at higher clockspeeds, while keeping power consumption in check for heavy workloads.

With the introduction of Kepler NVIDIA is going to be tackling this problem for their products, and their answer is GPU Boost.

In a nutshell, GPU Boost is turbo for the GPU. With GPU Boost NVIDIA is able to increase the core clock of the GTX 680 beyond its 1006MHz base clock, and like turbo on CPUs this is based on the power load, the GPU temperature, and the overall quality of the GPU. Given the right workload the GTX 680 can boost by 100MHz or more, while under a heavy workload the GTX 680 may not move past 1006MHz.

GPU Boost adds a new wrinkle to performance of course, but ultimately there are two numbers to pay attention to. The first number is what NVIDIA calls the base clock: this is another name for the regular core clock, and it represents the minimum full load clock for the GTX 680; when operating at its full 3D clocks, the GTX 680 will never drop below this number.

The second number is what NVIDIA calls the boost clock, and this one is far more nebulous, as it relates to the operation of GPU Boost itself. With GPU Boost NVIDIA does not have an explicit top clock; they’re letting chip quality play a significant role in GPU Boost. Because GPU Boost is based around power consumption and temperatures, higher quality GPUs that operate with lower power consumption can boost higher than lower quality GPUs with higher power consumption. In essence the quality of the chip determines its boost limit under normal circumstances.

Accordingly, the boost clock is intended to convey what kind of clockspeeds buyers can expect to see with the average GTX 680. Specifically, the boost clock is based on the average clockspeed of the average GTX 680 that NVIDIA has seen in their labs. This is what NVIDIA had to say about the boost clock in their reviewer’s guide:

The “Boost Clock” is the average clock frequency the GPU will run under load in many typical non-TDP apps that require less GPU power consumption. On average, the typical Boost Clock provided by GPU Boost in GeForce GTX 680 is 1058MHz, an improvement of just over 5%. The Boost Clock is a typical clock level achieved running a typical game in a typical environment

In other words, when the average GTX 680 is boosting it reaches 1058MHz on average.

Ultimately NVIDIA and their customers are going to go through some teething issues on this, and there’s no way around it. Although the idea of variable performance isn’t a new one – we already see this to some degree with CPU turbo – this is the first time we’ve seen something like this in the GPU space, and it’s going to take some time to get used to.

In any case while we can’t relate to you what the average GTX 680 does with GPU Boost, we can tell you about GPU Boost based on what we’ve seen with our review sample.

First and foremost, GPU Boost operates on the concept of steps, analogous to multipliers on a CPU. Our card has 9 steps, each 13MHz apart, ranging from 1006MHz to 1110MHz. And while it’s not clear whether every GTX 680 steps up in 13MHz increments, based on NVIDIA’s boost clock of 1058MHz this would appear to be the case, as that would be 4 steps over the base clock.
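The step arithmetic above can be checked with a short sketch. It assumes that every step is exactly 13MHz apart, which is what our sample showed but is not something NVIDIA has confirmed for all cards; the function and constant names are purely illustrative.

```python
BASE_CLOCK_MHZ = 1006  # base clock of the GTX 680
STEP_SIZE_MHZ = 13     # step spacing observed on our review sample (assumed uniform)
NUM_STEPS = 9          # number of steps observed on our review sample


def boost_steps(base=BASE_CLOCK_MHZ, step=STEP_SIZE_MHZ, count=NUM_STEPS):
    """Return the step clocks in MHz, lowest (base clock) first."""
    return [base + i * step for i in range(count)]


steps = boost_steps()
print(steps[0], steps[-1])  # 1006 1110

# NVIDIA's quoted boost clock sits this many steps above the base clock.
print(steps.index(1058))    # 4

# Gain of the quoted boost clock over the base clock, in percent.
print(round((1058 - BASE_CLOCK_MHZ) / BASE_CLOCK_MHZ * 100, 1))  # 5.2
```

The last figure lines up with the "just over 5%" improvement quoted in NVIDIA's reviewer's guide.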

At each step our card uses a different voltage, listed in the table below. We should note that we’ve seen different voltages reported for the same step in some cases, so it’s not entirely clear what’s going on. In any case we’re listing the most common voltage we’ve recorded for each step.

GeForce GTX 680 GPU Boost Step Table

| Frequency | Voltage |
| --- | --- |
| 1110MHz | 1.175V |
| 1097MHz | 1.15V |
| 1084MHz | 1.137V |
| 1071MHz | 1.125V |
| 1058MHz | 1.125V |
| 1045MHz | 1.112V |
| 1032MHz | 1.100V |
| 1019MHz | 1.075V |
| 1006MHz | 1.062V |

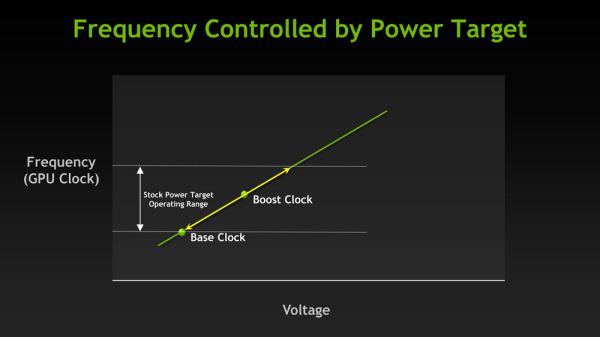

As for deciding what clockspeed to step up to, GPU Boost determines this based on power consumption and GPU temperature. NVIDIA has on-card sensors to measure power consumption at the rails leading into the GPU, and will only allow the video card to step up so long as it’s below the GPU Boost power target. This target isn’t published, but NVIDIA has told us that it’s 170W. Note that this is not the TDP of the card, which is 195W. Because NVIDIA doesn’t have a true throttling mechanism with Kepler, their TDP is higher than their boost target as heavy workloads can push power consumption well over 170W even at 1006MHz.

Meanwhile GPU temperatures also play an important role in GPU Boost. Our sample could only hit the top step (1110MHz) if the GPU temperature was below 70C; as soon as the GPU reached 70C it would be brought down to the next highest step of 1097MHz. This means that the top step is effectively unsustainable on the stock GTX 680, as there are few if any applications that are both intensive enough to require high clockspeeds and light enough to not push GPU temperatures up.
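To make the interaction between the power target and the temperature limit concrete, here is a rough sketch of a step-selection rule. This is not NVIDIA's algorithm, which has not been published. It assumes the card moves one step at a time, that it backs off one step when at or over the power target, and that the 70C limit only affects the top step, as we saw on our sample. The function `next_step` and all constants are hypothetical.

```python
STEPS_MHZ = [1006, 1019, 1032, 1045, 1058, 1071, 1084, 1097, 1110]
POWER_TARGET_W = 170.0        # GPU Boost power target, per NVIDIA
TOP_STEP_TEMP_LIMIT_C = 70.0  # top step unavailable at or above this (our sample)


def next_step(current_index, power_w, temp_c, power_target_w=POWER_TARGET_W):
    """Return the index of the step to run next (hypothetical controller)."""
    top = len(STEPS_MHZ) - 1
    if power_w < power_target_w:
        # Below the power target: allowed to step up, but not past the top step.
        candidate = min(current_index + 1, top)
    else:
        # Assumption: at or over the target the card backs off one step,
        # but never drops below the base clock (index 0).
        candidate = max(current_index - 1, 0)
    if temp_c >= TOP_STEP_TEMP_LIMIT_C:
        # At 70C or hotter the top step is off limits.
        candidate = min(candidate, top - 1)
    return candidate


# A cool card with power headroom climbs from 1097MHz to 1110MHz.
print(STEPS_MHZ[next_step(7, power_w=150.0, temp_c=65.0)])  # 1110
# Once it reaches 70C it is held to 1097MHz.
print(STEPS_MHZ[next_step(8, power_w=150.0, temp_c=70.0)])  # 1097
```

Note that in this sketch a heavy workload at the base clock simply stays at 1006MHz even when it draws more than 170W, which matches the behavior described above: there is no throttling below the base clock.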

Finally, with the introduction of GPU Boost overclocking has been affected as well. Rather than directly controlling the core clock, overclocking is accomplished through the combined manipulation of the GPU Boost power target and the use of a GPU clock offset. Power target manipulation works almost exactly as you’d expect: you can adjust the GPU Boost power target anywhere from -30% to +32%, similar to how adjusting the PowerTune limit works on AMD cards. Increasing the power target allows the video card to pull more power, thereby allowing it to boost to higher steps than is normally possible (but no higher than the max step), while decreasing the power target keeps it from boosting at all.

The GPU offset meanwhile manipulates the steps themselves. By adjusting the GPU offset all of the GPU Boost steps are adjusted by roughly an equal amount, depending on what clocks the PLL driving the GPU can generate. For example, a +100MHz offset clock would increase the 1st step to 1120MHz, and so on up to the top step, which would be increased to 1210MHz.

While each factor can be adjusted separately, it’s adjusting both factors together that truly unlocks overclocking. Adjusting the GPU offset alone won’t achieve much if most workloads are limited by GPU Boost’s power target, and adjusting the power target alone won’t improve the performance of workloads that are already allowed to reach the highest step. By combining the two you can increase the GPU clock and at the same time increase the power target so that workloads are actually allowed to hit those new clocks.
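The two overclocking controls can be sketched as simple arithmetic on the stock values. This assumes a straight addition for the offset and a straight percentage for the power target. It ignores the PLL granularity mentioned above, so the clocks and wattages that real hardware or an overclocking utility reports need not match these values exactly. The function names are illustrative and do not belong to any utility's API.

```python
STOCK_STEPS_MHZ = [1006, 1019, 1032, 1045, 1058, 1071, 1084, 1097, 1110]
STOCK_POWER_TARGET_W = 170.0  # GPU Boost power target, per NVIDIA


def apply_offset(steps, offset_mhz):
    """Shift every boost step by the same offset (ignores PLL granularity)."""
    return [clock + offset_mhz for clock in steps]


def scale_power_target(percent, stock_w=STOCK_POWER_TARGET_W):
    """Scale the power target by a percentage within the -30% to +32% range."""
    if not -30 <= percent <= 32:
        raise ValueError("power target adjustment must be between -30 and +32")
    return stock_w * (1 + percent / 100)


# A +100MHz offset moves the top step from 1110MHz to 1210MHz.
print(apply_offset(STOCK_STEPS_MHZ, 100)[-1])  # 1210

# Raising or lowering the power target changes how much power the card may
# draw before it stops stepping up.
print(round(scale_power_target(-16), 1))
print(round(scale_power_target(32), 1))
```

Keeping the two adjustments as separate functions mirrors how they behave in practice: the offset changes which clocks exist, while the power target changes how often the card is allowed to reach them.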

On that note, overclocking utilities will be adding support for GPU Boost over the coming weeks. The first overclocking utility with support for GPU Boost is EVGA’s Precision X, the latest rendition of their Precision overclocking utility. NVIDIA supplied Precision X Beta 20 with our review samples, and as we understand it that will be made available shortly for GTX 680 buyers.

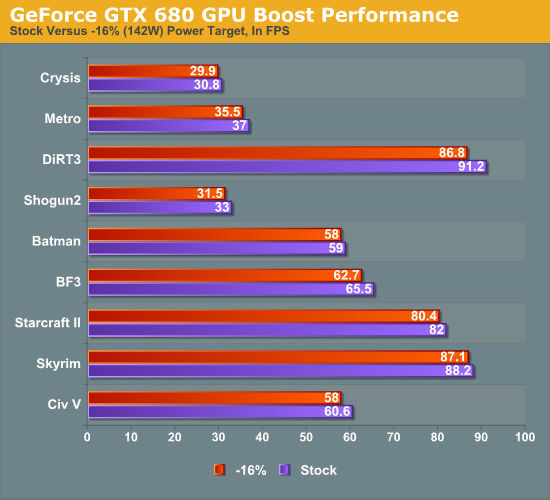

Finally, while we’ll go into full detail on overclocked performance in a bit, we wanted to quickly showcase the impact of GPU Boost, both on regular performance and on overclocking. First up, we ran all of our benchmarks at 2560 with the power target for GPU Boost set to -16%, which reduces the power target to roughly 142W. While GPU Boost cannot be disabled outright, this was enough to ensure that it almost never activated.

As is to be expected, the impact of GPU Boost varies depending on the game, but overall we found that enabling GPU Boost on our card only improves performance by an average of 3%, and by no more than 5%. While this is effectively free performance, it also is a stark reminder that GPU Boost isn’t nearly as potent as turboing on a CPU – at least not quite yet. As there’s no real equivalent to the lightly threaded workload for GPUs, the need for a wide range of potential GPU Boost clocks is not nearly as great as the need for high turbo clocks on a CPU. Even a light GPU workload is relatively heavy when graphics itself is an embarrassingly parallel task.

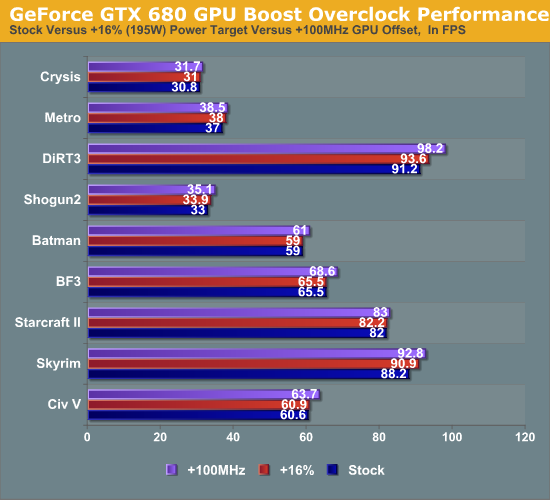

Our other quick look is at overclocking. The following is what our performance looked like at 2560 with stock GPU Boost settings, a power target of +16% (195W), and a GPU offset of +100MHz.

Overall raising the GPU offset is much more effective than raising the power target to improve performance, reflecting the fact that in our case most games were limited by the GPU Boost clock rather than the power target at least some of the time.

404 Comments

_vor_ - Tuesday, March 27, 2012 - link

All I read is blah blah blah NVIDIA blah blah nerdrage blah blah.

CeriseCogburn - Tuesday, March 27, 2012 - link

I'll translate for the special people that need more help.

AMD's IQ has been bad since 5000 series, with 6000 series also screwey.

You will have shimmering in game textures and lines in shading transitions on screen since their algorithm has been messed up for years, even though it is angle independent and a perfect circle, IT SUCKS in real life - aka gaming.

Nvidia doesn't have this problem, and hasn't had it since before the 5000 series amd cards.

AMD's 7000 series tries once again to fix the ongoing issues, but fails in at least 2 known places, having only Dx9 support, but may have the shimmering and shading finally tackled and up to Nvidia quality, at least in one synthetic check.

_vor_ - Tuesday, March 27, 2012 - link

How much is NVIDIA paying you to babysit this discussion and zealously post?

"It's better to keep quiet and people think you are a fool, than to open your mouth and prove them right."

CeriseCogburn - Tuesday, March 27, 2012 - link

Words right from anandtechs articles, and second attack.

A normal person would be thankful for the information.

CeriseCogburn - Tuesday, March 27, 2012 - link

Did you notice the Nvidia card won Civ5 by more than the amd did in Metro2033, but Civ5 is declared a tie, and well we know what everyone is claiming for Metro2033.

I noticed that and thought it was quite interesting how that was accomplished.

BoFox - Monday, March 26, 2012 - link

AMD's angle-independent AF is still flawed in that it's not fully trilinear when it comes to high-frequency textures (noisy moire). You'd be seeing lines of transition when everything suddenly becomes a bit blurry in a distance with these kinds of grainy textures.

It's rather subjective, though.

Nvidia does offer up to 32x CSAA with TRAA (transparent, or alpha textures) in DX10/11 games for superb IQ without having to use brute-force SSAA. AMD does not currently support "forced" AAA (Adaptive AA) on alpha textures in DX10/11 games, and the SSAA support in DX10/11 games was finally announced in beta driver support form with HD 7970 cards.

Transparency AA has been around since 2005, and Nvidia actually maintained the quality IQ options for DX10/11 games compared to DX9 games all along.

ati666 - Monday, March 26, 2012 - link

did AMD fix this problem in their HD7970 or not?

CeriseCogburn - Tuesday, March 27, 2012 - link

We will find out what's wrong with it a year from now when the next series big 8000 is launched, until then denials and claims it's as good as nvidia are standard operating procedure, and spinning useless theoretical notions that affect gameplay exactly zero and have amd IQ disadvantages will be spun in a good light for amd to get all the amd fans claiming the buzzwords are a win.

That will work like it has for the last 3 releases, 4000, 5000, and 6000, and we just heard the 7000 series fixes that fix the 5000 and 6000 crud that was covered up until now in the 7970 release article.

So amd users will suffer bad IQ in several ways while buzzing up words that are spun from this website as notional greatness and perfectness of amd till like, next release... then your question will be answered - just try to not notice anything until then, ok ?

blanarahul - Saturday, March 24, 2012 - link

I was confused as to GPU Boost was necessary or not. Thanks for making the difference clear.

ammyt - Saturday, March 24, 2012 - link

Dafuq y'all saying?

The benchmarks are tight in front of your faces! The 680 is tied with the 7950, which surpasses it by a little, and the 7970 is the leader. The 7950 is cheaper by a little margin, but the 7970 is roughly $80 more expensive. What are y'all fighting for?

If I were to choose between the 680, 7950, 7970, I will choose the 7950, cheaper, and a faster by a little margin than the 680. I don't care how or why (memory clock, architecture, bla bla bla) but the benchmarks are in front of you! Clearly, anandtech is biased towards Nvidia.

(Perhaps they're getting paid from them more than AMD...)