NVIDIA GeForce GTX 680 Review: Retaking The Performance Crown

by Ryan Smith on March 22, 2012 9:00 AM EST

Theoreticals

As with any new architecture, we want to take a few moments to look at theoretical performance. These numbers shouldn’t be taken too seriously for cross-vendor comparison, but they often tell us more about the interesting architectural improvements that occur from one generation to the next.

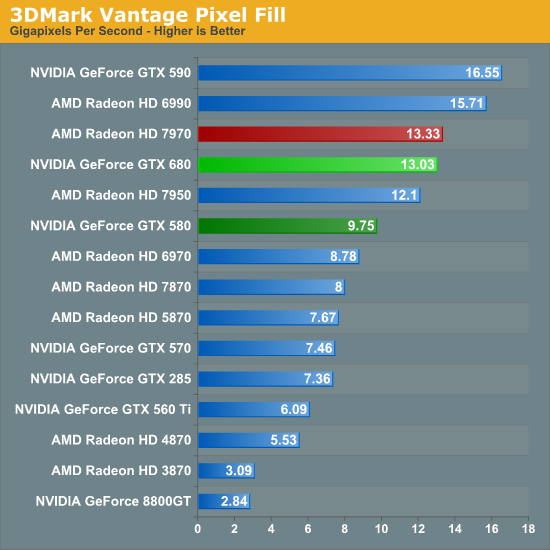

Our first theoretical test is perhaps the most perplexing: 3DMark Vantage’s pixel fill test. Typically this test is memory bandwidth bound as the nature of the test has the ROPs pushing as many pixels as possible with as little overhead as possible, which in turn shifts the bottleneck to a mix of ROP performance and the memory bandwidth needed to feed those ROPs.

Compared to the GTX 580, the GTX 680 has almost exactly the same amount of memory bandwidth (192GB/sec) and only 86% of the theoretical ROP performance (32Gpix/sec vs. 37Gpix/sec). In short, it shouldn’t outperform the GTX 580 here, and yet it outperforms the 580 by 33%.
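To make the arithmetic behind those figures concrete, here is a minimal sketch in Python. The ROP counts and core clocks it uses (48 ROPs at 772MHz for the GTX 580, 32 ROPs at a 1006MHz base clock for the GTX 680) are taken from the cards' published specifications rather than from this page, and the model assumes one pixel per ROP per clock with no GPU Boost, so treat it as a back-of-the-envelope check rather than a measurement.

```python
# Assumed published specs (not stated in this section): ROP count and core clock.
CARDS = {
    "GTX 580": {"rops": 48, "core_mhz": 772},
    "GTX 680": {"rops": 32, "core_mhz": 1006},  # base clock, GPU Boost ignored
}


def pixel_fill_gpix(rops, core_mhz):
    """Theoretical peak pixel fill rate in Gpixels/sec.

    Assumes each ROP writes one pixel per core clock.
    """
    return rops * core_mhz / 1000.0


fill_580 = pixel_fill_gpix(**CARDS["GTX 580"])
fill_680 = pixel_fill_gpix(**CARDS["GTX 680"])

# How much theoretical ROP throughput the GTX 680 has relative to the GTX 580.
rop_ratio = fill_680 / fill_580

# Core clock ratio, for comparison with the measured 33% lead.
clock_ratio = CARDS["GTX 680"]["core_mhz"] / CARDS["GTX 580"]["core_mhz"]

print(f"GTX 580: {fill_580:.1f} Gpix/sec")
print(f"GTX 680: {fill_680:.1f} Gpix/sec")
print(f"ROP throughput ratio (680/580): {rop_ratio:.1%}")
print(f"Core clock ratio (680/580): {clock_ratio:.2f}x")
```

With unrounded inputs the ROP ratio comes out just under 87%; the 86% figure follows from the rounded 32 and 37. The clock ratio works out to roughly 1.30x, which is why the measured 33% lead looks so close to the clock difference.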

Why does it do this? That’s the hard thing to answer. As we mentioned in our look at GK104’s architecture, NVIDIA did make some minor incremental improvements to their ROPs coming from GF114, such as slightly improved compression and improved polygon merging. One of those may very well be the contributing factor, particularly the compression improvements since this is a typically memory bandwidth bottlenecked test. Alternatively, it’s interesting to note that the difference between the two video cards is almost identical to the difference in the core clock. GTX 560 Ti’s results tend to blow a hole in this theory, but it bears consideration.

In any case, it’s an interesting turn of events and hopefully one that isn’t simply an edge case. As we’ve seen in our benchmarks, the GTX 680 has strong performance – even if its lead compared to the 7970 diminishes with resolution – but compared to the GTX 580 in particular it needs strong ROP performance across all games in order to deliver good performance at high resolutions and with anti-aliasing.

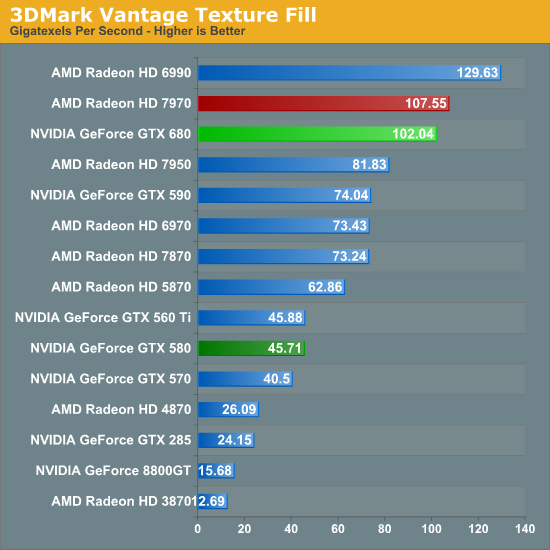

Our second theoretical test is 3DMark Vantage’s texture fill test, which to no surprise has the GTX 680 handily clobbering all prior NVIDIA cards. NVIDIA’s inclusion of 128 texture units on GK104 versus 64 on their previous generation GPUs gives the GTX 680 far better texturing performance. The 30%+ core clock difference only serves to further widen the gap.
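As a rough illustration of how the texture unit count and the clock difference compound, the sketch below multiplies the two out. The texture unit counts come from the text; the 772MHz and 1006MHz core clocks are assumed from the GTX 580 and GTX 680 published specifications, and the one-texel-per-unit-per-clock model is a simplification.

```python
def texel_fill_gtexels(texture_units, core_mhz):
    """Theoretical peak texel fill rate in Gtexels/sec.

    Assumes each texture unit delivers one filtered texel per core clock.
    """
    return texture_units * core_mhz / 1000.0


# Texture unit counts are from the article; clocks are assumed published specs.
gtx580_texels = texel_fill_gtexels(texture_units=64, core_mhz=772)
gtx680_texels = texel_fill_gtexels(texture_units=128, core_mhz=1006)

unit_gain = 128 / 64       # gain from doubling the texture units
clock_gain = 1006 / 772    # gain from the higher core clock
total_gain = gtx680_texels / gtx580_texels

print(f"GTX 580: {gtx580_texels:.1f} Gtexels/sec")
print(f"GTX 680: {gtx680_texels:.1f} Gtexels/sec")
print(f"Units {unit_gain:.2f}x * clock {clock_gain:.2f}x = {total_gain:.2f}x overall")
```

The two gains multiply rather than add, so on paper the GTX 680 has roughly 2.6 times the texturing throughput of the GTX 580.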

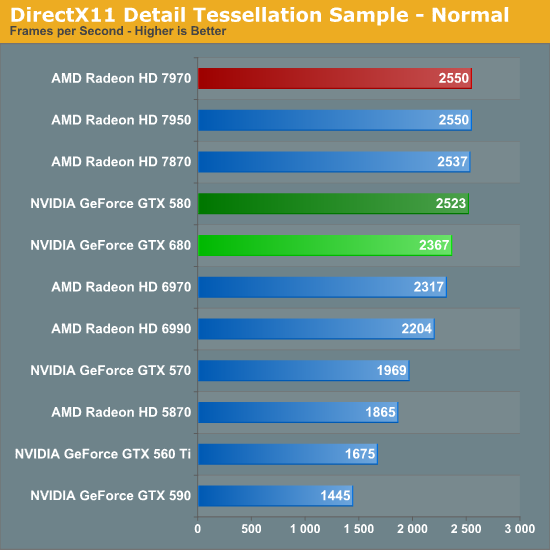

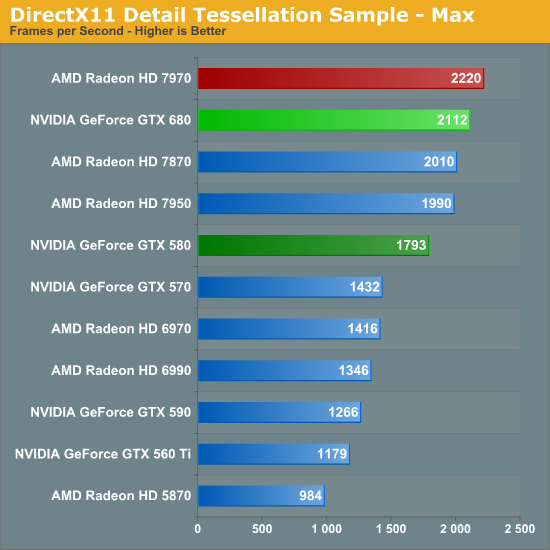

Our third theoretical test is the set of settings we use with Microsoft’s Detail Tessellation sample program out of the DX11 SDK. Overall while NVIDIA didn’t make any significant changes to their tessellation hardware (peak triangle rate is still 4/cycle), they have been working on further improving performance at absurdly high tessellation factors. You can see some of this in action at the max factor setting, but even then we’re running into a general performance wall since the Detail Tessellation program can’t go to the absolute highest tessellation factors NVIDIA’s hardware supports.
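Since the peak triangle rate per clock is unchanged, any gain in peak geometry throughput on paper comes from clock speed alone. A minimal sketch of that calculation follows; the 4 triangles/cycle figure is from the text, while the 772MHz (GTX 580) and 1006MHz (GTX 680) core clocks are assumed from published specifications.

```python
TRIANGLES_PER_CLOCK = 4  # peak rate quoted in the article


def peak_triangles_per_sec(core_mhz, per_clock=TRIANGLES_PER_CLOCK):
    """Theoretical peak triangle rate in triangles/sec at a given core clock."""
    return per_clock * core_mhz * 1e6


# Core clocks below are assumed published specs, not figures from this page.
rate_580 = peak_triangles_per_sec(772)
rate_680 = peak_triangles_per_sec(1006)

print(f"GTX 580: {rate_580 / 1e9:.2f} Gtris/sec")
print(f"GTX 680: {rate_680 / 1e9:.2f} Gtris/sec")
print(f"Gain from clock alone: {rate_680 / rate_580:.2f}x")
```

Any improvement beyond that clock-driven ratio at high tessellation factors would have to come from the efficiency work described above rather than from raw setup rate.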

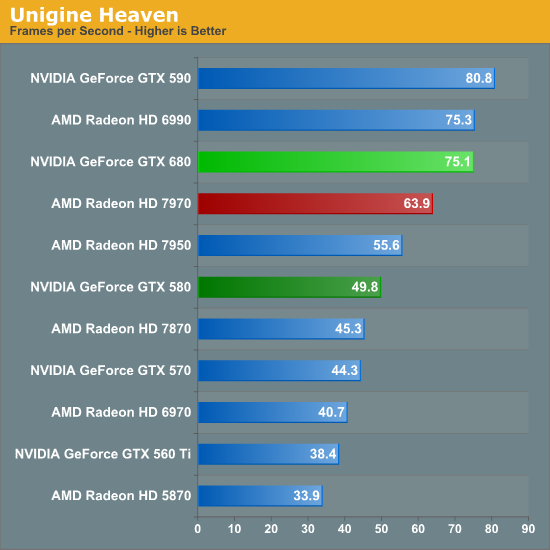

Our final theoretical test is Unigine Heaven 2.5, a benchmark that straddles the line between a synthetic benchmark and a real-world benchmark as the engine is licensed but no notable DX11 games have been produced using it yet. In any case the Heaven benchmark is notable for its heavy use of tessellation, which means it’s largely a proxy test for tessellation performance. Here we can see the GTX 680 shoot well ahead of the GTX 580 – by more than we saw in the DX11 Detail Tessellation sample – but at the same time there’s a lot more going on in Heaven than just tessellation.

Honestly at this point in time I’m not sure just how much more tessellation performance is going to matter. Until DX11 is the baseline API for games, tessellation is still an add-on feature, which means it’s being used to add fine detail to specific models rather than being used on everything in a game world. This demands good tessellation at high factors but at the same time it’s subject to diminishing returns on the improvement to image quality as triangles reach single pixel sizes and smaller. To that end I’m still waiting to see the day where we see tessellation scale similarly to textures – that is by using full MIP chaining of displacement maps – at which point we can evaluate tessellation performance similar to texture performance when it comes to both measuring the performance hit and evaluating the difference in image quality.
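The diminishing-returns argument can be sketched numerically. The example below assumes an idealized quad patch tessellated uniformly with integer partitioning, which yields about 2 × factor² triangles, and a hypothetical patch covering 64×64 pixels on screen; both are illustrative assumptions, not figures from our testing. The factor cap of 64 is the Direct3D 11 maximum.

```python
MAX_D3D11_TESS_FACTOR = 64  # upper limit on tessellation factors in Direct3D 11


def triangles_per_quad_patch(factor):
    """Triangle count for an idealized, uniformly tessellated quad patch.

    A factor of f splits the patch into an f x f grid of quads,
    each of which is two triangles.
    """
    return 2 * factor * factor


def pixels_per_triangle(patch_pixels, factor):
    """Average on-screen pixels covered by each triangle of the patch."""
    return patch_pixels / triangles_per_quad_patch(factor)


# Hypothetical patch footprint: 64 x 64 pixels on screen.
PATCH_PIXELS = 64 * 64

for factor in (1, 4, 16, 32, MAX_D3D11_TESS_FACTOR):
    tris = triangles_per_quad_patch(factor)
    px = pixels_per_triangle(PATCH_PIXELS, factor)
    note = "sub-pixel" if px < 1 else ""
    print(f"factor {factor:2d}: {tris:5d} triangles, {px:8.2f} px/triangle {note}")
```

Under these assumptions triangle count grows with the square of the factor, so doubling the factor from 32 to 64 quadruples the geometry work while pushing the average triangle below one pixel, where extra triangles no longer add visible detail.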

404 Comments

ET - Thursday, March 22, 2012 - link

Impressive combination of performance and power draw. AMD will have to adjust pricing.

This looks promising for the lower end cards (which are of more interest to me). AMD's 77x0 cards have been somewhat disappointing, and I'll be looking forward to see what NVIDIA can offer in that price bracket and also the 78x0 competition.

rahvin - Friday, March 23, 2012 - link

With 28nm limited (in part because of the TSMC shutdown of the line) we won't see price reductions, the parts are going to be too limited for that to happen unfortunately, that is unless AMD stockpiled tons of chips before the TSMC shutdown. What we might see is AMD releasing drivers or new cards that stop underclocking their chips to keep the TDP so low. From what I've read in the reviews AMD has underclocked their cards significantly and could issue drivers tomorrow that boosts performance 30% but at the sacrifice of increased power consumption.

The 680 appears to be a very nice card, but they tossed the compute performance out the window to accomplish it, the 580 smokes the 680 in most of the compute benchmarks. I find that disappointing personally and won't be upgrading as from my perspective it's not much of an upgrade against a 580. Shoot, show me a game that strains the 580, with every game produced a console port that is designed for DX9 I'm not sure why anyone bothers upgrading.

Janooo - Thursday, March 22, 2012 - link

Ryan, why you did not include OC79XX as you did with OC GTX460 when 68XX were launched?

Ryan Smith - Thursday, March 22, 2012 - link

Because you guys have made it abundantly clear that you don't want us doing that.

http://www.anandtech.com/show/3988/the-use-of-evga...

Janooo - Thursday, March 22, 2012 - link

"We were honestly afraid that if we didn't include at least a representative of the factory overclocked GTX 460s that we would get accused of being too favorable to AMD. As always, this is your site - you ultimately end up deciding how we do things around here. So I'm asking all of you to chime in with your thoughts - how would you like to handle these types of situations in the future?"

Anand is asking what to do. The article from the link is not proof of that. What are you talking about?

chizow - Thursday, March 22, 2012 - link

I think he's referring to the 620 comments worth of nerdrage more than the article.

prophet001 - Thursday, March 22, 2012 - link

bad nerdrage is bad :(

Janooo - Thursday, March 22, 2012 - link

I see.

Still 680 overclocks/boosts on the fly and 7970 has set clock.

It's hard to compare them.

CeriseCogburn - Thursday, March 22, 2012 - link

If you keep the 680 cool it goes faster - so a good way would be to crank the 680's fan to 100% and watch it further trounce the 7970, right ?

Janooo - Thursday, March 22, 2012 - link

That's the thing. I am not sure 680 can clock higher than 7970. If we do the same for both cards 7970 might end up faster card.