The Opteron 6276: a closer look

by Johan De Gelas on February 9, 2012 6:00 AM EST - Posted in IT Computing, CPUs, Bulldozer, AMD, Opteron, Cloud Computing, Interlagos

MySQL OLAP Analyzed

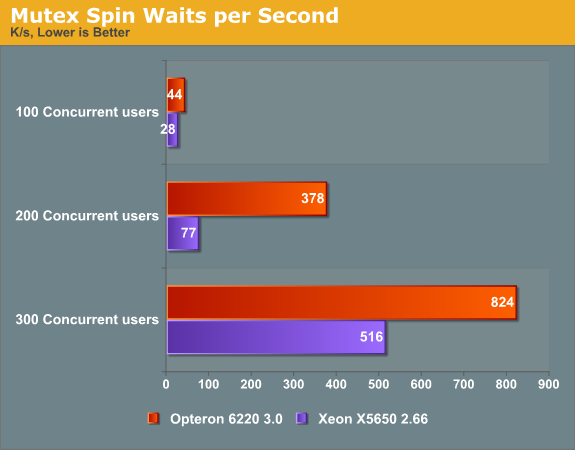

Since threads waiting on mutexes (semaphores) were killing scaling on the old MySQL/InnoDB, we wrote a bash script to monitor the relevant lines in the long and complex output of the "SHOW ENGINE INNODB STATUS \G" command. The status output is rather hard to interpret, as it simply reveals the current values of the counters and shows few ratios, so we sampled it every second to measure the number of spin waits per second. This gave us some very interesting data points.
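The sampling idea can be sketched in a few lines of Python. This is not the bash script used for the article, only a minimal illustration. It assumes the status dump contains a line of the form `Mutex spin waits N, rounds N, OS waits N` (the format differs between MySQL versions), and the helper names `parse_mutex_counters` and `per_second` are hypothetical.

```python
import re

# Assumed line format in the SEMAPHORES section of the status output:
#   Mutex spin waits 1000, rounds 30000, OS waits 200
MUTEX_LINE = re.compile(
    r"Mutex spin waits (\d+), rounds (\d+), OS waits (\d+)"
)


def parse_mutex_counters(status_text):
    """Return (spin_waits, rounds, os_waits) from a status dump, or None."""
    match = MUTEX_LINE.search(status_text)
    if match is None:
        return None
    return tuple(int(group) for group in match.groups())


def per_second(prev, curr, interval_s=1.0):
    """Turn two cumulative counter snapshots into per-second rates."""
    return tuple((c - p) / interval_s for p, c in zip(prev, curr))


# Two made-up snapshots taken one second apart (not measured values).
first = parse_mutex_counters("Mutex spin waits 1000, rounds 30000, OS waits 200")
second = parse_mutex_counters("Mutex spin waits 4000, rounds 120000, OS waits 500")
rates = per_second(first, second)  # (3000.0, 90000.0, 300.0)
```

Because the counters are cumulative, only the difference between two consecutive samples says anything about the current load.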

A few thousand spin waits are nothing to worry about, but a spin wait inherently wastes a small number of CPU cycles, and a few hundred thousand of them waste a lot of CPU cycles without doing any useful computational work. At 200 concurrent users, five times (!) more spin waits happen on the Opteron server than on the Xeon server. Since the Opteron has a slightly higher clock speed than the Xeon (3GHz vs. 2.66GHz), clock speed cannot explain the gap; chances are high that the Opteron's micro-architecture is a lot less efficient at handling mutexes. We will try to profile this and report back in our next article. But this already explains a lot: as the core count goes up, more threads are launched to take advantage of the extra cores, but more threads mean that locking contention plays an increasingly important role.

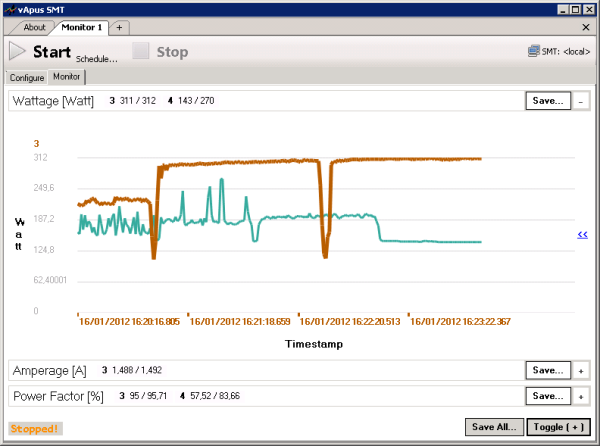

A CPU that is slow at handling mutexes and locking in general can take an even heavier performance penalty in MySQL as the number of context switches rapidly increases. If a waiting thread spins too many times (too many "rounds"), it is put to sleep by the OS and placed in a wait array. The context switch associated with this operation allows a new thread to run on the CPU but costs tens of thousands of CPU cycles. So a high number of spin wait rounds and context switches wastes a lot of CPU cycles and power. The end result is that spin locks and high context switch rates have a devastating effect on the Opteron's power consumption. We integrated the Racktivity energy monitor into our vApus stress testing client. The Opteron is the brown line, the Xeon the blue line.

The dips are the periods between two concurrency levels. The first bulge is 100 concurrent users, the second 200, and the third 300. As you can see, the Opteron is running constantly at full throttle while the Xeon only spikes from time to time. The huge number of spin locks keeps the Opteron cores working hard, while the Xeon cores can take lots of breaks. The Opteron runs almost constantly at 311W (at 200 and 300 concurrent users) while the Xeon runs at 190W with spikes up to 270W. The difference between the areas under the two lines is the difference in energy consumed, which is huge.
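Energy is the integral of power over time, which is why the area under each line matters. A minimal sketch of that calculation, using trapezoidal integration over (time, watts) samples, could look like this. The two traces below are hypothetical shapes built from the wattages quoted above, not the measured Racktivity data, and `energy_joules` is an illustrative helper name.

```python
def energy_joules(samples):
    """Trapezoidal integral of (time_s, watts) samples, returned in joules."""
    total = 0.0
    for (t0, p0), (t1, p1) in zip(samples, samples[1:]):
        total += (p0 + p1) / 2.0 * (t1 - t0)
    return total


# Hypothetical one-minute traces: a flat 311W line versus a 190W
# baseline with a single spike to 270W halfway through.
opteron_trace = [(0, 311.0), (60, 311.0)]
xeon_trace = [(0, 190.0), (30, 270.0), (60, 190.0)]

extra = energy_joules(opteron_trace) - energy_joules(xeon_trace)  # 4860.0 J
```

With real data, the same function would be fed the per-second readings logged by the energy monitor for each server.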

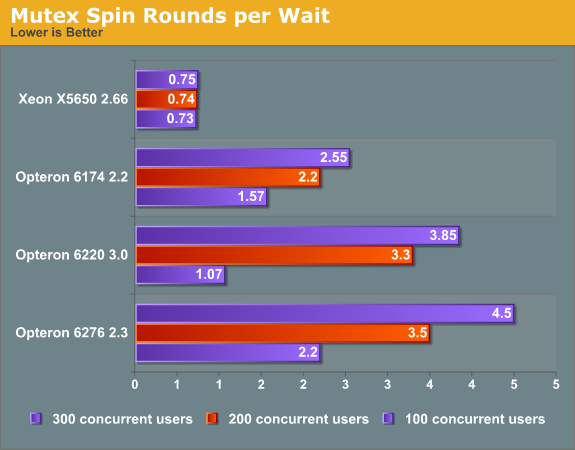

Another way to make the issue clear is to look at the spin rounds per wait. This value shows the number of spin lock rounds per OS wait for a mutex.

Notice that as the core count goes up and the single-threaded performance goes down, the number of spin lock rounds goes up. There is more to it than just "single-threaded" performance, as we can assume that an Opteron 6174 core at 2.2GHz is not faster than an Opteron 6220 core at 3GHz. In fact, at low thread counts, even the Opteron 6276 edged out the Opteron 6174. So as long as the impact of locking contention is not too high, the Opteron 6200 does fine. Once locking contention determines the performance, it is clear that even the old Magny-Cours architecture handles mutexes a lot better.

The Opteron is clearly not the only one to blame, as MS SQL Server apparently has fewer internal contention problems than the MySQL/InnoDB combination. We might also be able to tweak InnoDB to lower the impact by changing the innodb_sync_spin_loops variable and reducing the number of threads running. However, handling semaphores quickly is important in many if not most server workloads. As long as we don't find a way around it, the Opteron 6200 is not an option for any heavily used MySQL OLAP server.
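To make the spin-then-sleep behaviour concrete, here is a minimal Python sketch of the control flow: a thread polls a lock for a bounded number of rounds and only then falls back to a blocking wait. It is not InnoDB's implementation. `threading.Lock` stands in for an InnoDB mutex, `spin_then_wait` is a hypothetical helper, and `max_rounds` plays the role that a spin loop limit such as innodb_sync_spin_loops plays in the server (the value 30 is only illustrative).

```python
import threading


def spin_then_wait(lock, max_rounds=30, timeout=-1.0):
    """Poll `lock` up to `max_rounds` times, then block on it.

    Returns (acquired, rounds_spun, os_wait). A timeout of -1.0 means
    the blocking fallback waits indefinitely.
    """
    for rounds in range(max_rounds):
        # Non-blocking attempt: this is one spin "round".
        if lock.acquire(blocking=False):
            return True, rounds, False
    # Spinning did not help: hand the wait over to the OS.
    acquired = lock.acquire(timeout=timeout)
    return acquired, max_rounds, True


lock = threading.Lock()
uncontended = spin_then_wait(lock)  # lock was free, taken immediately
contended = spin_then_wait(lock, max_rounds=5, timeout=0.01)  # still held
```

A lower round limit means fewer wasted spin cycles per wait but more trips to the OS, each of which costs a context switch; a higher limit does the opposite. That trade-off is what tuning the spin loop limit would try to balance.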

46 Comments


Scali - Saturday, February 11, 2012 - link

"It also reduces throughput."

No, it improves throughput, assuming we are talking about the improvement going from 1 physical core to 2 logical cores.

Clearly two logical cores (on the same physical core) have less throughput than two physical cores, but that's obvious since you only have half the hardware.

And that, together with the fact that Intel's SMT chips have far better single-threaded performance to begin with, results in very good performance per die area (you know, that thing that people used to praise AMD GPUs for).

"Yes, it does, via the implementation of all that shared hardware on the chip."

You can't say that, since there is no non-modular version of Bulldozer (just as there is no non-HT version of the Intel architectures).

However, if you compare a 4-core HT architecture with a non-HT architecture, be that a Core2 Quad or a Phenom X4, Intel's transistorcount is clearly in the same ballpark, so HT does not add much in terms of transistorcount.

With CMT we see little or no indication of reduced transistorcount. AMD's 4-module chips are MUCH larger than regular 4-core chips have been. In fact, AMD's 4-module design is even larger than Intel's 6-core design with HT.

"Two different approaches to the same idea."

I disagree. SMT is a very different idea from CMT (which is a bogus marketing term invented by AMD anyway). CMT is more of a marketing excuse for not having proper SMT, and shows no merit in actual silicon.

"but I don't think we can label one as inherently better than the other yet."

Well clearly we disagree on that then.

I think SMT is clearly inherently better than CMT. SMT has far more flexible sharing of resources than AMD's half-baked approach.

And any theoretical disadvantages (fighting over resources and all that) can be put to bed with benchmarking such as in this review: the disadvantages may exist, but the net performance is unbeatable anyway. A midrange Xeon schools a CMT-based chip of twice the size.

Andexxx - Wednesday, February 15, 2012 - link

Well, there are a lot of factors affecting single-threaded performance in real life. So CMT indeed has its scaling advantages, as the tests suggested. At least most things should be constant when comparing CMT-on and CMT-off, while that is not the case when comparing SMT and CMT on different implementations. Lack of single-threaded performance is not a valid reason to blame CMT. If you want to *prove* that CMT is half-baked marketing crap while SMT is the only solution, what you need is an SMT-based AMD BD monolithic core or a CMT-based Intel monolithic module for comparison.

As for the transistor count, well, that's their choice in making such a cache and uncore configuration. You can keep saying the 4-module chip is blahblahblah, but in some cases it beats a 4C8T Xeon chip. Transistor count is not a big matter from the customer's viewpoint, only from the producer's viewpoint. If you want to argue with GPU performance metrics: a GPU is a data-parallel processor with a bunch of logic units, while a CPU is a latency-sensitive girlfriend of caches. A large amount of cache can make your performance/mm^2 or performance/transistor look worse. So trade-offs on the amount of cache should have been made before they started to design the chip.

Scali - Wednesday, February 15, 2012 - link

Well, one of the reasons why AMD's current CPUs have such poor single-threaded performance is because they moved from 3 ALUs per thread to 2 ALUs per thread.

This is part of the whole CMT design.

So in that sense, CMT can be blamed for the poor single-threaded performance at least.

And since single-threaded performance is so bad, it is only logical that scaling to more threads is relatively good.

On a CPU with faster single-threaded performance, you run into IO limits sooner (memory, disk etc), so it is more difficult to maintain similar scaling with increased thread count.

The strength of SMT is that Intel did not have to cut any ALUs when implementing HT. Pentium 4 Northwood with HT still had two double-pumped ALUs, like the non-HT Willamette that went before it.

Likewise, Core i7 still has 3 ALUs, like Core2.

Another strength of SMT is that even with one less ALU per 2 threads than CMT, it still reaches similar performance in multithreaded scenarios. CMT can not share these ALUs between threads, while SMT can.

Conclusion: CMT is nonsense.

For the full version, see: http://scalibq.wordpress.com/2012/02/14/the-myth-o...

slycer.tech - Monday, February 13, 2012 - link

If the Bulldozer arch is really bad, how about this?

http://www.marketwatch.com/story/amd-opterontm-620...

Can someone prove this award is a big liar?

duploxxx - Tuesday, February 14, 2012 - link

Read the article: the baseline they use for price/performance is based on SPEC results... lots of companies still use these results to decide on a platform.

But then again, benchmarks don't always show the real-world value, or are even hard to compare, since many companies have in-house applications that don't scale, or scale differently from the ones benchmarked in reviews. 90% of the datacenters don't even require more than a midrange CPU; anything above midrange is wasted money, and both vendors provide more than adequate solutions for that. It's the human mind that is often blocking sanity. Investing that wasted money in other solutions often provides a better total performing solution.

anti_shill - Monday, April 2, 2012 - link

Here's a more accurate reflection of Bulldozer/ interlagos performance, untainted by intel ad bucks...

http://www.phoronix.com/scan.php?page=article&...

But if u really want to see what the true story is, have a look at AMD's stock price lately, and their server wins. They absolutely smoke intel on virtualization, and anything that requires a lot of threads. It's not even close.