NVIDIA's Tegra 3 Launched: Architecture Revealed

by Anand Lal Shimpi on November 9, 2011 12:34 AM EST

The Tegra 3 GPU: 2x Pixel Shader Hardware of Tegra 2

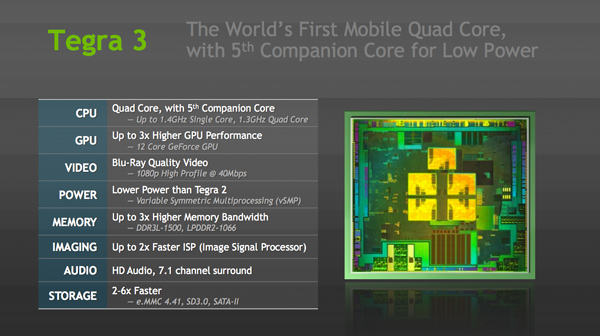

Tegra 3's GPU is very much an evolution of what we saw in Tegra 2. The GeForce in Tegra 2 featured four pixel shader units and four vertex shader units; in Tegra 3 the number of pixel shader units doubles while the vertex processors remain unchanged. This brings Tegra 3's GPU core count up to 12. NVIDIA still hasn't embraced a unified architecture, but given how closely it's mimicking the evolution of its PC GPUs I wouldn't expect such a move until the next-gen architecture - possibly in Wayne.

Mobile SoC GPU Comparison

| | Adreno 225 | PowerVR SGX 540 | PowerVR SGX 543 | PowerVR SGX 543MP2 | Mali-400 MP4 | GeForce ULP | Kal-El GeForce |
|---|---|---|---|---|---|---|---|
| SIMD Name | - | USSE | USSE2 | USSE2 | Core | Core | Core |
| # of SIMDs | 8 | 4 | 4 | 8 | 4 + 1 | 8 | 12 |
| MADs per SIMD | 4 | 2 | 4 | 4 | 4 / 2 | 1 | 1 |
| Total MADs | 32 | 8 | 16 | 32 | 18 | 8 | 12 |
| GFLOPS @ 200MHz | 12.8 GFLOPS | 3.2 GFLOPS | 6.4 GFLOPS | 12.8 GFLOPS | 7.2 GFLOPS | 3.2 GFLOPS | 4.8 GFLOPS |
| GFLOPS @ 300MHz | 19.2 GFLOPS | 4.8 GFLOPS | 9.6 GFLOPS | 19.2 GFLOPS | 10.8 GFLOPS | 4.8 GFLOPS | 7.2 GFLOPS |

Per core performance has improved a bit. NVIDIA worked on timing of critical paths through the GPU's execution units to help it run at higher clock speeds. NVIDIA wouldn't confirm the target clock for Tegra 3's GPU other than to say it was higher than Tegra 2's 300MHz. Peak floating point throughput per core is unchanged (one MAD per clock), but each core should be more efficient thanks to larger caches in the design.

A combination of these improvements and newer drivers is what gives Tegra 3's GPU its 2x - 3x performance advantage over Tegra 2 despite only a 50% increase in overall execution resources. In pixel shader bound scenarios, there's an effective doubling of execution horsepower so the 2x gains are more believable there. I don't expect many games will be vertex processing bound so the lack of significant improvement there shouldn't be a big issue for Tegra 3.
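For readers who want to check the numbers, here is a minimal sketch of the arithmetic behind the GFLOPS rows in the comparison table and the 50% figure above. It assumes the usual convention that one MAD counts as two floating point operations per clock; the function and variable names are mine, purely for illustration.

```python
# Assumption: one MAD (multiply-add) counts as 2 floating point operations
# per clock. This reproduces the GFLOPS rows of the comparison table.
FLOPS_PER_MAD = 2

# "Total MADs" row, copied from the table.
TOTAL_MADS = {
    "Adreno 225": 32,
    "PowerVR SGX 540": 8,
    "PowerVR SGX 543": 16,
    "PowerVR SGX 543MP2": 32,
    "Mali-400 MP4": 18,
    "GeForce ULP": 8,
    "Kal-El GeForce": 12,
}


def peak_gflops(total_mads: int, clock_mhz: float) -> float:
    """Theoretical peak GFLOPS for a GPU with `total_mads` MAD units."""
    return total_mads * FLOPS_PER_MAD * clock_mhz / 1000.0


def relative_increase(old_units: int, new_units: int) -> float:
    """Fractional increase going from old_units to new_units."""
    return (new_units - old_units) / old_units


if __name__ == "__main__":
    for name, mads in TOTAL_MADS.items():
        at_200 = peak_gflops(mads, 200)
        at_300 = peak_gflops(mads, 300)
        print(f"{name}: {at_200:.1f} GFLOPS @ 200MHz, {at_300:.1f} GFLOPS @ 300MHz")

    # Tegra 2: 4 pixel + 4 vertex units. Tegra 3: 8 pixel + 4 vertex units.
    print(f"Total units: +{relative_increase(4 + 4, 8 + 4):.0%}")
    print(f"Pixel shader units: +{relative_increase(4, 8):.0%}")
```

These are theoretical peaks at a fixed clock. They say nothing about the cache and driver improvements that NVIDIA credits for the rest of the gain.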

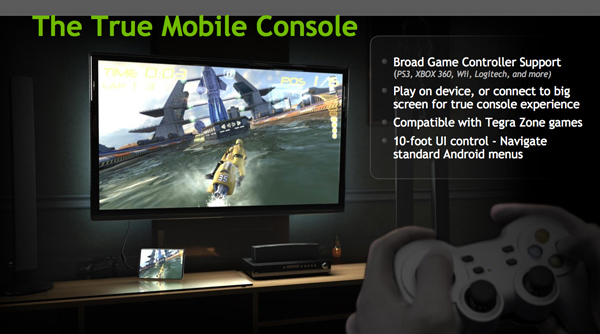

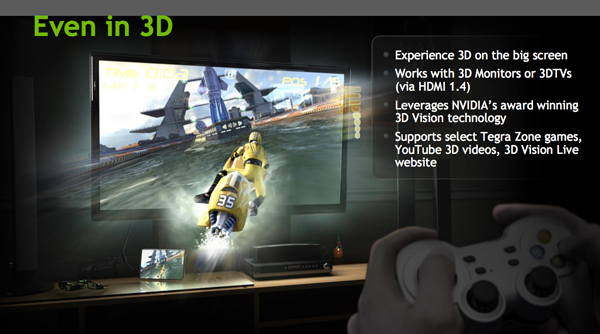

Ready for Gaming: Stereoscopic 3D and Expanded Controller Support

Tegra 3 now supports stereoscopic 3D for displaying content from YouTube, NVIDIA's own 3D Vision Live website and some Tegra Zone games. In its port of Android, NVIDIA has also added expanded controller support for PS3, Xbox 360 and Wii controllers among others.

Tegra 3 Video Encoding/Decoding and ISP

There's unfortunately not too much to go on here, especially not until we have some testable hardware in hand, but NVIDIA is claiming a much improved video decoder and more efficient video encoder in Tegra 3.

Tegra 3's video decoder can accelerate 1080p H.264 high profile content at up to 40Mbps, although device vendors can impose their own bitrate caps and file limitations on the silicon. NVIDIA wouldn't go into greater detail as to what's changed since Tegra 2, other than to say that the video decoder is more efficient. The video encoder is capable of 1080p H.264 baseline profile encode at 30 fps.

The Image Signal Processor (ISP) in Tegra 3 is twice as fast as what was in Tegra 2 and NVIDIA promised more details would be forthcoming (likely alongside the first Tegra 3 smartphone announcements).

Memory Interface: Still Single Channel, DDR3L-1500 Supported

Tegra 3 supports higher frequency memories than Tegra 2 did, but the memory controller itself is mostly unchanged from the previous design. While Tegra 2 supported LPDDR2 at data rates of up to 600MHz, Tegra 3 increases that to LPDDR2-1066, and DDR3L is supported at data rates of up to 1500MHz. The memory interface is still only 32 bits wide, resulting in far less theoretical bandwidth than Apple's A5, Samsung's Exynos 4210, TI's OMAP 4, or Qualcomm's upcoming MSM8960. This is particularly concerning given the increase in core count as well as GPU execution resources. NVIDIA doesn't expect memory bandwidth to be a limitation, but I can't see how that wouldn't be the case in 3D games. Perhaps it's a good thing that Infinity Blade doesn't yet exist for Android.
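To put the 32-bit interface in perspective, here is a rough sketch of the peak bandwidth arithmetic. It assumes the data rates quoted above are effective transfer rates in millions of transfers per second, and that peak bandwidth is simply bus width times data rate. The 64-bit entry is a hypothetical configuration for comparison only, not a figure for any specific competing SoC.

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_mtps: float) -> float:
    """Theoretical peak memory bandwidth in GB/s (10^9 bytes per second).

    Assumes one transfer moves bus_width_bits / 8 bytes and that
    data_rate_mtps is the effective rate in millions of transfers/second.
    """
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * data_rate_mtps / 1000.0


# (label, bus width in bits, data rate) using the figures quoted in the text.
CONFIGS = [
    ("Tegra 2, LPDDR2 @ 600", 32, 600),
    ("Tegra 3, LPDDR2-1066", 32, 1066),
    ("Tegra 3, DDR3L-1500", 32, 1500),
    # Hypothetical: the same LPDDR2-1066 on a 64-bit wide interface.
    ("Hypothetical 64-bit, LPDDR2-1066", 64, 1066),
]

if __name__ == "__main__":
    for label, width, rate in CONFIGS:
        print(f"{label}: {peak_bandwidth_gbs(width, rate):.2f} GB/s")
```

Under these assumptions, doubling the interface width doubles peak bandwidth at the same data rate, which is why a 32-bit interface stands out next to wider competing designs.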

SATA II Controller: On Die

Given Tegra 3 will find itself in convertible Windows 8 tablets, this next feature makes a lot of sense. NVIDIA's latest SoC includes an on-die SATA II controller, a feature that wasn't present on Tegra 2.

94 Comments

MamiyaOtaru - Friday, November 11, 2011 - link

what i liked about sound*storm* and what has me using cmedia now is DDL

B3an - Wednesday, November 9, 2011 - link

Just a little thing, but the Transformer Prime has an IPS+ display, not a typical IPS display which you have listed. Asus claim the + version is 1.5x brighter than a normal IPS display.

I'm impressed by the specs of the Prime, in literally EVERY single way (possibly apart from the GPU) the Prime is better than the iPad 2.... thinner, lighter, better display (apparently), higher res too, twice as much RAM, SD slot, and more than twice as many cores that are each also clocked higher...

If it's this good in the real world then I'll be impressed that Asus could afford to make such a product and keep it at the same price as the iPad.

name99 - Wednesday, November 9, 2011 - link

"in literally EVERY single way ... better than the iPad 2"

You sure about that? You know, for a FACT, that the flash is faster? That the WiFi supports 5GHz and is faster? That there is the same range of sensors (including, eg, magnetometer, accelerometer, gyro, proximity sensor, light sensor, and a dozen I've forgotten) --- and that every one of them is better than on iPad2?

There is a HUGE amount to iPad2 that people seem to forget because it's just hiding there under the covers, it doesn't advertise itself.

ncb1010 - Saturday, November 12, 2011 - link

Yes, the Prime has a magnetometer, a gyro, a compass and a light sensor according to theverge.com (ex-engadget staff). The base iPad model is missing a key sensor (GPS) but this includes it at the same price point. The iPad has no flash in any sense of the word (Adobe Flash or camera flash) while the Transformer has both Adobe Flash and a camera flash so I really don't see how it has faster flash (do you mean shutter speed?). Besides, of all the specs we know on the camera, it looks to be a lot better than the ones Apple put in there to upsell people on the iPad 3. As far as a proximity sensor, what would be the purpose of it? The purpose in the iPad is to detect when the custom cover on the iPad is put on and removed. The pros on the Optimus Prime hardware wise are numerous while the iPad has some theoretical benefits just because we don't know every single detail on the Prime. You are grasping at straws here.

AuDioFreaK39 - Wednesday, November 9, 2011 - link

Quick question for Tegra 3 architecture engineers: Is the "companion core" identified as Core 0 or Core 4? Thanks in advance.

Draiko - Wednesday, November 9, 2011 - link

Good question. I'd love a solid answer myself but from the core demo video, it looks like it's core 0.

Anonymous Blowhard - Wednesday, November 9, 2011 - link

IANAD (I Am Not A Developer) but I'm betting it's actually still tagged as 0, with lower-level firmware switching as to whether or not "core 0" is the companion core or a full core.

Remember that the companion core cannot be run at the same time as the full cores, so it's likely that when the demand-based switching kicks in, "companion core 0" is spun down, "full core 0" is spun up, and the rest of "full core 1/2/3" come online as well.

Since this is happening at the firmware/lower level vis-a-vis x86 "Turbo Core" it will be transparent to the OS.

/but that's, like, just my opinion man

eddman - Wednesday, November 9, 2011 - link

I agree with Anonymous Blowhard. The OS can't see all 5 cores at the same time, so companion core would be 0 when it's enabled.

mythun.chandra - Wednesday, November 9, 2011 - link

Core 0 :)

allingm - Thursday, November 10, 2011 - link

While you guys are probably right, and it is probably just core 0, there is the possibility that it's cores 0 - 4. All 4 threads could simply be run on the one core and this would make it seamless to the OS, which seems to be what Nvidia suggests.