Facebook's "Open Compute" Server tested

by Johan De Gelas on November 3, 2011 12:00 AM EST

Power Supply Efficiency Visualized

I graduated as an electromechanical engineer, but 17 years of IT jobs and research have helped me forget a lot about electricity and electronics. However, I have the advantage of running the Sizing Servers Lab (at the University College of West Flanders, Howest) and thus the privilege of working with some very talented people. Tijl Deneut told me he would be able to visualize the efficiency of the power supply. Using the advanced Racktivity PDU, he produced a time graph that shows how closely the current sine wave tracks the voltage sine wave. If the two are perfectly in phase (and the current is an undistorted sine), the power factor is 1, or 100%.
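A minimal sketch of what such a measurement boils down to, assuming equally spaced voltage and current samples covering a whole number of cycles: the true power factor is real power (the average of v × i) divided by apparent power (Vrms × Irms). The function names and the 325 V peak (roughly 230 V RMS mains) are illustrative assumptions; the Racktivity PDU does not necessarily compute it this way.

```python
import math

def power_factor(v, i):
    """True power factor from equally spaced samples covering whole cycles:
    real power (mean of v*i) divided by apparent power (Vrms * Irms)."""
    n = len(v)
    real_power = sum(vk * ik for vk, ik in zip(v, i)) / n
    v_rms = math.sqrt(sum(vk * vk for vk in v) / n)
    i_rms = math.sqrt(sum(ik * ik for ik in i) / n)
    return real_power / (v_rms * i_rms)

def sine(peak, phase_rad, n=1000):
    """n samples of one full cycle of a sine wave with the given peak and phase."""
    return [peak * math.sin(2 * math.pi * k / n + phase_rad) for k in range(n)]

if __name__ == "__main__":
    voltage = sine(325.0, 0.0)                         # ~230 V RMS (assumed)
    in_phase = sine(2.0, 0.0)                          # current in phase with voltage
    lagging = sine(2.0, -math.radians(30))             # current lagging by 30 degrees
    print(round(power_factor(voltage, in_phase), 3))   # 1.0
    print(round(power_factor(voltage, lagging), 3))    # 0.866
```

For two pure sines the result is simply cos φ of the phase difference; computing it from samples instead of from φ also captures the effect of a distorted current shape.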

In your own home, the power factor matters less. However, large installations such as data centers have to pay extra for a poor power factor: a low power factor causes the electrical system to draw more current for the same amount of work, and more current results in higher heat losses.
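To put numbers on this: for a single-phase load, the RMS current needed to deliver a given real power is I = P / (V × PF), and resistive losses in the wiring scale with I², so relative to a unity-PF load they grow by 1/PF². A minimal sketch; the 300 W server and 230 V feed are hypothetical example values, not measurements from this article.

```python
def line_current(real_power_w, voltage_v, pf):
    """RMS current (A) a single-phase load draws to deliver real_power_w watts."""
    return real_power_w / (voltage_v * pf)

def relative_wiring_loss(pf):
    """I^2*R wiring loss relative to a unity-PF load delivering the same real power.
    Current scales with 1/PF, so the loss scales with 1/PF^2."""
    return 1.0 / pf ** 2

if __name__ == "__main__":
    # Hypothetical 300 W server on a 230 V feed.
    for pf in (1.0, 0.95, 0.8):
        amps = line_current(300.0, 230.0, pf)
        print(f"PF {pf:.2f}: {amps:.2f} A, wiring loss x{relative_wiring_loss(pf):.2f}")
```

At a PF of 0.8, the same server draws 25% more current than at a PF of 1, and the resistive loss in the cabling rises by about 56%.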

Data centers have large power factor correctors, electronic systems with large capacitors that improve the PF but also consume energy. A bad PF can therefore increase (that is, worsen) the Power Usage Effectiveness (PUE) of the data center, and PUE has become an extremely important "benchmark" for data centers. The less these correctors have to work, the better, so the PF of a server PSU should be as close to 1 as possible.
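For reference, PUE is commonly defined (by The Green Grid) as total facility power divided by the power delivered to the IT equipment, so energy burned in power factor correction raises the numerator without adding any useful IT work. With a hypothetical 1,000 kW IT load and 500 kW of overhead, an extra 20 kW of correction losses moves PUE from 1.50 to 1.52:

$$
\mathrm{PUE} = \frac{P_{\text{total facility}}}{P_{\text{IT equipment}}}, \qquad
\frac{1000\ \text{kW} + 500\ \text{kW}}{1000\ \text{kW}} = 1.50
\;\rightarrow\;
\frac{1000\ \text{kW} + 520\ \text{kW}}{1000\ \text{kW}} = 1.52
$$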

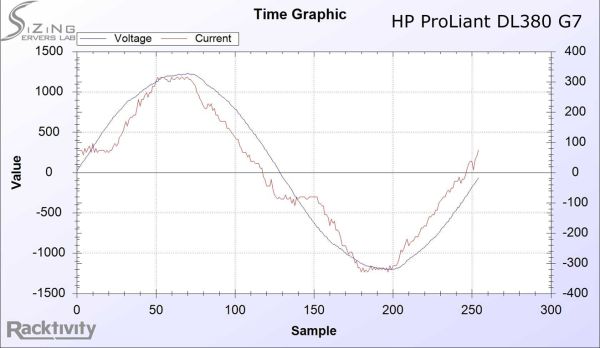

We started by measuring with the server close to idle, which is a pretty bad scenario for the PF. First, let's look at the sine waves of the HP DL380 G7:

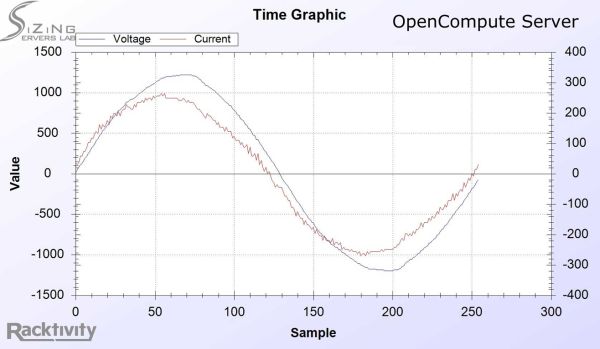

That's not bad at all, but now let's look at the sine waves of the AC that enters the Open Compute server:

The current sine wave is not only closer in phase to the voltage sine wave, but also much closer to the ideal shape of a sine wave, which makes energy delivery more efficient. It is one of the first indications that the Facebook engineers did their homework very well.
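One way to quantify how close a current waveform is to an ideal sine is its total harmonic distortion (THD). With a sinusoidal voltage, the power factor equals the displacement factor (cos φ of the fundamental) times a distortion factor of 1/√(1 + THD²), so a peaky, harmonic-rich current lowers the PF even when it is in phase. A minimal sketch, assuming the samples span exactly one fundamental cycle; the waveform with a 30% third harmonic is hypothetical, not taken from our measurements.

```python
import math

def harmonic_rms(samples, h):
    """RMS value of harmonic h, computed from a single DFT bin.
    Assumes the samples span exactly one cycle of the fundamental."""
    n = len(samples)
    re = sum(s * math.cos(2 * math.pi * h * k / n) for k, s in enumerate(samples))
    im = sum(s * math.sin(2 * math.pi * h * k / n) for k, s in enumerate(samples))
    # A sine of peak A gives a bin magnitude of A*n/2; its RMS value is A/sqrt(2).
    return math.sqrt(2) * math.hypot(re, im) / n

def thd(samples, max_harmonic=25):
    """Total harmonic distortion: RMS of harmonics 2..max_harmonic over the fundamental."""
    fundamental = harmonic_rms(samples, 1)
    rest = math.sqrt(sum(harmonic_rms(samples, h) ** 2
                         for h in range(2, max_harmonic + 1)))
    return rest / fundamental

def distortion_factor(thd_value):
    """PF for a sinusoidal voltage and an in-phase fundamental: 1 / sqrt(1 + THD^2)."""
    return 1.0 / math.sqrt(1.0 + thd_value ** 2)

if __name__ == "__main__":
    n = 1000
    # Hypothetical current: a clean sine plus a 30% third harmonic.
    current = [math.sin(2 * math.pi * k / n) + 0.3 * math.sin(6 * math.pi * k / n)
               for k in range(n)]
    t = thd(current)
    print(round(t, 3))                     # 0.3
    print(round(distortion_factor(t), 3))  # 0.958
```

Even with zero phase shift, this distorted current would cap the power factor at about 0.96.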

67 Comments


iwod - Thursday, November 3, 2011

And I am guessing Facebook has at least 10 times more than what is shown in that image.

DanNeely - Thursday, November 3, 2011

Hundreds or thousands of times more is more likely. FB's grown to the point of building its own data centers instead of leasing space in other people's. Large data centers consume multiple megawatts of power. At ~100W/box, that's 5-10k servers per MW (depending on cooling costs), so that's tens of thousands of servers per data center, with data centers scattered globally to minimize latency and traffic over long-haul trunks.

pandemonium - Friday, November 4, 2011

I'm so glad there are other people out there, other than myself, who see the big picture of where these 'minuscule savings' go. :)

npp - Thursday, November 3, 2011

What you're talking about is how efficient the power factor correction circuits of those PSUs are, not how power efficient the units themselves are... The title is a bit misleading.

NCM - Thursday, November 3, 2011

"Only" 10-20% power savings from the custom power distribution????

When you've got thousands of these things in a building, consuming untold MW, you'd kill your own grandmother for half that savings. And water cooling doesn't save any energy at all; it's simply an expensive and more complicated way of moving heat from one place to another.

For those unfamiliar with it, 480 VAC three-phase is a widely used commercial/industrial voltage in USA power systems, yielding 277 VAC line-to-ground from each of its phases. I'd bet that even those light fixtures in the data center photo are off-the-shelf 277V fluorescents of the kind typically used in manufacturing facilities with 480V power. So this isn't a custom power system in the larger sense (although the server-level PSUs are custom) but rather some very creative leverage of existing practice.
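The 277 V figure follows from a wye (star) connected three-phase supply with a grounded neutral, where the line-to-neutral voltage is the line-to-line voltage divided by √3:

$$
V_{\text{LN}} = \frac{V_{\text{LL}}}{\sqrt{3}} = \frac{480\ \text{V}}{\sqrt{3}} \approx 277\ \text{V}
$$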

Remember also that there's a double saving from reduced power losses: first from the electricity you don't have to buy, and then from the power you don't have to use for cooling those losses.

npp - Thursday, November 3, 2011

I don't remember arguing that 10% power savings are minor :) Maybe you should've posted your thoughts as a regular post, and not a reply.

JohanAnandtech - Thursday, November 3, 2011

Good post but probably meant to be a reply to erwinerwinerwin ;-)

NCM - Thursday, November 3, 2011

Johan writes: "Good post but probably meant to be a reply to erwinerwinerwin ;-)"

Exactly.

tiro_uspsss - Thursday, November 3, 2011

Is it just me, or does placing the Xeons *right* next to each other seem like a bad idea in regards to heat dissipation? :-/

I realise the aim is performance/watt, but, ah, is there any advantage, power-usage-wise, if you were to place the CPUs further apart?

JohanAnandtech - Thursday, November 3, 2011

No. The most important rule is that the warm air from one heatsink should not enter the cold air stream of the other. So placing them next to each other is the best way to do it, and placing them one behind the other (in series) is the worst.

Placing them further apart will not accomplish much IMHO. Most of the heat is drawn away to the back of the server; the heatsinks do not get very hot. You would also lower the airspeed between the heatsinks.