NVIDIA’s GeForce GTX 590: Duking It Out For The Single Card King

by Ryan Smith on March 24, 2011 9:00 AM EST

Compute

Moving on from our look at gaming performance, we have our customary look at compute performance.

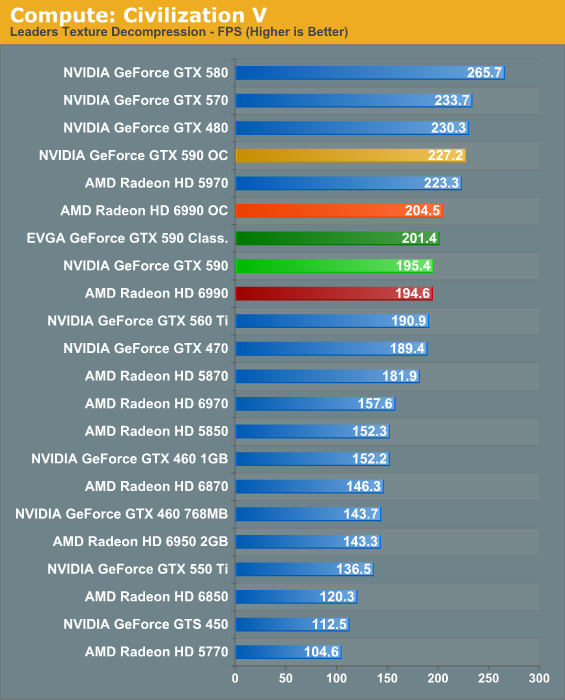

Our first compute benchmark comes from Civilization V, which uses DirectCompute to decompress textures on the fly. Civ5 includes a sub-benchmark that exclusively tests the speed of their texture decompression algorithm by repeatedly decompressing the textures required for one of the game’s leader scenes.

In the game world, Civ5 benefits significantly from SLI and CrossFire. For our texture decompression test, however, AFR is more a liability than a benefit. This doesn’t impact the game in any meaningful manner, but it’s an example of how SLI/CF aren’t always the right tool for the job. Unfortunately for both parties, with as few compute applications as there are today, almost none of them benefit from SLI/CF.

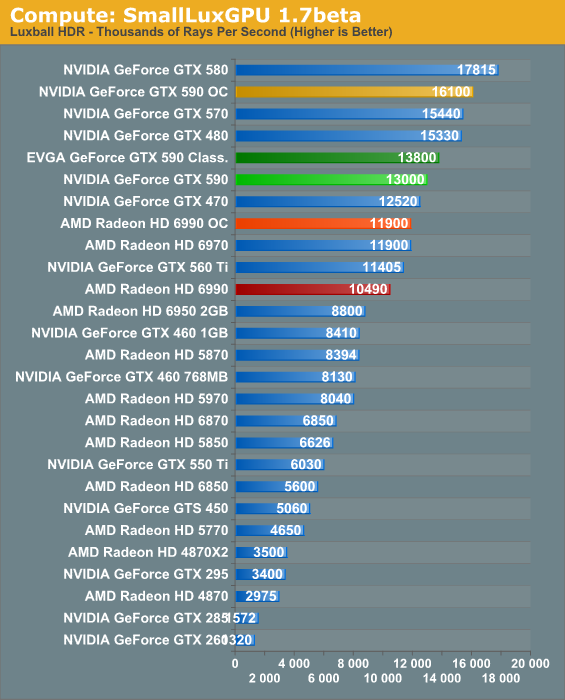

Our second GPU compute benchmark is SmallLuxGPU, the GPU ray tracing branch of the open source LuxRender renderer. While it’s still in beta, SmallLuxGPU recently hit a milestone by implementing a complete ray tracing engine in OpenCL, allowing them to fully offload the process to the GPU. It’s this ray tracing engine we’re testing.

SmallLuxGPU currently supports ray tracing with only one GPU, so all of our results are effectively proxies for how the GTX 590 would perform if it had only one GPU. Not surprisingly, overclocks do wonders here, and NVIDIA’s strong compute architecture gives them an easy win. SLI/CF performance will become more important here when we upgrade to LuxMark for the next iteration of our benchmark suite, as LuxMark can handle multiple OpenCL drivers.

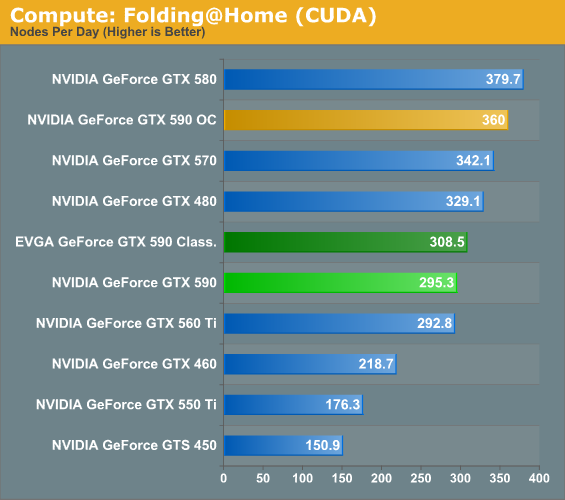

Our final compute benchmark is a Folding@Home benchmark. Given NVIDIA’s focus on compute for Fermi, cards such as the GTX 590 can be particularly interesting for distributed computing enthusiasts, as two GPUs should be able to quickly retire work units.

Folding@Home doesn’t directly benefit from CF/SLI at all. However, by dispatching one work unit (WU) to each GPU, it’s possible to double effective performance. With that taken into account, the GTX 590 is quite an effective cruncher, particularly when we start looking at overclocking.
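As a rough illustration of why one WU per GPU doubles effective performance, here is a minimal Python sketch. It assumes the work units are independent and that each GPU in a dual-GPU card finishes a WU as fast as it would on its own. Real cards share power and thermal limits, so this is an upper bound. The function names and the numbers (8 work units, 600 seconds each) are hypothetical and are not measurements from this review.

```python
import math


def makespan_seconds(num_work_units: int, seconds_per_unit: float, num_gpus: int) -> float:
    """Time to finish all work units when each GPU processes one unit at a time.

    Assumption: every unit takes the same time on every GPU (illustrative only).
    """
    if num_gpus < 1:
        raise ValueError("need at least one GPU")
    # Units are independent, so the GPUs work in parallel "rounds",
    # each round retiring up to num_gpus units.
    rounds = math.ceil(num_work_units / num_gpus)
    return rounds * seconds_per_unit


def units_per_hour(num_work_units: int, seconds_per_unit: float, num_gpus: int) -> float:
    """Effective throughput in work units per hour."""
    total = makespan_seconds(num_work_units, seconds_per_unit, num_gpus)
    return num_work_units * 3600.0 / total


if __name__ == "__main__":
    # Hypothetical workload: 8 work units at 600 seconds each.
    single = units_per_hour(8, 600.0, num_gpus=1)
    dual = units_per_hour(8, 600.0, num_gpus=2)
    print(f"one GPU:  {single:.1f} WU/hour")
    print(f"two GPUs: {dual:.1f} WU/hour")
```

The doubling only holds because no work unit depends on another. That is also why this kind of workload does not need SLI/CF at all: each GPU is simply treated as a separate device.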

123 Comments


Abot13 - Saturday, March 26, 2011 - link

Well there is advertising and advertising. Asking multiple monitor manufacturers to supply sets of screens in order to test them for multi monitor setups, where the best sets will be used for a longer test period to see how they keep up over time, doesn't interfere with your journalistic integrity, but for the manufacturers the cost can be written off as PR/advertising costs. In the end your readers will get the information they want, your integrity will be intact and we also will find out what the best monitors are for multi monitor setups.

Shouldn't be that hard now should it?

PrinceGaz - Friday, March 25, 2011 - link

Once you are dealing with framerates above about 80fps at the highest resolutions and quality settings, multi-monitor is one option, or it is time to start cranking up the anti-aliasing as the results are otherwise irrelevant. With one card doing 100fps and another managing 140fps in a game, the best card for that game is the one which is cheapest even if only slightly, unless you can do something to bring the framerates down in a useful way, and higher AA than 4x is the way to go.

Ryan Smith - Friday, March 25, 2011 - link

As a general principle I agree, however 8x AA really doesn't do anything for IQ. I'd rather turn on SSAA/TrAA/MLAA, however AMD and NVIDIA do not have a common AA mode beyond DX9 SSAA. So for DX10/11 titles, MSAA is still the lowest common denominator.

DarknRahl - Thursday, March 24, 2011 - link

I agree. Anandtech always seems to have a nvidia bias to their video card reviews. It sticks in the craw as I dig all their other reviews for other products but video card wise I go elsewhere.

B3an - Thursday, March 24, 2011 - link

No they don't. It's just that for some reason AMD attracts more morons that like to moan about these non-existent things. And i own AMD cards before you say anything else stupid.

softdrinkviking - Sunday, March 27, 2011 - link

100% agree. i own an AMD card too, and i felt like anandtech was extremely positive about the 5800 series when i bought that card. it also looks like they are leaning towards the 6990 vs the 590 in this very article. to paraphrase... the 6990 is going to be better for current new games and future games, but the 590 seems to do a bit better with older titles and new games played at lower resolutions.

i don't understand how anyone could think that makes them nvidia biased. it boggles the mind.

compvter - Thursday, March 24, 2011 - link

Finnish site called muropaketti did some 3x display tests with these new cards: http://plaza.fi/muropaketti/artikkelit/naytonohjai...

and for your question i would like to answer with another question: Why buy a faster cpu if you won't need faster at the moment? Granted there are no limitations like dx compatibility, but still you can use your gfx card quite a while. I'm still using a 3870x2 card (3 years), mainly because there are so few dx11 games.

VoraciousGorak - Thursday, March 24, 2011 - link

For the "hype" they were throwing out with that video preview, I thought they were going to pull a fast one and launch a GeForce 6-series. But a teaser video for a super-enthusiast GPU using existing tech that'll be so rare I'll probably never physically look at one in my lifetime? Color me disappointed.

fausto412 - Thursday, March 24, 2011 - link

yeah. those 700 dollar cards are only to be bought by a rich few.

Improve the $400 price range...that's as high as i'll go these days.

Red Storm - Thursday, March 24, 2011 - link

... are you guys not testing these cards at multi-monitor resolutions? You said yourself in the review that these are marketed towards the high end. Well, the high end isn't just 30" monitors. Multi monitors boast a higher resolution and I think it's important to know how well these dual GPU monsters perform at the level they are marketed towards.