The Brazos Review: AMD's E-350 Supplants ION for mini-ITX

by Anand Lal Shimpi on January 27, 2011 6:08 PM EST

Discrete GPUs on Brazos: CPU and PCIe Bound

Intel's Atom could use a more capable GPU, but what about Brazos? The E-350's GPU is branded the AMD Radeon HD 6310. It has a total of 80 VLIW-5 SPs running at 500MHz. The GPU shares the same 64-bit DDR3 memory interface as the CPU but has no access to the CPU's caches. Future incarnations of Fusion will blur the line between the CPU and GPU, but for now this is the division.
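To put those specs in perspective, here is a back-of-envelope sketch of peak shader throughput and the memory bandwidth the GPU shares with the CPU cores. It assumes each SP retires one multiply-add (2 FLOPs) per clock and that the 64-bit interface runs DDR3-1066; neither figure is stated on this page, and the function names are illustrative only.

```python
# Back-of-envelope peak figures for the Radeon HD 6310 in the E-350.
# Assumptions (not from the review): one multiply-add (2 FLOPs) per SP per
# clock, and a 64-bit DDR3-1066 interface shared with the CPU cores.


def peak_gflops(stream_processors: int, clock_mhz: float, flops_per_clock: int = 2) -> float:
    """Theoretical peak single-precision throughput in GFLOPS."""
    return stream_processors * flops_per_clock * clock_mhz / 1000.0


def ddr_bandwidth_gbs(bus_width_bits: int, transfer_rate_mts: float) -> float:
    """Theoretical peak DRAM bandwidth in GB/s (decimal gigabytes)."""
    return bus_width_bits / 8 * transfer_rate_mts / 1000.0


if __name__ == "__main__":
    print(f"HD 6310 peak: {peak_gflops(80, 500):.0f} GFLOPS")  # 80 SPs at 500 MHz
    print(f"64-bit DDR3-1066: {ddr_bandwidth_gbs(64, 1066):.1f} GB/s, shared with the CPU")
```

These are ceilings; because the bandwidth is shared, the GPU sees less of it whenever the CPU cores are busy.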

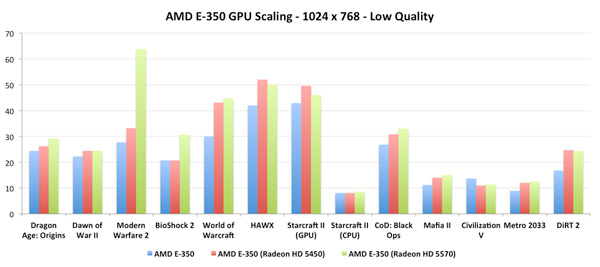

Branching off the E-350 APU are four PCIe lanes. There are another four lanes courtesy of the Hudson FCH. MSI's E350IA-E45 exposes the former by way of a physical PCIe x16 slot, although electrically it's only x4. Curious to see if there would be any benefit to plugging in a faster GPU, I decided to try a Radeon HD 5450 and a Radeon HD 5570 in the slot.
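For a sense of how much narrower that slot is than a full x16 link, here is a rough per-direction bandwidth estimate. It assumes the APU's lanes run at PCIe 2.0 rates (5 GT/s with 8b/10b encoding), which this review doesn't state, and it ignores packet and protocol overhead, so real-world throughput will be lower.

```python
# Per-direction PCIe bandwidth for the 8b/10b-encoded generations (1.x, 2.0).
# Assumption: the E-350's four lanes run at PCIe 2.0 rates. Protocol overhead
# (packet headers, flow control) is ignored, so these are upper bounds.

GT_PER_S = {"1.x": 2.5, "2.0": 5.0}  # raw transfer rate per lane, GT/s


def link_bandwidth_mbs(generation: str, lanes: int) -> float:
    """Peak payload bandwidth in MB/s, one direction, after 8b/10b encoding."""
    payload_gbit_per_lane = GT_PER_S[generation] * 8 / 10  # 8 data bits per 10 line bits
    per_lane_mbs = payload_gbit_per_lane * 1000 / 8        # Gbit/s -> MB/s
    return per_lane_mbs * lanes


if __name__ == "__main__":
    x4 = link_bandwidth_mbs("2.0", 4)
    x16 = link_bandwidth_mbs("2.0", 16)
    print(f"PCIe 2.0 x4:  {x4:.0f} MB/s per direction")
    print(f"PCIe 2.0 x16: {x16:.0f} MB/s per direction ({x16 / x4:.0f}x the x4 link)")
```

Under these assumptions the x4 slot offers a quarter of the bandwidth a desktop x16 slot would.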

With a couple of exceptions (World of Warcraft, HAWX), there's no real benefit to a discrete Radeon HD 5450 over the integrated Radeon HD 6310. This is unsurprising, as the two have very similar compute capabilities and differ mainly in available memory bandwidth, since the Radeon HD 5450 doesn't have to share its memory with a neighboring CPU.

The Radeon HD 5570 results were a bit unexpected. Other than in Modern Warfare 2 and BioShock 2, there's little performance difference between the 5570 and the 5450 when paired with the AMD E-350. It is difficult to say how much of this is due to the E-350's CPU cores vs. the bandwidth limitations of the PCIe x4 slot. This smells like a CPU limitation, in which case it would mean that AMD didn't skimp at all when it came to the E-350's GPU.
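One crude way to reason about results like these is to ask how much frame rate a much faster card actually buys. The sketch below is a hypothetical heuristic, not the method used for this review: the 10% threshold is an arbitrary assumption, and a small gain on its own can't tell a CPU limit apart from a PCIe x4 limit.

```python
# Hypothetical heuristic: if a much faster GPU barely moves the frame rate,
# the bottleneck probably lies elsewhere (CPU cores, PCIe link, or drivers).
# The 10% threshold is an arbitrary assumption, not a measured cutoff.


def gpu_scaling(fps_slow_gpu: float, fps_fast_gpu: float) -> float:
    """Fractional frame-rate gain from swapping in the faster GPU."""
    if fps_slow_gpu <= 0:
        raise ValueError("frame rates must be positive")
    return fps_fast_gpu / fps_slow_gpu - 1.0


def likely_limited_elsewhere(fps_slow_gpu: float, fps_fast_gpu: float,
                             threshold: float = 0.10) -> bool:
    """True if the faster card gains less than `threshold` over the slower one.

    This cannot say *which* non-GPU component is the limit.
    """
    return gpu_scaling(fps_slow_gpu, fps_fast_gpu) < threshold
```

Separating a CPU limit from a bus limit would take another variable, such as the same cards on a faster CPU with the same x4 link.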

The Radeon HD 6310: Very Good for the Money

I want to say that lately we've seen a resurgence in the importance of integrated graphics, but I don't know that it was ever truly important. With both AMD and Intel now taking processor graphics seriously, the quality and performance of what we get "for free" should go up tremendously in the coming years. Over the past two years Intel in particular has stepped up its GPU performance: the HD Graphics, HD Graphics 2000 and HD Graphics 3000 parts we've been given are all relatively competitive. The only problem is that you generally have to spend around $100-$200 on a CPU to get what I'd consider the bare minimum you should get from integrated graphics. Brazos aims to change that.

The E-350 still isn't fast enough to play all modern games, but it's what I would consider an acceptable entry-level GPU. Despite its stature, the E-350 can easily compete with much more expensive Intel solutions when it comes to 3D gaming. Let's get to the numbers.

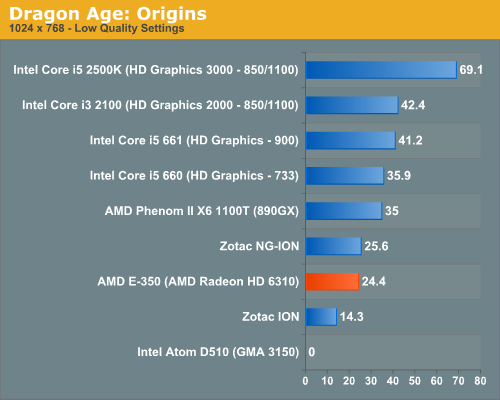

Dragon Age: Origins

DAO has been a staple of our integrated graphics benchmark suite for some time now. The third/first-person RPG is well threaded and is influenced by both CPU and GPU performance.

We ran at 1024 x 768 with graphics and texture quality both set to low. Our benchmark is a FRAPS run-through of our character through a castle.
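For readers unfamiliar with FRAPS-style numbers, an average frame rate is simply frames rendered divided by elapsed time. The sketch below assumes a list of cumulative per-frame timestamps in milliseconds; that input format is an assumption for illustration, not a description of FRAPS' own log files.

```python
from typing import Sequence


def average_fps(timestamps_ms: Sequence[float]) -> float:
    """Mean frame rate over a run: frame intervals / elapsed seconds.

    `timestamps_ms` holds the cumulative time (in ms) at which each frame was
    presented; N timestamps bound N - 1 frame intervals.
    """
    if len(timestamps_ms) < 2:
        raise ValueError("need at least two frame timestamps")
    elapsed_s = (timestamps_ms[-1] - timestamps_ms[0]) / 1000.0
    if elapsed_s <= 0:
        raise ValueError("timestamps must increase")
    return (len(timestamps_ms) - 1) / elapsed_s
```

An average hides stutter, so a run with an acceptable mean can still feel choppy if individual frames take much longer than the rest.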

Our Dragon Age: Origins benchmark is quite CPU bound here and thus the E-350's Radeon HD 6310 doesn't look all that powerful. Luckily for AMD, DAO happens to be more of an outlier among current games as you're about to see.

The bare-bones Atom D510 won't even run DAO. You'll see a number of games where compatibility is a problem for the D510. NVIDIA's ION does a lot better, but it takes the second-generation ION to hang with the E-350. Zotac's NG-ION is actually a bit faster than the E-350, implying some driver/threading efficiencies, as we're most definitely CPU bound on these low-end parts.

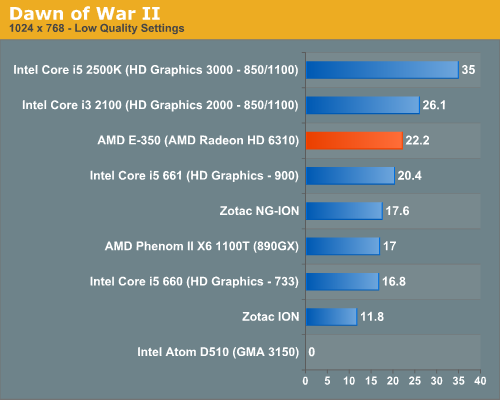

Dawn of War II

Dawn of War II is an RTS title that ships with a built-in performance test. I ran it at the lowest quality settings at 1024 x 768.

Oh, what a difference moving a bottleneck makes. The AMD E-350, with its 75mm² die, is faster than Intel's Core i5 661, the fastest implementation of Intel's HD Graphics. The E-350's performance isn't too far off the Core i3 2100 either. While none of these frame rates are what I'd call smooth, you can't argue with how competitive the E-350 is here.

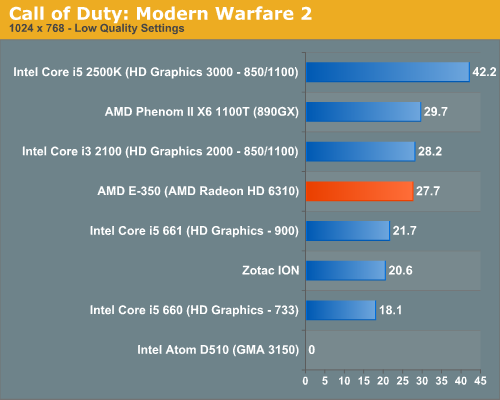

Call of Duty: Modern Warfare 2

Our Modern Warfare 2 benchmark is a quick FRAPS run through a multiplayer map. All settings were turned down/off and we ran at 1024 x 768.

Modern Warfare 2 paints an even better picture for AMD. The E-350 offers virtually the same performance as Intel's Core i3 2100, and noticeably better performance than the Core i5 661. Need I mention that you get this at a much lower price than either of the aforementioned CPUs?

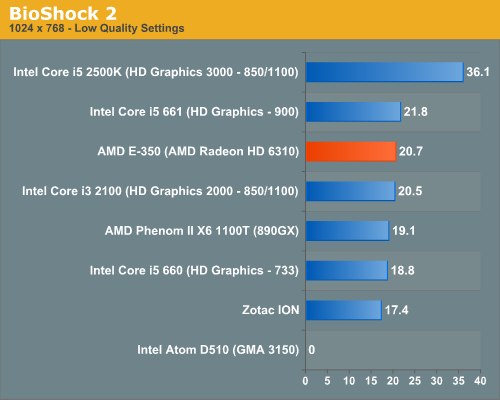

BioShock 2

Our test is a quick FRAPS run-through of the first level of BioShock 2. All image quality settings are set to low, and resolution is 1024 x 768.

Once again the E-350 hangs with the best here. The frame rates are still not high enough to get excited about, but the effort is top notch.

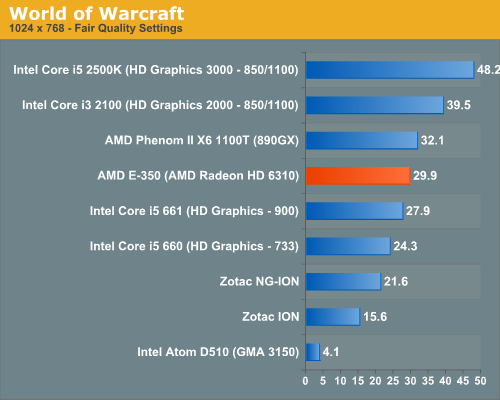

World of Warcraft

Our WoW test is run at fair quality settings (with weather turned down all the way) on a lightly populated server in an area where no other players are present to produce repeatable results. We ran at 1024 x 768.

AMD's E-350 is faster than anything from the Clarkdale era, while just a bit slower than AMD's 890GX. Intel's HD Graphics 2000 is faster, but of course at a much higher power consumption and price tag. Intel's Atom D510 can complete our benchmark here, but at a laughable 4.1 fps. Both the first- and second-generation ION platforms fall behind Brazos.

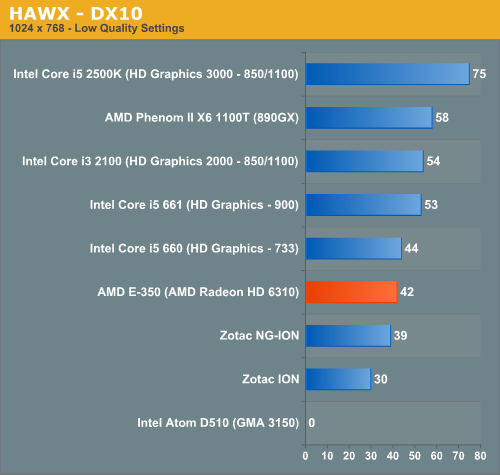

HAWX

Our HAWX performance tests were run with the game's built-in benchmark in DX10 mode. All detail settings were turned down/off and we ran at 1024 x 768.

In HAWX, CPU performance matters a bit more, pushing the E-350 just slightly behind the Core i5 660. The Radeon HD 6310 still delivers respectable performance in HAWX, especially considering its price point. The next-generation ION comes close to the E-350 in performance, while the original ION is significantly slower.

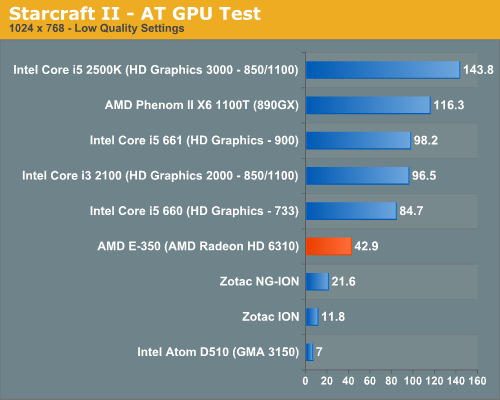

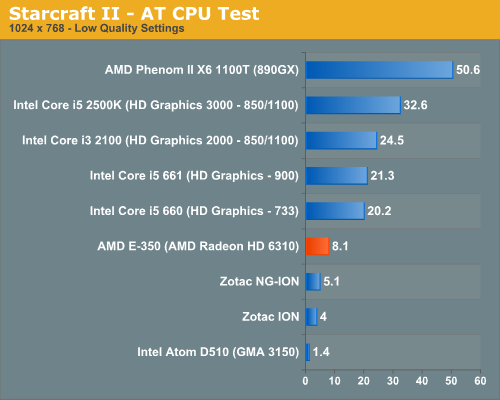

Starcraft II

We have two Starcraft II benchmarks: a GPU test and a CPU test. The GPU test mostly involves navigating around the map, as scrolling and panning tends to be the most GPU-bound part of the game. Our CPU test involves a massive battle of 6 armies in the center of the map, stressing the CPU more than the GPU. At these low quality settings, however, both benchmarks are influenced by the CPU and the GPU.

In our GPU-specific test, the E-350 is significantly faster than anything Atom based. While the D510 can technically run this test, it does so at only 7 fps. Even the next-generation ION only manages 21.6 fps. The E-350 with its Radeon HD 6310 delivers nearly twice the frame rate of the fastest ION. The advantage isn't purely on the GPU side. As I mentioned before, Starcraft II can be very CPU bound at times. The Bobcat cores at work in the E-350 help give it a significant advantage over anything paired with Atom.

The CPU dependency is what separates the E-350 from its larger, more expensive competitors here. The gap only widens as we look at what happens in a big battle:

While you can play Starcraft II on any of these systems, to maintain frame rate throughout all scenarios you really need more CPU and GPU horsepower.

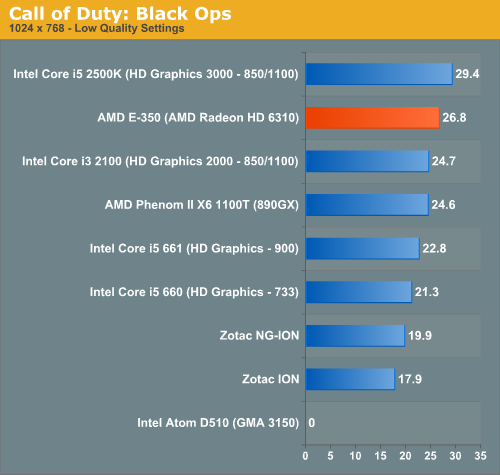

Call of Duty: Black Ops

Our Black Ops test is a quick FRAPS runthrough on a private multiplayer server. The game was set to 1024 x 768 at the lowest quality settings.

In Black Ops the E-350 does the best it has done thus far, nearly equaling the performance of Intel's HD Graphics 3000. In our Sandy Bridge review I wondered if Intel was being limited by driver issues here, as there's very little difference between the 3000 and 2000 GPUs. The E-350 benefits from all of the driver tweaks and experience AMD has accumulated on the Radeon side, so it immediately puts its best foot forward. AMD's greatest ally in Fusion will be its driver experience.

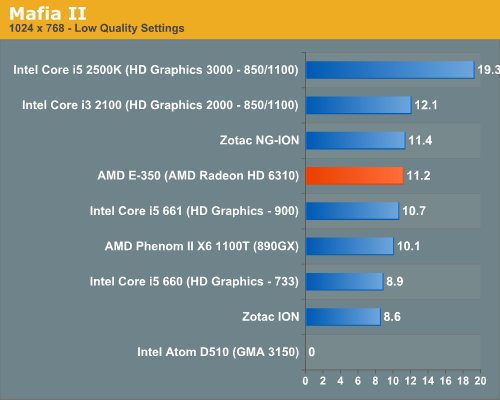

Mafia II

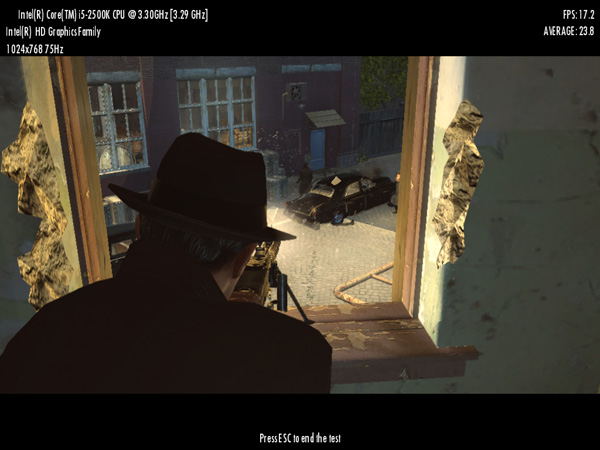

Mafia II ships with a built-in benchmark, which we used for our comparison:

Mafia II doesn't run well on any integrated graphics platform, regardless of vendor. The E-350 does well vs. the competition but none of these platforms are playable, even at the lowest settings.

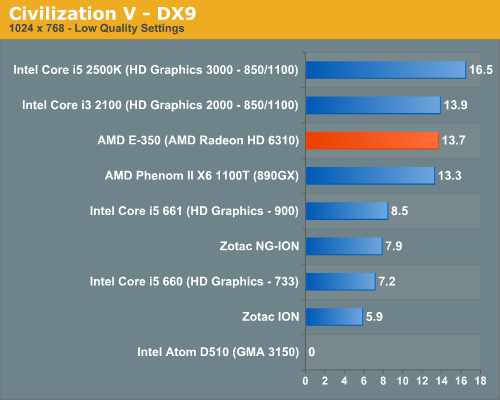

Civilization V

For our Civilization V test we're using the game's built-in lateGameView benchmark. The test was run in DX9 mode with everything turned down at 1024 x 768:

Civilization V doesn't run well on any integrated graphics platform, but the E-350 runs it at least as well (or as poorly?) as Intel's Core i3 2100. The Bobcat cores do a lot of the heavy lifting here, giving the E-350 a substantial lead over Atom.

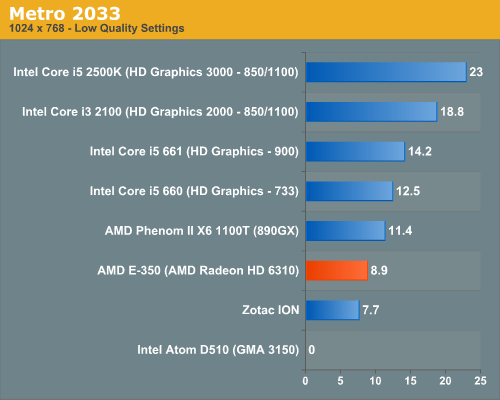

Metro 2033

We're using the Metro 2033 benchmark that ships with the game.

Metro 2033 is a bit too modern for these low-end platforms. The E-350 is faster than ION but still nowhere near playable.

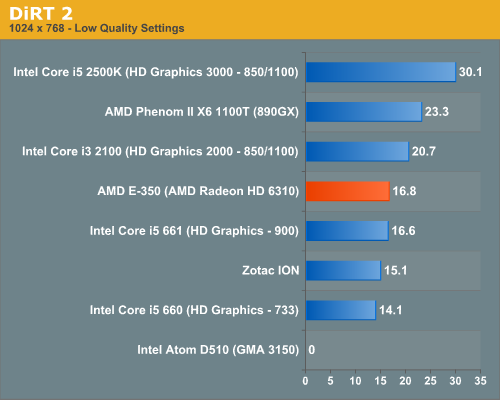

DiRT 2

Our DiRT 2 performance numbers come from the demo's built-in benchmark:

176 Comments


tno - Thursday, January 27, 2011

http://www.anandtech.com/show/4017/vias-dual-core-...

Not a full review but darn close.

e36Jeff - Thursday, January 27, 2011

They did, it's here: http://www.anandtech.com/show/4017/vias-dual-core-...

Problem is that what they tested was basically an engineering sample built on the wrong node. They haven't gotten anything to market yet, so actual numbers from real products are unknown. Having said that, yeah, it does look like it might be better, but until someone makes a product based on it, we'll never know.

nitrousoxide - Thursday, January 27, 2011

The price itself is stopping the Nano X2 from dominating the APU or Atom in this contest. The Nano platform consists of 3 different chips (CPU, NB and SB, the traditional layout), but the latter two manage to do that with only one chip, especially the APU with its very small die. While the Nano X2 is an impressive part compared to the APU and Atom in absolute performance, it's not competitive in other respects such as die size, price and power consumption.

Tralalak - Friday, January 28, 2011

AMD Zacate TDP: 18W + Hudson M1 Fusion Controller Hub ("South Bridge") TDP: 2.7W to 4.7W for typical configurations = AMD Brazos TDP of 20.7W to 22.7W (2-chip solution).

VIA says the VIA Nano X2 has the same TDP as the single-core VIA Nano.

The VIA Nano X2 1.4GHz (40nm TSMC) has the same TDP as the VIA Nano U3200 1.4GHz (65nm Fujitsu): 6.5W.

VIA Nano X2 1.4GHz max. TDP 6.5W + VIA VX900 MSP all-in-one chipset (Media System Processor = North Bridge (IGP) + South Bridge) max. TDP 4.5W = max. TDP 11W (2-chip solution).

I mean that the "mini-notebook" market is very competitive.

VIA's next 40nm all-in-one chipset, a VIA VX MSP refresh with a DirectX 11 IGP, will appear in Q4 2011.

mczak - Monday, January 31, 2011

VX900 has a Chrome9 HC3 graphics core at 250MHz, the same as VX800. Its 3D performance can barely keep up with Atom's anemic IGP (I could not find ANY review of VX900, just VX800), so it's no match at all for Brazos (though, in contrast to Atom, it should support video decode much better). So your TDP comparison basically ignores the 3D side of it (surely the graphics core won't consume that much given its performance).

Alright, even if I were to believe the dual-core Nano has the same TDP as the current single-core one, perf/power has traditionally never been that good with VIA chips (not terrible, just not really good). Maybe their TDP definition is different (btw, I've never seen a published TDP figure for any of the Nano U3xxx series, which you seem to use as a reference). Also keep in mind that notebook runtime is barely affected by TDP; it matters much more whether you can get low idle power figures. I have no idea how the VIA platform would compete there (granted, the published idle power of the Nano U3xxx CPUs is only 100mW), but based on past designs I have to assume not very well (fwiw, this article doesn't help with that either, since the Atoms don't have all of their mobile siblings' power management features enabled).

So, unless VIA delivers, I remain sceptical that they can be competitive. Yes, a 1.4GHz dual-core Nano should be quite competitive with a 1.6GHz Zacate performance-wise, maybe also power-wise, but 3D graphics will be very, very subpar. To catch up in that area the new IGP is needed, which as you mentioned is due in Q4 (if the graphics core is like VN1000's, it should do quite well, though I'll note that VN1000 plus the required southbridge has a 12W TDP).

bjacobson - Saturday, January 29, 2011

I for one will be clicking any links with more info on these Nanos! That sorely beat the Brazos out of nowhere, hah! I doubt it'll be much good at games though, and the drivers will be rough...

silverblue - Monday, January 31, 2011

I've seen the Nano in comparison with Brazos before, so I knew it was capable of being faster; however, it'll take a load more power.

The last time I saw a proper Nano rundown, we were talking about a 65nm chip...

Iketh - Thursday, January 27, 2011

Power consumption is considerably higher on Nano.

AmdInside - Thursday, January 27, 2011

Just curious: was it a fad, or are people still buying Atom systems? I bought an Atom netbook but sold it within 30 days 'cause I couldn't stand it (Dell Mini 10v). Then I bought an ION Zotac Atom 330 system to use as a video streaming device for my bedroom, and while I do like it for what it does, I just can't see myself buying another cheap, low-powered Atom/AMD E-350-like device. I bought mine because the price made it seem like a great deal, but once I got past that, I just lost interest in netbook/low-powered mini-ITX platforms. Tablets, on the other hand, I am still hooked on. Love my iPad and may pick up a second this year and pass mine on to my wife.

nitrousoxide - Thursday, January 27, 2011

What an APU/Atom can do is still far beyond an ARM-based tablet's reach. Just look at how much superiority x86 has in absolute performance. The E-350 has roughly 5 times the performance of Tegra 2, and even an Atom is significantly faster. So a tablet is just an alternative to a nettop, not a replacement. If you are fine with your iPad that's cool, but saying that the nettop is dead is still far too early.

The superiority of x86 is just unmatched by ARM, and that's why Intel claims that ARM is not a big deal for it. Want to be as fast? Then add more instruction sets, design more complicated architectures, and what you get is no longer an ARM.