The Brazos Review: AMD's E-350 Supplants ION for mini-ITX

by Anand Lal Shimpi on January 27, 2011 6:08 PM EST

Discrete GPUs on Brazos: CPU and PCIe Bound

Intel's Atom could use a more capable GPU, but what about Brazos? The E-350's GPU is branded the AMD Radeon HD 6310. It has a total of 80 VLIW-5 SPs running at 500MHz. The GPU shares the same 64-bit DDR3 memory interface as the CPU, but it does not have any access to the CPU's caches. Future incarnations of Fusion will blur the line between the CPU and GPU but for now, this is the division.

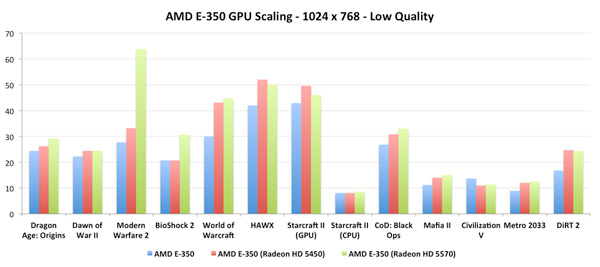

Branching off the E-350 APU are four PCIe lanes. There are another four lanes courtesy of the Hudson FCH. MSI's E350IA-E45 exposes the former by way of a physical PCIe x16 slot, although electrically it's only a x4. Curious to see if there would be any benefit to plugging in a faster GPU, I decided to try a Radeon HD 5450 and 5570 in the slot:

With a couple of exceptions (World of Warcraft, HAWX), there's no real benefit to a discrete Radeon HD 5450 over the integrated Radeon HD 6310. This is unsurprising as the two have very similar compute capabilities and only differ in the amount of available memory bandwidth since the Radeon HD 5450 doesn't have to share with a neighboring CPU.

The Radeon HD 5570 results were a bit unexpected. Other than Modern Warfare 2 and BioShock 2, there's little performance difference between the 5570 and the 5450 when paired with the AMD E-350. How much of this is due to the performance of the E-350 vs. the bandwidth limitations of the PCIe x4 slot is difficult to say. This smells like a CPU limitation, in which case it would mean that AMD didn't skimp at all when it came to the E-350's GPU.
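To put the x4 link in perspective, here is a rough sketch of the theoretical link bandwidth. It assumes the slot signals at PCIe 2.0 rates (5 GT/s per lane with 8b/10b encoding); the link generation is an assumption and is not stated above. The function name and constants are illustrative only.

```python
# Rough theoretical PCIe link bandwidth, per direction.
# ASSUMPTION: PCIe 2.0 signalling; the article does not state the generation.
GT_PER_S_PER_LANE = 5.0       # PCIe 2.0 raw rate per lane, in gigatransfers/s
ENCODING_EFFICIENCY = 8 / 10  # 8b/10b line encoding used by PCIe 1.x and 2.0


def link_bandwidth_gb_s(lanes: int) -> float:
    """Theoretical one-way bandwidth in GB/s, before protocol overhead."""
    usable_gbit_s = GT_PER_S_PER_LANE * ENCODING_EFFICIENCY * lanes
    return usable_gbit_s / 8  # bits -> bytes


x4 = link_bandwidth_gb_s(4)
x16 = link_bandwidth_gb_s(16)
print(f"x4: {x4:.1f} GB/s, x16: {x16:.1f} GB/s, x4/x16: {x4 / x16:.2f}")
```

Under that assumption, the x4 link offers a quarter of the bandwidth a full x16 slot would, which is why the slot can't be ruled out as a contributor. It doesn't settle the question of CPU vs. PCIe limits on its own.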

The Radeon HD 6310: Very Good for the Money

I want to say that lately we've seen a resurgence in the importance of integrated graphics, but I don't know that it ever was truly important. With both AMD and Intel now taking processor graphics seriously, the quality and performance of what we get "for free" should go up tremendously in the coming years. The past two years have shown us that Intel is starting to take GPU performance seriously. The HD Graphics, HD Graphics 2000 and 3000 parts we've been given are all relatively competitive. The only problem is you generally have to spend around $100 - $200 on a CPU to get what I'd consider the bare minimum you should get from integrated graphics. Brazos aims to change that.

The E-350 still isn't enough to play all modern games, but it's what I would consider an acceptable entry level GPU. Despite its stature, the E-350 can easily compete with much more expensive Intel solutions when it comes to 3D gaming. Let's get to the numbers.

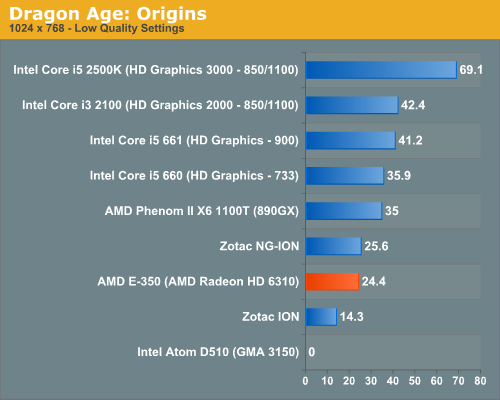

Dragon Age: Origins

DAO has been a staple of our integrated graphics benchmark for some time now. The third/first person RPG is well threaded and is influenced both by CPU and GPU performance.

We ran at 1024 x 768 with graphics and texture quality both set to low. Our benchmark is a FRAPS runthrough of our character through a castle.

Our Dragon Age: Origins benchmark is quite CPU bound here and thus the E-350's Radeon HD 6310 doesn't look all that powerful. Luckily for AMD, DAO happens to be more of an outlier among current games as you're about to see.

The bare bones Atom D510 won't even run DAO. You'll see a number of games where compatibility is a problem for the D510. NVIDIA's ION does a lot better but it'll take a second generation ION to hang with the E-350. Zotac's NG-ION is actually a bit faster than the E-350, implying some driver/threading efficiencies as we're most definitely CPU bound on these low end parts.

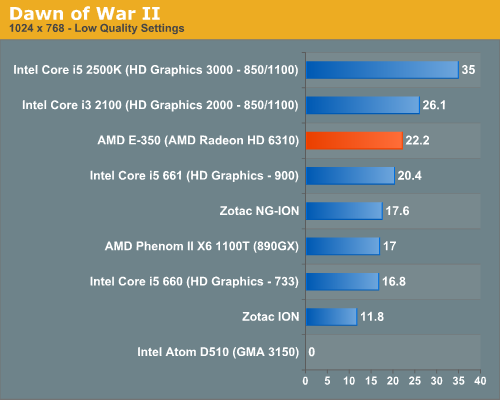

Dawn of War II

Dawn of War II is an RTS title that ships with a built in performance test. I ran at the lowest quality settings at 1024 x 768.

Oh what a difference moving a bottleneck makes. The AMD E-350, with its 75mm² die, is faster than Intel's Core i5 661 - the fastest implementation of Intel's HD Graphics. The E-350's performance isn't too far off the Core i3 2100 either. While none of these frame rates are what I'd call smooth, you can't argue with how competitive the E-350 is here.

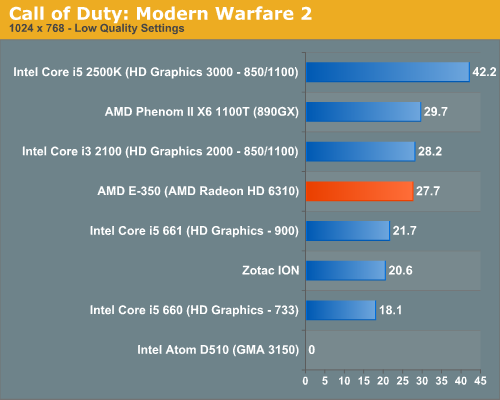

Call of Duty: Modern Warfare 2

Our Modern Warfare 2 benchmark is a quick FRAPS run through a multiplayer map. All settings were turned down/off and we ran at 1024 x 768.

Modern Warfare 2 paints an even better picture for AMD. The E-350 offers virtually the same performance as Intel's Core i3 2100, and noticeably better performance than the Core i5 661. Need I mention that you get this at a much lower price than either of the aforementioned CPUs?

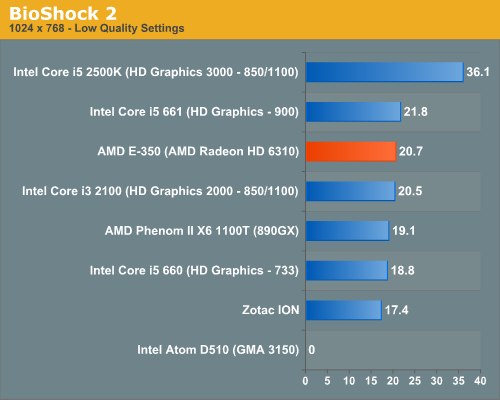

BioShock 2

Our test is a quick FRAPS runthrough in the first level of BioShock 2. All image quality settings are set to low, resolution is at 1024 x 768.

Again the E-350 continues to hang with the best here. The frame rates are still not high enough to get excited, but the effort is top notch.

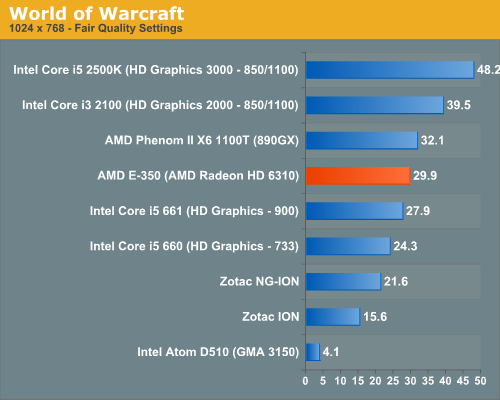

World of Warcraft

Our WoW test is run at fair quality settings (with weather turned down all the way) on a lightly populated server in an area where no other players are present to produce repeatable results. We ran at 1024 x 768.

AMD's E-350 is faster than anything from the Clarkdale era, while just a bit slower than AMD's 890GX. Intel's HD Graphics 2000 is faster, but with much higher power consumption and a much higher price tag of course. Intel's Atom D510 can complete our benchmark here but at a laughable 4.1 fps. Both the first and second generation ION platforms fall behind Brazos.

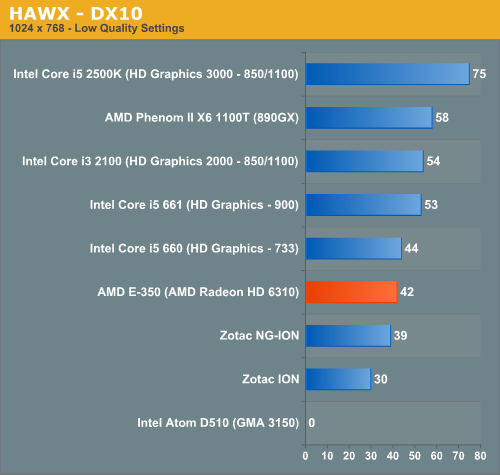

HAWX

Our HAWX performance tests were run with the game's built in benchmark in DX10 mode. All detail settings were turned down/off and we ran at 1024 x 768.

With HAWX, CPU performance matters a bit more, pushing the E-350 just slightly behind the Core i5 660. The Radeon HD 6310 still delivers respectable performance in HAWX, especially considering its price point. The next-generation ION does come close in performance to the E-350, while the original ION is significantly slower.

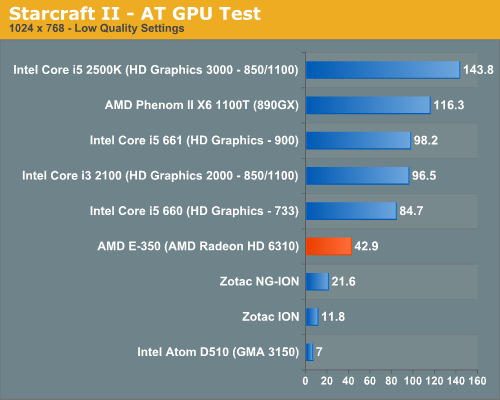

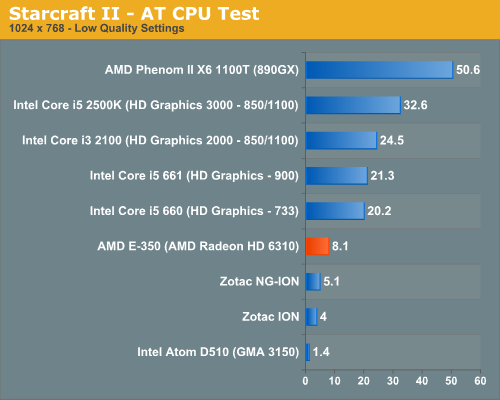

Starcraft II

We have two Starcraft II benchmarks: a GPU and a CPU test. The GPU test is mostly a navigate-around-the-map test, as scrolling and panning around tends to be the most GPU bound in the game. Our CPU test involves a massive battle of 6 armies in the center of the map, stressing the CPU more than the GPU. At these low quality settings however, both benchmarks are influenced by CPU and GPU.

In our GPU specific test, the E-350 is significantly faster than anything Atom based. While the D510 can technically run this test, it does so at only 7 fps. Even the next-generation ION only manages 21.6 fps. The E-350 with its Radeon HD 6310 delivers nearly twice the frame rate of the fastest ION. The advantage isn't purely on the GPU side. As I mentioned before, Starcraft II can be very CPU bound at times. The Bobcat cores at work in the E-350 help give it a significant advantage over anything paired with Atom.

The CPU dependency is what separates the E-350 from its larger, more expensive competitors here. The gap only widens as we look at what happens in a big battle:

While you can play Starcraft II on any of these systems, to maintain frame rate throughout all scenarios you really need more CPU and GPU horsepower.

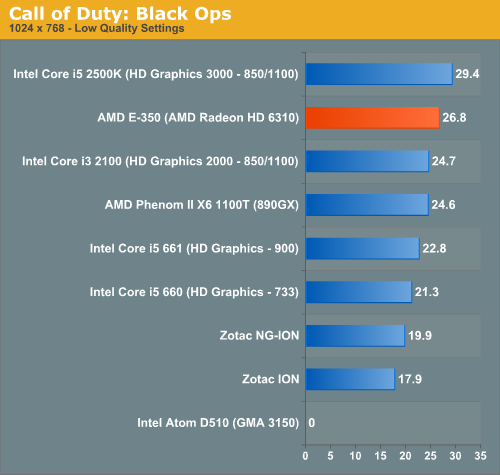

Call of Duty: Black Ops

Our Black Ops test is a quick FRAPS runthrough on a private multiplayer server. The game was set to 1024 x 768 at the lowest quality settings.

In Black Ops the E-350 does the best it has done thus far, nearly equaling the performance of Intel's HD Graphics 3000. In our Sandy Bridge Review I wondered if Intel was being limited by driver issues here as there's very little difference between the 3000 and 2000 GPUs. The E-350 benefits from all of the driver tweaks and experience AMD has from the Radeon side so it immediately puts its best foot forward. AMD's greatest ally in Fusion will be its driver experience.

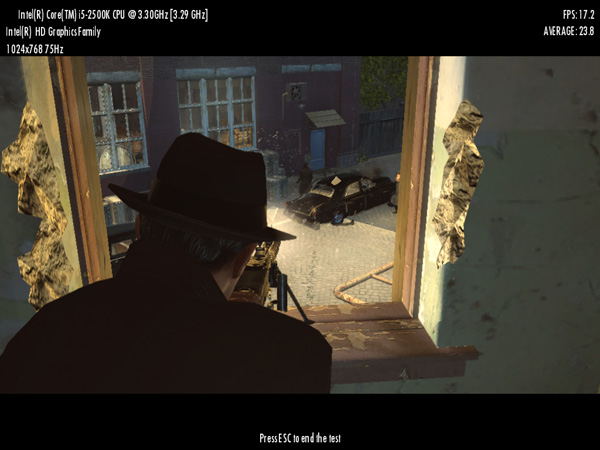

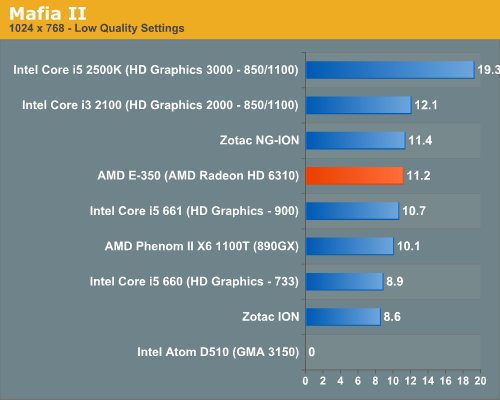

Mafia II

Mafia II ships with a built in benchmark which we used for our comparison:

Mafia II doesn't run well on any integrated graphics platform, regardless of vendor. The E-350 does well vs. the competition but none of these platforms are playable, even at the lowest settings.

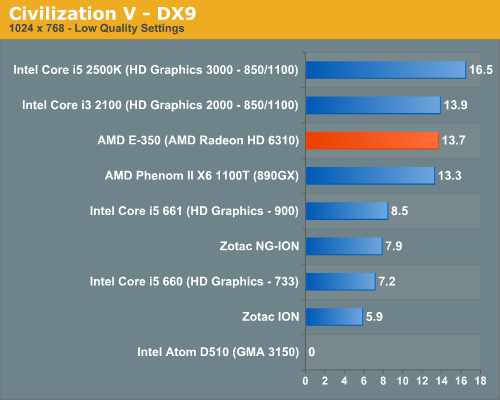

Civilization V

For our Civilization V test we're using the game's built in lateGameView benchmark. The test was run in DX9 mode with everything turned down at 1024 x 768:

Civilization V doesn't run well on any integrated graphics platform, but the E-350 runs it at least as well (or as poorly?) as Intel's Core i3 2100. The Bobcat cores help with a lot of the heavy lifting here giving the E-350 a substantial lead over Atom.

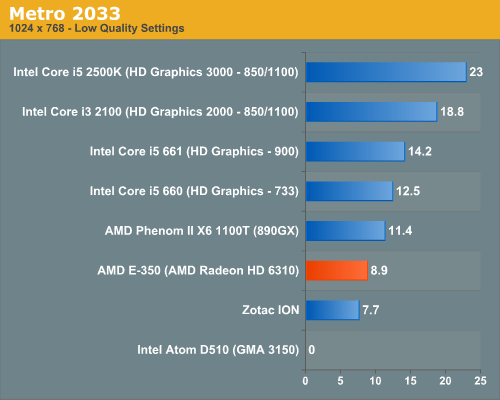

Metro 2033

We're using the Metro 2033 benchmark that ships with the game.

Metro 2033 is a bit too modern for these low end platforms. The E-350 is faster than ION but not in the realm of playability.

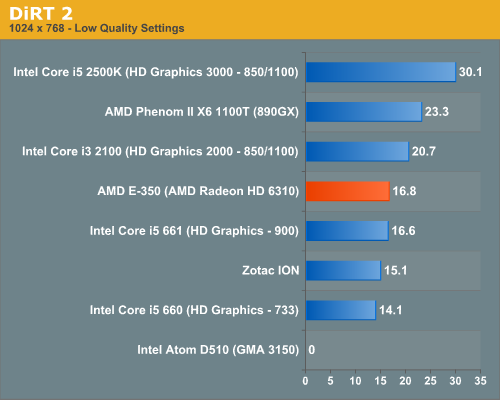

DiRT 2

Our DiRT 2 performance numbers come from the demo's built-in benchmark:

176 Comments

Belard - Tuesday, February 1, 2011 - link

It would fail at Blu-ray 3D. The 1080i is a bit unimportant since flatscreen TVs don't do interlace.

doctorpink - Tuesday, February 1, 2011 - link

if i remember correctly, nano runs 1.8ghz and is much faster but more power hungry...are there netbooks with nano anyway?

grego3d - Tuesday, February 1, 2011 - link

I'm sold on this Sandy Bridge / Atom killer. Well, maybe not Sandy Bridge killer yet, but I'm sure the second generation Fusion processors will be. Now I need help finding a great little mini-ITX case. I'd love to build a mini PC the size of my Wii. I have found a few small cases, but where on earth are the slot feed DVD/BD players? I always start my daily reading right here @ Anandtech.com so please save me some time and help us all out by rounding up a case review for this new Fusion platform. Go AMD Fusion - Boo Intel (and your 1$Billion oops!)

Belard - Tuesday, February 1, 2011 - link

Sure is such excitement over such a tiny chip.

It also reminds me why one of the P4 Dells I use at work is sooooooooooo Slooooooooooooooow.

I'll admit I'm using my very old computer (AMD X2 3800) as an HTPC somewhat, since it wasn't worth selling when I upgraded. But it's a 90 watt CPU... so saving power is the big thing, eh?

lovansoft - Tuesday, February 1, 2011 - link

I've got an Acer Aspire 5517 with an AMD Athlon TK-42 processor and integrated HD3200 video. It's also 1.6 GHz so I wanted to showcase a relative clock for clock comparison. It is a 20W chip with 1 MB of cache built on a 65nm process and no VT. It's not an Athlon II, just an Athlon 64 X2, I believe.

On Cinebench R10:

Single Thread: E-350=1174, TK-42=1340 :-: 1174/1340 = 87.6%

2 Threads: E-350=2251, TK-42=2373 :-: 2251/2373 = 94.9%

(1174+2251=3425)/(1340+2373=3713) = 92.2%

Scaling seems better on the new chips than on the older ones.
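The scaling claim can be checked directly from the Cinebench R10 scores quoted above. This is a minimal sketch; the dictionary layout and function names are illustrative, and only the four scores come from the comment.

```python
# Cinebench R10 scores as quoted in the comment above.
scores = {
    "E-350": {"single": 1174, "multi": 2251},
    "TK-42": {"single": 1340, "multi": 2373},
}


def relative_pct(test: str) -> float:
    """E-350 score as a percentage of the TK-42 score for one test."""
    return 100 * scores["E-350"][test] / scores["TK-42"][test]


def scaling(chip: str) -> float:
    """Speedup going from one thread to two on the same chip."""
    return scores[chip]["multi"] / scores[chip]["single"]


for test in ("single", "multi"):
    print(f"{test}: E-350 at {relative_pct(test):.1f}% of TK-42")
for chip in scores:
    print(f"{chip}: {scaling(chip):.2f}x from 1 to 2 threads")
```

A perfect two-thread speedup would be 2.00x, so the closer a chip's figure is to 2, the better it scales.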

At idle with the screen off the laptop pulls about 18 watts. In Cinebench on a single thread it pulls about 30 watts, with 2 threads it pulls about 33 watts. Opening the screen to run the LCD at full brightness adds about 9 watts at any time.

I ran these tests with a Kill-A-Watt meter. It's not quite an exact comparison, but is pretty close. But to see that they kept performance close, added graphics, and still managed to shave 10% off TDP it's pretty dang impressive.

lovansoft - Tuesday, February 1, 2011 - link

Actually, I just let my laptop sit idle for a while. Now, idle power usage dips down to 11 watts with the lid closed and generally stays switching between 11-13 watts. Hmmm, the power usage on these new chips isn't quite as I would expect unless it's a platform thing. This article shows that the new chips pull 9W at full load under Cinebench. My testing shows I ramp from about 12W up to 33W, which is a 21W increase by taxing the TK-42, right in line with the 20W spec given my rounding of numbers. All other parts being equal, if I only ramp up 9W instead of 21W then my peak should be about 12W less, or about 21W total instead of 33W total. That would be a significant gain. Interesting that this article has the new platform at 32.2W with the same workload. That's about 50% higher than my rough estimations. Is it because it's a desktop board and not a laptop design?

sebanab - Wednesday, February 2, 2011 - link

Thanks for the performance comparison. It really helps putting the Zacate into perspective.

On the power consumption comparison:

Of course the desktop board system will consume more than a laptop with the same specs.

Your laptop consumes less because of a different PSU, fewer USB ports, fewer components in general (PCIe) and so on.

So in order to make a correct comparison, wait for an HP dm1z review for example.

lovansoft - Wednesday, February 2, 2011 - link

I figured as much, but 50% seems high. But then again, it really is only a few watts... Lower efficiency PSU, a couple more chips to provide some extra ports. A couple of watts here and there do add up I suppose. And to think it provides more than 90% of my current performance at only 2/3 the power. I'd think if they can up it to 2GHz it'd be about the same as mine without tapping the power too much. From the speculation I've read, it makes it seem that the revision coming in a year should grow the performance by quite a bit without really increasing power, about what you'd expect from a die shrink.

You know, with this architecture, it'd be nice if a board could be made that would have multiples of these chips for server use. From my experience in SMB, I rarely find servers being CPU bound. Usually if they are, then there is some runaway process that needs to be tamed. Maybe this current generation isn't quite fast enough, but with a process shrink and some speed adjustments, getting a few of these on a board would make a very low energy server. But it'd only be feasible if there were something they could use that built in graphics portion for. Otherwise it'd be a waste.

Oh, and Cinebench R10 on an AthlonXP 3000+ (2GHz) = 1438.

Single Thread: E-350=1174, XP3000=1438 :-: 1174/1438 = 81.6%

1.6/2=80% Seems to be about the same IPC as the AthlonXP line.
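The same comparison can be expressed as score per GHz, which is a crude stand-in for IPC. A minimal sketch using only the scores and clocks quoted above; the function name is illustrative, and a single benchmark is of course not a rigorous IPC measurement.

```python
# Single-thread Cinebench R10 scores and clocks as quoted in the comment above.
def score_per_ghz(score: float, clock_ghz: float) -> float:
    """Crude per-clock throughput: benchmark points per GHz."""
    return score / clock_ghz


e350 = score_per_ghz(1174, 1.6)      # E-350 at 1.6 GHz
athlon_xp = score_per_ghz(1438, 2.0)  # AthlonXP 3000+ at 2.0 GHz

print(f"E-350:    {e350:.1f} points/GHz")
print(f"AthlonXP: {athlon_xp:.1f} points/GHz")
print(f"E-350 per-clock relative to AthlonXP: {e350 / athlon_xp:.3f}")
```

A ratio near 1.0 means the two chips do roughly the same work per clock on this test.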

I don't have power numbers for it, though.

torkemada - Wednesday, February 2, 2011 - link

Is the E350IA-E45 board HDMI 1.4? Info on the net is confusing.

Hrel - Thursday, February 3, 2011 - link

that VIA chip is pretty impressive.