The Sandy Bridge Review: Intel Core i7-2600K, i5-2500K and Core i3-2100 Tested

by Anand Lal Shimpi on January 3, 2011 12:01 AM EST

Gaming Performance

There's simply no better gaming CPU on the market today than Sandy Bridge. The Core i5 2500K and Core i7 2600K top the charts regardless of game. If you're building a new gaming box, you'll want a SNB in it.

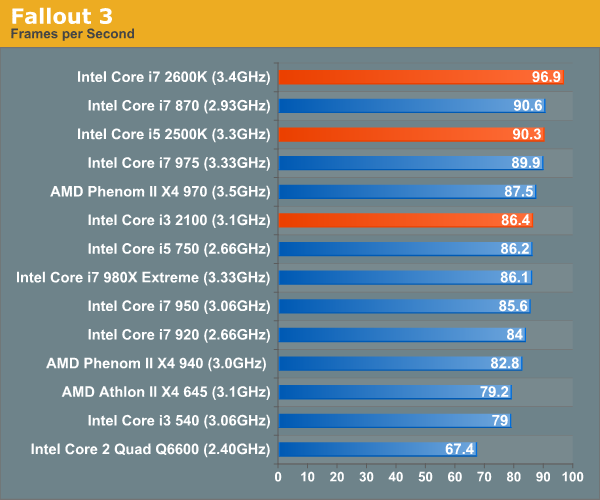

Our Fallout 3 test is a quick FRAPS runthrough near the beginning of the game. We're running with a GeForce GTX 280 at 1680 x 1050 and medium quality defaults. There's no AA/AF enabled.
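For readers who want to reproduce this kind of runthrough, an average frame rate can be derived from per-frame timestamps. The sketch below is a minimal illustration, not our actual tooling: it assumes a hypothetical list of cumulative frame timestamps in milliseconds, and the function names `average_fps` and `relative_performance` are made up for this example.

```python
def average_fps(timestamps_ms):
    """Average frames per second over a run.

    timestamps_ms is an assumed input: cumulative times (in milliseconds)
    at which each frame was recorded, in increasing order.
    """
    if len(timestamps_ms) < 2:
        raise ValueError("need at least two frame timestamps")
    elapsed_s = (timestamps_ms[-1] - timestamps_ms[0]) / 1000.0
    if elapsed_s <= 0:
        raise ValueError("timestamps must be increasing")
    # N timestamps bound N - 1 frame intervals.
    return (len(timestamps_ms) - 1) / elapsed_s


def relative_performance(fps_a, fps_b):
    """Ratio of two average frame rates (values above 1.0 mean A is faster)."""
    if fps_b <= 0:
        raise ValueError("fps_b must be positive")
    return fps_a / fps_b


if __name__ == "__main__":
    # Hypothetical sample data: one frame every 20 ms.
    sample = [0.0, 20.0, 40.0, 60.0, 80.0]
    print(f"{average_fps(sample):.1f} fps")
```

Averaging over the whole run, rather than averaging instantaneous per-frame rates, keeps a handful of very fast frames from skewing the result.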

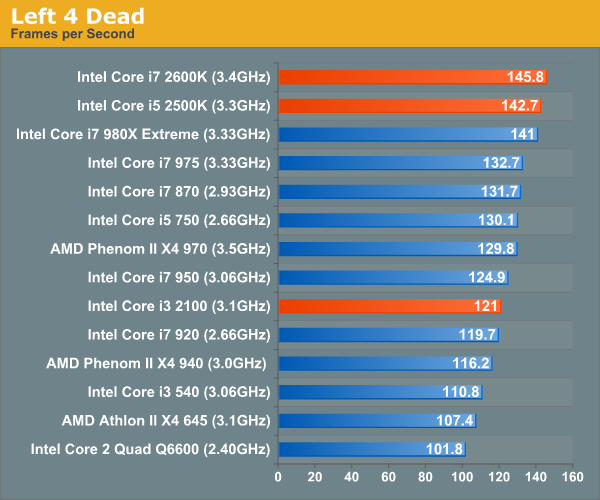

In testing Left 4 Dead we use a custom recorded timedemo. We run on a GeForce GTX 280 at 1680 x 1050 with all quality options set to high. No AA/AF enabled.

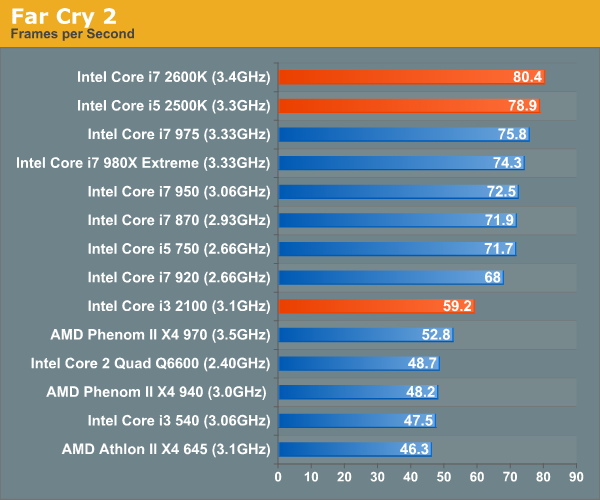

Far Cry 2 ships with several built in benchmarks. For this test we use the Playback (Action) demo at 1680 x 1050 in DX9 mode on a GTX 280. The game is set to medium defaults with performance options set to high.

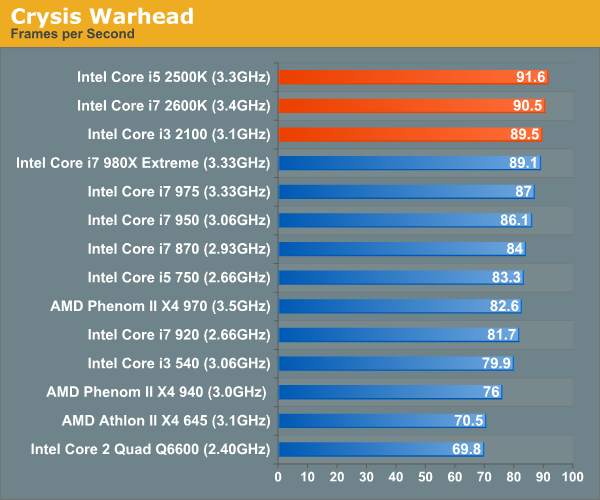

Crysis Warhead also ships with a number of built-in benchmarks. Running on a GTX 280 at 1680 x 1050, we run the ambush timedemo with mainstream quality settings. Physics, however, is set to enthusiast to further stress the CPU.

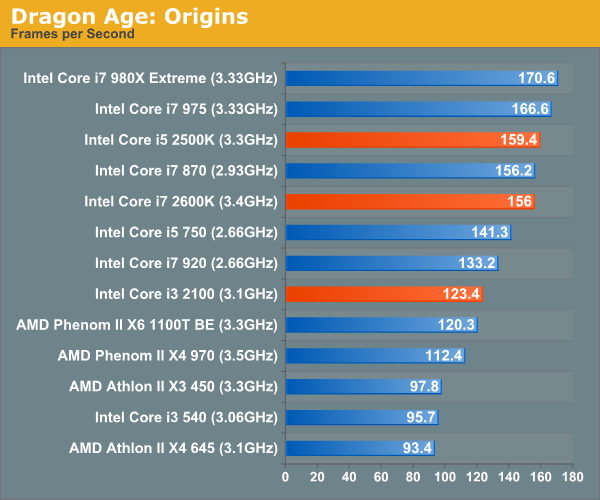

Our Dragon Age: Origins benchmark begins with a shift to the Radeon HD 5870. From this point on these games are run under our Bench refresh testbed under Windows 7 x64. Our benchmark here is the same thing we ran in our integrated graphics tests - a quick FRAPS walkthrough inside a castle. The game is run at 1680 x 1050 at high quality and texture options.

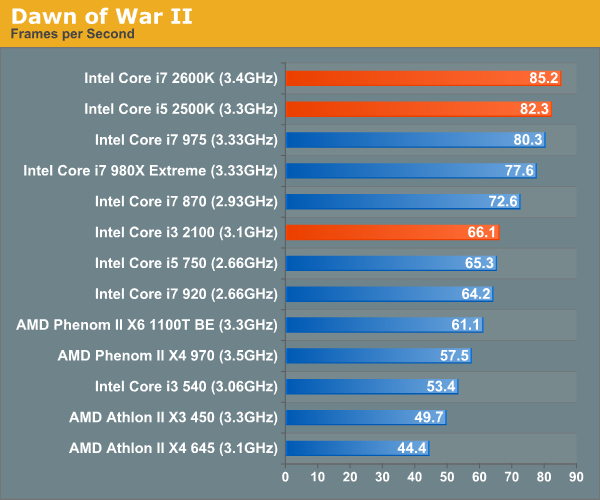

We're running Dawn of War II's internal benchmark at high quality defaults. Our GPU of choice is a Radeon HD 5870 running at 1680 x 1050.

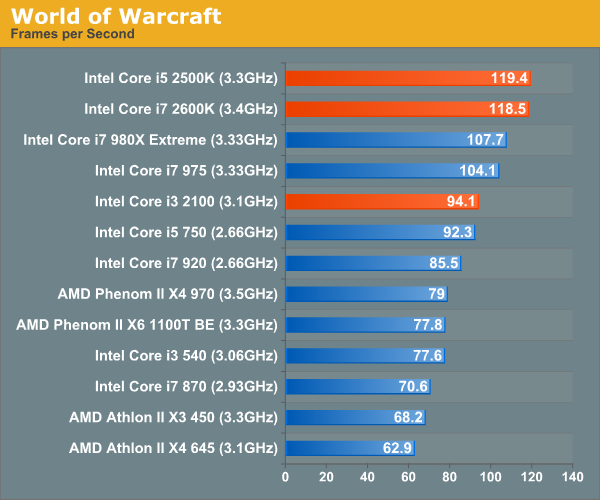

Our World of Warcraft benchmark is a manual FRAPS runthrough of a lightly populated server with no other player controlled characters around. The frame rates here are higher than you'd see in a real world scenario, but the relative comparison between CPUs is accurate.

We run on a Radeon HD 5870 at 1680 x 1050. We're using WoW's high quality defaults but with weather intensity turned down all the way.

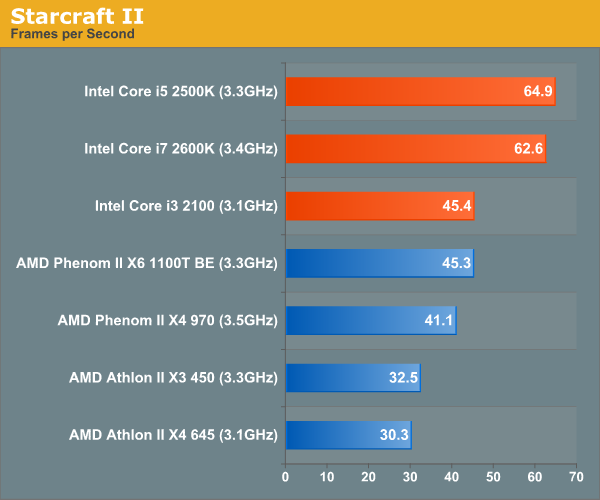

For Starcraft II we're using our heavy CPU test. This is a playback of a 3v3 match where all players gather in the middle of the map for one large, unit-heavy battle. While GPU plays a role here, we're mostly CPU bound. The Radeon HD 5870 is running at 1024 x 768 at medium quality settings to make this an even more pure CPU benchmark.

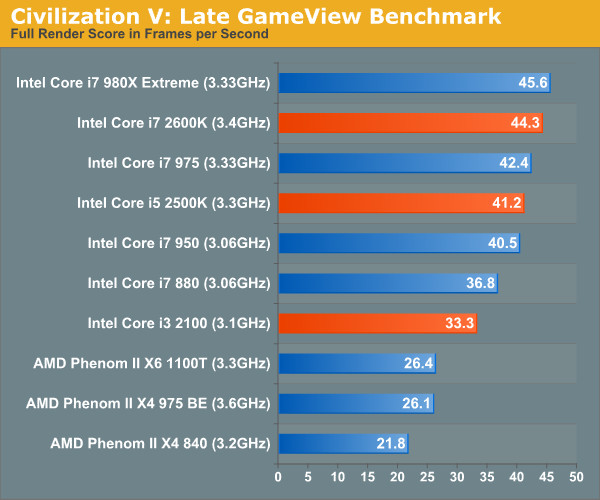

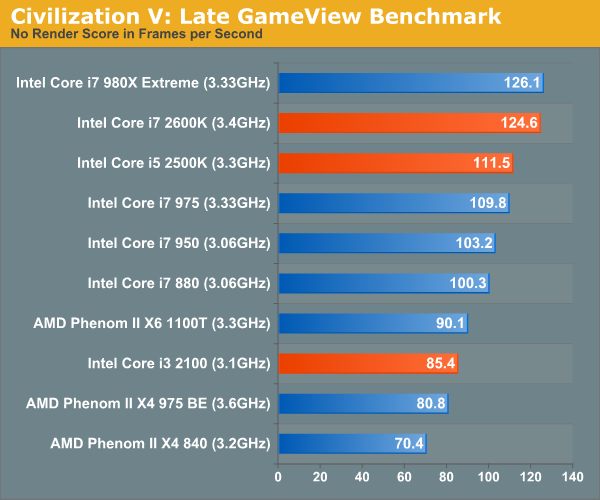

This is Civ V's built in Late GameView benchmark, the newest addition to our gaming test suite. The benchmark outputs three scores: a full render score, a no-shadow render score and a no-render score. We present the first and the last, acting as a GPU and CPU benchmark respectively.

We're running at 1680 x 1050 with all quality settings set to high. For this test we're using a brand new testbed with 8GB of memory and a GeForce GTX 580.

283 Comments


Rick83 - Monday, January 3, 2011 - link

I just checked the manual for MSI's 7676 mainboard (high-end H67) and it lists the CPU core multiplier in the BIOS (page 3-7 of the manual, only limitation mentioned is that of CPU support), with nothing grayed out and overclockability listed as a feature. As this is the 1.1 version, I think someone misunderstood something... unless MSI has messed up its manual after all and just reused the P67 manual. Still, the focus on overclocking would be most ridiculous.

Rick83 - Monday, January 3, 2011 - link

also, there is this: http://www.eteknix.com/previews/foxconn-h67a-s-h67... Where the unlocked multiplier is specifically mentioned as a feature of the H67 board.

So I think anandtech got it wrong here....

RagingDragon - Monday, January 3, 2011 - link

Or perhaps CPU overclocking on H67 is not *officially* supported by Intel, but the motherboard makers are supporting it anyway?

IanWorthington - Monday, January 3, 2011 - link

Seems to sum it up. If you want both you have to wait until Q2. <face palm>

8steve8 - Monday, January 3, 2011 - link

so if im someone who wants the best igp, but doesn't want to pay for overclockability, i still have to buy the K cpu... weird.

beginner99 - Monday, January 3, 2011 - link

yep. This is IMHO extremely stupid. Wanted to build a PC for someone that mainly needs CPU power (video editing). An overclocked 2600k would be ideal with QS but either wait another 3 months or go all compromise... in that case H67 probably but still paying for K part and not being able to use it. Intel does know how to get the most money from you...

Hrel - Monday, January 3, 2011 - link

haha, yeah that is stupid. You'd think on the CPU's you can overclock "K" they use the lower end GPU or not even use one at all. Makes for an awkward HTPC choice.

AkumaX - Monday, January 3, 2011 - link

omg omg omg wat do i do w/ my i7-875k... (p.s. how is this comment spam?)

AssBall - Monday, January 3, 2011 - link

Maybe because you sound like a 12 year old girl with ADHD.

usernamehere - Monday, January 3, 2011 - link

I'm surprised nobody cares there's no native USB 3.0 support coming from Intel until 2012. It's obvious they are abusing their position as the number 1 chip maker, trying to push Light Peak as a replacement to USB. The truth is, Light Peak needs USB for power, it can never live without it (unless you like to carry around a bunch of AC adapters).

Intel wants Light Peak to succeed so badly, they are leaving USB 3.0 (its competitor) by the wayside. Since Intel sits on the USB board, they have a lot of pull in the industry, and as long as Intel won't support the standard, no manufacturer will ever get behind it 100%. Sounds very anti-competitive to me.

Considering AMD is coming out with USB 3.0 support in Llano later this year, I've already decided to jump ship and boycott Intel. Not because I'm upset with their lack of support for USB 3.0, but because their anti-competitive practices are inexcusable; holding back the market and innovation so their own proprietary format can get a headstart. I'm done with Intel.