The Sandy Bridge Review: Intel Core i7-2600K, i5-2500K and Core i3-2100 Tested

by Anand Lal Shimpi on January 3, 2011 12:01 AM EST

Power Consumption

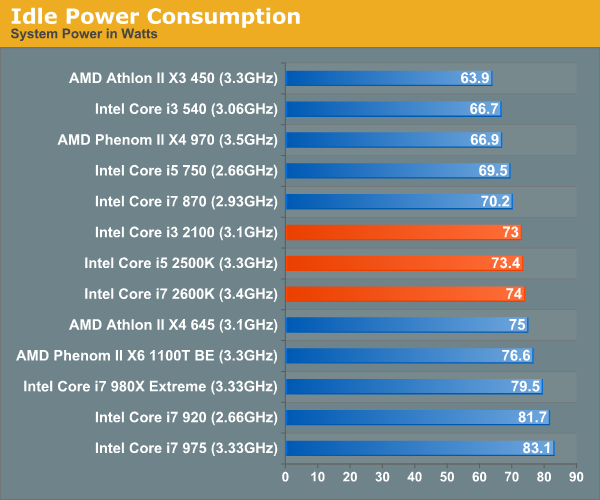

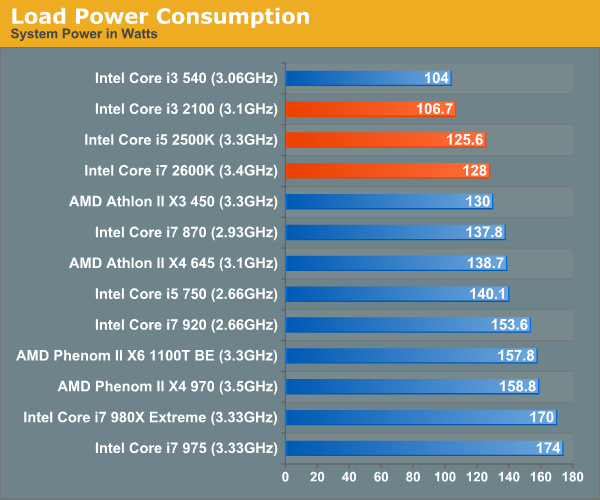

Power consumption is very low thanks to core power gating and Intel's 32nm process. Also, when the integrated GPU is not in use, it is completely power gated so as not to waste any power. The end result is lower power consumption under load than virtually any other platform out there.

I also measured power at the ATX12V connector to give you an idea of what actual CPU power consumption is like (excluding the motherboard, PSU loss, etc...):

| Processor | Idle | Load (Cinebench R11.5) |
| --- | --- | --- |
| Intel Core i7 2600K @ 4.4GHz | 5W | 111W |
| Intel Core i7 2600K (3.4GHz) | 5W | 86W |
| AMD Phenom II X4 975 BE (3.6GHz) | 14W | 96W |
| AMD Phenom II X6 1100T (3.3GHz) | 20W | 109W |
| Intel Core i5 661 (3.33GHz) | 4W | 33W |
| Intel Core i7 880 (3.06GHz) | 3W | 106W |

Idle power is a strength of Intel's as the cores are fully power gated when idle, resulting in these great single-digit power levels. Under load, there's actually not too much difference between a stock i7 2600K and the 3.6GHz Phenom II X4 975 BE (only 10W). There's obviously a big difference in performance, however (7.45 vs. 4.23 for the Phenom II in Cinebench R11.5), which gives Intel better performance per watt. The fact that AMD is able to add two more cores at only a 13W load and 300MHz frequency penalty is pretty impressive as well.
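To make the performance-per-watt comparison concrete, here is a minimal Python sketch that divides the Cinebench R11.5 scores quoted above by the ATX12V load power from the table. It assumes the 4.23 score belongs to the 3.6GHz Phenom II X4 975 BE and the 7.45 score to the stock i7 2600K; the `points_per_watt` helper and the dictionary layout are illustrative names, not anything from the test setup.

```python
# Load power (W, measured at the ATX12V connector) and Cinebench R11.5
# scores as quoted in the text. Pairing 4.23 with the X4 975 BE is an
# assumption based on the "3.6GHz Phenom II" wording.
chips = {
    "Intel Core i7 2600K (3.4GHz)": {"load_w": 86, "score": 7.45},
    "AMD Phenom II X4 975 BE (3.6GHz)": {"load_w": 96, "score": 4.23},
}


def points_per_watt(score, load_w):
    """Return Cinebench points delivered per watt of CPU load power."""
    return score / load_w


# Efficiency for each chip, keyed by the same names as `chips`.
efficiency = {
    name: points_per_watt(data["score"], data["load_w"])
    for name, data in chips.items()
}

for name, value in efficiency.items():
    print(f"{name}: {value:.3f} points/W")

# How many times more work per watt the Intel part does than the AMD part.
ratio = (
    efficiency["Intel Core i7 2600K (3.4GHz)"]
    / efficiency["AMD Phenom II X4 975 BE (3.6GHz)"]
)
print(f"Intel advantage: {ratio:.2f}x")
```

With these figures the i7 2600K delivers roughly twice the Cinebench points per watt of the Phenom II. Note that this counts only power drawn through the ATX12V connector, so it is a proxy for CPU power rather than total platform power.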

283 Comments


auhgnist - Monday, January 17, 2011 - link

For example, between i3-2100 and i7-2600?

timminata - Wednesday, January 19, 2011 - link

I was wondering, does the integrated GPU provide any benefit if you're using it with a dedicated graphics card anyway (GTX470) or would it just be idle?

James5mith - Friday, January 21, 2011 - link

Just thought I would comment with my experience. I am unable to get Blu-ray playback, or even CableCard TV playback, with the Intel integrated graphics on my new i5-2500K w/ Asus motherboard. Why, you ask? The same problem Intel has always had: it doesn't handle the EDIDs correctly when there is a receiver in the path between it and the display.

To be fair, I have an older Westinghouse monitor and an Onkyo TX-SR606. But the fact that all I had to do was reinstall my HD5450 (which I wanted to get rid of when I did the update to Sandy Bridge) and all my problems were gone kind of points to the fact that Intel still hasn't gotten it right when it comes to EDIDs, HDCP handshakes, etc.

So sad too, because otherwise I love the upgraded platform for my HTPC. Just wish I didn't have to add-in the discrete graphics.

palenholik - Wednesday, January 26, 2011 - link

As far as I could understand from the article, you used just this one piece of software for all of these tests, and I understand why. But is that enough to conclude that CUDA causes bad or low picture quality?

I am very interested in, and do research on, H.264 and x264 encoding and decoding performance, especially on the GPU. I have tested Xilisoft Video Converter 6, which supports CUDA, and I didn't have problems with low picture quality when using CUDA. I ran these tests on an nVidia 8600 GT for the TV station that I work for. I was researching a solution to compress video for sending over the internet with little or no quality loss.

So, could it be that Arcsoft Media Converter works badly with CUDA technology?

I must also note here how well the AMD Phenom II X6 performs, comparable to the nVidia GTX 460. This means that one could buy a motherboard with integrated graphics and an AMD Phenom II X6 and have very good encoding performance in terms of speed and quality. Intel is the winner here, no doubt, but jumping from socket to socket and changing the whole platform troubles me.

Nice and very useful article.

ellarpc - Wednesday, January 26, 2011 - link

I'm curious why Bad Company 2 gets left out of Anand's CPU benchmarks. It seems to be a CPU-dependent game. When I play it, all four cores are nearly maxed out while my GPU barely reaches 60% usage. Most other games seem to be the opposite.

Kidster3001 - Friday, January 28, 2011 - link

Nice article. It cleared up much about the new chips I had questions on.

A suggestion. I have worked in the chip making business. Perhaps you could run an article on how bin-splits and features are affected by yields and defects. Many here seem to believe that all features work on all chips (but the company chooses to disable them) when that is not true. Some features, such as virtualization, are excluded from SKUs for a business reason. These are indeed disabled by the manufacturer inside certain chips (they usually use chips where that feature is defective anyway, but can disable other chips if the market is large enough to sell more). In other cases, such as less cache or lower speeds, some SKUs are cut down because those chips have a defect which causes that feature not to work, or not to run as fast, in those chips. Rather than throwing those chips away, companies can sell them at a cheaper price, e.g. Celeron -> 1/2 the cache in the chip doesn't work right so it's disabled.

It works both ways though. Some of the low end chips must come from better chips that have been down-binned, otherwise there wouldn't be enough low-end chips to go around.

katleo123 - Tuesday, February 1, 2011 - link

It is not expected to compete with Core i7 processors or take their place. Sandy Bridge uses fixed function processing to produce better graphics using the same power consumption as the Core i series.

visit http://www.techreign.com/2010/12/intels-sandy-brid...

jmascarenhas - Friday, February 4, 2011 - link

The problem is that we need to choose between using the integrated GPU, which requires an H67 board, and overclocking, which requires a P67. I wonder why we have to make this choice... this just means that if we don't game and the 3000 is fine, we have to go for the H67 and therefore can't OC the processor.....

jmascarenhas - Monday, February 7, 2011 - link

And what about those who want to OC and don't need a dedicated graphics board??? I understand Intel wanting to get money out of early adopters, but don't count on me.

fackamato - Sunday, February 13, 2011 - link

Get the K version anyway? The internal GPU gets disabled when you use an external GPU AFAIK.