The Sandy Bridge Review: Intel Core i7-2600K, i5-2500K and Core i3-2100 Tested

by Anand Lal Shimpi on January 3, 2011 12:01 AM EST

Overclocking Intel's HD Graphics

The base clock of both Intel's HD Graphics 2000 and 3000 on desktop SKUs is 850MHz. Thankfully, Intel's 32nm process leaves plenty of overclocking headroom in both the CPU and GPU. There are no clock locks or K-series parts to worry about when it comes to GPU overclocking; everything is unlocked. I started by trying to see how far I could push the Core i3-2100's HD Graphics 2000.

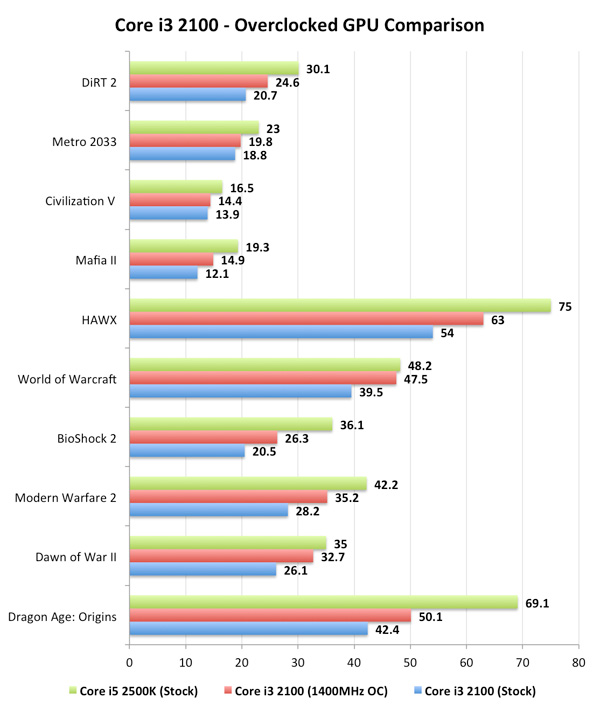

While I could get into Windows and run games at up to 1.6GHz, I needed to back down to 1.4GHz to maintain stability across all of our tests. That's a 64.7% overclock:

In some cases (Civilization V, WoW, Dawn of War II), the overclocked HD Graphics 2000 was enough to bring the 6 EU part close to the performance of the 3000 model. For the most part, however, the overclock just put the Core i3-2100 halfway between its stock performance and the Core i5-2500K.

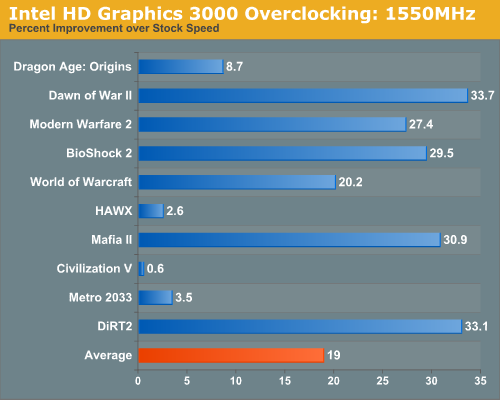

I tried the same experiment with the Core i5-2500K. While there's no chance it could catch up to a Radeon HD 5570, I managed to overclock my 2500K to 1.55GHz (the GPU clock can be adjusted in 50MHz increments):

The 82.4% increase in clock speed resulted in anywhere from a 0.6% to 33.7% increase in performance. While that's not terrible, it's also not that great. It looks like we're fairly memory bandwidth constrained here.
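For anyone who wants to check the math, here is a minimal Python sketch of the overclock percentages quoted above. The 850MHz base clock, the 50MHz step and the 1.4GHz/1.55GHz targets come from the text; the function names and the "scaling efficiency" ratio (performance gain divided by clock gain) are illustrative assumptions of this sketch, not an Intel tool or a metric used in the charts.

```python
BASE_MHZ = 850  # stock HD Graphics 2000/3000 desktop clock (from the article)
STEP_MHZ = 50   # GPU clock adjustment increment (from the article)


def overclock_percent(target_mhz, base_mhz=BASE_MHZ):
    """Percentage increase of target_mhz over base_mhz."""
    return (target_mhz - base_mhz) / base_mhz * 100


def steps_above_base(target_mhz, base_mhz=BASE_MHZ, step_mhz=STEP_MHZ):
    """Number of whole clock increments between the base and target clocks."""
    return (target_mhz - base_mhz) // step_mhz


def scaling_efficiency(perf_gain_percent, clock_gain_percent):
    """Hypothetical helper: share of the clock increase seen as performance."""
    return perf_gain_percent / clock_gain_percent


i3_gain = overclock_percent(1400)  # Core i3-2100, HD Graphics 2000
i5_gain = overclock_percent(1550)  # Core i5-2500K, HD Graphics 3000

print(f"Core i3-2100:  {i3_gain:.1f}% over stock")
print(f"Core i5-2500K: {i5_gain:.1f}% over stock, "
      f"{steps_above_base(1550)} steps of {STEP_MHZ}MHz")

# Best and worst cases quoted for the 2500K: 33.7% and 0.6% faster.
for perf_gain in (33.7, 0.6):
    ratio = scaling_efficiency(perf_gain, i5_gain)
    print(f"{perf_gain}% faster -> {ratio:.2f} of the clock increase realized")
```

Even in the best case, well under half of the added clock speed shows up as performance, which fits the suspicion that memory bandwidth is the limit here.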

283 Comments

Taft12 - Tuesday, January 4, 2011 - link

You first.

ReaM - Tuesday, January 4, 2011 - link

The six core 980x still owns them in all tests where all cores are used.

I don't know, 22k in Cinebench is really not a reason to buy the new i7; I reach 24k on air with an i7 860 and my i5 runs at 20k on air.

Short-term performance is really good, but I don't care whether I wait 7 seconds or 8 for a package to unpack, and for long-running work like rendering there is no reason to upgrade either.

I recommend you get the older 1156 off ebay and save a ton of money.

I have the i5 on hackintosh, I am wondering if 1155 will be hackintoshable

Spivonious - Tuesday, January 4, 2011 - link

I have to disagree with Anand; I feel the QuickSync image is the best of the four in all cases. Yes, there is some edge-softening going on, so you lose some of the finer detail that ATi and SNB gives you, but when viewing on a small screen such as one on an iPhone/iPod, I'd rather have the smoothed-out shapes than pixel-perfect detail.

wutsurstyle - Tuesday, January 4, 2011 - link

I started my computing days with Intel but I'm so put off by the way Intel is marketing their new toys. Get this but you can't have that...buy that, but your purchase must include other things. And even after I throw my wallet to Intel, I still would not have an OC'd Sandy Bridge with useful IGP and Quicksync. But wait, throw more money on a Z68 a little later. Oh...and there's a shiny new LGA2011 in the works. Anyone worried that they started naming sockets after the year it comes out? Yay for spending!

AMD..please save us!

MrCrispy - Tuesday, January 4, 2011 - link

Why the bloody hell don't the K parts support VT-d ?! I can only imagine it will be introduced at a price premium in a later part.

slick121 - Tuesday, January 4, 2011 - link

Wow I just realized this. I really hate this type of market segmentation.

Navier - Tuesday, January 4, 2011 - link

I'm a little confused why Quick Sync needs to have a monitor connected to the MB to work. I'm trying to understand why having a monitor connected is so important for video transcoding, vs. playback etc.

Is this a software limitation? Either in the UEFI (BIOS) or drivers? Or something more systemic in the hardware.

What happens on a P67 motherboard? Does the P67 board disable the on-die GPU? Effectively disabling Quick Sync support? This seems a very unfortunate oversight for such a promising feature. Will a future driver/firmware update resolve this limitation?

Thanks

NUSNA_moebius - Tuesday, January 4, 2011 - link

Intel HD 3000 - ~115 Million transistors

AMD Radeon HD 3450 - 181 Million transistors - 8 SIMDs

AMD Radeon HD 4550 - 242 Million transistors - 16 SIMDs

AMD Radeon HD 5450 - 292 Million transistors - 16 SIMDs

AMD Xenos (Xbox 360 GPU) - 232 Million transistors + 105 Million (eDRAM daughter die) = 337 Million transistors - 48 SIMDs

Xenos I think in the end is still a good two, two and a half times more powerful than the Radeon 5450. Xenos does not have to be OpenCL, Direct Compute, DX11 nor fully DX10 compliant (a 50 million jump from the 4550 going from DX10.1 to 11), nor contains hardware video decode, integrated HDMI output with 5.1 audio controller (even the old Radeon 3200 clocks in at 150 million + transistors). What I would like some clarification on is if the transistor count for the Xenos includes Northbridge functions..............

Clearly PC GPUs have insane transistor counts in order to be highly compatible. It is commendable how well the Intel HD 3000 does with only 115 Million, but it's important to note that older products like the X1900 had 384 Million transistors, back when DX9.0c was the aim and in pure throughput, it should match or closely trail Xenos at 500 MHz. Going from the 3450 to 4550 GPUs, we go up another 60 million for 8 more SIMDs of a similar DX10.1 compatible nature, as well as the probable increases for hardware video decode, etc. So basically, to come into similar order as the Xenos in terms of SIMD counts (of which Xenos is 48 of its own type I must emphasize), we would need 60 million transistors per 8 SIMDs, which would put us at about 360 million transistors for a 48 SIMD (240 SP) AMD part that is DX 10.1 compatible and not equipped with anything unrelated to graphics processing.

Yes, it's a most basic comparison (and probably fundamentally wrong in some regards), but I think it sheds some light on the idea that the Radeon HD 5450 really still pales in comparison to the Xenos. We have much better GPUs like Redwood that are twice as powerful with their higher clock speeds + 400 SPs (627 Million transistors total) and consume less energy than Xenos ever did. Of course, this isn't taking memory bandwidth or framebuffer size into account, nor the added benefits of console optimization.

frankanderson - Tuesday, January 4, 2011 - link

I'm still rocking my Q6600 + Gigabyte X38 DS5 board, upgraded to a GTX580 and been waiting for Sandy, definitely looking forward to this once the dust settles..

Thanks Anand...

Spivonious - Wednesday, January 5, 2011 - link

I'm still on E6600 + P965 board. Honestly, I would upgrade my video card (HD3850) before doing a complete system upgrade, even with Sandy Bridge being so much faster than my old Conroe. I have yet to run a game that wasn't playable at full detail. Maybe my standards are just lower than others.